Decoding Viral Evolution: How AI-Powered Pattern Recognition is Revolutionizing Pathogen Genomics and Drug Discovery

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on the application of artificial intelligence in viral sequence pattern recognition. It explores foundational concepts, from sequence motifs to evolutionary dynamics, and details cutting-edge methodological approaches, including deep learning architectures, as well as real-world applications in surveillance and therapeutic design. The guide addresses critical challenges in model robustness, data scarcity, and computational efficiency, and offers a framework for rigorous model validation and comparison with traditional bioinformatics tools. By synthesizing current research and practical insights, this article serves as a roadmap for integrating AI into virology to accelerate pandemic preparedness and antiviral development.

From Sequences to Signals: Foundational AI Concepts for Viral Genomics

Defining Pattern Recognition in the Context of Viral Nucleotide and Amino Acid Sequences

Pattern recognition in virology is the systematic identification of statistically significant motifs, conserved domains, mutation signatures, and structural patterns within viral genetic and protein sequences. Framed within a broader thesis on AI-driven viral research, this process is foundational for tracking evolution, predicting host tropism, identifying drug targets, and facilitating rapid response to emerging threats. This guide details the technical methodologies and computational frameworks enabling this critical analysis.

Core Pattern Types and Quantitative Analysis

Patterns in viral sequences manifest at multiple, interconnected levels. The table below summarizes the primary categories and their research applications.

Table 1: Categories of Patterns in Viral Sequences

| Pattern Category | Definition | Key Analysis Methods | Primary Research Application |
|---|---|---|---|
| Conserved Motifs | Short, invariant sequences critical for function (e.g., catalytic sites, polymerase motifs). | Multiple Sequence Alignment (MSA), Hidden Markov Models (HMMs), MEME Suite. | Vaccine design (target invariant epitopes), broad-spectrum antiviral drug target identification. |
| Mutation Signatures | Non-random patterns of substitutions (e.g., CpG depletion, APOBEC-mediated hypermutation). | Entropy analysis, machine learning classifiers (e.g., Random Forest), phylodynamic models. | Tracking transmission clusters, understanding host adaptation, inferring selective pressures. |
| Recombination Signals | Breakpoints indicating genetic material exchange between viral strains or species. | Bootscan/Simplot, phylogenetic incongruence tests, recombination detection programs (RDP5). | Identifying novel variants, assessing pandemic potential, understanding genome plasticity. |
| Structural Patterns | RNA secondary structures (e.g., IRES, frameshift elements) or protein domains. | Free energy minimization (mfold, ViennaRNA), homology modeling, AlphaFold2. | Disrupting replication mechanisms, designing antisense oligonucleotides (ASOs). |
| Host Interaction Motifs | Short linear motifs (SLiMs) or domains that bind host proteins (e.g., SH3, PDZ binders). | Regular expression scanning, motif enrichment analysis, yeast two-hybrid screens. | Understanding pathogenesis, identifying host-directed therapeutic targets. |

Table 2: Quantitative Metrics for Pattern Analysis (Example: SARS-CoV-2 Spike Protein RBD)

| Metric | Value/Result | Interpretation |
|---|---|---|
| Shannon Entropy (RBD Pos. 501) | ~1.2 (High) | Position 501 is highly variable; N501Y arose independently in several variants of concern (e.g., Alpha, Beta, Gamma), consistent with positive selection. |
| Conservation Score (% Identity) | >85% across sarbecoviruses | High conservation suggests functional constraint; potential target for pan-sarbecovirus vaccines. |
| N-linked Glycosylation Sites | 22 sequons per full spike protomer (2 within the RBD: N331, N343) | Extensive glycosylation shields much of the spike surface from antibody recognition. |
| Substitution Rate (genome-wide) | ~1x10⁻³ substitutions/site/year | Calibrates a molecular clock for dating divergence events. |
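Per-position Shannon entropy, as in the first row of Table 2, can be computed directly from an MSA. The sketch below is a minimal version that assumes the alignment is a list of equal-length strings and that gap characters ('-') are excluded from the counts, which is one of several conventions. Entropy is reported in bits, so the ceiling for a 20-letter amino-acid alphabet is log2(20) ≈ 4.32. Published values such as "~1.2" depend on the log base, gap handling, and the sequence set, so they are not directly comparable across tools.

```python
import math
from collections import Counter


def column_entropy(column: str, gap: str = "-") -> float:
    """Shannon entropy (bits) of one alignment column; gap characters are ignored."""
    residues = [c for c in column.upper() if c != gap]
    if not residues:
        return 0.0
    total = len(residues)
    # H = sum_i p_i * log2(1 / p_i), written this way to avoid returning -0.0
    return sum((n / total) * math.log2(total / n) for n in Counter(residues).values())


def msa_entropy(alignment: list[str]) -> list[float]:
    """Per-column entropy for an MSA given as equal-length aligned strings."""
    length = len(alignment[0])
    if any(len(seq) != length for seq in alignment):
        raise ValueError("all aligned sequences must have the same length")
    return [column_entropy("".join(seq[i] for seq in alignment)) for i in range(length)]


toy_msa = ["MKNY", "MKNY", "MKYY", "MK-Y"]  # made-up alignment
print([round(h, 3) for h in msa_entropy(toy_msa)])  # → [0.0, 0.0, 0.918, 0.0]
```

Only the third column varies (N, N, Y once the gap is dropped), so it is the only position with non-zero entropy.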

Experimental and Computational Methodologies

Protocol: High-Throughput Sequencing and Variant Calling Pipeline

This protocol outlines the steps from sample to pattern identification for viral genomic surveillance.

Sample Preparation & Sequencing:

- Extract viral RNA/DNA from clinical or cultured samples.

- Perform reverse transcription (for RNA viruses) and amplify whole genomes using tiling multiplex PCR or metagenomic approaches.

- Prepare libraries (e.g., Illumina Nextera, Oxford Nanopore ligation kits) and sequence on an appropriate platform (Illumina for accuracy, Nanopore for real-time).

Bioinformatic Pre-processing:

- Quality Control: Use FastQC and NanoPlot. Trim adapters and low-quality bases with Trimmomatic or Porechop.

- Alignment: Map reads to a reference genome using BWA-MEM (Illumina) or minimap2 (Nanopore). Generate consensus sequences with samtools and bcftools.

Pattern Recognition Analysis:

- Variant Calling: Identify SNPs and indels using iVar, bcftools, or medaka. Filter based on depth (>100x) and frequency (>5% for minority variants).

- Multiple Sequence Alignment: Align consensus sequences with MAFFT or Clustal Omega.

- Pattern Identification: Feed the MSA into tools such as HMMER (for building family profiles), Geneious (for visual motif discovery), or custom Python/R scripts for entropy calculation.
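The depth and frequency filters in the variant-calling step can be expressed as a small post-processing function. This is only a sketch. The `VariantCall` record and its field names are hypothetical, loosely modelled on the per-site depth and allele-frequency information that callers such as iVar report, so they would need to be mapped onto a given caller's real output columns. The thresholds mirror the text (depth >100x, frequency >5%).

```python
from dataclasses import dataclass


@dataclass
class VariantCall:
    """Hypothetical per-site record; field names are illustrative only."""
    pos: int
    ref: str
    alt: str
    total_depth: int
    alt_depth: int

    @property
    def alt_freq(self) -> float:
        """Fraction of reads supporting the alternative allele."""
        return self.alt_depth / self.total_depth if self.total_depth else 0.0


def filter_calls(calls, min_depth: int = 100, min_freq: float = 0.05):
    """Keep calls with depth > min_depth and allele frequency > min_freq."""
    return [c for c in calls if c.total_depth > min_depth and c.alt_freq > min_freq]


calls = [
    VariantCall(100, "A", "T", total_depth=850, alt_depth=840),  # consensus-level change
    VariantCall(200, "C", "A", total_depth=900, alt_depth=72),   # minority variant, 8%
    VariantCall(300, "T", "C", total_depth=60, alt_depth=30),    # fails the depth filter
    VariantCall(400, "G", "A", total_depth=1200, alt_depth=24),  # 2%, below the cutoff
]
for c in filter_calls(calls):
    print(c.pos, c.ref, c.alt, round(c.alt_freq, 3))
```

The positions and counts above are made up for illustration.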

Protocol: Identifying Host Interaction Motifs via Affinity Purification-Mass Spectrometry (AP-MS)

This experimental protocol identifies the host binding partners of a viral protein, which can reveal its functional motifs.

Cloning & Expression:

- Clone the viral gene of interest (e.g., SARS-CoV-2 ORF6) into an expression vector with an affinity tag (e.g., FLAG, HA).

- Transfect the construct into human cell lines (e.g., HEK293T, A549).

Affinity Purification:

- Lyse cells 48h post-transfection in a mild non-denaturing buffer.

- Incubate lysate with anti-FLAG M2 magnetic agarose beads for 2-4 hours at 4°C.

- Wash beads stringently (e.g., with 0.5M KCl) to remove non-specific interactors.

- Elute bound protein complexes using FLAG peptide or low-pH buffer.

Mass Spectrometry & Analysis:

- Digest eluted proteins with trypsin. Analyze peptides by liquid chromatography-tandem mass spectrometry (LC-MS/MS).

- Identify host proteins using database search engines (e.g., MaxQuant, Proteome Discoverer).

- Perform Gene Ontology (GO) enrichment analysis. Scan the viral protein sequence for known SLiMs using the ELM database to map interaction domains.
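The SLiM scan in the final step is, at its core, regular-expression matching against the viral protein sequence. The sketch below uses two simplified, illustrative patterns: a PxxP core associated with SH3 binding, and a class I PDZ-binding C-terminus ([S/T]-x-[V/I/L] at the very end of the protein). These patterns are assumptions chosen for illustration. Real analyses should use the curated, context-aware expressions distributed by ELM. The example sequence is made up.

```python
import re

# Simplified, illustrative motif patterns (NOT the curated ELM regular expressions).
MOTIFS = {
    "SH3_PxxP_core": r"P..P",
    "PDZ_classI_Cterm": r"[ST].[VIL]$",  # anchored to the protein C-terminus
}


def scan_slims(sequence: str, motifs: dict[str, str] = MOTIFS):
    """Return (motif_name, 1-based start, matched_text) for every hit, sorted by start.

    Each pattern is wrapped in a lookahead so overlapping matches (e.g. in PPPPPP)
    are all reported rather than only non-overlapping ones.
    """
    hits = []
    for name, pattern in motifs.items():
        for m in re.finditer(f"(?=({pattern}))", sequence):
            hits.append((name, m.start() + 1, m.group(1)))
    return sorted(hits, key=lambda h: h[1])


for hit in scan_slims("MAPLPKRPAAPQSEV"):
    print(hit)
```

Regex hits are only candidates. The AP-MS interactors and the structural context (for example, whether a motif is surface-exposed) are what make a predicted SLiM credible.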

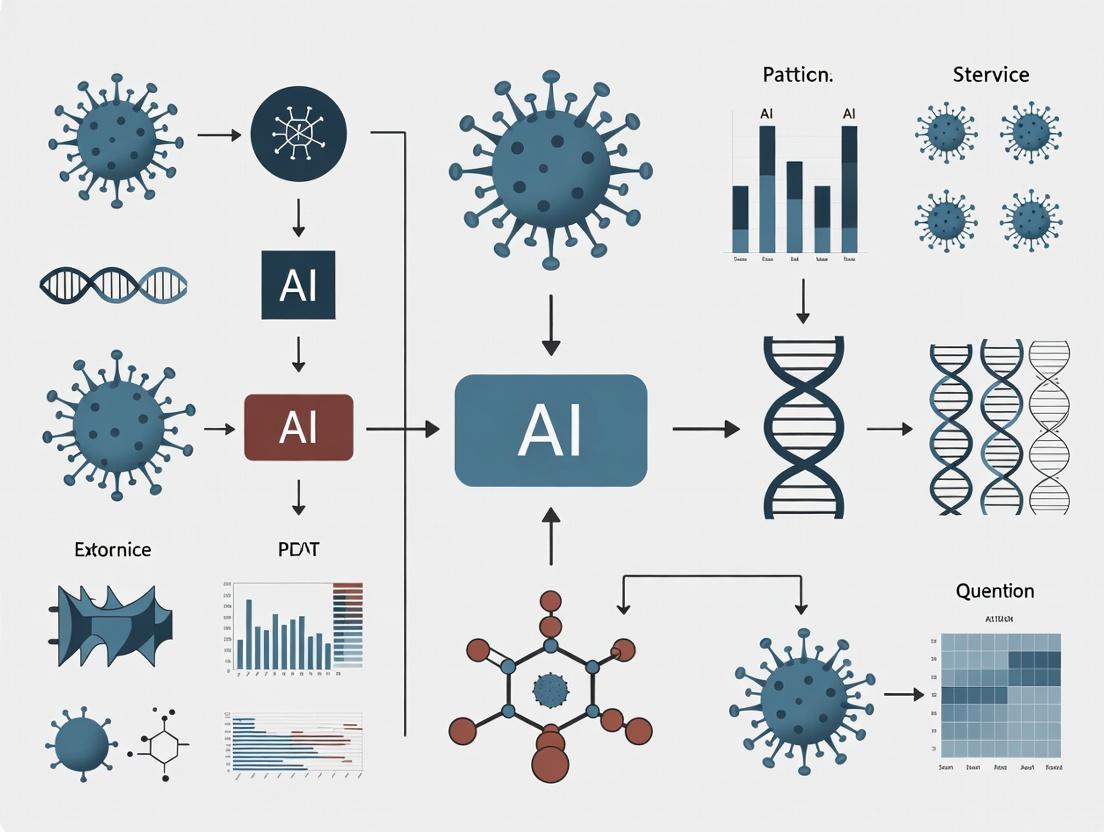

Visualization of Workflows and Relationships

Workflow: Viral Pattern Recognition Pathways

Logic: From Pattern Discovery to Application

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Research Reagent Solutions for Viral Sequence Pattern Studies

| Reagent/Material | Function/Application | Example Product/Kit |
|---|---|---|
| High-Fidelity Polymerase | Accurate amplification of viral genomes for sequencing; minimizes PCR-induced errors. | Q5 High-Fidelity DNA Polymerase, SuperScript IV for RT. |
| Metagenomic Sequencing Kit | Unbiased capture of viral sequences from complex samples (e.g., wastewater, tissue). | Illumina Nextera XT, Oxford Nanopore SQK-RBK114. |
| Variant Calling Pipeline Software | Specialized tools for identifying low-frequency variants in viral populations. | iVar, LoFreq, VirVarSeq. |
| Multiple Sequence Alignment Tool | Aligns hundreds to thousands of sequences to identify conserved/variable regions. | MAFFT, Clustal Omega, MUSCLE. |
| Motif Discovery Suite | Identifies overrepresented sequence motifs in unaligned or aligned sequences. | MEME Suite, HMMER, GLAM2. |
| Affinity Purification Beads | Isolate tagged viral protein complexes from host cell lysates for interactome mapping. | Anti-FLAG M2 Magnetic Beads, Streptactin XT beads. |
| Phylogenetic Analysis Software | Reconstructs evolutionary relationships to trace patterns in time and geography. | Nextstrain, BEAST2, IQ-TREE. |
| Structural Prediction Platform | Infers 3D structure of viral proteins/RNA from sequence to guide functional insights. | AlphaFold2, RoseTTAFold, ViennaRNA. |

The advent of high-throughput sequencing (HTS) has transformed virology, generating datasets of unprecedented scale and complexity. The manual analytical techniques that sufficed a decade ago are now fundamentally incapable of extracting meaningful biological insights from these data streams. This whitepaper, framed within a broader thesis on AI for pattern recognition in viral sequences, details the technical limitations of manual analysis and presents the computational methodologies required to advance research and therapeutic development.

Quantitative Scale of the Challenge

The following table summarizes the quantitative gap between data generation capacity and manual analysis capability.

Table 1: Scale of Viral Genomics Data vs. Manual Analysis Capacity

| Metric | Current Scale (2024-2025 Estimates) | Manual Analysis Capacity | Disparity Factor |
|---|---|---|---|
| Sequences in Public Repositories (e.g., GISAID, NCBI Virus) | >300 million viral sequences | ~10-100 sequences per deep manual study | >10^6 |
| Data Generation Rate (per major sequencing project) | 1 TB - 10 TB raw data | <1 GB analyzable via manual inspection | >10^3 |
| Time for Phylogenetic Tree Construction (per 1,000 sequences) | Computational: Minutes to hours | Manual alignment & tree drawing: Weeks to months | >10^3 |
| Variant Surveillance (Number of mutations to track in real-time) | Millions of novel mutations/year (e.g., SARS-CoV-2) | Hundreds per analyst/year | >10^4 |
| Host-Pathogen Interaction Prediction (Potential epitopes per genome) | 100s - 1000s of potential epitopes | <10 characterized manually per study | >10^2 |

Core Technical Limitations of Manual Analysis

Dimensionality and Complexity

Viral genome analysis involves high-dimensional data (nucleotides, codons, structural elements, phenotypic metadata). Manual inspection can rarely integrate more than two or three of these dimensions at once, which leads to oversimplified models.

Temporal Dynamics and Real-Time Surveillance

Global surveillance platforms generate thousands of sequences daily. Manual curation and annotation pipelines introduce lags of weeks, crippling pandemic response.

Detection of Weak, High-Dimensional Signals

Complex patterns, such as convergent evolution across non-contiguous genomic regions or subtle recombination signals, are defined statistically across many sequences and are effectively invisible to manual review.

Experimental Protocols: From Data to Insight

This section outlines standard protocols that generate the data volumes necessitating automated, AI-driven analysis.

Protocol 1: Large-Scale Viral Metagenomic Sequencing for Outbreak Surveillance

- Sample Collection & Nucleic Acid Extraction: Use automated platforms (e.g., QIAcube) for high-throughput extraction from environmental or clinical samples.

- Library Preparation: Employ shotgun or target-enrichment (e.g., Twist Pan-Viral Panel) protocols on robotic liquid handlers.

- Sequencing: Run on platforms like Illumina NovaSeq X (up to 16Tb/run) or Oxford Nanopore GridION/PromethION for real-time output.

- Primary Computational Analysis: This is where manual methods fail. It requires:

  - Basecalling & Demultiplexing: Dorado (Nanopore), bcl2fastq (Illumina).

  - Quality Trimming: Fastp, Trimmomatic.

  - Host Subtraction: Alignment to host genome (Bowtie2, BWA).

  - De novo Assembly & Contig Binning: MetaSPAdes, CLC Assembly Cell.

  - Taxonomic Assignment: Alignment (BLAST, DIAMOND) to curated DBs (RefSeq) or k-mer based (Kraken2).
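To illustrate the k-mer idea behind tools like Kraken2, the sketch below assigns a read to whichever reference shares the most exact k-mers with it. This is a deliberate simplification. Kraken2 itself maps minimizers to lowest common ancestors in a taxonomy tree, and this toy majority vote does not attempt that. The reference names, sequences, and k value are hypothetical, and reverse complements are ignored for brevity.

```python
from collections import Counter


def kmers(seq: str, k: int):
    """Yield all overlapping k-mers of seq (uppercased)."""
    seq = seq.upper()
    for i in range(len(seq) - k + 1):
        yield seq[i:i + k]


def build_index(references: dict[str, str], k: int) -> dict[str, set[str]]:
    """Map each k-mer to the set of reference labels that contain it."""
    index: dict[str, set[str]] = {}
    for label, seq in references.items():
        for km in kmers(seq, k):
            index.setdefault(km, set()).add(label)
    return index


def classify_read(read: str, index: dict[str, set[str]], k: int, min_hits: int = 1):
    """Return the label sharing the most k-mers with the read, or None.

    k-mers shared by several references vote for all of them; ties return None.
    Reverse-complement k-mers are not considered in this toy version.
    """
    votes = Counter()
    for km in kmers(read, k):
        for label in index.get(km, ()):
            votes[label] += 1
    if not votes:
        return None
    ranked = votes.most_common(2)
    best, best_count = ranked[0]
    if best_count < min_hits or (len(ranked) > 1 and ranked[1][1] == best_count):
        return None
    return best


refs = {"virusA": "ACGTACGTTGCA", "virusB": "TTTTGGGGCCCC"}  # hypothetical references
idx = build_index(refs, k=4)
print(classify_read("ACGTACG", idx, k=4), classify_read("AAAAAAA", idx, k=4))
```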

Protocol 2: Longitudinal Intra-Host Viral Evolution Study

- Time-Series Sampling: Collect serial samples from infected host (human, animal model).

- Deep Sequencing: Achieve high coverage (>10,000x) to detect low-frequency variants.

- Variant Calling:

  - Map reads to reference genome (BWA-MEM, Minimap2).

  - Identify variants (LoFreq, iVar) with minimum frequency thresholds (e.g., 0.1%).

- Critical Analysis Step: Linkage disequilibrium and haplotype reconstruction across the genome (requires computational tools like PredictHaplo or QuasiRecomb).

- Phenotypic Correlation: Link variant patterns to clinical metadata (e.g., drug resistance, virulence). This multi-variable correlation is impractical to perform manually at scale.
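The coverage requirement follows from simple binomial arithmetic. At 10,000x, a variant at 0.1% frequency is expected in only about 10 reads, which is the same order as the background produced by a per-base error rate of roughly 0.1%. The sketch below tests whether an observed alt-read count exceeds what a single, uniform error rate would explain. It is a simplified stand-in, not LoFreq's method. The uniform `err` and the strict `alpha` are assumptions, whereas LoFreq models per-base quality scores (a Poisson-binomial) and applies multiple-testing correction.

```python
import math


def binom_upper_tail(k: int, n: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p), summed in log space for numerical stability."""
    if k <= 0:
        return 1.0
    if k > n:
        return 0.0
    log_p, log_q = math.log(p), math.log1p(-p)
    total = 0.0
    for i in range(k, n + 1):
        log_pmf = (math.lgamma(n + 1) - math.lgamma(i + 1) - math.lgamma(n - i + 1)
                   + i * log_p + (n - i) * log_q)
        term = math.exp(log_pmf)
        total += term
        # Beyond the mode (~n*p) terms only shrink, so stop once they are negligible.
        if i > n * p and term <= total * 1e-15:
            break
    return min(total, 1.0)


def is_minority_variant(alt_reads: int, depth: int,
                        err: float = 1e-3, alpha: float = 1e-6) -> bool:
    """Call a variant if alt_reads is unlikely to arise from sequencing error alone."""
    return binom_upper_tail(alt_reads, depth, err) < alpha


# At 10,000x with a 0.1% error rate, ~10 error reads are expected per site.
print(is_minority_variant(15, 10_000), is_minority_variant(40, 10_000))
```

With these assumptions, 15 supporting reads are indistinguishable from error, while 40 are not. This is why the protocol pairs a frequency threshold with very deep coverage.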

Visualizing the Analytical Workflow and AI Integration

The following diagrams, created using Graphviz DOT language, illustrate the required computational workflows.

Diagram 1: Manual bottleneck vs AI path in viral data analysis.

Diagram 2: AI pattern recognition engine for integrated viral analysis.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Research Reagents & Computational Tools for Viral Genomics

| Item | Function & Relevance | Example Product/Software |
|---|---|---|
| High-Fidelity Polymerase | Reduces sequencing errors during amplification, crucial for accurate variant calling. | Q5 High-Fidelity DNA Polymerase (NEB) |
| Pan-Viral Enrichment Probes | Capture viral sequences from complex samples for sensitive detection. | Twist Comprehensive Viral Research Panel |
| Ultra-Pure Nucleic Acid Kits | Prepares high-integrity RNA/DNA for long-read sequencing. | ZymoBIOMICS Miniprep Kit |
| Metatranscriptomic Library Prep Kits | Enables direct sequencing of viral RNA, capturing replication intermediates. | Illumina Stranded Total RNA Prep |
| Barcoded Multiplexing Kits | Allows pooling of hundreds of samples, enabling cost-effective large-scale studies. | Oxford Nanopore Native Barcoding Kit 96 |
| AI-Ready Reference Databases | Curated, annotated databases for training and validating AI models. | NCBI Virus, GISAID EpiCoV database |
| Cloud Computing Platform | Provides scalable compute for genome assembly, phylogenetics, and AI model training. | Google Cloud Life Sciences, AWS HealthOmics |
| Specialized AI Frameworks | Libraries for building custom deep learning models on biological sequences. | TensorFlow with BioSeq-API, PyTorch Geometric for graphs |

The accelerated evolution of viruses presents a formidable challenge to global public health. Traditional sequence analysis methods are increasingly insufficient for deciphering the complex patterns that govern viral adaptation, immune evasion, and pathogenesis. This whitepaper details the four fundamental pattern types—Motifs, Variants, Recombination Signals, and Evolutionary Signatures—which form the core substrate for advanced artificial intelligence (AI) models in viral research. The systematic identification and interpretation of these patterns are critical for developing broad-spectrum antivirals, universal vaccines, and predictive outbreak models.

Defining the Core Pattern Types

Motifs: Conserved Functional Signatures

Motifs are short, conserved sequence or structure patterns associated with a specific biological function. In viral genomes, they often represent enzyme active sites, receptor-binding domains, packaging signals, or regulatory elements.

Table 1: Key Viral Motif Types and Functions

| Motif Type | Typical Length | Primary Function | Example (Virus) | AI Detection Method |
|---|---|---|---|---|
| Linear Sequence | 5-20 bp/aa | Protein binding, cleavage sites | Furin cleavage site (SARS-CoV-2 S protein) | Position-Specific Scoring Matrices (PSSMs), CNNs |
| Structural RNA | 50-200 nt | Genome packaging, replication | HIV-1 psi (Ψ) packaging signal | Graph Neural Networks (GNNs) on secondary structure |
| Phosphorylation Sites | 3-7 aa | Regulation of protein activity | NS5A phosphosites (HCV) | Logistic regression on kinase-specific patterns |
| Nuclear Localization Signal (NLS) | 4-8 aa | Nuclear import of viral proteins | SV40 Large T-antigen NLS | Motif finding algorithms (e.g., MEME, DREME) |
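Table 1 lists PSSMs as a detection method for linear motifs. The sketch below builds a log-odds PSSM from a handful of aligned sites and then scores every window of a query sequence with it. Several choices here are assumptions: the training sites are hypothetical sequences loosely resembling the R-x-[K/R]-R furin consensus, the background is taken as uniform over the 20 standard amino acids, and the pseudocount of 1 is arbitrary.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def build_pssm(sites: list[str], pseudocount: float = 1.0) -> list[dict[str, float]]:
    """Log2-odds PSSM from equal-length aligned sites against a uniform background."""
    width = len(sites[0])
    background = 1.0 / len(AMINO_ACIDS)
    pssm = []
    for pos in range(width):
        column = [s[pos] for s in sites]
        denom = len(column) + pseudocount * len(AMINO_ACIDS)
        pssm.append({
            aa: math.log2(((column.count(aa) + pseudocount) / denom) / background)
            for aa in AMINO_ACIDS
        })
    return pssm


def scan(sequence: str, pssm: list[dict[str, float]]) -> list[tuple[int, str, float]]:
    """Score every window; returns (1-based start, window, score), best first.

    Windows containing non-standard residues (e.g. X) are skipped.
    """
    width = len(pssm)
    results = []
    for i in range(len(sequence) - width + 1):
        window = sequence[i:i + width]
        if all(aa in pssm[0] for aa in window):
            score = sum(pssm[j][aa] for j, aa in enumerate(window))
            results.append((i + 1, window, score))
    return sorted(results, key=lambda r: r[2], reverse=True)


sites = ["RRAR", "RRKR", "RSRR", "RTKR"]  # hypothetical aligned training sites
best = scan("GGSPRRARSVGG", build_pssm(sites))[0]  # made-up query peptide
print(best[0], best[1], round(best[2], 2))
```

A CNN's first convolutional layer learns filters that play a role similar to these hand-built matrices, which is why the two methods appear together in the table.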

Variants: Population-Level Mutations

Variants are mutations that achieve significant frequency within a viral population. Their patterns of emergence and fixation are key to understanding viral fitness and transmissibility.

Table 2: Quantitative Impact of Key Variant Classes (2020-2023)

| Variant Class | Avg. Mutation Rate (nt/genome/replication) | Typical Selection Coefficient (s) | Key Driver of Emergence | Dominant AI Analysis Tool |
|---|---|---|---|---|
| Immune Escape | 1-2 x 10^-3 (RNA viruses) | 0.05 - 0.3 | Host immune pressure | Transformer models (e.g., ESM-2) |
| Transmissibility-Enhancing | 1-5 x 10^-4 | 0.1 - 0.5 | Human-to-human adaptation | Phylogenetic Inference with ML (PAML, BEAST2) |
| Drug Resistance | 1 x 10^-5 - 1 x 10^-4 | 0.2 - 1.0 (strong selection) | Antiviral therapy | 3D Convolutional Networks on protein structures |
| Host Range Expansion | Variable | 0.01 - 0.2 | Cross-species transmission | Random Forests on host-specific residue features |

Recombination Signals: Genomic Rearrangements

Recombination is the exchange of genetic material between viral genomes co-infecting the same cell, producing novel chimeric genomes. Breakpoint signals and parental strain identification are critical detection targets.

Experimental Protocol: Identification of Recombination Breakpoints via Deep Sequencing

Objective: To accurately identify recombination breakpoints in mixed viral populations using next-generation sequencing (NGS) and AI-based signal processing.

Materials:

- Viral RNA from co-infected cell culture or clinical sample.

- Reverse transcriptase and high-fidelity PCR kits.

- NGS platform (Illumina MiSeq/NextSeq).

- Bioinformatics pipelines (RDP5, Simplot, in-house ML scripts).

Procedure:

- Library Preparation: Perform RT-PCR with overlapping amplicons spanning the full genome. Use barcoded adapters for multiplexing.

- Sequencing: Run on NGS platform to achieve minimum 10,000x coverage per sample.

- Primary Alignment: Map reads to reference genomes using BWA-MEM or Bowtie2.

- Signal Detection: Apply sliding-window analysis (200-nt windows, 20-nt step) to calculate similarity scores to potential parental strains.

- AI-Based Confirmation: Input window scores into a Gradient Boosting classifier (e.g., XGBoost) trained on known recombinant/non-recombinant sequences to identify statistically supported breakpoints (p < 0.001).

- Validation: Sanger sequence across predicted breakpoints from original sample.
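The signal-detection step (200-nt windows, 20-nt step) can be sketched as follows. For each window, the code computes the percent identity of the query to each candidate parent and then reports where the best-matching parent changes. This is a toy illustration on pre-aligned sequences. It omits the statistical testing and the gradient-boosting confirmation described in the procedure, and the sequences used below are synthetic.

```python
def window_identity(query: str, parent: str, start: int, size: int) -> float:
    """Fraction of identical positions in one window, skipping gap-containing columns."""
    pairs = [(q, p) for q, p in zip(query[start:start + size], parent[start:start + size])
             if q != "-" and p != "-"]
    if not pairs:
        return 0.0
    return sum(q == p for q, p in pairs) / len(pairs)


def best_parent_track(query: str, parents: dict[str, str], size: int = 200, step: int = 20):
    """For each window start, return (start, label of the most similar parent).

    All sequences must already be aligned to the same length.
    """
    track = []
    for start in range(0, len(query) - size + 1, step):
        scores = {name: window_identity(query, seq, start, size)
                  for name, seq in parents.items()}
        track.append((start, max(scores, key=scores.get)))
    return track


def candidate_breakpoints(track):
    """(window start, previous parent, new parent) wherever the best parent switches."""
    return [(start, prev, cur) for (_, prev), (start, cur) in zip(track, track[1:])
            if prev != cur]


parent_a = "ACGT" * 150  # synthetic 600-nt "parent A"
parent_b = "TGCA" * 150  # differs from parent A at every position
query = parent_a[:310] + parent_b[310:]  # synthetic recombinant, crossover at 310
print(candidate_breakpoints(best_parent_track(query, {"A": parent_a, "B": parent_b})))
```

The switch is reported at window start 220. That window spans positions 220-419 and is the first to contain more B-derived than A-derived sequence, so the window coordinates bracket the true crossover rather than pinpoint it. This is one reason the protocol refines breakpoints with Sanger sequencing.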

Evolutionary Signatures: Long-Term Adaptive Patterns

These are patterns of change across phylogenies, including convergent evolution, adaptive radiation, and selective sweeps, which reveal long-term strategies of viral adaptation.

Table 3: Metrics for Quantifying Evolutionary Signatures

| Signature | Primary Metric | Calculation Method | Interpretation | AI/Statistical Model |
|---|---|---|---|---|
| Positive Selection | dN/dS (ω) | Ratio of non-synonymous to synonymous substitution rates | ω > 1 indicates adaptive evolution | FUBAR, FEL, MEME (HyPhy package) |
| Convergent Evolution | Homoplasy Count | Independent emergence of identical mutations | Suggests strong selective pressure | Bayesian phylogenetic mapping (BEAST2) |
| Selective Sweep | Reduction in Diversity (π) | π in region vs. genome background ($\pi_{\text{region}} / \pi_{\text{background}}$) | Value near 0 indicates recent sweep | Hidden Markov Models (HMMs) on SNP density |
| Evolutionary Rate Acceleration | Branch-Specific Rate (r) | Substitutions/site/year on specific phylogenetic branch | Spike in r indicates rapid adaptation | Gaussian Process regression on time-scaled trees |
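The selective-sweep row compares nucleotide diversity (π, the mean proportion of differing sites between pairs of sequences) in a region with the genome-wide background. The sketch below is minimal and rests on a few assumptions. Gap-containing positions are skipped pairwise, which is one of several conventions. The region is given in 0-based, half-open coordinates. The "background" is the whole alignment, including the region itself. The HMM-based scanning mentioned in the table is not shown.

```python
from itertools import combinations
from typing import Optional


def nucleotide_diversity(seqs: list[str], start: int = 0, end: Optional[int] = None) -> float:
    """Mean pairwise proportion of differing sites within seqs[start:end]."""
    segment = [s[start:end] for s in seqs]
    diffs = []
    for a, b in combinations(segment, 2):
        compared = [(x, y) for x, y in zip(a, b) if x != "-" and y != "-"]
        if compared:
            diffs.append(sum(x != y for x, y in compared) / len(compared))
    return sum(diffs) / len(diffs) if diffs else 0.0


def sweep_ratio(seqs: list[str], region_start: int, region_end: int) -> float:
    """pi_region / pi_background; values near 0 are consistent with a recent sweep."""
    pi_region = nucleotide_diversity(seqs, region_start, region_end)
    pi_background = nucleotide_diversity(seqs)  # whole alignment, region included
    return pi_region / pi_background if pi_background else float("nan")


aligned = ["AAAACCCC", "AAAACGCC", "AAAACCGC"]  # made-up alignment
print(nucleotide_diversity(aligned), sweep_ratio(aligned, 0, 4))
```

In this toy alignment, the first four columns are identical across sequences, so the region's ratio is 0. A low ratio on its own is only suggestive, because purifying selection and low mutation rates can also reduce local diversity.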

AI Methodologies for Pattern Recognition

Workflow for Integrated Pattern Analysis

AI Pattern Recognition Workflow in Viral Genomics

Signaling Pathway of Viral Adaptation Driven by Pattern Interplay

Viral Adaptation Pathway via Pattern Interplay

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents and Materials for Viral Pattern Research

| Reagent/Material | Supplier Examples | Function in Pattern Analysis | Critical Specification |
|---|---|---|---|
| High-Fidelity RT-PCR Kit | Thermo Fisher, Takara | Amplification for NGS; minimizes artificial recombination. | Error rate < 2 x 10^-6 /nt. |
| Target Enrichment Probes (Viral Panels) | Twist Bioscience, IDT | Capture viral sequences from complex clinical samples for deep variant calling. | Coverage uniformity > 95%. |
| Synthetic Viral Controls (RNA) | ATCC, GenScript | Positive controls for mutation/recombination detection assays. | Quantified mutation mix. |
| NGS Library Prep with UMIs | Illumina, New England Biolabs | Unique Molecular Identifiers (UMIs) enable error correction for accurate variant frequency. | > 90% UMI utilization. |
| Neutralization Antibody Panel | BEI Resources, Sino Biological | Assess functional impact of variant/motif changes in pseudovirus assays. | WHO international standard traceable. |
| CRISPR-based Viral Activation (CRISPRa) | Synthego, Santa Cruz Biotech | Activate latent or low-frequency variants for phenotypic characterization. | > 50-fold activation efficiency. |
| Phylogenetic Analysis Suite (Software) | Nextstrain, Geneious Prime | Integrated platform for evolutionary signature analysis and visualization. | Real-time data integration. |
| AI/ML Cloud Compute Credits | AWS, Google Cloud | Resources for training large models (ESM-2, AlphaFold) on viral protein sequences. | GPU (A100/V100) access. |

Experimental Protocols

Protocol: Deep Mutational Scanning (DMS) for Variant Effect Prediction

Objective: Empirically measure the fitness effect of all possible single amino acid substitutions in a viral protein domain.

Materials:

- Plasmid library encoding all possible single mutants of target protein.

- Mammalian cell line (e.g., HEK293T) for viral protein expression.

- Flow cytometer with cell sorting capability.

- NGS platform (Illumina).

Procedure:

- Library Transfection: Transfect mutant plasmid library into cells in triplicate.

- Functional Selection: Apply selection pressure (e.g., antibody binding for RBD, enzyme activity assay).

- Cell Sorting: Use FACS to separate high-fitness and low-fitness populations based on fluorescent reporter.

- NGS Recovery: Isolate plasmid DNA from sorted populations and amplify the mutant region for NGS.

- Variant Frequency Analysis: Sequence each population to >500x coverage. Count reads for each mutant.

- Fitness Score Calculation: Compute the enrichment score $\log_2\left(f_{\text{post-selection}} / f_{\text{input}}\right)$, where $f$ is a mutant's read frequency in the respective population.

- AI Model Training: Use scores as ground truth to train a neural network on protein sequence/structure features.
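The fitness-score step reduces to a log2 ratio of each mutant's frequency after selection to its frequency in the input library. The sketch below assumes raw read counts per mutant and adds a pseudocount of 0.5 to every count. The pseudocount is an assumption, used so that a mutant absent from one library does not produce an infinite score. Many published DMS pipelines additionally normalize each score to wild type, which is omitted here. The mutant labels and counts are made up.

```python
import math


def enrichment_scores(input_counts: dict[str, int], selected_counts: dict[str, int],
                      pseudocount: float = 0.5) -> dict[str, float]:
    """log2(f_selected / f_input) per mutant, with a pseudocount added to every count."""
    mutants = set(input_counts) | set(selected_counts)
    n_in = sum(input_counts.values()) + pseudocount * len(mutants)
    n_sel = sum(selected_counts.values()) + pseudocount * len(mutants)
    scores = {}
    for m in sorted(mutants):
        f_in = (input_counts.get(m, 0) + pseudocount) / n_in
        f_sel = (selected_counts.get(m, 0) + pseudocount) / n_sel
        scores[m] = math.log2(f_sel / f_in)
    return scores


input_lib = {"N1Y": 100, "K2E": 100, "G3D": 100}   # hypothetical pre-selection counts
sorted_lib = {"N1Y": 400, "K2E": 100}              # G3D not recovered after sorting
for mutant, score in enrichment_scores(input_lib, sorted_lib).items():
    print(mutant, round(score, 2))
```

The resulting scores are the "ground truth" labels referred to in the model-training step. Their noise floor depends heavily on input-library coverage, which is why the protocol specifies >500x.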

Protocol: Detecting Recombination in Circulating Viral Populations

Objective: Identify and characterize novel recombinant viruses from surveillance sequencing data.

Materials:

- De-identified bulk RNA-seq or targeted sequencing data from surveillance.

- High-performance computing cluster.

- Reference genome database (NCBI, GISAID).

Procedure:

- Read Mapping: Map all reads to a comprehensive reference panel using a sensitive aligner (minimap2).

- Chimeric Read Identification: Extract reads with secondary alignments or split alignments (using samtools).

- Bootscanning: For each sample, perform bootscan analysis (in RDP5) with 1000 bootstrap replicates, a 500-nt window, and a 20-nt step.

- Confidence Assignment: Recombination events are accepted if supported by ≥3 independent methods in RDP5 (RDP, GENECONV, MaxChi, etc.) with p < 0.05.

- Breakpoint Refinement: Use NGS read depth and soft-clipping patterns at predicted breakpoints for precise localization.

- Phenotype Prediction: Input recombinant sequence into trained AI model (e.g., on host tropism or antibody escape) to prioritize for in vitro testing.
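For the chimeric-read step, it helps to know how split alignments appear in SAM output. The SAM specification marks supplementary records with FLAG bit 0x800 and secondary records with bit 0x100, and lists the other parts of a chimeric alignment in an `SA:Z` tag. The sketch below filters raw SAM text lines on those fields using only the standard library. In practice, `samtools view` with its `-f`/`-F` flag filters, or pysam, would do the same job. The example records are made up and keep only the mandatory fields plus one tag.

```python
SECONDARY = 0x100
SUPPLEMENTARY = 0x800


def is_chimeric_candidate(sam_line: str) -> bool:
    """True for secondary/supplementary records or records carrying an SA tag."""
    if sam_line.startswith("@"):
        return False  # header line
    fields = sam_line.rstrip("\n").split("\t")
    flag = int(fields[1])
    if flag & (SECONDARY | SUPPLEMENTARY):
        return True
    # Optional tags start after the 11 mandatory SAM fields.
    return any(tag.startswith("SA:Z:") for tag in fields[11:])


def chimeric_read_names(sam_lines) -> set[str]:
    """QNAMEs of reads with at least one chimeric-candidate record."""
    return {line.split("\t", 1)[0] for line in sam_lines if is_chimeric_candidate(line)}


sam = [
    "@HD\tVN:1.6",
    "read1\t0\trefA\t100\t60\t150M\t*\t0\t0\tACGT\tFFFF",
    "read2\t2048\trefB\t500\t60\t80S70M\t*\t0\t0\tACGT\tFFFF",
    "read3\t0\trefA\t200\t60\t70M80S\t*\t0\t0\tACGT\tFFFF\tSA:Z:refB,900,+,70S80M,60,0;",
]
print(sorted(chimeric_read_names(sam)))
```

Reads whose segments map to different reference strains (refA and refB above) are the ones worth passing to bootscanning and breakpoint refinement.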

The systematic decomposition of viral genomics into Motifs, Variants, Recombination Signals, and Evolutionary Signatures provides a robust framework for AI-driven discovery. The integration of these patterns, through the workflows and experimental protocols detailed herein, enables a shift from reactive to predictive viral research. The next frontier lies in building multimodal AI systems that combine these sequence patterns with structural, epidemiological, and clinical data to anticipate viral emergence and design preemptive countermeasures, ultimately forming the core of a comprehensive thesis on AI for pandemic preparedness.

In the field of viral genomics, the rapid identification and analysis of genetic patterns is critical for pandemic preparedness, vaccine design, and antiviral drug development. This technical guide examines the core artificial intelligence (AI) paradigms—traditional Machine Learning (ML) and Deep Learning (DL)—applied to nucleotide and amino acid sequence analysis. The choice of paradigm directly impacts the accuracy of identifying virulence factors, predicting mutation impacts, and classifying novel viral strains. This overview is framed within a broader thesis on optimizing AI-driven pattern recognition for accelerated virological research and therapeutic discovery.

Foundational Concepts: ML vs. DL for Sequences

Machine Learning for sequences typically involves a two-stage pipeline: 1) Feature engineering, where domain knowledge is used to extract meaningful representations (e.g., k-mer frequencies, physicochemical properties, entropy scores), and 2) Model training using algorithms like Support Vector Machines (SVMs) or Random Forests on these hand-crafted features.

Deep Learning, specifically using architectures like Recurrent Neural Networks (RNNs), Long Short-Term Memory networks (LSTMs), and Transformers, aims to automate feature extraction. These models ingest raw or minimally preprocessed sequences (e.g., one-hot encoded nucleotides) and learn hierarchical representations directly from the data.
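As a concrete example of "minimally preprocessed" input, one-hot encoding represents each nucleotide as a length-4 indicator vector, so a sequence of length L becomes an L x 4 matrix. The sketch below uses plain Python lists. Treating U as T and mapping ambiguous bases such as N (or gaps) to an all-zero row are assumptions. Some pipelines instead spread ambiguity as 0.25 across the four columns.

```python
NUCLEOTIDES = "ACGT"


def one_hot(seq: str) -> list[list[int]]:
    """Encode a DNA/RNA sequence as an L x 4 matrix with columns A, C, G, T/U."""
    matrix = []
    for base in seq.upper().replace("U", "T"):
        row = [0, 0, 0, 0]
        idx = NUCLEOTIDES.find(base)
        if idx >= 0:  # ambiguous bases and gaps stay all-zero
            row[idx] = 1
        matrix.append(row)
    return matrix


for row in one_hot("ACGUN"):
    print(row)
```

In practice the lists would be converted to an array or tensor before being fed to a CNN, LSTM, or Transformer.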

The distinction is crucial in virology, where the relationship between sequence variation and phenotypic outcome (e.g., transmissibility, antigenic drift) can be complex and non-linear.

Comparative Quantitative Analysis

The following table summarizes the performance and resource characteristics of ML and DL approaches based on recent benchmarking studies in viral bioinformatics.

Table 1: Comparative Performance Metrics for Viral Sequence Classification Tasks

| Aspect | Traditional ML (e.g., SVM with k-mers) | Deep Learning (e.g., CNN/Transformer) | Notes & Source |
|---|---|---|---|
| Typical Accuracy (SARS-CoV-2 lineage classification) | 92-95% | 96-99% | DL models pull ahead on larger (>10k samples) datasets. (Recent benchmarks, 2024) |
| Feature Engineering Requirement | High (Manual) | Low (Automatic) | ML requires domain expertise for k-mer selection, etc. |
| Training Data Size Requirement | Lower (Can work on 100s of sequences) | High (Requires 1000s+ for robustness) | DL performance scales significantly with data volume. |
| Computational Cost (training time) | Low (1-10 hrs on CPU) | High (10-100+ hrs on GPU) | DL training is resource-intensive but inference is fast. |
| Interpretability | Moderate (Feature importance) | Low (Black-box) | SHAP values for ML; attention maps in DL offer partial insights. |
| Robustness to Novel Mutations | Can degrade without retraining | Better at generalizing from patterns | DL models infer based on learned latent spaces. |

Table 2: Common Model Architectures in Viral Sequence Analysis

| Model Type | Best For | Example Application in Virology | Key Limitation |
|---|---|---|---|
| SVM with string kernels | Small datasets, clear margins | Hepatitis C virus genotype classification | Scalability to billions of base pairs. |
| Random Forest | Feature importance analysis | Identifying key genomic regions for virulence | May miss complex long-range dependencies. |
| 1D Convolutional Neural Net (CNN) | Local motif detection | Influenza hemagglutinin antigenic site prediction | Struggles with very long-range interactions. |
| Bidirectional LSTM (BiLSTM) | Modeling sequence dependencies | HIV drug resistance prediction | Computationally slower than CNNs. |
| Transformer (e.g., DNABERT) | Context-aware long-range modeling | Pan-viral genome classification, variant effect prediction | Extreme data and computational requirements. |

Detailed Experimental Protocols

Protocol 4.1: Traditional ML Pipeline for Viral Variant Classification

Objective: Classify viral sequence reads into known variants (e.g., Alpha, Delta, Omicron).

Materials: See "The Scientist's Toolkit" (Section 6).

Methodology:

- Data Curation: Gather FASTA files from public repositories (GISAID, NCBI Virus). Perform multiple sequence alignment (MSA) using MAFFT or Clustal Omega.

- Feature Engineering:

- Extract k-mer frequencies (typical k=3 to 7 for nucleotides). This converts each sequence into a vector counting all possible sub-sequences of length k.

- Dimensionality Reduction: Apply Principal Component Analysis (PCA) or SelectKBest to reduce the very high-dimensional k-mer feature space.

- Model Training & Validation:

- Split data into training (70%), validation (15%), and test (15%) sets, stratified by variant, keeping near-identical sequences (e.g., from the same sampling cluster) within a single split to avoid data leakage.

- Train an SVM with a radial basis function (RBF) kernel or a Random Forest classifier on the training set.

- Optimize hyperparameters (e.g., SVM's C and gamma, Random Forest's tree depth) using grid search on the validation set.

- Evaluation: Report precision, recall, F1-score, and confusion matrix on the held-out test set.
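The k-mer feature-engineering step can be sketched in a few lines. Each sequence becomes a vector of length 4^k whose entries are the normalized counts of every possible k-mer. The k-mers are kept in a fixed lexicographic order so that vectors from different sequences are comparable. This is illustrative only. K-mers containing ambiguous bases or gaps are simply skipped, and the resulting vectors would then go through the dimensionality-reduction and classifier steps above (for example, scikit-learn's `SVC` or `RandomForestClassifier`). Note how quickly the space grows: k=7 already gives 16,384 features, which is why the reduction step matters.

```python
from itertools import product


def kmer_vocabulary(k: int) -> list[str]:
    """All 4**k nucleotide k-mers in lexicographic order (AA..A, AA..C, ...)."""
    return ["".join(p) for p in product("ACGT", repeat=k)]


def kmer_frequencies(seq: str, k: int) -> list[float]:
    """Relative frequency of every possible k-mer in seq (vector length 4**k)."""
    vocab = kmer_vocabulary(k)
    index = {km: i for i, km in enumerate(vocab)}
    counts = [0] * len(vocab)
    seq = seq.upper()
    for i in range(len(seq) - k + 1):
        j = index.get(seq[i:i + k])
        if j is not None:  # skips k-mers containing N, gaps, etc.
            counts[j] += 1
    total = sum(counts)
    return [c / total for c in counts] if total else [0.0] * len(vocab)


features = [kmer_frequencies(s, 3) for s in ("ACGTACGTAC", "TTGACCAGTA")]
print(len(features[0]))  # 64 features for k=3
```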

Protocol 4.2: Deep Learning (Transformer) Protocol for Mutation Impact Prediction

Objective: Predict the functional impact (e.g., neutral, increasing infectivity) of a point mutation in a viral spike protein gene.

Methodology:

- Data Preparation:

- Use labeled datasets from biochemical assays or epidemiological fitness estimates.

- Tokenization: For nucleotide sequences, use byte-pair encoding (BPE) or wordpiece tokenization. For amino acid sequences, use standard residue tokens.

- Format input as: [CLS] + sequence_context + [SEP] + mutant_residue_info + [SEP].

- Model Architecture & Training:

- Initialize a pre-trained sequence Transformer model (e.g., DNABERT for nucleotide input, or a protein language model such as ESM-2 for amino acid input).

- Add a task-specific head: a global average pooling layer followed by a fully connected layer for regression or classification.

- Employ transfer learning: Fine-tune all layers on the specific viral dataset using a low learning rate (e.g., 1e-5).

- Use a masked language modeling (MLM) objective in pre-training to help the model learn biophysical constraints.

- Training Regimen: Use the AdamW optimizer with gradient clipping. Apply heavy regularization (dropout, weight decay) due to limited labeled data.

- Interpretation: Generate attention maps to visualize which parts of the sequence the model "attends to" when making a prediction, offering biological insight.
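The input format from the data-preparation step ([CLS] + sequence_context + [SEP] + mutant_residue_info + [SEP]) can be made concrete with a small tokenizer for amino-acid input. Everything here is a hypothetical illustration: the vocabulary and special-token ids, the ±`flank` residue context window, and the choice to encode the mutation as two tokens (wild-type residue, then mutant residue). A pre-trained model such as DNABERT or ESM-2 ships with its own tokenizer and vocabulary, and that tokenizer should be used when fine-tuning the model.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
SPECIAL = ["[PAD]", "[CLS]", "[SEP]", "[UNK]"]
# Hypothetical vocabulary: special tokens first (ids 0-3), then the 20 residues (ids 4-23).
VOCAB = {tok: i for i, tok in enumerate(SPECIAL + list(AMINO_ACIDS))}


def encode_mutation(protein: str, position: int, mutant: str,
                    flank: int = 10, max_len: int = 32) -> tuple[list[int], list[int]]:
    """Build padded token ids and an attention mask for one point mutation.

    position is 1-based. The context is the wild-type window of +/- flank residues.
    Choose max_len >= 2 * flank + 6 so truncation never drops the final [SEP].
    """
    if not 1 <= position <= len(protein):
        raise ValueError("position out of range")
    i = position - 1
    context = protein[max(0, i - flank): i + flank + 1]
    tokens = ["[CLS]", *context, "[SEP]", protein[i], mutant, "[SEP]"]
    ids = [VOCAB.get(t, VOCAB["[UNK]"]) for t in tokens][:max_len]
    mask = [1] * len(ids)
    padding = max_len - len(ids)
    return ids + [VOCAB["[PAD]"]] * padding, mask + [0] * padding


ids, mask = encode_mutation("MKNYV", position=3, mutant="Y", flank=1, max_len=10)
print(ids)
print(mask)
```

The attention mask tells the model to ignore padding positions. The same masks are what the attention-map interpretation in the final step should respect.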

Visualizations

Diagram 1: ML vs DL workflow for sequence analysis

Diagram 2: Transformer architecture for viral sequences

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Computational Tools

| Item / Tool Name | Category | Primary Function in Viral Sequence AI |
|---|---|---|
| GISAID EpiCoV Database | Data Repository | Primary source for curated, annotated SARS-CoV-2 and influenza sequences with epidemiological metadata. |
| NCBI Virus | Data Repository | Comprehensive database for viral sequence data across all species, integrated with Entrez. |
| MAFFT / Clustal Omega | Bioinformatics Tool | Performs Multiple Sequence Alignment (MSA), a critical pre-processing step for many ML feature extraction methods. |
| scikit-learn | ML Library | Provides robust implementations of SVM, Random Forest, and other classical ML algorithms for model building. |
| TensorFlow / PyTorch | DL Framework | Flexible ecosystems for building, training, and deploying custom deep neural network architectures (CNNs, RNNs, Transformers). |
| Hugging Face Transformers | DL Library | Offers pre-trained Transformer models (e.g., DNABERT, ProteinBERT) adaptable for viral genomics via fine-tuning. |
| SHAP (SHapley Additive exPlanations) | Interpretability Tool | Explains output of any ML model, highlighting which sequence regions (k-mers) drove a prediction. |
| NVIDIA V100/A100 GPU | Hardware | Accelerates the training of large DL models, reducing time from weeks to days or hours. |
| DeepVariant (Google) | Specialized Tool | Uses a CNN to call genetic variants from sequencing data, improving accuracy over traditional methods. |

The application of Artificial Intelligence (AI) to viral genomics represents a paradigm shift in our ability to predict pathogen evolution, identify therapeutic targets, and accelerate drug discovery. This technical guide delineates the essential biological features—encoding sequences, conserved regions, and epistatic interactions—that must be accurately represented for AI models to succeed in this domain. Framed within a broader thesis on AI-driven pattern recognition, this document provides methodologies for data preparation, feature extraction, and experimental validation critical for researchers and drug development professionals.

Encoding Viral Sequences for Machine Learning

Numerical Representation Schemes

Raw nucleotide or amino acid sequences are not directly interpretable by machine learning algorithms. Multiple encoding strategies transform biological sequences into numerical vectors, each with distinct advantages for model learning.

Table 1: Comparative Analysis of Sequence Encoding Methods

| Encoding Method | Dimensionality | Captured Information | Best Suited for Model Type | Key Limitation |
|---|---|---|---|---|
| One-Hot | 4 (NT) or 20 (AA) per residue | Identity only | CNN, RNN | No physicochemical data |
| k-mer Frequency | 4^k (NT) or 20^k (AA) per sequence | Local context | SVM, Logistic Regression | High dimensionality for large k |
| Learned Embeddings (e.g., NLP-based) | 50-1024 per residue (custom) | Contextual semantics | Transformer, LSTM | Requires large pre-training dataset |
| Physicochemical Property Vectors | 5-10 per residue (custom) | Biochemical features | Random Forest, Regression | Incomplete representation |

Experimental Protocol: Generating k-mer Frequency Vectors

Objective: Convert a set of viral genome sequences into fixed-length numerical feature vectors based on k-mer counts.

- Sequence Preprocessing: Gather FASTA files and remove low-quality or incomplete sequences. k-mer counting is alignment-free, so a multiple sequence alignment (MSA) is not required; if sequences have already been aligned (e.g., with MAFFT v7 or Clustal Omega) for other features, strip gap characters before counting.

- k-mer Enumeration: For each sequence, slide a window of length k (typically 3-6 for nucleotides, 2-3 for amino acids) across its full length and count the occurrences of every possible k-mer, skipping windows that contain ambiguous symbols such as N.

- Normalization: Convert raw counts to frequencies by dividing each k-mer count by the total number of k-mers in the sequence, or use Term Frequency-Inverse Document Frequency (TF-IDF) normalization across the dataset to de-emphasize common k-mers.

- Vector Construction: Assemble the normalized frequency for each possible k-mer into a vector in a consistent order, creating a feature vector of length 4^k or 20^k for each input sequence.
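The four steps above can be condensed into one standard-library function. This is a minimal sketch under stated assumptions: gap characters are dropped, windows containing any symbol outside the chosen alphabet are skipped, and k-mers are ordered lexicographically so that every vector shares the same layout. The function name is illustrative.

```python
from itertools import product


def kmer_frequency_vector(seq: str, k: int = 3, alphabet: str = "ACGT") -> list:
    """Normalized k-mer frequencies in a fixed (lexicographic) order.

    Gap characters from an alignment are removed first, and any window that
    contains a symbol outside `alphabet` (e.g. an ambiguous 'N') is skipped.
    """
    seq = seq.upper().replace("-", "")
    kmers = ["".join(p) for p in product(alphabet, repeat=k)]  # all len(alphabet)**k k-mers
    index = {kmer: i for i, kmer in enumerate(kmers)}
    counts = [0] * len(kmers)
    for i in range(len(seq) - k + 1):
        j = index.get(seq[i:i + k])
        if j is not None:
            counts[j] += 1
    total = sum(counts)
    # Frequencies sum to 1; a sequence with no valid k-mer gets an all-zero vector.
    return [c / total for c in counts] if total else [0.0] * len(kmers)


vec = kmer_frequency_vector("ACGTN-AC", k=2)
print(len(vec), vec[1], vec[6], vec[11])  # 16 0.5 0.25 0.25  (AC, CG and GT)
```

For a dataset, stack one vector per sequence into a matrix. TF-IDF reweighting is usually applied to raw counts rather than frequencies (for example with scikit-learn's `TfidfTransformer`), and for larger k on big collections a dedicated counter such as Jellyfish or KMC3 is more practical.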

Diagram 1: Workflow for k-mer based sequence encoding.

Research Reagent Solutions: Sequence Encoding

| Item/Reagent | Function in Encoding | Example Product/Software |
|---|---|---|
| Multiple Sequence Alignment Tool | Aligns homologous sequences for positional encoding | MAFFT, Clustal Omega, MUSCLE |
| k-mer Counting Library | Efficiently generates k-mer frequency vectors | Jellyfish, KMC3, Biopython |
| NLP Embedding Framework | Learns continuous vector representations of sequences | ProtTrans (for proteins), DNABERT (for nucleotides) |
| Feature Normalization Library | Scales and normalizes numerical vectors for model stability | scikit-learn StandardScaler, Normalizer |

Identifying and Utilizing Conserved Regions

Conservation as a Feature for AI

Conserved genomic regions across viral strains indicate essential functions, such as structural integrity or enzymatic activity, making them prime targets for broad-spectrum therapeutics. AI models can use conservation scores as input features or as constraints to guide learning.

Experimental Protocol: Calculating Conservation Scores

Objective: Generate a per-position conservation score from a viral protein MSA.

- Curation of Dataset: Compile amino acid sequences for a specific viral protein (e.g., SARS-CoV-2 Spike, HIV-1 protease) from a public database (NCBI Virus, GISAID). Filter for high-quality, full-length sequences.

- Alignment: Perform a rigorous MSA (e.g., with MAFFT). To extend the alignment with distant homologs, use profile-based search tools such as HMMER (jackhmmer) or PSI-BLAST.

- Score Calculation: Apply an information-theoretic metric. The most common is Shannon Entropy: H(i) = - Σ p(a,i) log₂ p(a,i), where p(a,i) is the frequency of amino acid a at alignment column i. Low entropy indicates high conservation.

- Alternative Scores: Use a BLOSUM62-based sum-of-pairs score to give credit for biochemically similar substitutions, or relative entropy to measure divergence from a background amino acid distribution.

- Feature Integration: Append the conservation score for each position (or a window-averaged score) as an additional channel to the sequence encoding vector for that position.
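A standard-library sketch of the per-column scores described above. It assumes that gaps and non-standard residues are simply ignored (conventions differ between tools) and, for relative entropy, a uniform background q(a) = 1/20 unless a background distribution is supplied; the function names are illustrative.

```python
import math
from collections import Counter

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def column_entropy(column: str) -> float:
    """Shannon entropy H(i) = -sum_a p(a,i) log2 p(a,i), written as sum_a p log2(1/p).

    Gaps and non-standard symbols are ignored.
    """
    counts = Counter(c for c in column.upper() if c in AMINO_ACIDS)
    n = sum(counts.values())
    if n == 0:
        return float("nan")  # all-gap column
    return sum((c / n) * math.log2(n / c) for c in counts.values())


def column_relative_entropy(column: str, background=None) -> float:
    """Relative entropy D(i) = sum_a p(a,i) log2(p(a,i) / q(a)).

    `background` maps residue -> q(a); a uniform q(a) = 1/20 is assumed if omitted.
    """
    q = background or {a: 1 / len(AMINO_ACIDS) for a in AMINO_ACIDS}
    counts = Counter(c for c in column.upper() if c in AMINO_ACIDS)
    n = sum(counts.values())
    if n == 0:
        return float("nan")
    return sum((c / n) * math.log2((c / n) / q[a]) for a, c in counts.items())


def conservation_profile(msa: list) -> list:
    """(entropy, relative entropy) for every column of an equal-length alignment."""
    length = len(msa[0])
    if any(len(s) != length for s in msa):
        raise ValueError("aligned sequences must all have the same length")
    columns = ["".join(s[i] for s in msa) for i in range(length)]
    return [(column_entropy(c), column_relative_entropy(c)) for c in columns]


for h, d in conservation_profile(["MKV", "MKL", "MRI", "MR-"]):
    print(f"H = {h:.3f} bits, D = {d:.3f} bits")
```

The resulting per-position values (or a window average of them) are what the Feature Integration step appends as an extra input channel.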

Table 2: Conservation Metrics and Their Interpretation

| Metric | Formula | Range | Interpretation | Computational Cost |
|---|---|---|---|---|
| Shannon Entropy | H(i) = -Σ p(a,i) log₂ p(a,i) | 0 (invariant) to log₂ 20 ≈ 4.32 (max diversity, gaps excluded) | Pure frequency-based diversity | Low |
| Relative Entropy (Kullback-Leibler) | D(i) = Σ p(a,i) log₂ (p(a,i)/q(a)) | 0 (matches background) to log₂(1/min q(a)) | Divergence from background distribution q(a) | Medium |
| Sum-of-Pairs Score (e.g., BLOSUM62) | S(i) = Σ Σ p(a,i) p(b,i) BLOSUM(a,b) | Varies by matrix | Expected pairwise substitution score (log-odds) | Medium-High |

Diagram 2: From sequences to conserved targets.

Modeling Epistatic Interactions

The Challenge of Epistasis

Epistasis—where the effect of one mutation depends on the presence of others—is a fundamental driver of viral evolution and drug resistance. Modeling these high-order interactions is computationally challenging but critical for accurate phenotype prediction.

Experimental Protocol: Detecting Epistatic Pairs via Statistical Coupling Analysis (SCA)

Objective: Identify pairs of co-evolving positions in a viral protein MSA that suggest functional or structural coupling.

- Generate Large, Diverse MSA: Assemble a deep, evolutionarily diverse MSA (thousands of sequences) for the viral protein family.

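- Compute Positional Covariance: For each pair of alignment columns (i, j), calculate a covariation metric. Common choices are Direct Information (DI) from global statistical models such as Potts models, or Mutual Information (MI) corrected for phylogenetic and entropic background.

- MI(i,j) = Σ Σ p(ab,i,j) log₂ [ p(ab,i,j) / (p(a,i) p(b,j)) ], where p(ab,i,j) is the joint frequency of residue a at column i and residue b at column j.

- Correct MI with the Average Product Correction (APC): MIp(i,j) = MI(i,j) - [MI(i,·) MI(·,j)] / MI(·,·), where MI(i,·) is the mean MI between column i and all other columns and MI(·,·) is the mean MI over all column pairs.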

- Statistical Significance: Perform permutation tests, shuffling the residues within each column independently (which destroys covariation but preserves each column's composition), to build a null distribution and assign a p-value to each pair's coupling score (DI or APC-corrected MI).

- Network Construction & Validation: Build an epistatic network where nodes are positions and edges are significant coupling scores. Validate predicted couplings through known 3D structures (contacts in PDB) or deep mutational scanning experiments.
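A standard-library sketch of the MI, APC, and permutation-test steps. It is deliberately simplified: no sequence reweighting for phylogenetic redundancy, gaps treated as an ordinary symbol, and APC-corrected MI rather than Potts-model DI (for which dedicated tools such as EVcouplings or plmDCA are the practical choice). The function names are illustrative.

```python
import math
import random
from collections import Counter
from itertools import combinations


def mutual_information(col_i: str, col_j: str) -> float:
    """MI(i,j) = sum_ab p(ab,i,j) log2[p(ab,i,j) / (p(a,i) p(b,j))] for two alignment columns."""
    n = len(col_i)
    p_i, p_j = Counter(col_i), Counter(col_j)
    p_ij = Counter(zip(col_i, col_j))
    return sum(
        (c / n) * math.log2((c / n) / ((p_i[a] / n) * (p_j[b] / n)))
        for (a, b), c in p_ij.items()
    )


def apc_scores(msa: list) -> dict:
    """APC-corrected MI, MIp(i,j) = MI(i,j) - MI(i,.) MI(.,j) / MI(.,.), for every pair i < j."""
    length = len(msa[0])
    cols = ["".join(s[k] for s in msa) for k in range(length)]
    mi = {(i, j): mutual_information(cols[i], cols[j]) for i, j in combinations(range(length), 2)}
    # MI(i,.) = mean MI of column i with every other column; MI(.,.) = mean over all pairs
    col_mean = [
        sum(mi[(min(i, k), max(i, k))] for k in range(length) if k != i) / (length - 1)
        for i in range(length)
    ]
    overall = sum(mi.values()) / len(mi)
    if overall == 0:
        return dict.fromkeys(mi, 0.0)
    return {(i, j): v - col_mean[i] * col_mean[j] / overall for (i, j), v in mi.items()}


def permutation_pvalues(msa: list, n_perm: int = 500, seed: int = 0) -> dict:
    """Empirical p-value per pair; residues are shuffled within each column independently,
    which destroys covariation but keeps each column's composition."""
    rng = random.Random(seed)
    observed = apc_scores(msa)
    exceed = dict.fromkeys(observed, 0)
    cols = [[s[k] for s in msa] for k in range(len(msa[0]))]
    for _ in range(n_perm):
        for col in cols:
            rng.shuffle(col)
        shuffled = ["".join(col[r] for col in cols) for r in range(len(msa))]
        for pair, value in apc_scores(shuffled).items():
            if value >= observed[pair]:
                exceed[pair] += 1
    return {pair: (exceed[pair] + 1) / (n_perm + 1) for pair in observed}


toy_msa = ["ADG", "ADH", "ADG", "ADH", "CEG", "CEH", "CEG", "CEH"]  # columns 0 and 1 co-vary
print(apc_scores(toy_msa))
print(permutation_pvalues(toy_msa, n_perm=200))
```

Pairs whose p-value survives a multiple-testing correction would then become the edges of the epistatic network.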

Table 3: Results from a Notional SCA of HIV-1 Integrase

| Position i | Position j | Direct Information (DI) Score | p-value | Validated in 3D Structure? | Implication |
|---|---|---|---|---|---|
| 148 | 155 | 0.12 | <0.001 | Yes (4.5 Å) | Catalytic loop stability |
| 92 | 101 | 0.09 | 0.003 | No | Potential allosteric network |
| 66 | 153 | 0.07 | 0.015 | Yes (8.2 Å) | Drug resistance pathway |

Diagram 3: Epistatic network from SCA.

Research Reagent Solutions: Epistasis Analysis

| Item/Reagent | Function in Epistasis Analysis | Example Product/Software |
|---|---|---|
| Coevolution Analysis Suite | Calculates DI, MI, and builds Potts models | EVcouplings, GREMLIN, plmDCA |
| Deep Mutational Scanning Platform | Empirically tests mutational combinations | CombiGEM, ORF libraries, next-gen sequencing |
| Molecular Dynamics Simulation Suite | Validates predicted couplings via in silico structural analysis | GROMACS, AMBER, NAMD |

Integrating Features for Predictive AI Models

Multi-Modal Architecture

Effective models combine encoded sequence data, conservation profiles, and epistatic graphs. A proposed architecture uses:

- Convolutional Neural Networks (CNNs) to scan for local motifs in one-hot or embedding-encoded sequences.

- An Attention or Graph Neural Network (GNN) layer to process the epistatic interaction network, allowing information flow between coupled positions.

- Conservation scores used as attention weights or as a separate input channel to prioritize invariant regions.

Experimental Protocol: Training an Integrated Model for Drug Resistance Prediction

Objective: Train a model to predict phenotypic drug resistance from viral protease sequences.

- Data Compilation:

- Sequence & Label: Curate paired data: HIV-1 protease sequences and associated measured IC₅₀ fold-change for protease inhibitors (e.g., Darunavir).

- Features: For each sequence, generate: a) Learned embedding vector. b) Conservation profile from a large reference MSA. c) Epistatic edge list from a family-wide SCA.

- Model Design: Implement a hybrid model. Sequence embeddings pass through a CNN. The output per-position features are concatenated with conservation scores. These are then passed through a GNN layer whose connectivity is defined by the epistatic edge list (shared across all sequences). Final layers produce a regression prediction.

- Training & Validation: Use strict strain-based clustering for train/test splits to prevent data leakage. Optimize for mean squared error (MSE) on log-transformed fold-change values.

- Interpretation: Use GNN explainability tools (e.g., GNNExplainer) or attention weights to highlight positions driving the prediction, guiding experimental validation.
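The hybrid design can be sketched as a NumPy forward pass (no training loop). Layer sizes, the ReLU activations, the GCN-style normalized adjacency, mean pooling, and the two epistatic edges are illustrative assumptions; in practice the same structure would be written in PyTorch (or a GNN library on top of it) and trained with MSE on log-transformed fold-change, as the protocol describes.

```python
import numpy as np


def conv1d_same(x: np.ndarray, w: np.ndarray) -> np.ndarray:
    """1D convolution (cross-correlation, as in DL frameworks) with zero 'same' padding.

    x: (L, C_in) per-position embeddings; w: (K, C_in, C_out) with odd K.
    """
    k = w.shape[0]
    xp = np.pad(x, ((k // 2, k // 2), (0, 0)))
    return np.stack([np.einsum("kc,kco->o", xp[i:i + k], w) for i in range(x.shape[0])])


def hybrid_forward(emb, conservation, edges, params) -> float:
    """CNN -> concat conservation -> one graph-convolution layer over epistatic edges -> scalar."""
    h = np.maximum(conv1d_same(emb, params["conv_w"]), 0.0)   # (L, C) local motif features, ReLU
    h = np.concatenate([h, conservation[:, None]], axis=1)    # (L, C + 1) conservation channel
    n = h.shape[0]
    adj = np.eye(n)                                           # self-loops keep each position's signal
    for i, j in edges:                                        # edge list shared by all sequences
        adj[i, j] = adj[j, i] = 1.0
    deg = adj.sum(axis=1)
    norm_adj = adj / np.sqrt(np.outer(deg, deg))              # symmetric normalization, GCN-style
    g = np.maximum(norm_adj @ h @ params["gnn_w"], 0.0)       # messages between coupled positions
    pooled = g.mean(axis=0)                                   # average over positions
    return float(pooled @ params["out_w"] + params["out_b"])  # e.g. predicted log fold-change


rng = np.random.default_rng(0)
L, D, C, H = 99, 16, 8, 12  # HIV-1 protease has 99 residues; the other sizes are arbitrary
params = {
    "conv_w": rng.normal(scale=0.1, size=(5, D, C)),
    "gnn_w": rng.normal(scale=0.1, size=(C + 1, H)),
    "out_w": rng.normal(scale=0.1, size=H),
    "out_b": 0.0,
}
hypothetical_edges = [(29, 83), (45, 53)]  # 0-based indices, for illustration only
y = hybrid_forward(rng.normal(size=(L, D)), rng.random(L), hypothetical_edges, params)
print(y)
```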

Diagram 4: Integrated AI model architecture.

The accurate representation of encoding sequences, conserved regions, and epistatic interactions forms the biological feature bedrock for AI in viral sequence analysis. The methodologies outlined here—from k-mer vectorization and entropy calculations to statistical coupling analysis and hybrid model design—provide a reproducible framework for researchers. As these techniques mature, their integration will be pivotal in realizing the thesis of AI as a transformative tool for preempting viral evolution and discovering next-generation antivirals.

AI in Action: Methodologies and Real-World Applications for Viral Pattern Detection

The application of artificial intelligence (AI) to viral genomics represents a paradigm shift in our ability to decode evolutionary dynamics, predict host-virus interactions, and identify targets for therapeutic intervention. This whitepaper provides an in-depth technical analysis of three foundational neural network architectures—Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs) with Long Short-Term Memory (LSTM) units, and Transformers—applied specifically to sequential viral data. The broader thesis framing this work posits that systematic architectural comparison and hybridization are critical for advancing pattern recognition in viral sequences, ultimately accelerating the pace of discovery in virology and antiviral drug development.

Core Architectures & Applications to Viral Data

Convolutional Neural Networks (CNNs)

CNNs, renowned for spatial hierarchy learning in images, are adapted for viral nucleotide or amino acid sequences via 1D convolutions. They excel at detecting local motifs and conserved domains independent of their precise position, which is valuable for identifying protein family signatures or transcription factor binding sites in viral genomes.

- Key Mechanism: Filters (kernels) slide across the embedded sequence, generating feature maps that highlight the presence of specific k-mer patterns.

- Viral Research Application: Prediction of viral host range from genome composition, identification of protease cleavage sites, and classification of viral subtypes from sequence fragments.
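To make the filter mechanism concrete, the NumPy sketch below scans a one-hot encoded sequence with a single hand-built kernel and then applies global max pooling, which is what makes the detected motif position-independent. In a trained CNN the kernels are learned and numerous; the "TATA" motif here is an arbitrary example.

```python
import numpy as np

NUCLEOTIDES = "ACGT"


def one_hot(seq: str) -> np.ndarray:
    """(L, 4) one-hot matrix; ambiguous bases such as N become all-zero rows."""
    x = np.zeros((len(seq), 4))
    for i, base in enumerate(seq.upper()):
        if base in NUCLEOTIDES:
            x[i, NUCLEOTIDES.index(base)] = 1.0
    return x


def feature_map(seq: str, kernel: np.ndarray) -> np.ndarray:
    """Slide one (K, 4) kernel along the sequence ('valid' cross-correlation)."""
    x = one_hot(seq)
    k = kernel.shape[0]
    return np.array([np.sum(x[i:i + k] * kernel) for i in range(len(seq) - k + 1)])


# A hand-built kernel that scores +1 per matching base of the example motif.
kernel = one_hot("TATA")
for s in ("GGTATAGG", "TATAGGGG"):
    fm = feature_map(s, kernel)
    print(s, fm, "max:", fm.max(), "at", int(fm.argmax()))  # global max pooling
```

Both sequences give the same pooled maximum even though the motif sits at different offsets.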

Recurrent Neural Networks (RNNs) & Long Short-Term Memory (LSTM) Networks

RNNs are designed for native sequential processing: they maintain a hidden state that propagates information forward along the sequence. Standard RNNs suffer from vanishing gradients. LSTMs address this with a gated architecture (input, forget, and output gates) that regulates information flow, letting them learn much longer-range dependencies than standard RNNs, although dependencies spanning thousands of nucleotides or residues remain difficult in practice.

- Key Mechanism: The cell state acts as a "conveyor belt," with gates adding or removing information, allowing relevant context to be preserved over long distances.

- Viral Research Application: Modeling viral genome evolution and recombination, predicting RNA secondary structure from primary sequence, and generating functional viral protein sequences.
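A NumPy sketch of a single LSTM step makes the gating explicit. It is a forward pass only, with randomly initialized weights; stacking the gates in the order [input, forget, candidate, output] is a convention chosen here, and frameworks use their own layouts.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def lstm_step(x, h_prev, c_prev, W, U, b):
    """One LSTM time step.

    x: (D,) input, e.g. a one-hot residue; h_prev, c_prev: (H,)
    W: (4H, D), U: (4H, H), b: (4H,), stacked here as [input, forget, candidate, output].
    """
    H = h_prev.shape[0]
    z = W @ x + U @ h_prev + b
    i = sigmoid(z[:H])             # input gate: how much new information to write
    f = sigmoid(z[H:2 * H])        # forget gate: how much of the old cell state to keep
    g = np.tanh(z[2 * H:3 * H])    # candidate values
    o = sigmoid(z[3 * H:])         # output gate: how much of the cell state to expose
    c = f * c_prev + i * g         # cell state, the "conveyor belt"
    h = o * np.tanh(c)             # hidden state passed to the next position
    return h, c


def run_lstm(xs, W, U, b):
    """Process input vectors left to right; return the final (h, c)."""
    H = U.shape[1]
    h, c = np.zeros(H), np.zeros(H)
    for x in xs:
        h, c = lstm_step(x, h, c, W, U, b)
    return h, c


rng = np.random.default_rng(0)
D, H = 4, 3
W, U, b = rng.normal(size=(4 * H, D)), rng.normal(size=(4 * H, H)), np.zeros(4 * H)
xs = np.eye(D)[[0, 1, 2, 3, 0]]    # one-hot for the sequence A C G T A
h, c = run_lstm(xs, W, U, b)
print(h, c)
```

Driving the forget gate toward 1 and the input gate toward 0 leaves the cell state unchanged, which is how information can be carried across many positions.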

Transformer Networks

Transformers bypass recurrence entirely, relying on a self-attention mechanism to compute pairwise relationships between all elements in a sequence simultaneously. This allows for direct modeling of global dependencies and massively parallel computation. Positional encodings are added to inject order information.

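- Key Mechanism: Self-attention computes, for each token, a weighted sum of value vectors, where the weights come from the scaled dot-product compatibility of that token's query with every key, softmax(QKᵀ/√d_k). Multi-head attention runs several such computations in parallel so the model can attend to different representational subspaces.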

- Viral Research Application: Predicting the effects of combinatorial mutations across a viral genome (e.g., SARS-CoV-2 variant fitness), antigenic cartography from hemagglutinin sequences, and protein structure prediction from viral amino acid sequences (as demonstrated by AlphaFold2, a Transformer-derived model).
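A compact NumPy sketch of the mechanism: sinusoidal positional encodings added to token embeddings, followed by multi-head scaled dot-product self-attention, softmax(QKᵀ/√d_k)V. The weights are random and the sizes arbitrary; real models also add residual connections, layer normalization, and feed-forward blocks, which are omitted here.

```python
import numpy as np


def sinusoidal_positions(length: int, d: int) -> np.ndarray:
    """PE[p, 2m] = sin(p / 10000**(2m/d)), PE[p, 2m+1] = cos(p / 10000**(2m/d)); d must be even."""
    pos = np.arange(length)[:, None]
    two_m = np.arange(0, d, 2)[None, :]
    angles = pos / np.power(10000.0, two_m / d)
    pe = np.zeros((length, d))
    pe[:, 0::2] = np.sin(angles)
    pe[:, 1::2] = np.cos(angles)
    return pe


def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def multi_head_self_attention(x, Wq, Wk, Wv, Wo, n_heads):
    """x: (L, d); each W: (d, d); d divisible by n_heads. Returns (L, d) output and (heads, L, L) weights."""
    L, d = x.shape
    dh = d // n_heads

    def split(m):  # (L, d) -> (heads, L, dh)
        return m.reshape(L, n_heads, dh).transpose(1, 0, 2)

    q, k, v = split(x @ Wq), split(x @ Wk), split(x @ Wv)
    weights = softmax(q @ k.transpose(0, 2, 1) / np.sqrt(dh))  # query-key compatibility; rows sum to 1
    heads = weights @ v                                         # weighted sum of values per head
    out = heads.transpose(1, 0, 2).reshape(L, d) @ Wo           # concatenate heads, then project
    return out, weights


rng = np.random.default_rng(0)
L, d = 6, 8
x = rng.normal(size=(L, d)) + sinusoidal_positions(L, d)       # token embeddings + positions
Wq, Wk, Wv, Wo = (rng.normal(scale=d ** -0.5, size=(d, d)) for _ in range(4))
out, attn = multi_head_self_attention(x, Wq, Wk, Wv, Wo, n_heads=2)
print(out.shape, attn.shape)  # (6, 8) (2, 6, 6)
```

Every position attends to every other in one step, which is why the cost grows with the square of sequence length.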

Comparative Architectural Analysis

The table below synthesizes recent performance metrics from benchmark studies on viral sequence tasks, such as next-token prediction in genome assembly, variant effect prediction, and host prediction.

Table 1: Architectural Performance on Benchmark Viral Sequence Tasks

| Architecture | Task (Dataset) | Key Metric | Reported Score | Primary Strength | Computational Cost (Relative) |
|---|---|---|---|---|---|
| 1D-CNN | Viral Host Prediction (ICTV Benchmark) | Accuracy | 94.2% | Local Motif Detection | Low |
| Bi-LSTM | Viral Genome Completion (Influenza A) | Perplexity | 8.7 | Long-Range Context | Medium |
| Transformer (Encoder) | Variant Effect Prediction (SARS-CoV-2 Spike) | AUROC | 0.891 | Global Dependency Modeling | High |
| Hybrid CNN-LSTM | Protease Cleavage Site ID (Viral Polyproteins) | F1-Score | 0.92 | Local + Temporal Features | Medium |
| Transformer (Decoder) | De Novo Viral Protein Design | Recovery Rate | 41% | Generative Sequence Design | Very High |

Detailed Experimental Protocol for a Benchmark Study

Protocol: Training a Transformer Model for Viral Variant Fitness Prediction

1. Objective: To predict the replicative fitness score of SARS-CoV-2 Spike protein variants from their amino acid sequence.

2. Data Curation:

- Source: GISAID EpiCoV database & associated in vitro fitness assays from recent literature (last 24 months).

- Preprocessing: Perform multiple sequence alignment (MSA) using MAFFT against the reference sequence (Wuhan-Hu-1). Encode sequences using a learned byte-pair encoding (BPE) tokenizer with a vocabulary size of 512. Fitness scores are log-transformed and normalized to a [0,1] scale.

3. Model Architecture & Training:

- Model: A 12-layer encoder-only Transformer.

- Hyperparameters: Embedding dimension=512, Attention heads=8, Feed-forward dimension=2048, Dropout=0.1.

- Input: Tokenized variant sequence (max length 1500). Positional encoding is sinusoidal.

- Output: A single scalar value from a regression head (linear layer on [CLS] token representation).

- Loss Function: Mean Squared Error (MSE).

- Optimizer: AdamW with learning rate=5e-5, linear warmup for first 10% of steps, followed by cosine decay.

- Hardware: 4 x NVIDIA A100 GPUs (80GB).

4. Validation & Interpretation:

- Validation: 5-fold time-split cross-validation (train on older variants, test on newer ones) to prevent temporal data leakage.

- Interpretation: Use attention rollout and integrated gradients to identify residues and interaction pairs that most influence the fitness prediction.
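Two pieces of this protocol are easy to get subtly wrong: the warmup-plus-cosine learning-rate schedule and the time-ordered split. The standard-library sketch below assumes a per-step schedule that decays to zero and an expanding-window split over records carrying an ISO-format `date` field; scikit-learn's `TimeSeriesSplit` implements a similar expanding-window scheme over pre-sorted indices.

```python
import math


def lr_at(step: int, total_steps: int, peak_lr: float = 5e-5, warmup_frac: float = 0.1) -> float:
    """Linear warmup to peak_lr over the first warmup_frac of steps, then cosine decay toward 0."""
    warmup = max(1, int(total_steps * warmup_frac))
    if step < warmup:
        return peak_lr * (step + 1) / warmup
    progress = (step - warmup) / max(1, total_steps - warmup)
    return 0.5 * peak_lr * (1.0 + math.cos(math.pi * progress))


def time_split_folds(records: list, n_folds: int = 5):
    """Expanding-window folds: sort by collection date and cut into n_folds + 1 blocks.

    Fold f trains on blocks 0..f and tests on block f + 1, so test variants are always newer.
    """
    ordered = sorted(records, key=lambda r: r["date"])
    n = len(ordered)
    cuts = [round(n * b / (n_folds + 1)) for b in range(n_folds + 2)]
    for f in range(n_folds):
        yield ordered[:cuts[f + 1]], ordered[cuts[f + 1]:cuts[f + 2]]


records = [{"id": f"v{i}", "date": f"2023-{i + 1:02d}-15"} for i in range(12)]
for train, test in time_split_folds(records):
    print(len(train), len(test), train[-1]["date"], "<", test[0]["date"])
print([round(lr_at(s, 1000), 10) for s in (0, 99, 100, 550, 999)])
```

In PyTorch, the same schedule can be attached through `torch.optim.lr_scheduler.LambdaLR` by returning `lr_at(step, total) / peak_lr` as the multiplier. With real data, records that share a collection date can straddle a fold boundary; grouping by date before cutting avoids this.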


The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Reagents for Viral Sequence AI Research

| Item / Solution | Provider / Example (Open Source) | Primary Function in Research |
|---|---|---|
| Multiple Sequence Alignment (MSA) Tool | MAFFT, Clustal Omega, MUSCLE | Aligns homologous viral sequences for comparative analysis and model input preparation. |
| Genome Annotation Database | NCBI Virus, GISAID, BV-BRC | Provides curated, metadata-rich viral sequences for training and testing models. |
| Deep Learning Framework | PyTorch, TensorFlow, JAX | Provides the core library for building, training, and deploying neural network architectures. |
| Sequence Tokenizer | Byte-Pair Encoding (BPE) via HuggingFace Tokenizers, k-mer tokenization | Converts raw nucleotide/amino acid strings into discrete tokens suitable for model input. |
| Variant Effect Dataset | Stanford Coronavirus Antiviral & Resistance Database (CoV-RDB) | Provides experimentally measured fitness/activity labels for supervised learning of variant impact. |
| Model Interpretation Library | Captum (for PyTorch), SHAP, DeepLIFT | Attributes model predictions to input features, identifying critical residues or motifs. |
| High-Performance Computing (HPC) Environment | AWS EC2 (P4d instances), Google Cloud TPUs, NVIDIA DGX | Provides the necessary GPU/TPU acceleration for training large models on massive sequence datasets. |
| Workflow Management | Nextflow, Snakemake | Orchestrates reproducible pipelines from data preprocessing to model evaluation. |

This whitepaper details a comprehensive workflow for applying machine learning to pattern recognition in viral genomic sequences. The overarching thesis posits that a meticulous, end-to-end computational pipeline is critical for identifying actionable patterns—such as regions of high mutability, conserved epitopes, or recombination hotspots—that can accelerate vaccine design and antiviral drug development.

Data Curation

Data curation establishes the foundation for robust model development. For viral genomics, this involves aggregation, stringent quality control, and systematic annotation.

Key Sources & Quantitative Summary (2024-2025)

Table 1: Primary Data Sources for Viral Genomics Research

| Source | Data Type | Example Volume | Key Attributes |
|---|---|---|---|
| NCBI Virus, GISAID | Nucleotide Sequences | ~15M SARS-CoV-2 sequences | Isolate, collection date, host, lineage |
| ViPR, BV-BRC | Annotated Genomes | ~2M across Flaviviridae | Gene annotations, protein products |
| PDB, IEDB | 3D Structures & Epitopes | ~2,000 viral proteins | Structural coordinates, immune recognition data |

Experimental Protocol: Curation & QC Pipeline

- Aggregation: Programmatically download target sequences (e.g., all Orthomyxoviridae) via APIs using tools like Bio.Entrez and gisaid_cli.

- Deduplication: Remove identical sequences based on the MD5 hash of the aligned sequence.

- Quality Filtering: Apply thresholds: sequence length within 3 standard deviations of the median, ambiguity (N) content <1%, and no premature (in-frame internal) stop codons in conserved ORFs.

- Annotation Enhancement: Cross-reference with UniProt to add protein function annotations. Use Nextclade for preliminary lineage/clade assignment.

- Stratified Sampling: For class-imbalanced datasets (e.g., rare variants), use stratified sampling to preserve class proportions across subsets, or class-balanced down-sampling when balanced subsets are needed for exploratory analysis.
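The deduplication and quality-filtering steps can be sketched with the standard library. Assumptions: sequences are DNA strings, the 3-standard-deviation rule and the 1% N threshold are the values quoted above, and the conserved-ORF coordinates are supplied by the caller (for example from a reference annotation after alignment). The sketch hashes each sequence as supplied, aligned or not; the function names are illustrative.

```python
import hashlib
import statistics

STOP_CODONS = {"TAA", "TAG", "TGA"}


def has_internal_stop(orf: str) -> bool:
    """True if an in-frame stop codon appears before the ORF's final codon."""
    codons = [orf[i:i + 3] for i in range(0, len(orf) - 2, 3)]
    return any(codon in STOP_CODONS for codon in codons[:-1])


def qc_filter(records, orf_slices=(), max_n_frac=0.01, n_sd=3.0):
    """Return the IDs that pass deduplication, length, ambiguity and stop-codon checks.

    records: iterable of (id, nucleotide sequence).
    orf_slices: 0-based (start, end) coordinates of conserved ORFs, assumed to be valid
    for every sequence (e.g. after alignment to a reference).
    """
    seen, unique = set(), []
    for rid, seq in records:
        seq = seq.upper()
        digest = hashlib.md5(seq.encode()).hexdigest()   # identical sequences share a hash
        if digest not in seen:
            seen.add(digest)
            unique.append((rid, seq))
    if not unique:
        return []
    lengths = [len(s) for _, s in unique]
    median, sd = statistics.median(lengths), statistics.pstdev(lengths)
    kept = []
    for rid, seq in unique:
        if not seq or abs(len(seq) - median) > n_sd * sd:
            continue                                     # empty or length outlier
        if seq.count("N") / len(seq) >= max_n_frac:
            continue                                     # too many ambiguous bases
        if any(has_internal_stop(seq[a:b]) for a, b in orf_slices):
            continue                                     # premature stop in a conserved ORF
        kept.append(rid)
    return kept


demo = [
    ("a", "ATGAAATAG" + "C" * 91),
    ("b", "ATGAAATAG" + "C" * 91),             # exact duplicate of "a"
    ("c", "ATGTAAAAA" + "C" * 91),             # premature TAA in the ORF
    ("d", "ATGAAATAG" + "N" * 5 + "C" * 86),   # 5% ambiguous bases
]
print(qc_filter(demo, orf_slices=[(0, 9)]))
```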

Feature Engineering

Feature engineering transforms raw sequences into quantifiable descriptors that capture biologically meaningful patterns.

Methodologies for Feature Extraction

- k-mer Frequency Vectors: Generate normalized counts of all possible nucleotide subsequences of length k (typically 3-6). This captures sequence composition without alignment.

- Position-Specific Scoring Matrices (PSSM): For aligned sequences, compute log-likelihood of each residue at each position relative to a background model. Critical for conserved region identification.

- Physicochemical Properties: Translate sequences and compute properties like hydrophobicity index, charge, and molecular weight per sliding window.

- Phylogenetic Features: Extract distance from a defined reference strain or embed sequences via Bio.Phylo tree-based metrics.

- One-Hot Encoding: For deep learning models, directly encode nucleotides (A, C, G, T/U) as sparse orthogonal vectors.
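Two of these feature types, a log-odds PSSM and a sliding-window physicochemical profile, can be sketched with the standard library. The pseudocount of 1, the uniform background, and the 9-residue window are illustrative assumptions; the hydropathy values are the published Kyte-Doolittle scale. Nucleotide sequences would first need translating (for example with Biopython's `Seq.translate()`).

```python
import math
from collections import Counter

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

# Kyte-Doolittle hydropathy index
KYTE_DOOLITTLE = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def pssm(msa, pseudocount=1.0, background=None):
    """Log-odds PSSM: score[i][a] = log2(p(a,i) / q(a)).

    p(a,i) uses additive pseudocounts so unseen residues get a finite score;
    gaps are ignored; a uniform background q(a) = 1/20 is assumed if none is given.
    """
    q = background or {a: 1 / len(AMINO_ACIDS) for a in AMINO_ACIDS}
    matrix = []
    for i in range(len(msa[0])):
        counts = Counter(s[i] for s in msa if s[i] in AMINO_ACIDS)
        total = sum(counts.values()) + pseudocount * len(AMINO_ACIDS)
        matrix.append({a: math.log2((counts[a] + pseudocount) / total / q[a]) for a in AMINO_ACIDS})
    return matrix  # L x 20 scores


def hydropathy_profile(protein, window=9):
    """Mean Kyte-Doolittle hydropathy of each sliding window (unknown residues skipped)."""
    profile = []
    for i in range(len(protein) - window + 1):
        vals = [KYTE_DOOLITTLE[r] for r in protein[i:i + window] if r in KYTE_DOOLITTLE]
        profile.append(sum(vals) / len(vals) if vals else float("nan"))
    return profile


first_column = pssm(["MKV", "MRV", "MKI"])[0]
print({a: round(s, 2) for a, s in first_column.items() if s > 0})
print(hydropathy_profile("IIIKKK", window=3))
```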

Table 2: Feature Engineering Techniques & Output Dimensionality

| Technique | Typical Dimensionality | Best For | Computational Load |
|---|---|---|---|
| k-mer (k=6) | 4⁶ = 4096 features | Sequence classification | Medium |
| PSSM (L=1000) | L x 20 = 20,000 | Motif discovery, alignment | High |
| Physicochemical (5 props) | Sequence Length x 5 | Structural property prediction | Low |
| Phylogenetic | 1-10 distance metrics | Evolutionary analysis | Very High |

Model Training

The curated feature set is used to train models for classification, regression, or clustering tasks relevant to viral research.

Experimental Protocol: Model Training & Validation

- Task Definition: Example: Classify sequences into "high" vs "low" host-cell entry efficiency based on labelled in vitro data.

- Train-Test Split: Perform a temporal split (e.g., train on pre-2023, test on 2024+) to simulate real-world forecasting and avoid data leakage.

- Model Selection: Benchmark:

- Baseline: Logistic Regression with L1 regularization.

- Ensemble: Gradient Boosting Machines (XGBoost) with hyperparameter tuning via Bayesian optimization.

- Deep Learning: 1D Convolutional Neural Network (CNN) for sequence data, or Transformer encoder for embedded features.

- Training: Use 5-fold cross-validation on the training set. Employ early stopping for neural networks.

- Evaluation Metrics: Report precision, recall, F1-score, and AUC-ROC. For imbalanced datasets, prioritize AUC-PR.
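For reference, the threshold metrics and a step-wise AUC-PR (average precision) can be computed as below. This standard-library sketch assumes binary labels (1 = positive) and a 0.5 decision threshold; in practice `sklearn.metrics.average_precision_score` and `roc_auc_score` would normally be used.

```python
def binary_metrics(y_true, y_score, threshold=0.5):
    """Precision, recall and F1 at a threshold, plus average precision (step-wise AUC-PR)."""
    pred = [s >= threshold for s in y_score]
    tp = sum(1 for p, t in zip(pred, y_true) if p and t)
    fp = sum(1 for p, t in zip(pred, y_true) if p and not t)
    fn = sum(1 for p, t in zip(pred, y_true) if not p and t)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    # Average precision: mean precision at the rank of each positive (ties keep input order).
    ranked = sorted(zip(y_score, y_true), key=lambda pair: -pair[0])
    hits, ap_sum = 0, 0.0
    for rank, (_, t) in enumerate(ranked, start=1):
        if t:
            hits += 1
            ap_sum += hits / rank
    n_pos = sum(1 for t in y_true if t)
    return {
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "average_precision": ap_sum / n_pos if n_pos else 0.0,
    }


print(binary_metrics([1, 0, 1, 1, 0, 0], [0.9, 0.8, 0.7, 0.3, 0.2, 0.1]))
```

Average precision does not depend on the threshold, which is one reason it is preferred over threshold metrics when positives are rare.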

Table 3: Model Performance on a Hypothetical Variant Pathogenicity Prediction Task

| Model | AUC-ROC | Precision | Recall | Key Features Used |
|---|---|---|---|---|
| Logistic Regression | 0.82 | 0.76 | 0.68 | PSSM, k-mer (k=4) |
| XGBoost | 0.91 | 0.85 | 0.82 | All, with PSSM top |
| 1D-CNN | 0.89 | 0.87 | 0.78 | One-Hot Encoded Sequence |

Deployment

Deployment translates a trained model into a usable tool for researchers, often via a web application or a REST API.

Deployment Architecture Protocol

- Model Serialization: Save the final model (e.g., XGBoost classifier) and its feature encoder (e.g., StandardScaler) using pickle or joblib.

- API Development: Create a FastAPI or Flask application with a /predict endpoint. The endpoint should:

- Accept a FASTA sequence.

- Run the same curation and feature engineering pipeline.

- Load the serialized model and scaler.

- Return a JSON response with prediction and confidence score.

- Containerization: Package the API, model, and all dependencies into a Docker container for portability.

- Cloud Deployment: Deploy the container on a cloud service (e.g., AWS ECS, Google Cloud Run) with auto-scaling.

- Continuous Integration: Use GitHub Actions to retrain the model on a scheduled basis as new public sequence data becomes available.
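The endpoint logic can be kept framework-agnostic so the API layer stays thin. In this sketch the 3-mer features, the 0.5 cut-off, and the `{"scaler", "model"}` bundle layout are assumptions, and the two stub classes merely stand in for the trained StandardScaler and classifier so the example runs on its own.

```python
import json
import pickle
from itertools import product

KMERS = ["".join(p) for p in product("ACGT", repeat=3)]  # assumed training-time feature set (3-mers)


def parse_fasta(text: str) -> dict:
    """Minimal FASTA parser: header ID (first word) -> uppercase sequence."""
    records, name, chunks = {}, None, []
    for line in text.splitlines():
        line = line.strip()
        if line.startswith(">"):
            if name is not None:
                records[name] = "".join(chunks)
            header = line[1:].strip()
            name, chunks = (header.split()[0] if header else f"seq{len(records) + 1}"), []
        elif line and name is not None:
            chunks.append(line.upper())
    if name is not None:
        records[name] = "".join(chunks)
    return records


def featurize(seq: str) -> list:
    """Must reproduce the training pipeline exactly (here: 3-mer frequencies)."""
    counts = dict.fromkeys(KMERS, 0)
    for i in range(len(seq) - 2):
        if seq[i:i + 3] in counts:
            counts[seq[i:i + 3]] += 1
    total = sum(counts.values()) or 1
    return [counts[k] / total for k in KMERS]


def load_bundle(path: str) -> dict:
    """Load the pickled {"scaler": ..., "model": ...} bundle once, at start-up.
    Only unpickle files you trust: pickle can execute arbitrary code."""
    with open(path, "rb") as fh:
        return pickle.load(fh)


def predict(fasta_text: str, bundle: dict) -> str:
    """Framework-agnostic body of a /predict endpoint; returns a JSON string."""
    results = []
    for seq_id, seq in parse_fasta(fasta_text).items():
        x = bundle["scaler"].transform([featurize(seq)])
        p_high = float(bundle["model"].predict_proba(x)[0][1])  # column 1 = positive class
        results.append({"id": seq_id, "prediction": "high" if p_high >= 0.5 else "low",
                        "confidence": p_high})
    return json.dumps(results)


class _IdentityScaler:  # stand-in for a fitted StandardScaler (illustration only)
    def transform(self, rows):
        return rows


class _ConstantModel:   # stand-in for a trained classifier (illustration only)
    def predict_proba(self, rows):
        return [[0.2, 0.8] for _ in rows]


stub_bundle = {"scaler": _IdentityScaler(), "model": _ConstantModel()}
print(predict(">s1 sample one\nACGTACGT\n>s2\nGGGCCC\n", stub_bundle))
```

In FastAPI, `predict` would be called from a route declared with `@app.post("/predict")`, with `load_bundle` run once when the application starts rather than on every request.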

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools & Resources

| Item/Resource | Function/Description | Example/Provider |
|---|---|---|
| BV-BRC | Comprehensive platform for viral 'omics data analysis, including annotation and comparative genomics. | Bacterial & Viral Bioinformatics Resource Center |
| Nextclade | Web & CLI tool for phylogenetic clade assignment, QC, and mutation calling of viral sequences. | Nextstrain |
| MAFFT | Multiple sequence alignment algorithm essential for creating accurate PSSMs and phylogenetic trees. | Katoh & Standley |
| XGBoost | Optimized gradient boosting library for building high-performance classification models on tabular features. | DMLC |
| PyTorch / TensorFlow | Deep learning frameworks for building custom neural network architectures (CNNs, Transformers). | Meta / Google |
| Biopython | Python library for computational biology, enabling sequence manipulation, parsing, and analysis. | Biopython Consortium |
| Docker | Containerization platform ensuring the computational environment and pipeline are reproducible. | Docker Inc. |
| FastAPI | Modern Python web framework for building high-performance, documented APIs to serve models. | FastAPI |
| GISAID EpiCoV | Primary global repository for sharing influenza and coronavirus sequences with associated metadata. | GISAID Initiative |

Within the broader thesis that artificial intelligence represents a paradigm shift for pattern recognition in viral sequences research, the identification of emerging viral variants and lineages stands as a critical application. The rapid evolution of viruses like SARS-CoV-2 and Influenza necessitates tools that can move beyond simple phylogenetic comparison to detect, classify, and predict the functional implications of novel mutations in near real-time. AI-driven approaches are now central to this task, integrating genomic surveillance, phenotypic prediction, and epidemiological tracking into a cohesive framework for public health response and therapeutic development.

Core AI Methodologies in Variant Identification

Pattern Recognition Foundations

AI models, particularly deep learning architectures, are trained to recognize complex, non-linear patterns in nucleotide or amino acid sequences that may elude traditional consensus-building methods.

| AI Model Type | Primary Application in Variant ID | Key Advantage | Example Tools/Implementations |
|---|---|---|---|
| Convolutional Neural Networks (CNNs) | Detecting local sequence motifs and spatial dependencies associated with lineage-defining mutations. | Excels at identifying conserved local patterns despite background noise. | Custom CNN lineage classifiers (widely used tools such as Pangolin and Nextclade rely on phylogenetic placement or decision-tree models rather than CNNs). |
| Recurrent Neural Networks (RNNs/LSTMs) | Modeling sequential dependencies across the whole genome for predicting evolutionary pathways. | Handles variable-length sequences and long-range dependencies. | Used in predictive models of variant emergence. |
| Transformer Models | Context-aware embedding of entire viral genomes; understanding the interplay of distant mutations. | Captures global sequence context; state-of-the-art for many tasks. | Genome-scale language models (e.g., DNABERT, Nucleotide Transformer). |
| Graph Neural Networks (GNNs) | Analyzing viral evolution as a graph of sequences, capturing transmission dynamics and clade relationships. | Naturally models relational data (phylogenetic trees, contact networks). | Applied to transmission cluster identification. |

Integrated Workflow for AI-Powered Surveillance

The standard pipeline integrates wet-lab sequencing with dry-lab AI analysis.

Diagram Title: AI-Integrated Genomic Surveillance Workflow

Experimental Protocols for Validation

Protocol: Benchmarking AI Lineage Classification

This protocol validates a novel AI classifier against established tools.

Objective: To assess the accuracy, sensitivity, and computational efficiency of an AI model for SARS-CoV-2 lineage assignment.

Materials: See "Scientist's Toolkit" below.

Procedure:

- Dataset Curation: Assemble a benchmark dataset of N=10,000 high-quality SARS-CoV-2 genomes from GISAID, ensuring representation across all Variants of Concern (VOCs) and Variants of Interest (VOIs). Split into training/validation/test sets (70/15/15).

- Baseline Establishment: Run the test set sequences through established classifiers (Pangolin, Nextclade) to generate "ground truth" lineage assignments. Resolve discrepancies via manual phylogenetic analysis.

- AI Model Inference: Input the test set FASTA files into the candidate AI model (e.g., a fine-tuned transformer) and generate lineage predictions.

- Analysis: Generate a confusion matrix. Calculate key metrics: Accuracy, Precision, Recall, and F1-score for each major lineage. Compare processing time per sequence against baseline tools.

- Functional Annotation: For sequences with discrepant calls, perform detailed mutational analysis (using UShER or scorpio) to determine whether the AI model identified a recombinant or emerging lineage earlier than traditional methods.
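The Analysis step can be sketched with the standard library: a confusion table keyed by (reference call, AI call), per-lineage precision, recall and F1, and the list of discrepant sequences to pass on to functional annotation. The lineage labels in the usage lines are placeholders, not results.

```python
from collections import Counter


def lineage_report(reference, predicted):
    """Accuracy, per-lineage precision/recall/F1, confusion counts and discrepant indices."""
    if len(reference) != len(predicted) or not reference:
        raise ValueError("need two non-empty, equal-length lists of lineage calls")
    confusion = Counter(zip(reference, predicted))  # (reference call, AI call) -> count
    labels = sorted(set(reference) | set(predicted))
    report = {}
    for lab in labels:
        tp = confusion[(lab, lab)]
        fp = sum(n for (r, p), n in confusion.items() if p == lab and r != lab)
        fn = sum(n for (r, p), n in confusion.items() if r == lab and p != lab)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        report[lab] = {"precision": precision, "recall": recall, "f1": f1, "support": tp + fn}
    accuracy = sum(confusion[(lab, lab)] for lab in labels) / len(reference)
    discrepant = [i for i, (r, p) in enumerate(zip(reference, predicted)) if r != p]
    return accuracy, report, confusion, discrepant


acc, report, confusion, discrepant = lineage_report(
    ["BA.2", "BA.2", "XBB.1.5", "XBB.1.5", "BA.5"],
    ["BA.2", "BA.5", "XBB.1.5", "XBB.1.5", "BA.5"],
)
print(acc, report["BA.2"], discrepant)
```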

Protocol: In Silico Prediction of Antigenic Drift

This protocol uses AI to predict the antigenic impact of novel influenza mutations.

Objective: To predict the antigenic distance between a circulating influenza strain and existing vaccine strains using AI models trained on hemagglutination inhibition (HI) assay data.

Materials: AI model (e.g., hierarchical Bayesian model or CNN), curated HI dataset from WHO CCs, viral HA sequence data.

Procedure:

- Data Integration: Create a paired dataset of Influenza A/H3N2 HA1 domain sequences and their corresponding empirical HI titers against a panel of reference antisera.

- Model Training: Train an AI model to map the sequence to a low-dimensional antigenic space. The model learns to output a predicted antigenic distance.

- Prediction: Input the HA sequences of newly sequenced isolates into the trained model.

- Validation: For a held-out test set, compare the AI-predicted antigenic distances with subsequently obtained, lab-confirmed HI assay results. Calculate the Pearson correlation coefficient (r) between predicted and observed values; r > 0.8 is used here as the threshold for strong predictive performance.
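A standard-library sketch of the two quantities in this protocol. Defining antigenic distance as the log2 fold-drop of the heterologous titer relative to the homologous titer is a common simplification assumed here (antigenic cartography instead fits distances jointly across the whole titer table); on Python 3.10+, `statistics.correlation` gives the same Pearson r.

```python
import math


def antigenic_distance(homologous_titer: float, heterologous_titer: float) -> float:
    """log2-fold drop in HI titer relative to the homologous reference titer."""
    return math.log2(homologous_titer) - math.log2(heterologous_titer)


def pearson_r(x, y) -> float:
    """Pearson correlation coefficient between predicted and observed values."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length sequences with at least two values")
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


# Titers are reciprocal dilutions: an 8-fold drop (1280 -> 160) corresponds to 3 log2 units.
print(antigenic_distance(1280, 160))
```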

| Virus | Primary AI Tool | Classification Speed | Accuracy vs. Lab Data | Key Mutations Tracked |
|---|---|---|---|---|
| SARS-CoV-2 | Pangolin (UShER placement or the earlier pangoLEARN decision-tree model) | ~1000 genomes/hour | >99% for major lineages | Spike: RBD (e.g., 452, 478, 501); Non-Spike: ORF1a, N |
| Influenza A | Nextflu (PhyloDynamics) | Real-time pipeline | >95% clade assignment | HA1: antigenic sites A-E; NA: catalytic/resistance sites |
| HIV-1 | COMET (context-based model using prediction by partial matching) | ~2 min/sequence | 98% Subtype/CRF accuracy | PR, RT drug resistance positions; gp120 V-loops |

| Prediction Task | AI Model Used | Performance Metric | Current Benchmark | Clinical/Biological Impact |
|---|---|---|---|---|
| Variant Transmissibility | GNN on contact networks | ROC-AUC | 0.76-0.89 | Informs early warning systems |
| Antibody Escape | Transformer (Protein Language Model) | Spearman's ρ | 0.85 (vs. deep mutational scan) | Guides mAb therapy development |
| Vaccine Cross-Protection | CNN on antigenic maps | Prediction Error (log2 titer) | ± 0.8-1.2 log2 | Supports vaccine strain selection |

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Variant Identification Research | Example Product/Provider |
|---|---|---|
| High-Throughput Sequencing Kits | Generate raw genomic data from viral samples with high fidelity and low error rates. | Illumina COVIDSeq Test, Oxford Nanopore ARTIC protocol amplicon kits. |
| Synthetic Control Genomes | Act as positive controls for wet-lab protocols and benchmarks for AI algorithm validation. | Twist Bioscience SARS-CoV-2 RNA Positive Control, NIBSC influenza antigenic calibration panels. |
| AI Training Datasets | Curated, high-quality genomic data and metadata for model training and fine-tuning. | GISAID EpiCoV database, NCBI Influenza Virus Database, Los Alamos HIV Sequence Database. |
| Cloud Computing Credits | Provide scalable computational resources for training large AI models and processing population-scale genomic data. | AWS Credits for Research, Google Cloud Research Credits, Microsoft Azure for Research. |
| Containerized Software | Ensure reproducible and portable deployment of complex AI analysis pipelines across different computing environments. | Docker/Singularity containers for Pangolin, UShER, Nextclade, and custom models. |

AI-Driven Public Health Response Pathway

Diagram: AI-Informed Public Health Decision Pathway. The diagram illustrates the logical flow from sequence data to public health action.

The application of AI for emerging variant identification is a cornerstone of modern viral genomics, providing the speed, scale, and sophistication required to keep pace with viral evolution. By transforming raw sequence data into actionable biological and epidemiological insights, these systems directly support the development of targeted drugs, effective vaccines, and evidence-based public health policies. As part of the overarching thesis, this field demonstrates that AI is not merely an auxiliary tool but an essential component of the pattern recognition framework needed to understand and mitigate ongoing and future pandemic threats.

This whitepaper explores a critical application of artificial intelligence (AI) in virology: predicting antigenic drift and host tropism shifts from viral sequence data. Within the broader thesis on AI for pattern recognition in viral sequences, this is one of the most demanding applications of machine learning, because it moves beyond descriptive genomics to predictive analytics and aims to forecast the evolutionary trajectories of pathogens such as influenza and SARS-CoV-2. By identifying subtle, high-dimensional patterns in amino acid substitutions and structural constraints, AI models can flag likely phenotypic changes affecting vaccine efficacy and cross-species transmission risk before those changes become evident in surveillance data.

Core Predictive Models & Quantitative Performance

Recent advances employ deep learning architectures, including Graph Neural Networks (GNNs) for structural data, Transformers for sequential context, and ensemble methods integrating multiple data types. The table below summarizes the performance metrics of leading contemporary models as identified in current literature.

Table 1: Performance of Recent AI Models for Predicting Viral Evolution

| Model Name (Architecture) | Primary Application | Key Input Features | Reported Accuracy / AUC | Key Metric & Value | Reference Year |
|---|---|---|---|---|---|
| EVEscape (Deep Generative + Biophysical) | Antigenic Drift & Escape | Protein sequence, Structure (PDB), Phylogeny | AUC: 0.87 | Rank correlation (ρ): 0.78 for SARS-CoV-2 | 2023 |
| EGRET (Ensemble GNN/Transformer) | Host Tropism Prediction | HA/Spike sequence, Predicted binding affinity, Host receptor features | Accuracy: 91.2% | Macro F1-Score: 0.89 on avian/mammal classes | 2024 |
| DeepAntigen (Convolutional NN) | Linear B-cell Epitope Change | Sequence, Physicochemical profiles, Solvent accessibility | AUC: 0.94 | Precision@10: 0.85 for influenza H3N2 | 2023 |
| TropismNet (Attention Networks) | Receptor Binding Specificity | Viral protein structural pockets, Molecular dynamics frames | Specificity: 96% | Sensitivity: 88% for α2,3 vs α2,6 sialic acid | 2024 |
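The metrics in Table 1 follow standard definitions. As an illustrative sketch (the helper names are hypothetical, and class labels such as "avian" and "mammal" are placeholders), Precision@10, macro F1, and sensitivity/specificity can be computed as follows:

```python
def precision_at_k(scores, labels, k=10):
    """Fraction of true positives among the k highest-scoring items."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    top = ranked[:k]
    return sum(1 for _, label in top if label) / len(top)


def sensitivity_specificity(y_true, y_pred, positive):
    """Sensitivity = TP / (TP + FN); specificity = TN / (TN + FP)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    return tp / (tp + fn), tn / (tn + fp)


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores, F1 = 2TP / (2TP + FP + FN)."""
    f1_scores = []
    for cls in sorted(set(y_true) | set(y_pred)):
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p == cls)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != cls and p == cls)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == cls and p != cls)
        denom = 2 * tp + fp + fn
        f1_scores.append(2 * tp / denom if denom else 0.0)
    return sum(f1_scores) / len(f1_scores)
```

For a binary receptor-specificity task, pass the class treated as positive (for example, α2,6 binding) to `sensitivity_specificity`; the function raises `ZeroDivisionError` if that class, or its complement, is absent from `y_true`.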

Detailed Experimental Protocol for an AI-Driven Prediction Pipeline

This protocol outlines a standard workflow for training a model to predict antigenic drift from hemagglutinin (HA) sequences.

3.1 Data Curation & Pre-processing

- Sequence & Antigenic Data Collection: Download all available HA protein sequences for target virus (e.g., Influenza A/H3N2) from GISAID and NCBI Influenza Virus Database. Pair with corresponding hemagglutination inhibition (HI) assay titer data from sources like the WHO Collaborating Centres.

- Antigenic Distance Matrix: Calculate a pairwise antigenic distance matrix from HI titers using the antigenic cartography method (Smith et al., 2004). Binarize the distances into "significant drift" (distance > threshold) vs. "no significant drift" labels for supervised learning.

- Feature Engineering:

- Evolutionary Features: Generate Position-Specific Scoring Matrix (PSSM) via PSI-BLAST against a non-redundant database.

- Structural Features: For each sequence, use AlphaFold2 or ESMFold to predict a 3D structure. Extract per-residue features: solvent accessible surface area (SASA), secondary structure, and pairwise atom distances.

- Network Features: Construct a phylogenetic tree; derive tree-based features such as each sequence's centrality within the tree and its clade assignment.
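The distance calculation in step 3.1 can be sketched with the table-distance convention from antigenic cartography (Smith et al., 2004): for antigen i and serum j, the distance is log2 of serum j's maximum titer minus log2 of H_ij, so one unit equals a twofold HI dilution. This sketch stops at the antigen-by-serum table distances; the full method then fits map coordinates by multidimensional scaling, which is omitted here. The drift threshold of 2 units (a fourfold titer drop) is an assumed default, not a value from the protocol, and titers are assumed to be positive numbers with below-detection values handled upstream. Function names are hypothetical.

```python
import math


def table_distances(titers):
    """Table distances from an HI titer table (rows = antigens, columns = sera).

    Distance(i, j) = log2(max titer of serum j) - log2(H_ij); one unit is a
    twofold dilution. Missing titers (None) stay None.
    """
    n_sera = len(titers[0])
    col_max = []
    for j in range(n_sera):
        observed = [row[j] for row in titers if row[j] is not None]
        col_max.append(max(observed) if observed else None)
    return [
        [None if h is None or col_max[j] is None
         else math.log2(col_max[j]) - math.log2(h)
         for j, h in enumerate(row)]
        for row in titers
    ]


def drift_labels(distances, threshold=2.0):
    """Binarize: 1 = 'significant drift' (distance > threshold), else 0.

    The default threshold of 2 units is an assumption, not a standard.
    """
    return [[None if d is None else int(d > threshold) for d in row]
            for row in distances]
```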

3.2 Model Training & Validation (Using a GNN Approach)

- Graph Construction: Represent each HA sequence as a graph G(V, E). Nodes V are amino acid residues; edges E connect residues within a 10 Å radius of each other in the predicted structure.

- Node Feature Vector: For each residue i, concatenate the one-hot amino acid encoding (20D), PSSM vector (20D), SASA (1D), and secondary structure (3D).

- Model Architecture: Implement a 3-layer Graph Convolutional Network (GCN), followed by a global mean pooling layer and a fully connected layer with softmax output for binary classification.

- Training Regime: Use a temporally split validation: train on data from seasons 2010-2018, validate on 2019, and hold out 2020-2022 for final testing. Optimize using Adam optimizer with cross-entropy loss. Employ early stopping based on validation AUC.
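The graph construction, node features, and GCN described in step 3.2 can be sketched in NumPy. Assumptions: the 10 Å cutoff is applied to C-alpha coordinates (the protocol does not say which atoms), secondary structure is a three-state H/E/C string, and each layer uses the standard symmetric-normalized GCN propagation rule of Kipf and Welling. The weights are untrained placeholders and the function names are hypothetical.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
SS_CLASSES = "HEC"  # helix, strand, coil (assumed 3-state encoding)


def contact_adjacency(ca_coords, cutoff=10.0):
    """Edges connect residues whose C-alpha atoms lie within `cutoff` angstroms."""
    diff = ca_coords[:, None, :] - ca_coords[None, :, :]
    dist = np.linalg.norm(diff, axis=-1)
    adj = (dist <= cutoff).astype(float)
    np.fill_diagonal(adj, 0.0)  # self-loops are added inside the GCN instead
    return adj


def node_features(sequence, pssm, sasa, ss):
    """One-hot (20) + PSSM (20) + SASA (1) + secondary structure (3) = 44 columns."""
    one_hot = np.array([[float(aa == a) for a in AMINO_ACIDS] for aa in sequence])
    ss_one_hot = np.array([[float(s == c) for c in SS_CLASSES] for s in ss])
    sasa_col = np.asarray(sasa, dtype=float)[:, None]
    return np.hstack([one_hot, np.asarray(pssm, dtype=float), sasa_col, ss_one_hot])


def gcn_predict(x, adj, layer_weights, w_out):
    """GCN layers with ReLU, global mean pooling, dense layer, softmax."""
    a_hat = adj + np.eye(adj.shape[0])                 # add self-loops
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    a_norm = a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]
    h = x
    for w in layer_weights:
        h = np.maximum(a_norm @ h @ w, 0.0)            # propagate, transform, ReLU
    logits = h.mean(axis=0) @ w_out                    # global mean pooling
    exp = np.exp(logits - logits.max())                # numerically stable softmax
    return exp / exp.sum()                             # [P(no drift), P(drift)]
```

With three layer matrices of shapes (44, 16), (16, 16), (16, 16) and an output matrix of shape (16, 2), `gcn_predict` returns a two-element probability vector. In practice the weights would be learned with Adam and cross-entropy as the protocol describes, typically in a framework such as PyTorch Geometric rather than by hand.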

3.3 In Silico Validation & Prediction

- Escape Mutant Prediction: For a circulating strain, generate in silico mutants for all possible single-point mutations in the Receptor Binding Domain (RBD).

- Forward Prediction: Feed mutant graphs through the trained model to predict antigenic drift probability.

- Wet-Lab Correlation: Prioritize top 10 predicted high-drift mutants for synthesis and validation via pseudovirus neutralization assays.
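The in silico mutagenesis and top-10 ranking in step 3.3 amount to enumerating every single-point substitution in a region and sorting by model output. In this sketch, `score_fn` is a hypothetical stand-in for the trained model (in the protocol, each mutant would first be converted into a structure-based graph), and positions are 1-based indices into the given sequence rather than H3 numbering.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def single_point_mutants(sequence, start, end):
    """Yield (label, mutant_sequence) for every substitution at positions start..end.

    Positions are 1-based and inclusive; labels look like 'C2W'.
    """
    for pos in range(start, end + 1):
        wild_type = sequence[pos - 1]
        for aa in AMINO_ACIDS:
            if aa != wild_type:
                mutant = sequence[:pos - 1] + aa + sequence[pos:]
                yield f"{wild_type}{pos}{aa}", mutant


def top_candidates(sequence, start, end, score_fn, n=10):
    """Score every mutant with score_fn and return the n highest-scoring ones."""
    scored = [(score_fn(mutant), label)
              for label, mutant in single_point_mutants(sequence, start, end)]
    scored.sort(key=lambda pair: pair[0], reverse=True)  # highest drift score first
    return [(label, score) for score, label in scored[:n]]
```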

Diagram: AI-Driven Antigenic Drift Prediction Pipeline

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Resources for Validation Experiments

| Item/Category | Function in Validation | Example Product/Code |
|---|---|---|
| Pseudovirus System | Safe, BSL-2 compatible platform to study entry of enveloped viruses carrying mutant envelope glycoproteins (e.g., Spike, HA). | Lentiviral packaging plasmid psPAX2 (Addgene) & pLVX-EF1α transfer vector (Takara Bio), or commercial SARS-CoV-2/influenza pseudotyping kits. |
| Cell Lines (Overexpressing Receptors) | Assess binding tropism and entry efficiency for mutant viral proteins. | HEK-293T-hACE2, MDCK-SIAT1 (high α2,6-SA), chicken DF-1 cells. |
| Human/Animal Sera Panel | Benchmark neutralization against predicted drifted variants. | WHO Influenza Reagent Kit, NIBSC convalescent & vaccinated human serum panels. |
| Surface Plasmon Resonance (SPR) Chip | Quantify binding affinity (KD) between mutant RBD and host receptors. | Cytiva Series S Sensor Chip CM5 (amine coupling) or Sensor Chip SA (for biotinylated receptors, e.g., hACE2, α2,6-sialyllactose). |
| Monoclonal Antibody Panel | Map precise epitope disruption caused by predicted escape mutations. | Anti-Spike/RBD neutralizing mAbs (e.g., S309, REGN10987), Anti-Influenza HA head/stem mAbs. |
| Next-Gen Sequencing Library Prep Kit | Track viral population diversity in vitro post-selection pressure. | Illumina COVIDSeq or NEBNext Ultra II FS DNA for amplicon sequencing. |

Signaling & Structural Logic of Tropism Determination

Host tropism shifts are often governed by changes in receptor binding specificity. A canonical example is avian influenza adapting to human hosts by shifting binding preference from α2,3-linked to α2,6-linked sialic acid receptors in the respiratory tract, driven by key mutations in the HA protein (e.g., Q226L, G228S in H2/H3 subtypes).
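As a toy illustration of the two-site logic described above, the function below reads residues 226 and 228 from a sequence already mapped to H3 numbering (an assumption; the mapping itself requires alignment to a reference HA). It is not a tropism predictor: many other HA positions modulate receptor binding, and other subtypes depend on different key residues. The names are hypothetical.

```python
# Residue identities at HA positions 226 and 228 (H3 numbering) associated
# with each receptor preference in H2/H3 viruses.
AVIAN_LIKE = {226: "Q", 228: "G"}  # alpha2,3-linked sialic acid preference
HUMAN_LIKE = {226: "L", 228: "S"}  # alpha2,6-linked sialic acid preference


def receptor_signature(residues):
    """Classify a {position: residue} mapping at HA sites 226 and 228.

    Returns 'avian-like', 'human-like', 'mixed', or 'other'. This is a toy
    two-site rule only.
    """
    hits_avian = [residues.get(pos) == aa for pos, aa in AVIAN_LIKE.items()]
    hits_human = [residues.get(pos) == aa for pos, aa in HUMAN_LIKE.items()]
    if all(hits_avian):
        return "avian-like"
    if all(hits_human):
        return "human-like"
    if any(hits_avian) and any(hits_human):
        return "mixed"  # e.g., Q226L alone, without G228S
    return "other"
```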

Diagram: Logic of HA Mutations Driving Host Tropism Shift

The integration of advanced AI pattern recognition with foundational virological data presents a transformative approach to anticipating viral evolution. By accurately modeling the complex constraints and probabilities of antigenic drift and tropism shifts, these tools empower researchers and drug developers to stay ahead of the evolutionary curve, guiding vaccine strain selection and the development of broadly protective countermeasures. The continuous refinement of these models with new experimental data creates a virtuous cycle of prediction and validation, embodying the core promise of AI in accelerating biological discovery and pandemic preparedness.

This whitepaper details the application of artificial intelligence (AI) for pattern recognition in viral genomics, a core discipline enabling two critical objectives: the rational design of next-generation vaccines and the discovery of novel host-based antiviral targets. By decoding complex, high-dimensional patterns within viral sequences and host-pathogen interaction data, AI transforms raw genomic information into actionable biological insight.

Core AI Methodologies and Quantitative Outcomes

AI models are trained on vast corpora of viral genomic and proteomic data, alongside experimentally validated immunological and virological datasets.

Table 1: Comparative Performance of AI Models in Key Predictive Tasks

| AI Model Type | Primary Application | Key Performance Metric | Reported Value | Dataset/Reference |
|---|---|---|---|---|
| Transformer-based (e.g., AlphaFold2, ESMFold/ESM-2) | Protein structure prediction of viral surface glycoproteins & host receptors | RMSD (Å) for antigen binding site | 1.2 - 3.5 Å | SARS-CoV-2 Spike, Influenza HA |
| Convolutional Neural Network (CNN) | Epitope immunogenicity & conservancy prediction | AUC-ROC (immunogenicity) | 0.78 - 0.87 | IEDB, ViPR database |
| Recurrent Neural Network (RNN/LSTM) | Predicting viral escape mutations & evolution | Mutation pathway prediction accuracy | > 80% | HIV-1 Env, SARS-CoV-2 Spike longitudinal data |
| Graph Neural Network (GNN) | Modeling host-virus protein-protein interaction networks | AUPRC (novel interaction prediction) | 0.72 - 0.91 | STRING, BioGRID, viral PPI data |
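AUPRC is a natural metric for the GNN row because novel host-virus interactions are rare among all candidate protein pairs, and the AUPRC of a random ranking equals the positive prevalence rather than 0.5. A minimal sketch of the common average-precision estimator, assuming binary labels and one score per candidate pair; for scores without ties it matches scikit-learn's `average_precision_score`. The function name is illustrative.

```python
def average_precision(scores, labels):
    """Non-interpolated average precision, a common estimator of AUPRC.

    Ranks candidates by descending score and averages the precision observed
    at the rank of each true positive. Ties are broken by input order.
    """
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    n_pos = sum(1 for _, label in ranked if label)
    if n_pos == 0:
        raise ValueError("AUPRC is undefined without positive examples")
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label:
            hits += 1
            total += hits / rank  # precision at this positive's rank
    return total / n_pos
```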

Experimental Protocols for AI-Guided Vaccine Antigen Design

Protocol 1: In Silico Design of Stabilized Viral Glycoprotein Immunogens

- Objective: Generate a vaccine antigen with enhanced expression, stability, and immunogenic focus on neutralization-sensitive epitopes.

- Methodology:

- Sequence Input & Multiple Sequence Alignment (MSA): Curate thousands of target viral glycoprotein sequences (e.g., HIV-1 Env, RSV F) from public databases.

- AI-Powered Stabilization: Use a protein language model (e.g., ESM-2) to identify evolutionarily constrained residues. Employ RoseTTAFold or AlphaFold2 to model the prefusion state.

- Computational Mutagenesis & Scoring: Introduce proline substitutions and engineered disulfide bonds in silico. Score each variant for stability (predicted ΔΔG) and for structural deviation from the target state (RMSD).

- Immunogenicity Filter: Pass top-scoring designs through a CNN-based epitope predictor to ensure preservation of key neutralizing epitopes.

- In Vitro Validation: Express top candidate antigens, validate structure via cryo-EM, and assess stability via differential scanning calorimetry (DSC).
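The scoring and filtering steps of Protocol 1 can be sketched as a simple selection over candidate designs. Assumptions, all hypothetical: `Design` is an illustrative record type, a negative predicted ΔΔG means stabilizing (a Rosetta-style convention; check your tool's sign), the 1.0 Å RMSD cutoff is arbitrary, and the immunogenicity filter is replaced by a plain check that no mutated position falls inside a known neutralizing epitope, rather than the CNN epitope predictor the protocol calls for.

```python
from dataclasses import dataclass, field


@dataclass
class Design:
    name: str
    ddg: float   # predicted ddG in kcal/mol; assumed negative = stabilizing
    rmsd: float  # angstrom deviation from the target prefusion model
    mutated_positions: set = field(default_factory=set)


def select_designs(designs, epitope_positions, max_rmsd=1.0, top_n=5):
    """Keep stabilizing, on-target designs that leave epitope residues untouched."""
    passing = [
        d for d in designs
        if d.ddg < 0                                      # stabilizing
        and d.rmsd <= max_rmsd                            # close to target state
        and not (d.mutated_positions & epitope_positions)  # epitope preserved
    ]
    return sorted(passing, key=lambda d: d.ddg)[:top_n]   # most stabilizing first
```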

Diagram 1: AI-Driven Vaccine Antigen Design Workflow

Experimental Protocols for AI-Driven Antiviral Target Discovery

Protocol 2: Identifying Host Dependency Factors via Network Analysis

- Objective: Discover critical host proteins involved in viral replication that can serve as targets for broad-spectrum antivirals.

- Methodology:

- Network Construction: Build a comprehensive host-virus protein-protein interaction (PPI) network using known data from BioGRID, STRING, and recent AP-MS studies.

- GNN Training & Prioritization: Train a Graph Neural Network on known host dependency factors. The model learns topological features (e.g., degree and betweenness centrality) and functional annotations to score and prioritize novel candidate proteins.

- CRISPR Screen Integration: Integrate model predictions with genome-wide CRISPR knockout screen data. Candidates supported by both sources (a high AI score and a significant hit in the screen, i.e., knockout reduces viral replication or virus-induced cell death) are prioritized.

- In Vitro Validation: Knock down/out candidate genes in relevant cell lines (e.g., A549, HEK293T). Infect with virus and quantify replication (e.g., by plaque assay or qPCR). Assess cytotoxicity in parallel.
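The concordance rule in Protocol 2 can be sketched as the intersection of two thresholds. Assumptions: the GNN emits a score in [0, 1] per gene, the CRISPR screen has been summarized as a per-gene false discovery rate for enrichment (for example, by a screen-analysis tool such as MAGeCK), and both cutoffs are hypothetical defaults. The function name and the gene identifiers in the test are placeholders.

```python
def prioritize_host_factors(ai_scores, screen_fdr, min_score=0.8, max_fdr=0.05):
    """Return genes supported by both the GNN score and the CRISPR screen.

    ai_scores:  gene -> model score in [0, 1] (higher = more likely host factor)
    screen_fdr: gene -> FDR of the gene's enrichment in the knockout screen
    Genes missing from the screen are treated as non-hits (FDR = 1.0).
    """
    concordant = [
        gene for gene, score in ai_scores.items()
        if score >= min_score and screen_fdr.get(gene, 1.0) <= max_fdr
    ]
    # Rank the concordant candidates by model confidence.
    return sorted(concordant, key=lambda gene: ai_scores[gene], reverse=True)
```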

Diagram 2: Host Target Discovery via Network AI

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for AI-Predicted Target & Antigen Validation

| Reagent / Material | Function in Validation | Example Product/Catalog |
|---|---|---|