FAIR Data for Pandemic Preparedness: Implementing FAIR Protocols for Pathogen Genomic Sequencing

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing FAIR (Findable, Accessible, Interoperable, and Reusable) data principles for outbreak pathogen sequencing. Covering foundational concepts, practical methodologies, common troubleshooting, and validation frameworks, it addresses the critical need for standardized, high-quality genomic data to accelerate outbreak response, therapeutic discovery, and global health surveillance.

Why FAIR Data is Non-Negotiable for Modern Outbreak Response

Defining FAIR Principles in the Context of Pathogen Genomics

Within the broader thesis on FAIR data protocols for outbreak sequencing research, the application of the FAIR Guiding Principles—Findable, Accessible, Interoperable, and Reusable—to pathogen genomic data is critical for accelerating pandemic preparedness and response. This document provides detailed Application Notes and Protocols for implementing these principles, ensuring genomic data from outbreaks can be rapidly integrated and analyzed across institutions and disciplines.

Application Notes: Quantitative Metrics for FAIRness in Pathogen Genomics

Effective implementation requires measurable indicators. The following table summarizes current targets and metrics for assessing FAIR compliance in pathogen genomics data repositories.

Table 1: Key Metrics for FAIR Pathogen Genomic Data

| FAIR Principle | Key Metric | Definition / Example | Quantitative Benchmark |
|---|---|---|---|
| Findable | Persistent Identifier (PID) Coverage | Percentage of genomic datasets assigned a DOI or accession (e.g., ENA/NCBI SRA, GISAID EPI_SET ID) | >95% of submitted datasets |
| | Richness of Metadata in a Searchable Registry | Number of structured fields (e.g., specimen, host, location, date) compliant with minimum information standards (e.g., MIxS) | ≥20 core fields per sample |
| Accessible | Data Retrieval Success Rate | Percentage of successful automated retrieval attempts via standard protocols (e.g., FTP, API) over 30 days | >99% uptime and retrieval success |
| | Clear Access Protocol Documentation | Existence of publicly documented, machine-readable data access statements (including any restrictions) | 100% of datasets |
| Interoperable | Use of Controlled Vocabularies and Ontologies | Percentage of metadata fields linked to community standards (e.g., NCBI Taxonomy, Disease Ontology, ENVO for environmental context) | >80% of applicable fields |
| | Standard File Format Adoption | Percentage of data files in recommended formats (e.g., FASTQ; CRAM and VCF per GA4GH specifications) | >90% of data files |
| Reusable | Provision of Comprehensive Data Provenance | Percentage of datasets with a detailed, machine-actionable data lifecycle history (e.g., CWL or WDL workflow descriptions) | Increase from 50% to >80% |
| | Licensing and Reuse Citation Clarity | Percentage of datasets with explicit usage licenses (e.g., CC0, CC-BY, GISAID terms) | 100% of datasets |
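Because most benchmarks in Table 1 are simple proportions, they can be computed directly from per-dataset summaries. The following is a minimal sketch, assuming a hypothetical `DatasetRecord` structure (its field names and the placeholder accession are illustrative, not taken from any repository API); it evaluates three of the metrics against the thresholds in the table.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DatasetRecord:
    """Hypothetical per-dataset summary; real repositories expose different fields."""
    accession: Optional[str]  # PID (accession or DOI); None if not yet assigned
    license: Optional[str]  # e.g. "CC0", "CC-BY"; None if no explicit license
    applicable_fields: int = 0  # metadata fields that could carry an ontology term
    ontology_linked_fields: int = 0  # of those, how many actually do


def percent(numerator: int, denominator: int) -> float:
    """Percentage, treating an empty denominator as 0%."""
    return 100.0 * numerator / denominator if denominator else 0.0


def fair_report(records: list) -> dict:
    """Compute three Table 1 metrics as (value, meets_benchmark) pairs."""
    n = len(records)
    pid = percent(sum(r.accession is not None for r in records), n)
    lic = percent(sum(r.license is not None for r in records), n)
    linked = percent(sum(r.ontology_linked_fields for r in records),
                     sum(r.applicable_fields for r in records))
    return {
        "pid_coverage": (pid, pid > 95.0),           # Findable: >95% of datasets
        "explicit_license": (lic, lic == 100.0),     # Reusable: 100% of datasets
        "ontology_linked": (linked, linked > 80.0),  # Interoperable: >80% of fields
    }


records = [
    DatasetRecord("ACC-PLACEHOLDER-1", "CC0", applicable_fields=10, ontology_linked_fields=9),
    DatasetRecord(None, "CC-BY", applicable_fields=10, ontology_linked_fields=7),
]
for metric, (value, ok) in fair_report(records).items():
    print(f"{metric}: {value:.1f}% ({'meets' if ok else 'below'} benchmark)")
```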

Experimental Protocols

Protocol 1: Generating FAIR-Compliant Genome Sequences from an Outbreak Isolate

Objective: To process a pathogen isolate from sample to submission in a FAIR-aligned public repository.

Materials & Reagents:

- Clinical specimen with confirmed pathogen (e.g., nasopharyngeal swab in viral transport medium).

- Nucleic acid extraction kit (e.g., QIAamp Viral RNA Mini Kit).

- Library preparation kit for sequencing (e.g., Illumina COVIDSeq Test or Nextera XT DNA Library Prep Kit).

- Sequencing platform (e.g., Illumina MiSeq, NovaSeq; or Oxford Nanopore MinION).

- Bioinformatics computing cluster or cloud instance.

- Metadata spreadsheet template (aligned with MIxS or repository-specific requirements).

Procedure:

- Sample Acquisition & Metadata Recording:

  - At the point of collection, record all minimum information (see Table 1) in a structured template. Assign a unique, persistent local sample ID.

- Genomic Sequencing:

  - Extract nucleic acids following the manufacturer's protocol. Quantify yield.

  - Prepare the sequencing library using the selected kit, incorporating the sample ID into the library name.

  - Sequence the library to a minimum coverage of 100x across the target genome.

- Bioinformatics Processing (Standardized Workflow):

  - Use a containerized or workflow-managed pipeline (e.g., Nextflow nf-core/viralrecon, Snakemake) to ensure reproducibility.

  - Quality Control: Trim adapters and low-quality bases using Trimmomatic or fastp.

  - Genome Assembly: Map reads to a reference genome using BWA-MEM or perform de novo assembly using SPAdes.

  - Variant Calling: Identify SNPs and indels using iVar or bcftools.

  - Output: Generate a consensus genome sequence (FASTA), aligned reads (BAM), and a variant call file (VCF).

- FAIR Metadata Curation and Submission:

  - Populate the metadata spreadsheet with wet-lab and computational parameters (sequencing instrument, software versions, reference genome accession).

  - Validate the metadata against the chosen repository's schema using the provided validation tools (e.g., `ena-webin-cli` for ENA).

  - Submit the final dataset (raw reads, consensus genome, metadata) to an international repository (e.g., ENA, NCBI SRA, GISAID). Record the public accession number (PID) issued by the repository against the local sample ID.
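The 100x coverage target can be turned into a rough sequencing-yield estimate using the standard relation C = N × L / G (mean coverage equals the number of reads times read length, divided by genome size). A minimal sketch follows; the `on_target_fraction` parameter is an assumption the user must supply, since host and off-target reads do not contribute to coverage.

```python
import math


def reads_needed(genome_size_bp: int, read_length_bp: int, target_coverage: float,
                 on_target_fraction: float = 1.0) -> int:
    """Estimate the reads required to reach a mean coverage.

    Solves C = N * L / G for N, then inflates N by the expected fraction
    of reads that map to the target genome (a user-supplied assumption).
    """
    if not 0 < on_target_fraction <= 1:
        raise ValueError("on_target_fraction must be in (0, 1]")
    n = target_coverage * genome_size_bp / read_length_bp
    return math.ceil(n / on_target_fraction)


# ~30 kb coronavirus-sized genome, 150 bp reads, 100x target
print(reads_needed(30_000, 150, 100))
print(reads_needed(30_000, 150, 100, on_target_fraction=0.5))
```

For paired-end runs, each mate counts as one read of length L. Amplicon dropouts make coverage uneven, so a mean of 100x does not guarantee 100x at every position; per-position depth should still be checked after alignment.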

Protocol 2: Establishing an Interoperable Metadata Pipeline Using Ontologies

Objective: To automate the annotation of sample metadata with controlled vocabulary terms for enhanced interoperability.

Materials & Reagents:

- A database of sample metadata (e.g., CSV file, LIMS system export).

- Access to an ontology lookup service (e.g., the EMBL-EBI Ontology Lookup Service (OLS) API).

- Scripting environment (Python 3 with the `requests` and `pandas` libraries).

Procedure:

- Identify Target Fields: Select metadata columns requiring standardization (e.g., "host_species", "anatomical_site", "country").

- Map to Ontology Terms:

  - For each unique value in a column (e.g., "human", "Homo sapiens"), programmatically query the OLS API to find the closest matching term URI from a specified ontology (e.g., NCBI Taxonomy ID: 9606).

  - Store the original value, the preferred label, and the ontology term URI (e.g., http://purl.bioontology.org/ontology/NCBITAXON/9606) in a new mapping table.

- Create Annotated Metadata File:

  - Generate a new metadata file where the original columns are supplemented with new columns (e.g., `host_species_ontology_uri`).

  - This file should be submitted alongside the data, or integrated into a LIMS, enabling machine-readable semantic interoperability.
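The mapping and annotation steps above can be sketched with the Python standard library (the protocol names `requests` and `pandas`; `urllib` and plain dictionaries are used here only to keep the example dependency-free). The OLS4 endpoint URL and the `label`/`iri` response fields reflect our understanding of the current OLS search API and should be checked against its documentation. The `search` parameter lets the live lookup be replaced by a stub for offline testing.

```python
import json
import urllib.parse
import urllib.request

# Assumed OLS4 search endpoint; confirm against the current OLS API documentation.
OLS_SEARCH_URL = "https://www.ebi.ac.uk/ols4/api/search"


def ols_search(value: str, ontology: str, rows: int = 5) -> list:
    """Query the OLS search endpoint and return its list of matching documents."""
    query = urllib.parse.urlencode({"q": value, "ontology": ontology, "rows": rows})
    with urllib.request.urlopen(f"{OLS_SEARCH_URL}?{query}", timeout=30) as resp:
        payload = json.load(resp)
    return payload.get("response", {}).get("docs", [])


def build_mapping(values, ontology: str, search=ols_search) -> dict:
    """Map each unique, non-empty value to (preferred label, term IRI) of the top hit.

    Values with no hit map to (None, None) so they can be curated by hand.
    """
    mapping = {}
    for value in values:
        if not value or value in mapping:
            continue
        docs = search(value, ontology)
        top = docs[0] if docs else {}
        mapping[value] = (top.get("label"), top.get("iri"))
    return mapping


def annotate(rows: list, column: str, mapping: dict) -> list:
    """Return copies of the rows with an added '<column>_ontology_uri' field."""
    annotated = []
    for row in rows:
        label_and_iri = mapping.get(row.get(column), (None, None))
        annotated.append({**row, f"{column}_ontology_uri": label_and_iri[1]})
    return annotated


# Example (requires network access):
# mapping = build_mapping(["human", "Homo sapiens"], "ncbitaxon")
# rows = annotate([{"host_species": "human"}], "host_species", mapping)
```

Taking the top-ranked hit is a simplification: a free-text value such as "human" may not rank Homo sapiens first, so the mapping table should be reviewed before use. OLS also typically returns OBO-style IRIs (e.g., http://purl.obolibrary.org/obo/NCBITaxon_9606) rather than the BioPortal-style URI shown in the procedure; whichever form is chosen should be used consistently.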

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for FAIR Pathogen Genomics Research

| Item / Solution | Function in FAIR Context |
|---|---|
| Sample-to-CLIMB Pipeline | Automated, UK-standardized pipeline for processing raw sequence data to consensus genomes with linked metadata. |
| nf-core/viralrecon (Nextflow) | Community-curated, containerized bioinformatics workflow for viral genome analysis; ensures computational reproducibility. |
| INSDC Submission Portals | Webin (ENA), Submission Portal (NCBI), DDBJ: Standardized portals for submitting data with rich metadata to global archives. |
| GISAID EpiCoV and EpiFlu Platforms | Specialized repositories for sharing coronavirus (EpiCoV) and influenza (EpiFlu) genomes with associated epidemiological data. |
| CWL (Common Workflow Language) | Standard for describing command-line analysis workflows in a way that makes them portable and reproducible across platforms. |
| DataHub / LIMS (e.g., Galaxy, MyTardis) | Laboratory Information Management Systems that structure sample metadata from point of origin, promoting FAIR data capture. |
| Ontology Lookup Service (OLS) | Provides API access to hundreds of biomedical ontologies for consistent metadata annotation (critical for Interoperability). |

Visualizations

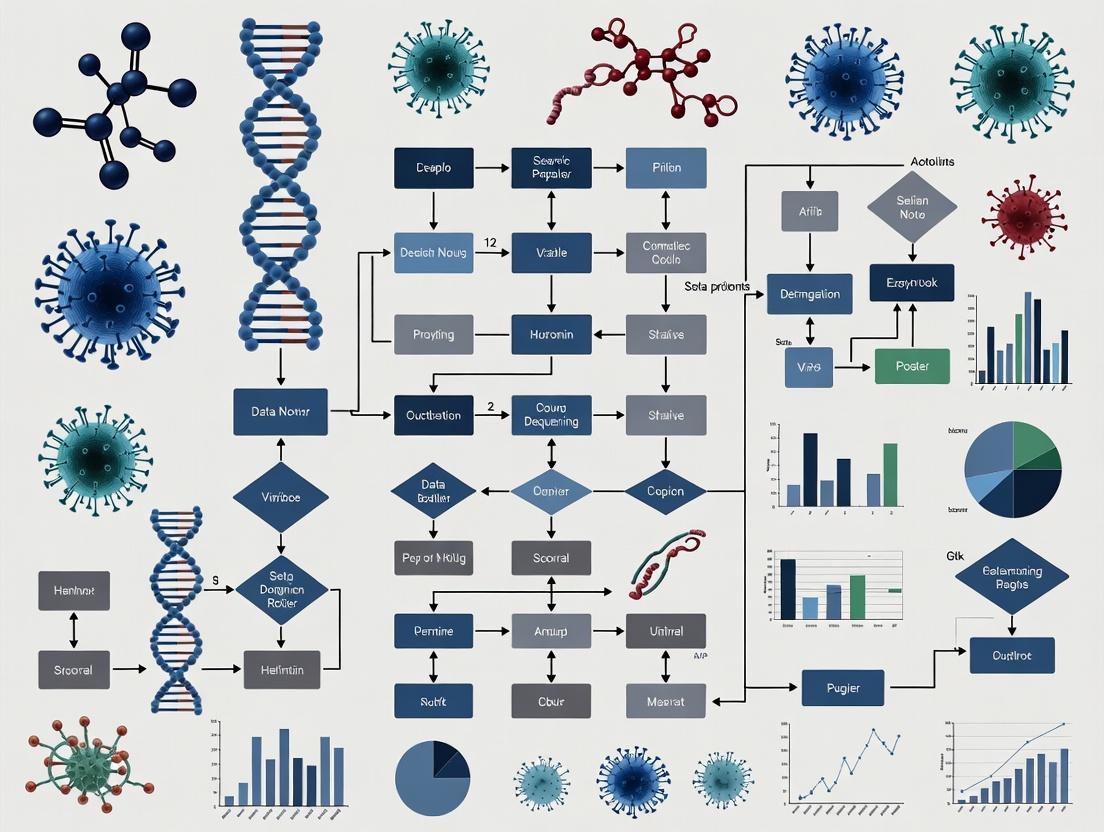

Diagram 1: FAIR Data in Outbreak Response Cycle

Diagram 2: Ontology-Based Metadata Annotation Pipeline

Application Note: Impact of Data UnFAIRness on Pandemic Response Timelines

The lack of Findable, Accessible, Interoperable, and Reusable (FAIR) data in recent outbreaks has directly impeded rapid research and countermeasure development. This note quantifies these delays.

Table 1: Comparative Timeline Delays Due to UnFAIR Data Practices in Recent Outbreaks

| Outbreak (Initial Detection) | Key Genomic Data Shared (Days Post-Detection) | First Major Genomic Dataset Publicly FAIR (Days Post-Detection) | Delay Attributable to UnFAIR Practices (Estimated Days) | Consequence of Delay |
|---|---|---|---|---|
| COVID-19 (Dec 2019) | ~14 (GISAID, Jan 2020) | ~30 (Full FAIR compliance on NCBI/GISAID) | 16-20 | Slowed diagnostics & early vaccine design |
| Mpox (Global, May 2022) | ~7 (Initial sequences) | ~21 (Structured, annotated datasets) | 14 | Delayed understanding of unusual transmission |
| Avian Influenza H5N1 (Cattle, Mar 2024) | ~30 (Initial cattle sequences) | >60 (Ongoing, incomplete metadata) | >30 (and ongoing) | Slowed assessment of mammalian adaptation |

Protocols for FAIR-Compliant Outbreak Sequencing Research

Protocol 1: FAIR Field Sample to Deposition Pipeline

Objective: To ensure genomic data from outbreak samples is collected, processed, and shared with maximum FAIR compliance from point of origin.

Materials & Reagent Solutions:

- Sample Collection: Viral transport medium (VTM), RNAlater.

- Nucleic Acid Extraction: Magnetic bead-based kits (e.g., Qiagen, Thermo Fisher).

- Sequencing: ARTIC Network primer pools, cDNA synthesis kits, high-throughput sequencer (Illumina/ONT).

- Analysis: Bioinformatic pipelines (Nextclade, Pangolin), computational resources.

- Metadata Standardization: INSDC pathogen metadata checklist, DataHarmonizer tool.

Procedure:

- Sample Collection & Annotation: Collect clinical/environmental sample with minimum metadata (date, location, host, specimen type) using standardized vocabulary.

- Nucleic Acid Extraction & QC: Extract RNA/DNA. Quantify and check quality via bioanalyzer.

- Library Preparation & Sequencing: Use standardized primer schemes (e.g., ARTIC). Perform sequencing on available platform.

- Bioinformatic Analysis: Assemble consensus genome using reference-based assembly. Assign lineage/clade using agreed-upon nomenclature.

- FAIR Metadata Curation: Compile all experimental and contextual metadata into the INSDC pathogen checklist.

- Data Deposition: Submit raw reads, consensus sequence, and complete metadata to both a public repository (e.g., SRA, ENA) and a specialized portal (e.g., GISAID, NCBI Virus).

- Persistent Identifier Assignment: Obtain accession numbers for all data objects. Link sample, sequence, and project accessions.

Workflow Visualization:

Diagram Title: FAIR Outbreak Data Generation Workflow

Protocol 2: Federated Analysis for Rapid Outbreak Characterization

Objective: To enable analysis across disparate, FAIR-compliant datasets without centralization, respecting data sovereignty.

Materials: Secure cloud or HPC environments, containerization software (Docker/Singularity), workflow language (Nextflow/CWL), GA4GH Passport & DRS standards.

Procedure:

- Dataset Discovery: Use search engines (e.g., EBI Search, NCBI Datasets) to find FAIR datasets via metadata queries.

- Data Access Negotiation: Use GA4GH Passports for authorized access to controlled datasets.

- Data Retrieval: Use GA4GH DRS URIs to pull specific data files into the compute environment.

- Workflow Execution: Launch containerized, versioned analysis workflow (e.g., phylogenetics, variant calling).

- Result Aggregation: Combine results from multiple federated analysis runs.

- Provenance Capture: Record all datasets, software, and parameters using RO-Crate.
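Two of the steps above, DRS retrieval and RO-Crate provenance capture, can be sketched in a few lines. The sketch assumes hostname-based DRS URIs, which, per our reading of the GA4GH DRS specification, resolve to `https://<host>/ga4gh/drs/v1/objects/<id>` (the returned object metadata then lists the actual access URLs), and it emits a minimal RO-Crate 1.1 document. The DRS host, object ID, and file names are hypothetical.

```python
import json
from urllib.parse import urlparse

RO_CRATE_CONTEXT = "https://w3id.org/ro/crate/1.1/context"
RO_CRATE_SPEC = "https://w3id.org/ro/crate/1.1"


def drs_to_https(drs_uri: str) -> str:
    """Map a hostname-based DRS URI to its DRS object-metadata endpoint.

    drs://<host>/<id>  ->  https://<host>/ga4gh/drs/v1/objects/<id>
    Compact (prefix:accession) DRS URIs need a registry lookup and are rejected.
    """
    parsed = urlparse(drs_uri)
    object_id = parsed.path.lstrip("/")
    if parsed.scheme != "drs" or not parsed.netloc or not object_id:
        raise ValueError(f"not a hostname-based DRS URI: {drs_uri!r}")
    return f"https://{parsed.netloc}/ga4gh/drs/v1/objects/{object_id}"


def ro_crate_metadata(inputs, workflow, workflow_version, outputs, run_id="#run-1"):
    """Build a minimal RO-Crate 1.1 document for one federated analysis run."""
    def ref(ids):
        return [{"@id": i} for i in ids]

    graph = [
        {"@id": "ro-crate-metadata.json", "@type": "CreativeWork",
         "conformsTo": {"@id": RO_CRATE_SPEC}, "about": {"@id": "./"}},
        {"@id": "./", "@type": "Dataset",
         "hasPart": ref([*inputs, workflow, *outputs]), "mentions": [{"@id": run_id}]},
        {"@id": workflow, "@type": ["File", "SoftwareSourceCode", "ComputationalWorkflow"],
         "version": workflow_version},
        *({"@id": i, "@type": "File"} for i in [*inputs, *outputs]),
        # The CreateAction ties inputs (object) and outputs (result) to the workflow run
        {"@id": run_id, "@type": "CreateAction", "instrument": {"@id": workflow},
         "object": ref(inputs), "result": ref(outputs)},
    ]
    return {"@context": RO_CRATE_CONTEXT, "@graph": graph}


# Hypothetical DRS object and file names, for illustration only
source = "drs://drs.example.org/sample-001-reads"
print(drs_to_https(source))
crate = ro_crate_metadata([source], "workflow/main.nf", "1.0.0", ["results/tree.nwk"])
print(json.dumps(crate, indent=2))
```

Recording the `drs://` URI itself, rather than the path of a local copy, keeps the provenance resolvable by other sites without centralizing the data.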

Logical Flow Visualization:

Diagram Title: Federated Analysis of FAIR Outbreak Data

Table 2: Essential Research Reagent Solutions

| Item | Function in FAIR Outbreak Research | Example/Note |
|---|---|---|
| Standardized Primer Panels | Ensure interoperable, comparable sequence data across labs. | ARTIC Network nCoV-2019 & monkeypox primer sets. |
| Control Materials | Act as positive controls and inter-lab calibration standards. | NIBSC WHO International Standards for SARS-CoV-2 RNA. |
| Metadata Schema Tools | Structure sample and experimental metadata for interoperability. | DataHarmonizer templates for INSDC pathogen reporting. |
| Bioinformatic Containers | Provide reproducible, versioned software environments. | Docker containers for Pangolin, Nextclade, IRMA. |
| Persistent ID Services | Assign unique, resolvable identifiers to data and samples. | DOI, BioSample accession, RRID for reagents. |
| Trusted Repositories | Provide accessible, long-term storage for FAIR data. | GISAID, NCBI SRA, ENA, Zenodo for analysis outputs. |

Within the thesis on FAIR (Findable, Accessible, Interoperable, Reusable) data protocols for outbreak sequencing research, identifying and serving core stakeholders is paramount. Effective pathogen genomics surveillance relies on a complex ecosystem where data, protocols, and tools flow from laboratory sequencers to public health decision-makers. This document outlines the key stakeholders, their specific needs, and provides detailed application notes and protocols to bridge gaps in the outbreak sequencing data pipeline, ensuring FAIR principles are operationalized from bench to policy.

Table 1: Core Stakeholders, Primary Needs, and Key FAIR Data Challenges

| Stakeholder Group | Primary Needs | Key FAIR Data Challenges | Typical Data Output/Requirement |
|---|---|---|---|
| Bench Researchers | Standardized, validated wet-lab protocols; access to positive controls/reference materials; streamlined data submission tools. | Interoperability of sample metadata; Reusability of protocols. | Raw sequencing reads (FASTQ), sample metadata. |
| Bioinformaticians | Access to raw & processed data; standardized, portable analysis pipelines; computational resources. | Findability & Accessibility of datasets; Interoperability of data formats. | Processed data (VCF, consensus sequences), analysis reports. |
| Epidemiologists | Contextualized data (time, location, host); lineage/clade assignments; visualization tools. | Interoperability of epidemiological & genomic data. | Annotated sequences, phylogenetic trees, outbreak clusters. |
| Public Health Agencies | Timely, interpretable insights; risk assessment reports; data for policy & intervention. | Reusability of data for retrospective analysis; Accessibility with appropriate governance. | Situation reports, variant risk assessments, public dashboards. |
| Journal Publishers / Funders | Data availability statements; adherence to data sharing policies; reproducible methods. | Findability via persistent identifiers (DOIs). | Data repository accession numbers, detailed methods. |

Table 2: Current Global Genomic Surveillance Sequencing Volume (Representative Data)

| Pathogen Category | Estimated Global Sequences/Month (2023-2024) | Primary Repository | Public Access Lag Time (Median) |
|---|---|---|---|
| SARS-CoV-2 | ~900,000 | GISAID, NCBI Virus | 14-30 days |
| Influenza Virus | ~80,000 | GISAID, IRD | 30-90 days |
| Mycobacterium tuberculosis | ~10,000 | ENA, SRA | 90-180 days |
| Foodborne Pathogens (e.g., Salmonella, E. coli) | ~15,000 | NCBI Pathogen Detection | 30-60 days |

Application Notes & Detailed Protocols

Application Note AN-01: Standardized Metadata Collection at Point of Sampling

Objective: To ensure Interoperability and Reusability by capturing essential contextual data at the earliest point.

Procedure:

- Digital Data Capture: Utilize mobile applications or digital forms (e.g., ODK Collect, REDCap) linked to Laboratory Information Management Systems (LIMS).

- Minimum Information Checklist: For each sample, collect:

  - Sample ID (unique barcode).
  - Collection date and time.
  - Geographic location (GPS coordinates preferred).
  - Host/source information (e.g., species, age, sex).
  - Clinical/epidemiological context (e.g., symptom onset, outbreak ID).
  - Collector and submitting institution details.

- Controlled Vocabularies: Use predefined terms (e.g., from ENVO for environment, NCBI Taxonomy for host) to avoid free-text ambiguity.

- Data Export: Map collected fields to public repository submission formats (e.g., INSDC, GISAID metadata sheets) prior to sequencing.
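A sketch of a pre-export check for the minimum information checklist above, assuming hypothetical flat field names (`sample_id`, `collection_datetime`, and so on) and ISO 8601 dates. It flags missing fields, unparseable dates, and out-of-range coordinates before a record is mapped to a submission format.

```python
from datetime import datetime

# Hypothetical field names mirroring the AN-01 minimum information checklist.
REQUIRED_FIELDS = ["sample_id", "collection_datetime", "latitude", "longitude",
                   "host_species", "submitting_institution"]


def validate_sample(record: dict) -> list:
    """Return a list of problems; an empty list means the record passes."""
    problems = [f"missing {name}" for name in REQUIRED_FIELDS
                if record.get(name) in (None, "")]
    when = record.get("collection_datetime")
    if when:
        try:
            datetime.fromisoformat(when)  # e.g. "2024-03-15" or "2024-03-15T09:30"
        except (TypeError, ValueError):
            problems.append(f"collection_datetime not ISO 8601: {when!r}")
    for name, limit in (("latitude", 90.0), ("longitude", 180.0)):
        value = record.get(name)
        if value in (None, ""):
            continue  # already reported as missing
        try:
            if abs(float(value)) > limit:
                problems.append(f"{name} out of range: {value}")
        except (TypeError, ValueError):
            problems.append(f"{name} not numeric: {value!r}")
    return problems


record = {"sample_id": "S001", "collection_datetime": "2024-03-15T09:30",
          "latitude": 51.5, "longitude": -0.12, "host_species": "Homo sapiens",
          "submitting_institution": "Example Public Health Lab"}
print(validate_sample(record))  # []
```

On Python versions before 3.11, `datetime.fromisoformat` accepts only a subset of ISO 8601 (for example, not a trailing `Z`), so a dedicated date parser may be preferable in production.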

Protocol P-01: End-to-End Workflow for Rapid Outbreak Sequencing & Data Sharing

Objective: Provide a detailed, reproducible methodology from nucleic acid to public data release, aligning with FAIR principles.

I. Wet-Lab Protocol: Amplification & Library Preparation (SARS-CoV-2 Example)

Reagents & Equipment: Viral transport medium sample, RNA extraction kit (e.g., QIAamp Viral RNA Mini Kit), ARTIC Network primer pools, reverse transcriptase (e.g., SuperScript IV), DNA polymerase (e.g., Q5 Hot Start), library prep kit (e.g., Illumina DNA Prep).

Procedure:

- RNA Extraction: Perform per kit instructions. Include positive (SARS-CoV-2 RNA) and negative (nuclease-free water) extraction controls.

- Reverse Transcription: Generate cDNA using random hexamers or gene-specific primers.

- Tiled Multiplex PCR: Amplify the viral genome using two pools of ~400 bp overlapping amplicons (ARTIC v4.1 design). Cycling conditions: 98°C for 30 s; 35 cycles of 98°C for 15 s and 63°C for 5 min; 72°C for 5 min.

- Library Preparation: Quantify PCR products, normalize, and tag with unique dual indices (UDIs) using a transposase-based kit. Clean up libraries using SPRI beads.

- Quality Control: Assess library size distribution (e.g., Agilent TapeStation, Bioanalyzer) and quantify (e.g., Qubit).
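Normalizing libraries usually requires converting the mass concentration reported by a fluorometer into molarity. The sketch below uses the common approximation of 660 g/mol per base pair and the dilution relation C1V1 = C2V2; the 10 ng/µL, 400 bp, and 4 nM values are illustrative, not recommendations from this protocol.

```python
BP_MASS_G_PER_MOL = 660.0  # average mass of one double-stranded base pair


def ng_per_ul_to_nm(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Convert a dsDNA mass concentration (e.g. from Qubit) to molarity in nM.

    nM = (ng/uL) / (660 g/mol * fragment length in bp) * 1e6
    """
    return conc_ng_per_ul / (BP_MASS_G_PER_MOL * mean_fragment_bp) * 1e6


def stock_volume_ul(stock_nm: float, target_nm: float, final_volume_ul: float) -> float:
    """Volume of stock to dilute to final_volume_ul at target_nm (C1*V1 = C2*V2)."""
    if target_nm > stock_nm:
        raise ValueError("target concentration exceeds stock concentration")
    return target_nm * final_volume_ul / stock_nm


# Illustrative values: 10 ng/uL library, 400 bp mean size, 4 nM target in 20 uL
stock = ng_per_ul_to_nm(10, 400)
vol = stock_volume_ul(stock, 4, 20)
print(f"{stock:.1f} nM stock; mix {vol:.2f} uL stock with {20 - vol:.2f} uL diluent")
```

The mean library size measured on the TapeStation or Bioanalyzer is a better input than the nominal amplicon length, since adapters and tagmentation change the fragment size.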

II. Bioinformatics Protocol: FAIR-Compliant Analysis Pipeline

Prerequisites: High-performance computing environment, Conda/Mamba for environment management, Git for version control.

Software: fastp (QC), minimap2 (alignment), ivar (primer trimming & variant calling), nextclade (lineage assignment), pangolin (lineage classification).

Procedure:

- Data Organization: Create a structured project directory. Name raw FASTQ files with SampleID_R{1,2}.fastq.gz.

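- Automated QC & Trimming: Run fastp on each read pair.

- Alignment & Primer Trimming: Align reads to the reference with minimap2, then trim primer sequences with ivar.

- Lineage Assignment & Reporting: Assign clades and lineages with nextclade and pangolin.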
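As a sketch, the tools named in the software list can be chained per sample as follows. The file names, reference, primer BED, and Nextclade dataset path are placeholders, and the flags reflect our understanding of current fastp, minimap2, samtools, iVar, Nextclade, and Pangolin releases; they should be verified against each tool's `--help` for the pinned versions. By default the script only prints the commands (a dry run).

```python
import shlex
import subprocess
from pathlib import Path


def pipeline_commands(sample_id: str, ref_fasta: str, primer_bed: str,
                      nextclade_dataset: str, outdir: str = "results") -> list:
    """Return the per-sample commands, in execution order, as shell strings."""
    out = Path(outdir) / sample_id

    def p(name: str) -> str:  # path inside this sample's output folder
        return str(out / name)

    r1, r2 = f"{sample_id}_R1.fastq.gz", f"{sample_id}_R2.fastq.gz"
    steps = [
        ["mkdir", "-p", str(out)],
        # Automated QC & trimming: fastp, keeping JSON/HTML reports for provenance
        ["fastp", "-i", r1, "-I", r2, "-o", p("trim_R1.fastq.gz"),
         "-O", p("trim_R2.fastq.gz"), "-j", p("fastp.json"), "-h", p("fastp.html")],
        # Alignment: minimap2 short-read preset, then coordinate-sort and index
        ["minimap2", "-ax", "sr", "-o", p("aln.sam"), ref_fasta,
         p("trim_R1.fastq.gz"), p("trim_R2.fastq.gz")],
        ["samtools", "sort", "-o", p("aln.bam"), p("aln.sam")],
        ["samtools", "index", p("aln.bam")],
        # Primer trimming with iVar (-e keeps reads that lack a primer at their start);
        # the output is re-sorted and indexed before pileup
        ["ivar", "trim", "-e", "-i", p("aln.bam"), "-b", primer_bed, "-p", p("trimmed")],
        ["samtools", "sort", "-o", p("trimmed.sorted.bam"), p("trimmed.bam")],
        ["samtools", "index", p("trimmed.sorted.bam")],
    ]
    cmds = [shlex.join(step) for step in steps]
    # Consensus: pileup over all positions piped into iVar, which writes <prefix>.fa
    cmds.append(shlex.join(["samtools", "mpileup", "-aa", "-A", "-d", "0", "-Q", "0",
                            p("trimmed.sorted.bam")])
                + " | " + shlex.join(["ivar", "consensus", "-p", p("consensus")]))
    # Lineage assignment & reporting
    cmds.append(shlex.join(["nextclade", "run", "--input-dataset", nextclade_dataset,
                            "--output-tsv", p("nextclade.tsv"), p("consensus.fa")]))
    cmds.append(shlex.join(["pangolin", p("consensus.fa"),
                            "--outfile", p("lineage_report.csv")]))
    return cmds


def run(cmds: list, dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False.

    bash with pipefail makes a failure anywhere in the consensus pipe fatal.
    """
    for cmd in cmds:
        print(cmd)
        if not dry_run:
            subprocess.run(["bash", "-o", "pipefail", "-c", cmd], check=True)


# Placeholder inputs; the dry run only prints the commands
run(pipeline_commands("S001", "ref.fa", "primers.bed", "data/sars-cov-2"))
```

Generating the commands from one function keeps file naming consistent with the SampleID_R{1,2}.fastq.gz convention and makes the exact invocations easy to log for provenance; a workflow manager such as Nextflow or Snakemake would add caching, parallelism, and containers on top.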

III. Data Submission Protocol:

- Anonymize Data: Ensure all patient-identifiable information has been removed.

- Prepare Metadata: Compile finalized metadata table using repository-specific template.

- Submit to Primary Repository: Upload FASTQ and consensus FASTA files, along with metadata, to an INSDC member (ENA, SRA, DDBJ) via web portal or API. Obtain accession numbers (ERX, SRX).

- Submit to Specialist Repository: For rapid outbreak response, concurrently submit consensus sequences and critical metadata to pathogen-specific repositories (e.g., GISAID for respiratory viruses).

- Publish Workflow: Archive analysis code on GitHub or GitLab. Register the pipeline in a workflow registry (e.g., WorkflowHub) and assign a DOI using Zenodo.
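Before upload, the de-identification step can be partly automated for tabular metadata. A minimal sketch, assuming a hypothetical deny-list of direct-identifier column names that would need to be extended to match local governance rules:

```python
import csv
import io

# Hypothetical deny-list of direct identifiers; extend to match local governance rules.
DIRECT_IDENTIFIERS = {"patient_name", "date_of_birth", "address", "phone",
                      "email", "medical_record_number"}


def strip_identifiers(csv_text: str) -> tuple:
    """Drop deny-listed columns from a metadata CSV.

    Returns the cleaned CSV text and the sorted list of removed columns,
    so the removal can be recorded alongside the submission.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    fields = reader.fieldnames or []
    removed = sorted(f for f in fields if f.strip().lower() in DIRECT_IDENTIFIERS)
    kept = [f for f in fields if f not in removed]
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=kept, extrasaction="ignore",
                            lineterminator="\n")
    writer.writeheader()
    for row in reader:
        writer.writerow(row)  # columns not in `kept` are silently dropped
    return out.getvalue(), removed


text = "sample_id,patient_name,collection_date\nS001,Jane Doe,2024-03-15\n"
clean, removed = strip_identifiers(text)
print(removed)
print(clean)
```

Dropping direct identifiers is not full anonymization: exact dates combined with fine-grained locations can still identify individuals, and raw reads may contain host sequence.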

Diagrams

Diagram 1: Stakeholder Roles in the FAIR Outbreak Data Pipeline

Diagram 2: End-to-End Sequencing and Sharing Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Outbreak Sequencing Research

| Item | Function & Relevance to FAIR Protocols | Example Product(s) |
|---|---|---|
| Standardized Reference Material | Provides positive control for assay validation; ensures inter-lab comparability (Interoperability, Reusability). | NIST SARS-CoV-2 RNA Standard (RM 8485), ATCC controls. |
| Tiled Multiplex PCR Primer Pools | Enables amplification of entire pathogen genomes from minimal input; standardized sets promote data uniformity. | ARTIC Network primers, Swift Normalase Amplicon Panels. |
| Unique Dual Index (UDI) Kits | Prevents index hopping/cross-talk during multiplex sequencing; critical for accurate sample tracking (Findability). | Illumina IDT for Illumina UDIs, Twist Unique Dual Indexes. |
| Automated Nucleic Acid Extractors | Increases throughput, reduces human error, and standardizes the starting material quality. | QIAsymphony, KingFisher, MagMAX kits. |
| LIMS with Electronic Lab Notebook | Manages sample metadata, workflows, and reagents; ensures audit trail and links data to processes. | Benchling, LabKey, BaseSpace Clarity LIMS. |
| Containerized Analysis Pipelines | Packages software dependencies for reproducible, portable bioinformatics (Reusability). | Docker/Singularity containers, Nextflow pipelines. |
| Metadata Schema Validators | Checks metadata files against community standards before submission, ensuring Interoperability. | CVE (CLI validator for ENA), GISAID metadata checker. |

Application Notes

Within the framework of FAIR (Findable, Accessible, Interoperable, Reusable) data protocols for outbreak sequencing research, several global initiatives and legal standards form the critical infrastructure for rapid and equitable pathogen data sharing. Their interplay directly enables or constrains the implementation of FAIR principles during public health emergencies.

World Health Organization (WHO) Biohub System & Pandemic Accord: The WHO facilitates global pathogen data sharing through normative guidance and new mechanisms like the Biohub System. A key current development is the negotiation of a WHO Pandemic Accord, which aims to establish a comprehensive international framework for pandemic prevention, preparedness, and response, including provisions for pathogen and benefit sharing.

Global Research Collaboration for Infectious Disease Preparedness (GLOPID-R): GLOPID-R is a network of major research funding organizations. It operates through a "One Health" approach, aligning research priorities and funding to enable a rapid, coordinated research response to outbreaks. Its core function is to break down silos and accelerate the availability of research resources and data under FAIR principles.

International Nucleotide Sequence Database Collaboration (INSDC): Comprising DDBJ, EMBL-EBI, and NCBI, the INSDC is the foundational, long-term open data repository for nucleotide sequences. It is the de facto standard for implementing the "Findable" and "Accessible" pillars of FAIR data in genomics. Submission to INSDC ensures persistent identifiers, rich metadata, and global, unrestricted access.

The Nagoya Protocol on Access and Benefit-Sharing (ABS): This international agreement, under the Convention on Biological Diversity, aims to ensure the fair and equitable sharing of benefits arising from the utilization of genetic resources. For outbreak sequencing, it creates a legal framework governing the physical transfer of pathogen samples (Genetic Resources); whether associated sequence data (Digital Sequence Information, DSI) falls within its scope is contested and varies with national legislation. Both can complicate rapid data sharing during emergencies.

Quantitative Data Summary

Table 1: Key Metrics of Global Initiatives Relevant to Outbreak Sequencing Data

| Initiative/Standard | Primary Scope | Key Metric (Status/Size) | Relevance to FAIR Outbreak Data |
|---|---|---|---|
| WHO Biohub | Pathogen Sharing | Pilot phase (operational since 2021) | Enhances Accessibility of physical & associated data resources under agreed terms. |
| GLOPID-R | Research Coordination | >30 member organizations across 6 continents | Promotes Interoperability & Reusability through aligned data standards and priority research calls. |
| INSDC | Sequence Data Repository | Petabyte-scale data; billions of public records | Core infrastructure for Findable, Accessible, Interoperable data via unified submission portals. |
| Nagoya Protocol | Legal Compliance | 139 Parties (as of 2023) | Major factor governing Accessibility terms and potential restrictions on data/sample use. |

Experimental Protocols

Protocol 1: FAIR-Compliant Pathogen Sequence Data Submission to INSDC During an Outbreak

Objective: To rapidly generate and submit high-quality pathogen genome sequence data and associated metadata to the INSDC in a manner compliant with FAIR principles, enabling global research access.

Materials:

- Extracted nucleic acid from pathogen

- Library preparation kit (e.g., Illumina DNA Prep, Oxford Nanopore LSK-114)

- Sequencing platform (Illumina, Oxford Nanopore, PacBio)

- Computational resources for assembly/analysis

- INSDC member submission portal (e.g., NCBI's BankIt or Submission Portal)

- Metadata checklist (isolation source, host, location, date, collection details)

Methodology:

- Sample Preparation & Sequencing: Prepare sequencing library from extracted nucleic acids according to manufacturer’s protocol. Perform sequencing on appropriate platform to achieve desired coverage (e.g., >50x).

- Bioinformatic Processing: Generate a consensus genome from raw reads by reference-based mapping (e.g., BWA) or de novo assembly (e.g., SPAdes). Perform quality control (completeness, coverage, ambiguity).

- FAIR Metadata Curation: Compile all relevant metadata using controlled vocabularies where possible (e.g., GSC’s MIxS packages). Essential fields include: geographic location (latitude/longitude), isolation date, host (NCBI Taxonomy ID), host disease (Disease Ontology ID), sampling source, and collector.

- INSDC Submission:

  a. Access an INSDC submission portal (e.g., NCBI).

  b. Create a new BioProject (overall study) and BioSample (individual sample metadata).

  c. Upload the consensus genome sequence file (FASTA format).

  d. Link the sequence to the specific BioSample and BioProject.

  e. Set a public release date for the data (immediate or delayed).

- Accession Number Acquisition: Upon successful processing, the INSDC will issue unique, persistent accession numbers for the BioProject (PRJNA…), BioSample (SAMN…), and Sequence (AC:…). These must be cited in any publication.
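Once accessions are issued, a quick consistency check can confirm that every local sample ID carries a project, sample, and sequence accession. A sketch assuming simplified accession shapes: the regular expressions are approximations rather than the official INSDC rules, the record layout and local IDs are hypothetical, and the sequence accession is only checked for presence.

```python
import re

# Simplified accession shapes (an assumption; consult INSDC documentation for full rules).
# The letter after PRJ/SAM indicates the issuing archive: N = NCBI, E = ENA, D = DDBJ.
ACCESSION_PATTERNS = {
    "bioproject": re.compile(r"^PRJ[NED][A-Z]\d+$"),  # e.g. PRJNA..., PRJEB..., PRJDB...
    "biosample": re.compile(r"^SAM(N|EA?|D)\d+$"),   # e.g. SAMN..., SAMEA..., SAMD...
    "sequence": None,  # archive-specific format; only presence is checked here
}


def check_links(records: list) -> list:
    """Check that every local sample ID carries well-formed, linked accessions."""
    problems = []
    for rec in records:
        local_id = rec.get("local_id", "<no local_id>")
        for kind, pattern in ACCESSION_PATTERNS.items():
            value = rec.get(kind)
            if not value:
                problems.append(f"{local_id}: missing {kind} accession")
            elif pattern is not None and not pattern.match(value):
                problems.append(f"{local_id}: unexpected {kind} format {value!r}")
    return problems


records = [
    {"local_id": "LAB-001", "bioproject": "PRJNA000001",
     "biosample": "SAMN00000001", "sequence": "SEQ-PLACEHOLDER"},
    {"local_id": "LAB-002", "bioproject": "PRJEB1", "biosample": "XYZ1"},
]
for problem in check_links(records):
    print(problem)
```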

Protocol 2: Framework for Nagoya Protocol Compliance in Cross-Border Outbreak Research

Objective: To establish a legal pathway for acquiring and utilizing pathogen samples and associated data from a country that is a Party to the Nagoya Protocol, ensuring compliance with Access and Benefit-Sharing obligations.

Materials:

- Material Transfer Agreement (MTA) template with ABS clauses

- Prior Informed Consent (PIC) documentation from providing country

- Mutually Agreed Terms (MAT) contract

- Institutional ABS compliance office contacts

Methodology:

- Due Diligence: Prior to sample request, determine the Nagoya Protocol status of the source country. Consult its National Focal Point and ABS Clearing-House to identify specific domestic regulatory requirements.

- Negotiate Mutually Agreed Terms (MAT): Propose MAT to the competent national authority of the provider country. Terms should cover:

  - Scope of use (e.g., non-commercial research, in vitro diagnostics).
  - Type of benefits to be shared (non-monetary: e.g., co-authorship, capacity building, data sharing; monetary: e.g., royalties from commercialization).
  - Reporting and monitoring obligations.

- Secure Prior Informed Consent (PIC): Obtain formal permission from the provider country for access to the specific genetic resource.

- Execute Agreements: Formalize PIC and MAT in a legally binding contract or MTA before physical or digital transfer occurs.

- Track and Report: Maintain detailed records of the sample/data use. Fulfill all benefit-sharing and reporting obligations as stipulated in the MAT. Submit required reports to the provider country and, if applicable, the ABS Clearing-House.

Visualizations

Title: Legal and Data Pathways for Outbreak Genomics

Title: Coordinated Outbreak Genomic Response Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for FAIR-Compliant Outbreak Sequencing Research

| Item | Function in Protocol | Relevance to FAIR/Global Standards |
|---|---|---|
| Standardized Nucleic Acid Extraction Kit (e.g., QIAamp Viral RNA Mini Kit) | Ensures high-quality, reproducible input material for sequencing, critical for data quality. | Enables Interoperable and Reusable data by standardizing the initial analytical chain. |
| Long-Read Sequencing Kit (e.g., Oxford Nanopore LSK-114) | Allows for rapid, real-time sequencing and complete genome assembly in field or lab settings. | Critical for speed in Findable data generation during outbreaks, supported by GLOPID-R priorities. |
| Metadata Spreadsheet Template (e.g., GSC MIxS checklist) | Provides a structured format for capturing essential sample and sequencing metadata. | Core tool for achieving Interoperability; required for INSDC submission and aligns with WHO guidance. |
| INSDC Submission Portal Account (e.g., NCBI login) | The direct pipeline for depositing sequence data and metadata into the permanent, open archive. | Primary mechanism to make data Findable and Accessible with a persistent identifier. |
| ABS Compliance Database Access (e.g., ABS Clearing-House) | Provides legal information on a country's requirements for accessing genetic resources. | Essential for navigating the Accessibility pillar under the legal constraints of the Nagoya Protocol. |

A Step-by-Step Guide to Implementing FAIR Outbreak Sequencing Pipelines

Application Notes

In the context of establishing FAIR (Findable, Accessible, Interoperable, and Reusable) data protocols for outbreak pathogen genomics, the initial stage of sample collection and metadata annotation is foundational. The consistent application of standardized metadata is critical for enabling global data integration, comparative analysis, and rapid insight generation during public health emergencies. This protocol advocates the concurrent use of two complementary standards: the Minimum Information about any (x) Sequence (MIxS) checklists, developed by the Genomic Standards Consortium (GSC), and the infectious disease metadata standard of the Genomic Sequencing Centers for Infectious Diseases (GSCID). MIxS provides a universal core set of descriptors extended by environment-specific packages, while GSCID tailors these requirements specifically to human infectious disease and outbreak investigations, ensuring that epidemiological and clinical context is preserved alongside genomic data. Adhering to these standards at the point of sample collection makes downstream sequence data FAIR-compliant from the outset, maximizing its utility for researchers, public health agencies, and drug development professionals tracking pathogen evolution and transmission dynamics.

Protocols

Protocol 1: Pre-Collection Planning and Kit Preparation

- Define Scope: Determine the appropriate MIxS checklist (e.g., MIMARKS-specimen for marker-gene sequences from a specimen, MISAG for single amplified genomes) and cross-reference it with the latest GSCID core fields for human infectious disease.

- Assemble Collection Kit: Prepare sterile collection swabs, viral transport media (VTM), cryovials, and biohazard bags.

- Prepare Digital Forms: Create a sample tracking sheet or electronic data capture form that includes fields from both MIxS and GSCID. Pre-populate with constants (e.g., collection date, location, collector name) where possible.

- Assign Unique ID: Generate a persistent, unique identifier for each anticipated sample (e.g., `OUTBREAK-2025-HOST-COUNTRY-001`). This ID must link all physical samples, extracted derivatives, and metadata.
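A sketch of an ID generator following the template above, assuming the HOST and COUNTRY components are short uppercase codes (for example, an ISO 3166-1 alpha-3 country code); the function and pattern names are hypothetical.

```python
import re

# Assumed shape: OUTBREAK-YEAR-HOST-COUNTRY-NNN, with uppercase codes
ID_PATTERN = re.compile(r"^[A-Z0-9]+-\d{4}-[A-Z0-9]+-[A-Z]{2,3}-\d{3,}$")


def make_sample_ids(outbreak: str, year: int, host: str, country: str,
                    count: int, start: int = 1) -> list:
    """Generate sequential IDs such as OB1-2025-HUM-GBR-001."""
    parts = [outbreak, str(year), host, country]
    if any(not part or "-" in part for part in parts):
        raise ValueError("ID components must be non-empty and must not contain '-'")
    prefix = "-".join(part.upper() for part in parts)
    return [f"{prefix}-{n:03d}" for n in range(start, start + count)]


def is_valid_id(sample_id: str) -> bool:
    """Check that an ID read back from a label or spreadsheet has the expected shape."""
    return ID_PATTERN.fullmatch(sample_id) is not None


ids = make_sample_ids("ob1", 2025, "hum", "gbr", 3)
print(ids)  # ['OB1-2025-HUM-GBR-001', 'OB1-2025-HUM-GBR-002', 'OB1-2025-HUM-GBR-003']
```

Sequential counters need a single issuing authority to avoid collisions between teams; where that is impractical, a random UUID suffix is an alternative, at the cost of readability.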

Protocol 2: Biological Sample Collection with Metadata Capture

- Sample Acquisition: Collect clinical specimen (e.g., nasopharyngeal swab, blood) using aseptic technique into the prescribed medium. Immediately label the primary container with the assigned Unique ID.

- Core Metadata Annotation (At Point of Care): Simultaneously, record the minimum mandatory fields as per the integrated checklist.

- GSCID/Epidemiological: Host disease status, symptoms, symptom onset date, epidemiological case ID, recent travel history.

- MIxS/Environmental: Collection date and time, geographic location (latitude/longitude), host subject ID (de-identified), sample type (e.g., nasopharyngeal swab).

- Preservation: Place primary sample immediately on dry ice or at recommended storage temperature (e.g., -80°C) and document storage conditions.

Protocol 3: Laboratory Processing and Metadata Enhancement

- Sample Accessioning: Upon receipt in the lab, log the sample into the Laboratory Information Management System (LIMS), confirming the Unique ID.

- Add Laboratory Metadata: Augment the sample record with processing information.

- MIxS/Lab: Nucleic acid extraction method, extraction kit, concentration, volume.

- GSCID/Pathogen: Suspected pathogen, target of amplification, sequencing method planned.

- Derivative Tracking: For any derived material (e.g., extracted RNA, amplified library), create a new record that links back to the primary sample's Unique ID via the "derived from" field in MIxS.
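Derivative tracking comes down to a parent pointer on every record. The sketch below is a hypothetical in-memory model, not a LIMS API. Each derived record names its parent in a derived_from attribute, so any RNA extract or library can be traced back to the primary sample's Unique ID.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class SampleRecord:
    record_id: str
    material: str                       # e.g. "nasopharyngeal swab", "extracted RNA"
    derived_from: Optional[str] = None  # parent record_id; None for a primary sample


class SampleRegistry:
    """In-memory stand-in for LIMS records that enforces derived-from links."""

    def __init__(self) -> None:
        self._records: Dict[str, SampleRecord] = {}

    def add(self, record: SampleRecord) -> None:
        if record.record_id in self._records:
            raise ValueError(f"duplicate ID: {record.record_id}")
        # A parent must already exist, which also rules out cycles.
        if record.derived_from is not None and record.derived_from not in self._records:
            raise ValueError(f"unknown parent: {record.derived_from}")
        self._records[record.record_id] = record

    def lineage(self, record_id: str) -> List[str]:
        """Return IDs from this record back to its primary sample."""
        chain: List[str] = []
        current: Optional[str] = record_id
        while current is not None:
            chain.append(current)
            current = self._records[current].derived_from
        return chain
```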

Protocol 4: Metadata Validation and Submission

- Checklist Validation: Before submitting sequence data, run the metadata through a validation tool to ensure all mandatory fields for the selected packages are complete and correctly formatted. Suitable tools include the submitting repository's checklist validator or a validator generated from the MIxS LinkML schema.

- Controlled Vocabulary Compliance: Verify terms against relevant ontologies (e.g., NCBI Taxonomy ID for organism, ENVO for environmental terms, SNOMED CT for clinical terms).

- Submission: Submit the validated metadata sheet alongside the raw sequence files to a public repository. This can be an INSDC member (ENA, NCBI GenBank/SRA, or DDBJ) or a pathogen-specific resource such as GISAID. Attach the metadata using the repository's specified template, which often incorporates MIxS.
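Part of the controlled-vocabulary check can be automated before any ontology lookup. The sketch below confirms that ontology-backed fields hold a compact identifier (CURIE), such as NCBITaxon:2697049, with the expected prefix. The field names and prefix mapping are assumptions for illustration. The check covers syntax only, so whether a term actually exists still needs an ontology lookup service such as OLS.

```python
import re
from typing import Dict, List

# Hypothetical mapping of metadata fields to the ontology prefix each should use.
EXPECTED_PREFIX: Dict[str, str] = {
    "organism_taxid": "NCBITaxon",
    "isolation_source_term": "UBERON",
    "env_medium_term": "ENVO",
}

# PREFIX:LOCAL_ID with no whitespace, e.g. "UBERON:0001729".
CURIE = re.compile(r"^([A-Za-z][A-Za-z0-9_]*):(\S+)$")


def check_ontology_terms(record: Dict[str, str]) -> List[str]:
    """Return a list of problems; an empty list means every present term is well-formed."""
    problems: List[str] = []
    for field_name, prefix in EXPECTED_PREFIX.items():
        value = record.get(field_name, "").strip()
        if not value:
            continue  # completeness is checked by the checklist validator
        match = CURIE.match(value)
        if match is None:
            problems.append(f"{field_name}: '{value}' is not a PREFIX:ID term")
        elif match.group(1) != prefix:
            problems.append(f"{field_name}: expected {prefix}, got {match.group(1)}")
    return problems
```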

Data Presentation

Table 1: Core Mandatory Fields from Integrated MIxS-GSCID Checklist for Outbreak Isolates

| Field Name | Standard Source | Description | Example Value | Required Ontology/Term |

|---|---|---|---|---|

| investigation_type | MIxS | Nature of study | pathogen-associated | ENA: pathogen-associated |

| project_name | MIxS | Study identifier | 2025_RespVirus_Surveillance | n/a |

| lat_lon | MIxS | Geographic coordinates | 45.5017 N, 73.5673 W | decimal degrees |

| collection_date | MIxS | Time of sample collection | 2025-03-15 | ISO 8601 |

| host_subject_id | MIxS | De-identified host identifier | Patient_Alpha_001 | n/a |

| host_disease_stat | GSCID | Health status of host | Symptomatic | n/a |

| host_sex | MIxS | Host sex | female | PATO: female |

| host_age | MIxS | Host age in years | 45 | numeric |

| suspected_pathogen | GSCID | Suspected causative agent | SARS-CoV-2 | NCBI TaxID: 2697049 |

| isolation_source | MIxS | Body site of isolation | nasopharyngeal swab | UBERON: 0001729 |

| seq_meth | MIxS | Sequencing methodology | Illumina NovaSeq 6000 | n/a |

Experimental Protocols

Detailed Methodology: Viral RNA Extraction for Sequencing

Principle: To obtain high-quality, inhibitor-free viral RNA from clinical swab media for next-generation sequencing library preparation.

Reagents: QIAamp Viral RNA Mini Kit (Qiagen; supplies Buffer AVL, carrier RNA, and Buffers AW1, AW2, and AVE), ethanol (96–100%), nuclease-free water.

Procedure:

- Lysis: Pipette 140µl of prepared VTM sample into a 1.5ml microcentrifuge tube. Add 560µl of Buffer AVL containing carrier RNA. Mix by pulse-vortexing for 15s. Incubate at room temperature (15–25°C) for 10 min.

- Precipitation: Briefly centrifuge the tube. Add 560µl of ethanol (96–100%) to the lysate. Mix immediately by pulse-vortexing for 15s. After mixing, briefly centrifuge again.

- Binding: Apply 630µl of the lysate-ethanol mixture to the QIAamp Mini column. Centrifuge at 6000 x g for 1 min. Discard flow-through and repeat with remaining mixture.

- Wash 1: Add 500µl Buffer AW1 to the column. Centrifuge at 6000 x g for 1 min. Place column in a clean 2ml collection tube. Discard flow-through.

- Wash 2: Add 500µl Buffer AW2 to the column. Centrifuge at full speed (20,000 x g) for 3 min. Discard flow-through and collection tube.

- Elution: Place the column in a new, labeled 1.5ml microcentrifuge tube. Apply 60µl of Buffer AVE (or nuclease-free water) to the center of the column membrane. Incubate at room temperature for 1 min. Centrifuge at 6000 x g for 1 min. The eluate contains the viral RNA. Quantify using a fluorometric method (e.g., Qubit RNA HS Assay).
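The volumes in the lysis, precipitation, and binding steps add up consistently. The lysate-ethanol mixture totals 1,260 µl, which is why it goes onto the column in two 630 µl loads:

```latex
V_{\text{lysate+ethanol}} = \underbrace{140}_{\text{sample}} + \underbrace{560}_{\text{Buffer AVL}} + \underbrace{560}_{\text{ethanol}} = 1260\ \mu\text{l} = 2 \times 630\ \mu\text{l}
```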

Visualizations

Title: Outbreak Sample & Metadata Workflow

Title: Metadata Standards Integration for FAIR Data

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Outbreak Sample Processing

| Item | Function & Rationale |

|---|---|

| Viral Transport Media (VTM) | Stabilizes viral nucleic acids and preserves pathogen viability during sample transport from clinic to lab. |

| Nucleic Acid Extraction Kit (e.g., QIAamp Viral RNA Mini Kit) | Isolates high-purity, inhibitor-free viral RNA/DNA from complex clinical matrices, essential for sequencing. |

| RNase Inhibitors | Protects labile RNA genomes from degradation during extraction and library preparation steps. |

| Ultra-Low Temperature Freezer (-80°C) | Provides long-term, stable storage for original clinical specimens and extracted nucleic acids. |

| Laboratory Information Management System (LIMS) | Tracks sample provenance, processing steps, and links physical samples to digital metadata. |

| Ontology Lookup Service (e.g., OLS, NCBI Taxonomy) | Ensures metadata terms use standardized, controlled vocabularies for interoperability. |

| MIxS/GSCID Validator Tool | Software that checks metadata sheets for completeness and format prior to public submission. |

Within the framework of FAIR (Findable, Accessible, Interoperable, Reusable) data protocols for outbreak sequencing research, Stage 2 is critical. It encompasses the generation of raw, unprocessed sequencing data and its immediate, validated submission to international nucleotide archival repositories. This phase ensures data availability is decoupled from later, often lengthy, analysis and curation stages, enabling rapid global response during a public health crisis.

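Three repositories hold most of the world's raw sequence data. SRA and ENA are members of the International Nucleotide Sequence Database Collaboration (INSDC), together with DDBJ. INSDC members exchange data daily, so a run submitted to one becomes retrievable from the others. GSA is a major national archive but is not an INSDC member, so its data are not automatically mirrored to SRA or ENA.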

Table 1: Comparison of Major Public Sequence Repositories

| Feature | SRA (NCBI) | ENA (EMBL-EBI) | GSA (China National Center for Bioinformation) |

|---|---|---|---|

| Full Name | Sequence Read Archive | European Nucleotide Archive | Genome Sequence Archive |

| Primary Jurisdiction | International, NIH-funded | International, EMBL-member states | Mainland China |

| Mandatory Submission | For NIH-funded research (per NIH data-sharing policy) | Per funder and journal data-sharing policies | For research conducted in China |

| Accepted Raw Formats | FASTQ, BAM, CRAM, PacBio HDF5, ONT FAST5 | FASTQ, BAM, CRAM, PacBio BAM, ONT FAST5 | FASTQ, BAM, CRAM |

| Metadata Standard | INSDC (SRA XML) | INSDC (Webin XML/JSON) | GSA-specific, INSDC-like (GSA JSON) |

| Accession Prefix | SRR, SRX, SRS, SRP | ERR, ERX, ERS, ERP | CRR, CRX, CRA (BioSample SAMC, BioProject PRJCA) |

| Immediate Release Policy | Yes, with "hold until date" option | Yes, with "hold until date" option | Yes, with specified release date |

| Typical Processing Time | 1-3 business days | 1-2 business days | 2-5 business days |

Protocol: End-to-End Workflow for Raw Data Deposition

This protocol details the steps from sequencer output to successful repository accession.

Pre-Submission: Data and Metadata Preparation

Objective: To generate validated sequence files and structured metadata. Materials: Sequencing platform (Illumina, Oxford Nanopore, PacBio), high-performance computing cluster or server, metadata spreadsheet template.

Procedure:

- Demultiplexing: If required, use platform-specific software (e.g., bcl2fastq for Illumina, guppy_barcoder for ONT) to generate per-sample FASTQ files.

- Quality Assessment: Run FastQC, or NanoPlot for ONT, on raw FASTQs to confirm expected read quality, length, and yield.

- File Integrity Check: Generate MD5 checksums for all files to be submitted.
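The checksum step can be run with md5sum, or from Python when it is part of a larger submission script. This sketch reads each file in 1 MiB chunks, so multi-gigabyte FASTQs never load fully into memory. It writes lines in the "checksum, two spaces, filename" layout that md5sum -c reads. The function names are illustrative.

```python
import hashlib
from pathlib import Path
from typing import Iterable, List


def md5_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hex MD5 digest of a file, read in chunks to bound memory use."""
    digest = hashlib.md5()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_md5_manifest(files: Iterable[Path], manifest: Path) -> List[str]:
    """Write 'checksum  filename' lines (md5sum-compatible layout) and return them."""
    lines = [f"{md5_of(p)}  {p.name}" for p in sorted(files)]
    manifest.write_text("\n".join(lines) + "\n")
    return lines
```

Running md5sum -c on the manifest, from the directory holding the files, re-verifies them after any copy or transfer.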

- Metadata Compilation: Download the repository's metadata template. Complete all fields, critically including:

- Sample: organism, isolate, geographic location, collection date.

- Experiment: library strategy (AMPLICON, WGS), instrument model.

- Study: descriptive title, relevant outbreak identifier, principal investigator.

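Submission to SRA via the Submission Portal with Command-Line Upload

Objective: To upload data files from the command line and complete the submission in NCBI's Submission Portal. The SRA Toolkit's prefetch and fasterq-dump retrieve data from SRA and are not submission tools. They are, however, useful for confirming that released runs download correctly. Reagents: Aspera Command-Line Client (ascp; optional, for faster transfer) or an FTP client.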

Procedure:

- Create Submission Directory: Gather the FASTQ files and their checksum list in a single local directory for upload.

- Prepare SRA Metadata: Complete the SRA metadata spreadsheet template in the NCBI Submission Portal. High-volume programmatic submitters can use XML-based submission instead.

- Validate Metadata: Use the portal's validation step before transferring files.

- Upload Files: Use Aspera (ascp) or FTP to transfer files to the designated NCBI secure upload area.

- Finalize Submission: In the SRA submission portal, link the uploaded files to the metadata and finalize. Record the returned BioProject (PRJNA...), SRA study (SRP...), and run (SRR...) accessions.
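For large batches, the SRA metadata spreadsheet can be generated from a sample list rather than typed by hand. The sketch below writes a tab-separated sheet with one row per sample. The column names are assumptions modelled on NCBI's SRA metadata template, so confirm them against the template you download. The function name is hypothetical.

```python
import csv
import io
from typing import Dict, List

# Assumed column names modelled on NCBI's SRA metadata spreadsheet;
# confirm them against the template you download before submitting.
SRA_COLUMNS = [
    "sample_name", "library_ID", "title", "library_strategy", "library_source",
    "library_selection", "library_layout", "platform", "instrument_model",
    "design_description", "filetype", "filename", "filename2",
]
OPTIONAL_COLUMNS = {"filename2"}  # only needed for paired-end libraries


def sra_metadata_tsv(samples: List[Dict[str, str]], **shared: str) -> str:
    """Render one TSV row per sample; `shared` fills columns common to every row."""
    out = io.StringIO()
    writer = csv.DictWriter(out, fieldnames=SRA_COLUMNS, delimiter="\t", lineterminator="\n")
    writer.writeheader()
    for sample in samples:
        row = {**shared, **sample}  # per-sample values override shared defaults
        missing = [c for c in SRA_COLUMNS if c not in OPTIONAL_COLUMNS and not row.get(c)]
        if missing:
            raise ValueError(f"{row.get('sample_name', '?')}: missing {missing}")
        writer.writerow({c: row.get(c, "") for c in SRA_COLUMNS})
    return out.getvalue()
```

Values shared by a whole sequencing run, such as library_strategy="AMPLICON" or the instrument model, are passed once as keyword arguments. Only the per-sample identifiers and filenames vary.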

Submission to ENA via Webin-CLI

Objective: To use EMBL-EBI's comprehensive command-line interface for validated submission. Reagents: Webin-CLI tool (Java-based).

Procedure:

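- Install and Authenticate: Download the Webin-CLI Java archive (JAR) from EMBL-EBI. Supply your Webin submission account credentials when running it.

- Prepare Manifest File: Create a manifest.txt file specifying the files, metadata, and submission context.

- Validate and Submit: Run Webin-CLI with the -validate option and correct any reported errors. Then rerun it with -submit.

- Receive Accessions: On success, the CLI returns experiment (ERX) and run (ERR) accessions. The study and the sample (ERS) must be registered beforehand and referenced in the manifest.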
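The manifest is a plain-text file of field/value pairs, one per line. The sketch below writes one for a reads submission, using the field names STUDY, SAMPLE, NAME, INSTRUMENT, LIBRARY_SOURCE, LIBRARY_SELECTION, LIBRARY_STRATEGY, and FASTQ as described for the Webin-CLI reads context. Which fields are mandatory is an assumption here, and the accessions in the test data are placeholders. Check both against the current Webin-CLI documentation.

```python
from typing import Dict, List

# Fields treated as mandatory here are an assumption based on the Webin-CLI
# reads context; check the current Webin-CLI documentation.
REQUIRED_FIELDS = ["STUDY", "SAMPLE", "NAME", "INSTRUMENT",
                   "LIBRARY_SOURCE", "LIBRARY_SELECTION", "LIBRARY_STRATEGY"]


def webin_reads_manifest(fields: Dict[str, str], fastq_files: List[str]) -> str:
    """Return manifest text: one 'FIELD<TAB>value' line per entry, then FASTQ lines."""
    missing = [name for name in REQUIRED_FIELDS if not fields.get(name)]
    if missing:
        raise ValueError(f"manifest is missing {missing}")
    if len(fastq_files) not in (1, 2):
        raise ValueError("expected one (single-end) or two (paired-end) FASTQ files")
    lines = [f"{key}\t{value}" for key, value in fields.items()]
    lines += [f"FASTQ\t{name}" for name in fastq_files]  # FASTQ is repeated for paired files
    return "\n".join(lines) + "\n"
```

Write the returned text to manifest.txt and point Webin-CLI at it. Any field error then surfaces in the -validate run rather than at submission.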

Diagrams

Stage 2 Submission Workflow

Title: Raw Data Deposition Workflow

INSDC Repository Synchronization

Title: INSDC Global Data Synchronization

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Raw Data Deposition

| Item | Function in Stage 2 | Example/Format |

|---|---|---|

| Sequencing Platform Software | Primary demultiplexing and basecalling. Converts raw signals to sequence reads. | Illumina DRAGEN, Oxford Nanopore Guppy, PacBio SMRT Link |

| Quality Control Tools | Provides initial assessment of read quality, length, and potential contaminants to flag issues prior to submission. | FastQC, NanoPlot (for ONT), MinIONQC |

| Checksum Generator | Creates unique file fingerprints (MD5/SHA256) to verify file integrity before, during, and after transfer. | md5sum, sha256sum (Linux commands) |

| Metadata Spreadsheet Template | Repository-provided template ensuring all required descriptive, administrative, and technical fields are populated correctly. | NCBI SRA metadata template, ENA Webin spreadsheet |

| Command-Line Submission Tools | Enables automated, scriptable, and high-throughput submission of data and metadata, reducing manual portal errors. | ENA Webin-CLI, Aspera ascp (the NCBI SRA Toolkit's prefetch and fasterq-dump are download tools, useful for post-release checks) |

| Data Transfer Client | High-speed, secure file transfer protocol for uploading large sequence files (TB scale) to repository servers. | Aspera Connect, FTP client (e.g., lftp), or HTTPS |

| Validation Software | Performs pre-submission checks on file formats, metadata completeness, and consistency, preventing submission failures. | Built into Webin-CLI, SRA validation in portal |

Within the thesis framework of FAIR data protocols for outbreak sequencing research, this stage addresses the computational reproducibility and provenance tracking of analytical workflows. The use of standardized workflow languages like Common Workflow Language (CWL) and Nextflow, combined with registry services like the GA4GH Tool Registry Service (TRS), is critical for ensuring that genomic analyses of pathogens are Findable, Accessible, Interoperable, and Reusable.

Core Technology Comparison

Table 1: Comparison of Workflow Management Systems for Outbreak Bioinformatics

| Feature | Common Workflow Language (CWL) | Nextflow | Snakemake |

|---|---|---|---|

| Primary Language | YAML/JSON | DSL (Groovy-based) | Python-based DSL |

| Execution Model | Declarative, describes inputs/outputs | Imperative, dataflow oriented | Rule-based |

| Portability | High (spec-focused, many runners) | High (container-focused) | Medium-High |

| Provenance Logging | Via CWL-prov, RO-Crate | Built-in timeline/trace report | Built-in report |

| GA4GH TRS Support | Native descriptors registrable | Native descriptor type (NFL), registrable on e.g. Dockstore | Limited native support |

| Key Strength in Outbreak Context | Standardization for cross-lab sharing | Scalability on clusters/cloud | Integration with Python ecosystem |

| Adoption in Public Health | Growing (e.g., run by Toil and Arvados; registrable on Dockstore) | High (e.g., nf-core/viralrecon, ONT EPI2ME workflows) | Common in academic pipelines |

Application Notes

Implementing FAIR Workflows with CWL

CWL provides a vendor-neutral description of command-line tools and workflows. For outbreak sequencing, a CWL "CommandLineTool" descriptor for a variant-calling tool such as bcftools (mpileup and call) records the exact version, parameters, and base Docker/Singularity image. A CWL "Workflow" descriptor chains these tools (e.g., quality control → alignment → variant calling). Provenance captured via CWL-prov produces a detailed record of each execution, linking the input sequence reads (with SRA accessions) to the final VCF file. This record is essential for auditability during an outbreak investigation.
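The sketch below makes the descriptor idea concrete. It builds a minimal CWL v1.2 CommandLineTool for the bcftools call step as a Python dictionary and serializes it to JSON. CWL documents are YAML, and JSON parses as YAML. The container tag is the one listed in this article's toolkit table, while the input and output names are hypothetical. A real descriptor would usually expose the caller options as inputs rather than fixing them.

```python
import json
from typing import Any, Dict


def bcftools_call_tool(image: str) -> Dict[str, Any]:
    """Minimal CWL v1.2 CommandLineTool for: bcftools call -m -v -O z -o calls.vcf.gz <pileup>."""
    return {
        "cwlVersion": "v1.2",
        "class": "CommandLineTool",
        "baseCommand": ["bcftools", "call"],
        # Pinning the container image records the exact software version used.
        "requirements": [{"class": "DockerRequirement", "dockerPull": image}],
        # Fixed options: multiallelic caller (-m), variant sites only (-v), bgzipped VCF output.
        "arguments": ["-m", "-v", "-O", "z", "-o", "calls.vcf.gz"],
        "inputs": {
            "pileup": {"type": "File", "inputBinding": {"position": 1}},
        },
        "outputs": {
            "variants": {"type": "File", "outputBinding": {"glob": "calls.vcf.gz"}},
        },
    }


def to_cwl_text(tool: Dict[str, Any]) -> str:
    # JSON is valid YAML, so this text can be saved directly as a .cwl file.
    return json.dumps(tool, indent=2)
```

Because the arguments carry the default position 0 and the pileup input is at position 1, the generated command line is bcftools call -m -v -O z -o calls.vcf.gz followed by the input file.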

Scalable Analysis with Nextflow

Nextflow enables scalable and reproducible pipelines, which is crucial for rapidly analyzing thousands of pathogen genomes. Pipelines like nf-core/viralrecon exemplify this: it is a community-built pipeline for viral genome assembly and variant calling, widely used for SARS-CoV-2. Nextflow's built-in provenance includes an execution trace and timeline report detailing when and where each process ran, its duration, and its resource consumption. This supports the "R" (Reusable) and "I" (Interoperable) FAIR principles by allowing the same pipeline to run on diverse compute infrastructures (local HPC, AWS, Google Cloud) with consistent results.

Workflow Discovery and Sharing via GA4GH TRS

The GA4GH TRS provides a standardized API for registering, discovering, and launching bioinformatics tools and workflows. A public health lab can register its validated CWL or Nextflow outbreak analysis pipeline on a TRS instance (e.g., Dockstore). Researchers globally can then discover, pull, and execute the exact versioned workflow, ensuring methodological consistency across different groups analyzing the same outbreak. TRS entries include versioning, authors, and descriptors, directly supporting FAIR principles for workflows themselves.
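TRS v2 exposes each workflow version's descriptor at a predictable path under the registry's base URL: tools/{id}/versions/{version_id}/{type}/descriptor. The sketch below builds that URL with the standard library. Tool IDs such as Dockstore's #workflow/... form contain reserved characters and must be percent-encoded. The example ID and version in the test are illustrative, not confirmed Dockstore entries.

```python
import json
import urllib.parse
import urllib.request
from typing import Any, Dict

TRS_BASE = "https://dockstore.org/api/ga4gh/trs/v2"


def trs_descriptor_url(tool_id: str, version: str, descriptor_type: str,
                       base: str = TRS_BASE) -> str:
    """Build {base}/tools/{id}/versions/{version_id}/{type}/descriptor (GA4GH TRS v2)."""
    quoted_id = urllib.parse.quote(tool_id, safe="")  # '#' and '/' must be encoded
    quoted_version = urllib.parse.quote(version, safe="")
    return f"{base}/tools/{quoted_id}/versions/{quoted_version}/{descriptor_type}/descriptor"


def fetch_descriptor(url: str) -> Dict[str, Any]:
    """GET the descriptor; TRS returns JSON for the plain descriptor types (CWL, NFL, ...)."""
    with urllib.request.urlopen(url, timeout=30) as response:
        return json.load(response)
```

The fetched JSON should include the descriptor content and, where the registry provides one, a checksum. Recording that checksum alongside results is one way to pin exactly which workflow version produced them.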

Detailed Protocols

Protocol: Creating a Reproducible Variant Calling Workflow with CWL and TRS Registration

Objective: To create a reproducible, TRS-registrable workflow for calling variants from viral sequencing data.

Materials:

- Input Data: Paired-end FASTQ files from Illumina sequencing of a viral isolate.

- Software: cwltool (CWL reference runner), docker.

- Tools: fastp (v0.23.2), bwa (v0.7.17), samtools (v1.15), bcftools (v1.15).

- Reference Genome: NCBI RefSeq FASTA file for the target virus.

Method:

- Tool Definition: Write a CWL CommandLineTool descriptor for each bioinformatics tool (e.g., fastp.cwl, bwa-mem.cwl). Each descriptor specifies the Docker container image, base command, input parameters (e.g., --threads), inputs (e.g., reads_fastq), and outputs (e.g., trimmed_fastq).

- Workflow Composition: Write a CWL Workflow descriptor (variant-calling-workflow.cwl) that defines the following steps. (a) quality_control runs fastp.cwl on the input FASTQs. (b) alignment runs bwa-mem.cwl using the trimmed reads and the reference genome. (c) sort_index runs samtools sort.cwl and samtools index.cwl on the alignment output. (d) variant_calling runs bcftools mpileup.cwl and bcftools call.cwl on the sorted BAM.

- Execution: Run the workflow with cwltool. Pass the workflow descriptor, an inputs (job) file, and the --provenance option with an output directory.

- Provenance Generation: The --provenance flag generates a PROV-O compliant research object that packages workflow outputs, parameters, and the execution trace.

- TRS Registration: Package the CWL files and a Dockerfile in a GitHub repository. Register the repository on a TRS-compliant platform such as Dockstore by linking the GitHub repo. Then tag a release (e.g., 1.0.0) so the registered workflow descriptor is versioned.
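Wrapping the execution step in a short script ensures the job file and provenance directory are created the same way for every run. In this sketch, the workflow input names (reads_1, reads_2, reference) are hypothetical placeholders and must match whatever the Workflow descriptor declares. cwltool's --provenance option takes the directory where the research object is written.

```python
import json
import subprocess
from pathlib import Path
from typing import List


def write_job_file(path: Path, reads_1: str, reads_2: str, reference: str) -> None:
    """Write a CWL job (inputs) file; the input names are hypothetical placeholders."""
    job = {
        "reads_1": {"class": "File", "path": reads_1},
        "reads_2": {"class": "File", "path": reads_2},
        "reference": {"class": "File", "path": reference},
    }
    path.write_text(json.dumps(job, indent=2))


def cwltool_command(workflow: str, job: str, provenance_dir: str) -> List[str]:
    """Build the cwltool invocation; --provenance saves a research object to provenance_dir."""
    return ["cwltool", "--provenance", provenance_dir, workflow, job]


def run_workflow(workflow: str, job: str, provenance_dir: str) -> None:
    # check=True raises CalledProcessError if the workflow run fails.
    subprocess.run(cwltool_command(workflow, job, provenance_dir), check=True)
```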

Protocol: Deploying a Nextflow Outbreak Pipeline from a TRS Endpoint

Objective: To launch a versioned, containerized Nextflow pipeline for viral genome analysis, retrieved from a GA4GH TRS.

Materials:

- Compute: A system with Nextflow (v22.10+) and Docker/Podman installed.

- Data: A directory of FASTQ files and a sample sheet CSV.

- TRS Endpoint: URL of a TRS API (e.g., Dockstore: https://dockstore.org/api/ga4gh/trs/v2).

Method:

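- Pipeline Discovery: Query the TRS endpoint to find the target pipeline (e.g., nf-core/viralrecon). Note its id, the desired version, and the descriptor type (NFL for Nextflow).

- Pipeline Launch: Use Nextflow's run command with the pipeline's source repository and the exact version recorded in the TRS entry (for example, nextflow run nf-core/viralrecon -r <version> -profile docker). Specify inputs via a Nextflow configuration file or the command line.

- Provenance Capture: Enable tracing and reporting with the -with-trace and -with-report options or the equivalent configuration. nf-core pipelines enable both by default and write them to a pipeline_info/ folder in the output directory. Nextflow then produces a trace.txt report (a tab-separated execution log) and a report.html file with resource usage, timeline, and command lines for every process.

- FAIR Output: The results/ directory contains the analysis outputs, and the Nextflow reports provide computational provenance. The pipeline's TRS entry records the workflow's identity and version.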
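Because trace.txt is tab-separated with a header row, it can be checked programmatically before results are shared. This sketch assumes the default name and status columns. It treats COMPLETED and CACHED tasks as successful and lists everything else (e.g., FAILED or ABORTED) for review.

```python
import csv
from collections import Counter
from typing import Any, Dict, TextIO


def summarize_trace(handle: TextIO) -> Dict[str, Any]:
    """Summarise a Nextflow trace file (tab-separated, one row per task)."""
    rows = list(csv.DictReader(handle, delimiter="\t"))
    statuses = Counter(row["status"] for row in rows)
    # CACHED tasks were reused from a previous run (-resume) and count as successful.
    not_completed = [row["name"] for row in rows if row["status"] not in ("COMPLETED", "CACHED")]
    return {"tasks": len(rows), "status": dict(statuses), "not_completed": not_completed}
```

Usage: with open("trace.txt") as handle: summary = summarize_trace(handle). For nf-core runs, point it at the timestamped trace file in pipeline_info/ instead.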

Visualization

Diagram 1: FAIR Outbreak Analysis Workflow Lifecycle

Diagram 2: Nextflow Execution Provenance Data Model

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Reproducible Outbreak Bioinformatics

| Item | Function in Workflow Provenance | Example/Note |

|---|---|---|

| CWL Descriptor Files (.cwl, .yml) | Declarative, standardized description of tools and workflow logic, enabling portability. | bwa-mem.cwl defines the Docker image, command, inputs, and outputs for BWA-MEM. |

| Nextflow Pipeline Script (.nf) | Defines the dataflow and processes of a scalable, containerized pipeline. | The main main.nf script in nf-core/viralrecon. |

| Container Images (Docker/Singularity) | Encapsulates all software dependencies, guaranteeing identical execution environments. | quay.io/biocontainers/bcftools:1.15--hfe0f4f8_2 |

| GA4GH TRS API Endpoint | Serves as a versioned registry for discovering and launching workflow descriptors. | Dockstore API: https://dockstore.org/api/ga4gh/trs/v2 |

| Provenance Log File | Immutable record of a workflow run, linking inputs, parameters, software, and outputs. | CWL-prov research_object/, Nextflow trace.txt and report.html. |

| Workflow Runner | Software that interprets and executes the workflow descriptor. | cwltool, toil, nextflow, cromwell. |

| Sample Metadata Sheet (.csv, .tsv) | Structured sample information linking biological context to sequencing files, critical for FAIR outputs. | Must include sample_id, sequencing_run, collection_date, and geographic_location. |

Application Notes: Database Selection and Comparative Analysis

Within FAIR data protocols for outbreak sequencing research, selecting the appropriate public database for sharing interpreted genomic data is critical for ensuring data interoperability and reuse. The three primary repositories—GISAID, NCBI Virus, and BV-BRC—serve distinct yet complementary functions. The choice depends on data type, intended use, and community standards.

GISAID (Global Initiative on Sharing All Influenza Data) is the de facto standard for sharing consensus genome assemblies and associated metadata during acute viral outbreaks, most notably for influenza and SARS-CoV-2. It operates under a mechanism that recognizes and protects data contributors' rights, which has been pivotal for rapid global collaboration. Data access requires registration and agreement to its terms.

NCBI Virus offers a broad, open-data repository for viral sequence data (raw reads, assemblies, and annotated sequences) integrated with the broader NCBI toolkit (e.g., SRA, GenBank). It supports FAIR principles through rich metadata standards and programmatic access via APIs.

BV-BRC (Bacterial and Viral Bioinformatics Resource Center) merges the former PATRIC, IRD, and ViPR resources. It is a comprehensive analysis platform that accepts both raw and assembled data, with a strong emphasis on integrated computational tools for comparative genomics and lineage assignment.

The following table summarizes the key quantitative and qualitative characteristics of each platform, based on current public data and access policies.

Table 1: Comparative Analysis of Public Databases for Viral Outbreak Data Sharing

| Feature | GISAID | NCBI Virus | BV-BRC |

|---|---|---|---|

| Primary Focus | Outbreak response for select pathogens (e.g., Influenza, SARS-CoV-2) | Comprehensive archive for all viral sequences | Integrated bioinformatics resource for bacterial & viral pathogens |

| Data Types Accepted | Consensus genome assemblies, metadata | Raw reads (SRA), assemblies, annotated genomes | Raw reads, assemblies, annotated genomes, expression data |

| Access Model | Controlled-access (registration, terms) | Open-access | Open-access |

| Key Analytical Tools | EpiCoV, EpiFlu, lineage reports (Pango, clades) | BLAST, variation analysis, sequence alignment | Genome annotation, comparative pathway/genomics, phylogenetic tree building |

| Metadata Standards | GISAID-specific curated metadata fields | INSDC / SRA metadata standards | BV-BRC standardized templates |

| FAIR Alignment | High on Reuse (clear terms), variable on machine Accessibility | High on Accessibility and Interoperability | High on Interoperability and Reusability (integrated tools) |

| Typical Submission Volume (Pathogen Example) | >16 million SARS-CoV-2 sequences | >10 million viral sequences across all taxa | Millions of bacterial/viral genomes & associated data |

| Unique Identifier System | Epi-Isolate ID (EPI_ISL_#) | GenBank/SRA accession (e.g., MN908947) | BV-BRC ID (e.g., 201674.3) |

| Programmatic API Access | Limited, web-based queries | Full API (Entrez) available | Comprehensive API available |

Experimental Protocols

Protocol 1: Submitting a SARS-CoV-2 Consensus Genome Assembly and Lineage Data to GISAID

This protocol details the steps for submitting a viral consensus sequence (FASTA) and associated metadata to GISAID, a critical final step in an outbreak sequencing workflow adhering to FAIR principles.

I. Materials & Pre-Submission Requirements

- Research Reagent Solutions:

- Final, High-Quality Consensus Sequence: A SARS-CoV-2 genome assembly in FASTA format (≥29,000 bp, low ambiguity).

- Curated Metadata Spreadsheet: Compiled according to GISAID's EpiCoV submission template.

- GISAID User Account: Registered and approved contributor account.

- Bioinformatics Tool: Nextclade (or Pangolin) for preliminary lineage/clade assignment.

- Validation Software: Basic local FASTA validator (e.g., seqkit stats).
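Before pasting a sequence into the portal, its length and ambiguity can be checked locally, much as seqkit stats would report. The sketch uses the ≥29,000 bp length from the materials list. The 5% cap on non-ACGT characters is an illustrative assumption, not a GISAID rule, and the function names are hypothetical.

```python
from typing import Dict, Iterator, List, Optional, TextIO, Tuple


def read_fasta(handle: TextIO) -> Iterator[Tuple[str, str]]:
    """Yield (header, sequence) pairs from a FASTA stream."""
    header: Optional[str] = None
    chunks: List[str] = []
    for raw in handle:
        line = raw.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                yield header, "".join(chunks)
            header, chunks = line[1:].strip(), []
        else:
            chunks.append(line.upper())
    if header is not None:
        yield header, "".join(chunks)


def consensus_qc(handle: TextIO, min_length: int = 29_000,
                 max_ambiguous_fraction: float = 0.05) -> Dict[str, Dict[str, object]]:
    """Report length and non-ACGT fraction per record against illustrative thresholds."""
    report: Dict[str, Dict[str, object]] = {}
    for header, seq in read_fasta(handle):
        ambiguous = sum(1 for base in seq if base not in "ACGT")  # N, IUPAC codes, gaps
        fraction = ambiguous / len(seq) if seq else 1.0
        report[header] = {
            "length": len(seq),
            "ambiguous_fraction": round(fraction, 4),
            "passes": len(seq) >= min_length and fraction <= max_ambiguous_fraction,
        }
    return report
```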

II. Methodology

- Sequence & Metadata Finalization:

- Run the final consensus FASTA file through Nextclade (clade assignment) and/or Pangolin (lineage assignment). Record the results.

- Complete the GISAID metadata Excel template meticulously. Mandatory fields include: virus name, collection date, location, submitting lab, sequencing technology, and assembly method.

GISAID Portal Submission:

- Log into the GISAID EpiCoV submission portal.

- Select "New Submission" and choose "Virus." Select "Coronavirus" and then "SARS-CoV-2."

- Follow the step-by-step web form, which mirrors the metadata spreadsheet. Paste or upload the FASTA sequence directly into the provided field.

- Input the preliminary lineage/clade data from Nextclade/Pangolin in the relevant field.

Validation and Confirmation:

- The GISAID system will perform automated checks on sequence length, ambiguous bases, and metadata completeness.

- Review the summary page carefully. Submit the data.

- Upon successful processing, you will receive an email with the provisional EPI_ISL identifier. Full accession is provided after final curation.

Post-Submission:

- Record the EPI_ISL ID in your local laboratory information management system (LIMS).

- Cite this identifier in any subsequent publications or reports.

Protocol 2: Depositing Raw Sequence Reads and Assembled Genome to NCBI Virus via SRA and GenBank

This protocol describes a parallel submission pathway suitable for broader viral pathogen data, contributing raw reads to the Sequence Read Archive (SRA) and the assembled genome to GenBank, maximizing machine accessibility.

I. Materials & Pre-Submission Requirements

- Research Reagent Solutions:

- Raw Sequencing Reads: Compressed FASTQ files (R1 and R2).

- Assembled Genome: Final consensus sequence in FASTA format.

- Annotation File (Optional): GFF3 file of genome annotations.

- NCBI User Account: With appropriate submission privileges.

- Metadata File: SRA metadata template (.tsv or .xlsx).

- Submission Software: The web-based NCBI Submission Portal. The SRA Toolkit's prefetch and fasterq-dump are download tools and are not required for submission.

II. Methodology

- BioProject and BioSample Registration:

- If not existing, create a BioProject describing the overarching research study.

- Create a BioSample record for the specific viral isolate, providing sample-specific attributes (host, collection date, geographic location, isolate).

SRA Submission (Raw Reads):

- In the SRA submission portal, link the submission to the created BioProject and BioSample.

- Upload the metadata file detailing library layout (PAIRED), instrument, and strategy.

- Upload the FASTQ files via FTP, Aspera, or directly through the browser.

GenBank Submission (Assembly):

- Using the NCBI Submission Portal or BankIt, start a new "Viral Genome" submission. Large batches can be prepared with the command-line table2asn tool, which replaced tbl2asn.

- Link to the same BioProject and BioSample used for the SRA submission.

- Upload the FASTA file and, if available, the annotation GFF3 file.

- Fill in the required information: organism, isolate name, and annotator information. Define the source modifiers and gene features.
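Source modifiers can also be supplied in bracketed [name=value] form on the FASTA definition line, which NCBI's submission tools read. This sketch assembles such a line. The modifier names in the test (organism, isolate, host, country, collection-date) are assumptions to confirm against NCBI's current source-modifier list.

```python
from typing import Dict


def ncbi_defline(seq_id: str, modifiers: Dict[str, str]) -> str:
    """Build a FASTA definition line: '>ID [name=value] [name=value] ...'."""
    if not seq_id or any(ch.isspace() for ch in seq_id):
        raise ValueError("sequence ID must be non-empty and contain no whitespace")
    parts = [f">{seq_id}"]
    for name, value in modifiers.items():
        # Square brackets delimit modifiers, so they cannot appear inside a value.
        if "[" in value or "]" in value:
            raise ValueError(f"modifier {name!r} contains a square bracket: {value!r}")
        parts.append(f"[{name}={value}]")
    return " ".join(parts)
```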

Validation and Release:

- NCBI staff will run validation checks. Correspond with them via the submission portal to address any queries.

- Upon acceptance, you will receive SRA run accession(s) (e.g., SRR#) and a GenBank accession (e.g., MT#).

- These accessions are publicly accessible upon your specified release date.

Visualizations

Title: GISAID Data Submission and Accession Workflow

Title: FAIR Outbreak Data Sharing Pathway to Public Databases

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Database Submission and Analysis

| Item | Function in Protocol | Example / Source |

|---|---|---|

| Consensus Genome Assembly (FASTA) | The primary interpreted data product for sharing; the nucleotide sequence of the viral isolate. | Output from consensus callers and assemblers (e.g., iVar, SPAdes) or from pipelines built with Nextflow or Snakemake. |

| Curated Metadata Template | Ensures FAIR compliance by providing structured, contextual information about the sequence. | GISAID EpiCoV.xlsx, NCBI SRA metadata template. |

| Lineage Assignment Tool | Provides critical interpreted data (lineage/clade) for contextualizing the genome within the outbreak. | Pangolin, UShER, Nextclade. |

| Submission Portal Account | Authenticated access required to submit data to the chosen repository. | GISAID account, NCBI account (Submission Portal), BV-BRC account. |

| Sequence Read Archive (SRA) Toolkit | Retrieves raw read data from NCBI SRA (e.g., to confirm that released runs are complete and downloadable); it is not used for upload. | prefetch, fasterq-dump (for download); Web Portal for upload. |

| Genome Annotation File (GFF3/GTF) | Enhances reusability by providing coordinates of genomic features (genes, proteins). | Output from annotation tools (Prokka, VAPiD, NCBI PGAAP). |

| Data Validation Software | Performs pre-submission checks on sequence quality and format to prevent submission failure. | seqkit stats, INSDC validator, platform-specific checkers. |

Overcoming Common FAIR Data Hurdles in High-Pressure Outbreak Scenarios

Application Notes

In the context of FAIR data protocols for outbreak sequencing research, incomplete or inconsistent metadata is a primary barrier to data reusability, interoperability, and rapid response. Metadata describes the who, what, when, where, and how of sample collection and sequencing, and its quality directly impacts analytical validity. Current challenges include missing critical fields (e.g., collection date, geographic location), use of non-standardized terms, and format inconsistencies that prevent automated data integration.

Community-defined metadata templates, coupled with automated validation tools, provide a systematic solution. These measures help ensure that data shared through the member databases of the International Nucleotide Sequence Database Collaboration (INSDC), or through outbreak-specific portals (e.g., GISAID, NCBI Virus), adhere to FAIR principles. This enables efficient aggregation and comparative analysis during public health emergencies.

Table 1: Impact of Inconsistent Metadata on Outbreak Data Analysis

| Metadata Issue | Common Example | Impact on Analysis |

|---|---|---|

| Missing Collection Date | Date field blank or "unknown" | Impossible to construct accurate phylogenetic timelines or estimate transmission rates. |

| Non-Standard Location | "New York," "NYC," "New York City" | Inability to geospatially cluster cases without manual curation, slowing hotspot identification. |

| Inconsistent Host Terminology | "Homo sapiens," "Human," "patient" | Complicates filtering and comparative studies across datasets, risking erroneous conclusions. |

| Unspecified Measurement Units | Viral load given as "35" (Ct value? copies/mL?) | Renders quantitative data unusable for meta-analysis or modeling. |

Experimental Protocols

Protocol 2.1: Deployment and Use of a Standardized Metadata Template

Objective: To ensure consistent and complete capture of metadata for viral genome sequences generated during an outbreak investigation.

Materials:

- Sample information (clinical/environmental).

- Standardized metadata template (e.g., INSDC / GISAID pathogen sample checklist, MIxS-based template).

- Spreadsheet software or a Laboratory Information Management System (LIMS).

Methodology:

- Template Selection: Download the most current version of a community-agreed template. For outbreak sequencing, an NCBI BioSample pathogen or virus package (e.g., "Pathogen: clinical or host-associated") is recommended because it aligns with INSDC standards and includes outbreak-critical fields.

- Field Population: For each sequenced sample, populate every field in the template. Use controlled vocabulary where specified (e.g., for "host_health_state," use terms such as "diseased" or "healthy").

- Mandatory Field Verification: Ensure all fields designated as "Mandatory" (M) are completed. These typically include:

- sample_id (unique identifier)

- collection_date (YYYY-MM-DD)

- geo_loc_name (country: region, e.g., "USA: New York City")

- host (e.g., "Homo sapiens")

- isolate (virus isolate name)

- lat_lon (decimal degrees)

- Data Export: Save the populated template as a comma-separated values (.csv) or tab-separated values (.tsv) file for submission to repositories or internal validation.
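Populating fields with controlled vocabulary is the cheapest point to fix the inconsistencies listed in Table 1 of this section (e.g., "Human" versus "Homo sapiens", or "NYC" versus "New York City"). The sketch maps free-text entries to template terms through small synonym tables and flags anything it cannot map for manual curation. The tables and function names are hypothetical. Ambiguous inputs such as "New York", which could mean the city or the state, are deliberately left unmapped.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical synonym tables mapping lower-cased free text to template terms.
HOST_TERMS = {"homo sapiens": "Homo sapiens", "human": "Homo sapiens", "patient": "Homo sapiens"}
LOCATION_TERMS = {
    "usa: new york city": "USA: New York City",
    "new york city": "USA: New York City",
    "nyc": "USA: New York City",
    # "new york" is deliberately absent: it could mean the city or the state.
}


def normalize_value(field: str, raw: str, terms: Dict[str, str]) -> Tuple[str, Optional[str]]:
    key = " ".join(raw.lower().split())  # case- and whitespace-insensitive lookup
    if key in terms:
        return terms[key], None
    return raw, f"{field}: {raw!r} is not a recognised term; needs manual curation"


def normalize_record(record: Dict[str, str]) -> Tuple[Dict[str, str], List[str]]:
    """Return a cleaned copy of the record plus a list of values needing curation."""
    cleaned: Dict[str, str] = dict(record)
    issues: List[str] = []
    for field, terms in (("host", HOST_TERMS), ("geo_loc_name", LOCATION_TERMS)):
        value, issue = normalize_value(field, record.get(field, ""), terms)
        cleaned[field] = value
        if issue:
            issues.append(issue)
    return cleaned, issues
```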

Protocol 2.2: Automated Metadata Validation Using Command-Line Tools

Objective: To programmatically check metadata files for completeness, syntactic correctness, and adherence to vocabulary rules prior to data submission.

Materials:

- Metadata file (.csv/.tsv) from Protocol 2.1.

- Computer with Python 3.8+ installed.

- Validation tool (e.g., the frictionless framework, linkml-validate with the MIxS LinkML schema, or custom scripts).

Methodology (using a generic frictionless-inspired approach):

- Schema Definition: Create a JSON Table Schema file (schema.json) that defines constraints for each metadata field (required fields, allowed patterns, permissible values).

- Validation Execution: Run the validator against the metadata file using this schema.

- Error Review and Correction: The tool outputs a report listing all errors (e.g., missing required field, date format mismatch, term not in enum). Correct the source metadata file manually and re-validate until no critical errors remain.

- Integration: This validation step can be integrated into a bioinformatics pipeline, triggering automatically upon metadata file creation.
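A Frictionless-style check can be approximated with the Python standard library where installing the framework is not an option. The sketch below is hypothetical and implements only a small subset of Table Schema constraints (`required`, `pattern`, `enum`, and the default `YYYY-MM-DD` date format). The schema is written as a Python dict with the same `fields`/`constraints` layout as a `schema.json` file; the field names, regular expressions, and vocabulary terms are illustrative assumptions.

```python
import csv
import io
import re
from datetime import datetime

# Illustrative schema using the Table Schema layout (fields/constraints).
# Names, patterns and enum terms are assumptions, not an official checklist.
SCHEMA = {
    "fields": [
        {"name": "sample_id", "type": "string",
         "constraints": {"required": True, "pattern": r"[A-Za-z0-9_-]+"}},
        {"name": "collection_date", "type": "date",
         "constraints": {"required": True}},
        {"name": "geo_loc_name", "type": "string",
         "constraints": {"required": True, "pattern": r"[^:]+(: .+)?"}},
        {"name": "host_health_state", "type": "string",
         "constraints": {"enum": ["diseased", "healthy"]}},
    ]
}


def check_value(field: dict, value: str) -> list:
    """Return error messages for one cell checked against one field."""
    constraints = field.get("constraints", {})
    if value == "":
        return ["missing required value"] if constraints.get("required") else []
    errors = []
    if field.get("type") == "date":
        # Table Schema's default date format is ISO 8601 (YYYY-MM-DD).
        if not re.fullmatch(r"\d{4}-\d{2}-\d{2}", value):
            errors.append(f"date not in YYYY-MM-DD format: {value!r}")
        else:
            try:
                datetime.strptime(value, "%Y-%m-%d")
            except ValueError:
                errors.append(f"invalid calendar date: {value!r}")
    # Table Schema patterns must match the whole value, hence fullmatch.
    if "pattern" in constraints and not re.fullmatch(constraints["pattern"], value):
        errors.append(f"does not match pattern {constraints['pattern']!r}")
    if "enum" in constraints and value not in constraints["enum"]:
        errors.append(f"term not in enum: {value!r}")
    return errors


def validate_tsv(text: str, schema: dict) -> list:
    """Validate TSV metadata; return (row number, field, message) tuples."""
    reader = csv.DictReader(io.StringIO(text), delimiter="\t")
    header = reader.fieldnames or []
    report, present = [], []
    for field in schema["fields"]:
        if field["name"] in header:
            present.append(field)
        elif field.get("constraints", {}).get("required"):
            report.append((1, field["name"], "required column absent"))
    for row_num, row in enumerate(reader, start=2):  # row 1 is the header
        for field in present:
            value = (row.get(field["name"]) or "").strip()
            for message in check_value(field, value):
                report.append((row_num, field["name"], message))
    return report


sample = (
    "sample_id\tcollection_date\tgeo_loc_name\thost_health_state\n"
    "OB-0001\t2024-03-02\tUSA: New York City\tdiseased\n"
    "OB-0002\t02/03/2024\tUSA: New York City\tsick\n"
)
for row_num, name, message in validate_tsv(sample, SCHEMA):
    print(f"row {row_num}: {name}: {message}")
```

In a pipeline, a non-empty report can be mapped to a non-zero exit status so that downstream submission steps do not run.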

Diagrams

Diagram 1: Metadata Curation & Validation Workflow for Outbreak Sequences

Diagram 2: How Templates & Validation Tools Achieve FAIR Data Goals

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Metadata Standardization and Validation

| Item / Solution | Category | Primary Function |
|---|---|---|
| INSDC / GISAID Submission Checklists | Template | Provides the authoritative list of required and recommended metadata fields for public data deposition. |
| MIxS (Minimum Information about any (x) Sequence) Standards | Template & Vocabulary | Defines checklists (e.g., MIGS, MIMS, MIMARKS) and environmental packages with a large set of curated terms for consistent reporting. |
| NCBI Datasets Command-Line Tools | Retrieval | Downloads sequence data and standardized metadata for existing datasets. |
| Frictionless Framework | Validation Software | A Python/CLI toolkit for creating data schemas and validating tabular data files against them. |
| EDAM-Bioimaging Ontology | Vocabulary | Provides standardized terms for imaging metadata, relevant for correlative microscopy studies in pathogenesis. |
| CWL (Common Workflow Language) / Nextflow | Pipeline Framework | Allows embedding metadata validation steps into reproducible bioinformatics workflows. |
| LinkML (Linked Data Modeling Language) | Schema Framework | A modeling language for generating validation schemas, conversion code, and documentation from one central source. |

Balancing Rapid Sharing with Ethical and Legal Constraints (Data Sovereignty, GDPR)

Application Notes for FAIR Outbreak Sequencing Data Sharing

The implementation of FAIR (Findable, Accessible, Interoperable, Reusable) principles in outbreak genomics must be reconciled with jurisdictional data sovereignty laws and the EU's General Data Protection Regulation (GDPR). The primary challenge is enabling rapid, global scientific collaboration while adhering to legal frameworks that restrict cross-border data flows and protect individual privacy.

Table 1: Key Legal/Ethical Constraints vs. FAIR Data Sharing Enablers

| Constraint/Enabler | Core Principle | Impact on Outbreak Data Sharing | Potential Mitigation Strategy |
|---|---|---|---|
| GDPR (Article 9) | Protects special category data (e.g., health, genetic data). | Requires explicit consent or another Article 9(2) condition for processing; limits broad sharing of patient-linked sequences. | Pseudonymization (pseudonymized data remain personal data under GDPR); reliance on the public-health condition of Article 9(2)(i); data use agreements. |
| Data Sovereignty | Data are subject to the laws of the country where they are collected or stored. | Prohibits or restricts transfer of genomic data outside national borders. | Federated analysis; in-country data processing; use of certified cloud providers. |
| FAIR Principle (Accessible) | Data should be retrievable by their identifier using a standardized protocol, with authentication and authorization where necessary. | Direct, open access may conflict with access controls required by GDPR or sovereignty rules. | Tiered-access systems; automated Data Access Committees (DACs). |
| Informed Consent | Participants must understand the scope of data use. | Legacy consents may not permit broad sharing for future outbreaks. | Dynamic consent platforms; broad consent frameworks within ethical review. |
| Purpose Limitation | Data are used only for specified, explicit purposes. | Hinders secondary use of data for research on a novel, emergent pathogen. | Consent language anticipating public health research; robust governance for repurposing. |

Table 2: Quantitative Summary of Data Sharing Delays in Recent Outbreaks

| Pathogen/Outbreak | Estimated Avg. Delay (Sample to Public Database) | Primary Cited Reasons for Delay (Beyond Technical) | % of Sequences with Restricted Access (e.g., DAC) |
|---|---|---|---|
| SARS-CoV-2 (Early 2020) | 0-14 days | Urgency overrode typical constraints; some sovereignty concerns later emerged. | <5% |
| MPXV (2022) | 21-60 days | Ethics approvals, novel context of outbreak, data sovereignty considerations. | ~15-20% |
| Highly Pathogenic Avian Influenza (HPAI) H5N1 | 30-180+ days | Sovereignty concerns over sharing viral genetic data from animal/human cases; trade implications. | >50% |
| Lassa Fever (Endemic) | 6-24 months | Complex consent, infrastructure limitations, sovereignty, and academic competition. | ~30% |

Protocols for Ethically and Legally Compliant Data Sharing

Protocol 2.1: Pre-Sharing Data Sanitization and Metadata Annotation

Objective: To prepare raw sequencing data and associated metadata for sharing in a manner that minimizes privacy risks and maximizes reusability while documenting legal bases.

- Data Pseudonymization:
  - Replace direct identifiers (e.g., patient ID, name) with a persistent, unique study code.
  - Use a trusted third party or a secure algorithm to maintain a re-identification key, stored separately under the highest level of security.
  - Strip all human genomic reads from raw sequencing data before sharing; consensus FASTA files contain no reads, but any accompanying FASTQ/BAM files do. Verify removal using host-read removal tools (e.g., Kraken2, or minimap2 mapping against a human reference).

- Metadata Preparation:
  - Compile essential epidemiological metadata using standardized ontologies (e.g., SNOMED CT for sample type, LOINC for laboratory test codes, CIDO for coronavirus disease terms).
  - Critical Step: Create a "Data Passport", a structured file (JSON format) documenting:
    - Legal basis for processing/sharing (GDPR Article 6 and Article 9 conditions).
    - Jurisdiction of origin.
    - Use restrictions (from consent).
    - Contact details of the Data Custodian.

- File Packaging:
  - Package sequence files (FASTQ/FASTA), sanitized metadata, and the Data Passport.
  - Obtain a unique, persistent dataset identifier (e.g., a DOI from a registration agency, or an accession number assigned by the repository on submission).
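The pseudonymization and Data Passport steps might look like the following Python sketch. It assumes a keyed hash (HMAC-SHA-256) rather than any specific pseudonymization product: the same identifier and key always yield the same study code, and re-identification is possible only through the separately stored key table. The Data Passport key names, the GDPR articles shown as example legal bases, the patient ID, and all placeholder values are illustrative; pseudonymized data still count as personal data under the GDPR.

```python
import hashlib
import hmac
import json
import secrets

# Hypothetical key: generate once, keep with the data custodian or a trusted
# third party, and never package it with the shared data.
PSEUDONYM_KEY = secrets.token_bytes(32)


def pseudonymize(identifier: str, key: bytes, prefix: str = "STUDY") -> str:
    """Derive a stable study code from a direct identifier (HMAC-SHA-256)."""
    digest = hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"{prefix}-{digest[:16].upper()}"


def build_key_table(identifiers: list, key: bytes) -> dict:
    """Study code -> original identifier; store separately under strict access."""
    return {pseudonymize(i, key): i for i in identifiers}


def data_passport(legal_basis: list, jurisdiction: str,
                  use_restrictions: list, custodian: dict) -> str:
    """Serialize a 'Data Passport' as JSON; key names are illustrative only."""
    return json.dumps(
        {
            "legal_basis": legal_basis,
            "jurisdiction_of_origin": jurisdiction,
            "use_restrictions": use_restrictions,
            "data_custodian": custodian,
        },
        indent=2,
    )


study_code = pseudonymize("MRN-000123", PSEUDONYM_KEY)  # hypothetical patient ID
key_table = build_key_table(["MRN-000123"], PSEUDONYM_KEY)  # keep off the share
print(study_code)
print(data_passport(
    legal_basis=["GDPR Art. 6(1)(e)", "GDPR Art. 9(2)(i)"],
    jurisdiction="<country of collection>",
    use_restrictions=["<restriction copied from consent form>"],
    custodian={"name": "<data custodian>", "email": "<contact address>"},
))
```

A keyed hash is used instead of a plain SHA-256 digest because unkeyed hashes of short, structured identifiers such as record numbers can be reversed by brute force.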

Protocol 2.2: Implementing a Tiered-Access Data Release System

Objective: To provide transparent, auditable, and timely data access that respects legal constraints.

- Define Access Tiers:
  - Tier 1 (Open): Fully anonymized or pseudonymized viral consensus sequences with core non-sensitive metadata (e.g., location, date, specimen type). Released immediately via public repositories (INSDC; GISAID, which requires user registration and acceptance of its terms of use).
  - Tier 2 (Registered): Data with broader metadata (e.g., patient age group, outcome) or raw sequencing reads. Requires user registration and acceptance of a standard Data Use Agreement (DUA) prohibiting re-identification.
  - Tier 3 (Controlled): Data with a residual re-identification risk or from jurisdictions with strict sovereignty rules. Access requires project-specific approval by a Data Access Committee (DAC).

- Automated DAC Workflow Implementation:
  - Manage applications with an access-management platform (e.g., DUOS) built on standards such as GA4GH Passports and the Data Use Ontology (DUO).
  - The DAC reviews applications against predefined criteria (scientific validity, consent alignment).
  - Approved applications trigger automated provisioning of access credentials to a secure data workspace (e.g., Terra, Seven Bridges).
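Tier assignment can be written as an explicit rule so that release decisions are reproducible and auditable. The Python sketch below encodes the three tiers described in this protocol; the field groupings, the jurisdiction list, and the conservative default (unrecognized fields or data types go to the DAC) are hypothetical policy choices, not part of DUOS or any GA4GH standard.

```python
from dataclasses import dataclass, field

# Hypothetical policy lists; real groupings come from the governance framework.
CORE_FIELDS = {"geo_loc_name", "collection_date", "specimen_type"}
EXTENDED_FIELDS = {"host_age_group", "clinical_outcome"}
CONTROLLED_JURISDICTIONS = {"<jurisdiction with strict sovereignty rules>"}


@dataclass
class Release:
    data_type: str                       # "consensus" or "raw_reads"
    metadata: set = field(default_factory=set)
    jurisdiction: str = ""
    reidentification_risk: bool = False


def assign_tier(release: Release) -> int:
    """Return 1 (open), 2 (registered + DUA) or 3 (controlled, DAC review)."""
    unclassified = release.metadata - CORE_FIELDS - EXTENDED_FIELDS
    if (release.reidentification_risk
            or release.jurisdiction in CONTROLLED_JURISDICTIONS
            or unclassified):
        return 3  # conservative default: anything unrecognized goes to the DAC
    if release.data_type == "raw_reads" or release.metadata & EXTENDED_FIELDS:
        return 2
    if release.data_type == "consensus":
        return 1
    return 3  # unknown data type


print(assign_tier(Release("consensus", {"geo_loc_name", "collection_date"})))
print(assign_tier(Release("raw_reads", {"geo_loc_name"})))
```

The two example calls print 1 and then 2. Keeping the rule in code (rather than in reviewers' heads) also gives the DAC a versioned record of why each dataset landed in its tier.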

Diagrams

Tiered Access Protocol for Outbreak Genomic Data

Balancing Legal Constraints with FAIR Protocol Engine

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Compliant Outbreak Data Management

| Tool/Category | Example(s) | Function in Ethical/Legal FAIR Sharing |
|---|---|---|
| Metadata Standardization | MIxS (Minimum Information about any (x) Sequence), GA4GH Metadata Schemas | Ensures interoperability and completeness of data, crucial for defining what can be shared under a given consent. |
| Pseudonymization Engine | CRUSH, pseudoGA4GH, custom scripts with keyed hashing (e.g., HMAC-SHA-256) | Replaces direct identifiers with study codes that can be reversed only through a separately held key table; a key step for GDPR-compliant processing and sharing. |
| Data Access Governance Platform | GA4GH Data Use Ontology (DUO); DAM; DUOS | Standardizes machine-readable data use restrictions, automating access tier assignment and DAC review. |
| Federated Analysis Framework | SARS-CoV-2 SPHERES, GA4GH WES, DRS & TRS APIs; Beacon v2 | Allows analysis across sovereign datasets without moving raw data, addressing data sovereignty concerns. |
| Secure Data Workspace | Terra, Seven Bridges, DNAnexus | Provides a cloud-based environment where Tier 2/3 data can be analyzed under compliant compute governance. |
| Consent Management Tool | REDCap with dynamic consent modules, PEACH | Enables management of participant preferences and tracking of consent scope for secondary use. |
| Data Transfer Agreement (DTA) Template | EU Standard Contractual Clauses (formerly "Model Clauses"), GA4GH DTA | Pre-negotiated legal contract templates that accelerate secure data transfers between institutions. |

Managing Computational and Storage Demands for Large-Scale Sequence Data

Within the framework of FAIR (Findable, Accessible, Interoperable, Reusable) data protocols for outbreak sequencing research, managing the associated computational and storage infrastructure is a critical bottleneck. The scale of data generated by high-throughput sequencing (HTS) platforms during pathogen surveillance necessitates specialized strategies to ensure data integrity, accessibility, and analytical reproducibility while controlling costs.

Quantitative Landscape of Sequencing Data

The following table summarizes the current data output from major sequencing platforms relevant to outbreak genomics (e.g., viral and bacterial sequencing).

Table 1: Data Output and Storage Requirements for Common Sequencing Platforms

| Platform (Model Example) | Typical Output per Run (Gb, gigabases) | Estimated FASTQ Size per Sample* (GB) | Approx. Storage for 1,000 Samples (TB) |
|---|---|---|---|
| Illumina (NextSeq 2000) | 80-360 | 1.5-3.5 | 1.5-3.5 |
| Oxford Nanopore (PromethION 48) | 100-200 | 4-10 (FAST5) | 4-10 |
| PacBio (Revio) | 120-360 | 3-8 (HiFi reads) | 3-8 |
| MGI (DNBSEQ-T20) | 12,000-18,000 | 20-30 | 20-30 |

*Uncompressed FASTQ storage; size varies with coverage and genome size. Examples assume ~100x coverage for a ~5 Mb bacterial genome, or ~30 kb viral genomes pooled in multiplex. Nanopore FAST5 values include raw signal data. Aligned BAM/CRAM files and analyzed data add further overhead.
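The per-sample figures can be sanity-checked with simple arithmetic. An uncompressed FASTQ record has four lines (header, sequence, `+`, qualities), so each base costs roughly two bytes plus per-read overhead. The Python sketch below assumes 150-bp reads and a 60-byte header line, both illustrative values; real files vary with read length, header format, and over-sequencing.

```python
def fastq_size_gb(genome_size_bp: float, coverage: float, read_length: int,
                  header_bytes: int = 60) -> float:
    """Rough uncompressed FASTQ size in GB (10**9 bytes) for one sample.

    Per read: header line, sequence line, '+' line and quality line, each
    terminated by a newline. Paired-end mates are counted as separate reads,
    so `coverage` is total coverage across both mates.
    """
    n_reads = genome_size_bp * coverage / read_length
    bytes_per_read = (header_bytes + 1) + (read_length + 1) + 2 + (read_length + 1)
    return n_reads * bytes_per_read / 1e9


def cohort_storage_tb(per_sample_gb: float, n_samples: int) -> float:
    """Cohort storage in TB (10**12 bytes), before BAM/CRAM or analysis files."""
    return per_sample_gb * n_samples / 1000


# ~5 Mb bacterial genome at ~100x with assumed 150-bp reads.
per_sample = fastq_size_gb(5_000_000, 100, 150)
print(f"{per_sample:.2f} GB per sample; "
      f"{cohort_storage_tb(per_sample, 1000):.2f} TB per 1,000 samples")
```

For these assumptions the script prints `1.22 GB per sample; 1.22 TB per 1,000 samples`, i.e., the same order of magnitude as the Illumina row, before compression or downstream files.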

Application Notes & Protocols

Protocol: Implementing a Tiered Storage Architecture for FAIR Outbreak Data

Objective: To create a cost-effective, accessible storage system that aligns with the data lifecycle and FAIR principles.

Materials:

- Primary Storage System: High-performance network-attached storage (NAS) or parallel file system (e.g., Lustre, BeeGFS).

- Secondary Storage System: Object storage (e.g., AWS S3, Google Cloud Storage, MinIO).

- Archival Storage: Tape library or cold cloud storage (e.g., Amazon S3 Glacier, Google Cloud Storage Coldline or Archive classes).

- Data Management Software: iRODS, or custom scripts with a metadata catalog.

Methodology:

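- Data Ingestion & Hot Tier: Raw instrument output (e.g., Illumina BCL files, Nanopore FAST5/POD5 signal files) and the FASTQ files derived from it are written directly to primary storage. Basecalling and demultiplexing (e.g., bcl2fastq, Guppy), immediate QC (FastQC), and initial assembly or read mapping (e.g., of MinION reads) are performed here.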

- Active Analysis & Warm Tier: Processed data (aligned BAM/CRAM, variant calls, consensus genomes) are moved to object storage after primary analysis. This tier supports scalable, programmatic access for downstream phylogenetic and epidemiological analysis.

- Metadata Cataloging: Upon movement to object storage, a standardized metadata file (in ISA-TAB or RO-Crate format) is generated and linked in a searchable catalog. This is critical for Findability and Interoperability.

- Archival & Cold Tier: After publication or project conclusion, raw data and key analysis outputs are transferred to archival storage. A persistent identifier (DOI) is issued, and the metadata catalog is updated with the archival location, ensuring long-term Accessibility and Reusability.
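The metadata-cataloging step could emit an RO-Crate descriptor alongside each batch moved to object storage. The Python sketch below builds a minimal RO-Crate 1.1 `ro-crate-metadata.json` (metadata descriptor, root dataset, and one entity per file); the dataset name, file paths, and MIME types are hypothetical, and a production crate would normally add further properties such as `license` and a link to the persistent identifier once issued.

```python
import json
from datetime import date


def ro_crate_metadata(dataset_name: str, files: list, description: str = "") -> dict:
    """Build a minimal RO-Crate 1.1 metadata document for one analysis batch.

    `files` is a list of (relative_path, encoding_format) pairs.
    """
    file_entities = [
        {"@id": path, "@type": "File", "encodingFormat": fmt}
        for path, fmt in files
    ]
    root = {
        "@id": "./",
        "@type": "Dataset",
        "name": dataset_name,
        "description": description,
        "datePublished": date.today().isoformat(),
        "hasPart": [{"@id": entity["@id"]} for entity in file_entities],
    }
    descriptor = {
        "@id": "ro-crate-metadata.json",
        "@type": "CreativeWork",
        "conformsTo": {"@id": "https://w3id.org/ro/crate/1.1"},
        "about": {"@id": "./"},
    }
    return {
        "@context": "https://w3id.org/ro/crate/1.1/context",
        "@graph": [descriptor, root] + file_entities,
    }


crate = ro_crate_metadata(
    "Outbreak batch 2024-03 (hypothetical)",
    [("consensus/OB-0001.fasta", "text/plain"),
     ("variants/OB-0001.vcf.gz", "application/gzip")],
    description="Consensus genomes and variant calls moved to the warm tier",
)
print(json.dumps(crate, indent=2))
```

Writing this JSON next to the data (rather than only into the catalog) keeps each batch self-describing when it later moves to the cold tier.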

Protocol: Cloud-Native Pandemic-Scale Phylogenetic Analysis

Objective: To execute a scalable, reproducible phylogenomic pipeline for thousands of pathogen genomes using cloud infrastructure.

Materials:

- Workflow Manager: Nextflow or Snakemake.

- Containerization: Docker or Singularity containers for all tools.

- Cloud Provider: AWS, GCP, or Azure account with batch compute services (e.g., AWS Batch, Google Batch).