Hidden Flaws, Real Consequences: Identifying and Mitigating Common Errors in Viral Sequence Databases

Abstract

This article provides a critical analysis of persistent and emerging errors in public viral sequence databases. We examine the foundational sources of contamination, misannotation, and incomplete metadata, detailing their impact on research reproducibility and drug target identification. Methodological strategies for robust sequence verification and database querying are presented, alongside troubleshooting workflows for identifying problematic entries. Finally, we compare the error profiles and curation practices across major repositories like GenBank, RefSeq, and GISAID, offering validation frameworks to ensure data integrity. This guide equips researchers and drug developers with the knowledge to enhance the reliability of their genomic analyses.

From Contamination to Mislabeling: The Root Causes of Viral Database Errors

Within the context of research on common errors in viral sequence databases, defining the error landscape is a critical first step. Public genomic repositories, such as GenBank, the Sequence Read Archive (SRA), and the Global Initiative on Sharing All Influenza Data (GISAID), serve as indispensable resources for researchers and drug development professionals. However, the data within them is heterogeneous, originating from diverse laboratories with varying protocols. This guide provides a technical framework for categorizing, quantifying, and investigating the types and prevalence of errors that compromise the integrity of viral sequence data, impacting downstream analyses from phylogenetic tracing to vaccine design.

Types of Errors in Viral Sequence Databases

Errors can be systematic or sporadic, introduced at various stages from sample collection to database submission.

2.1. Pre-Analytical & Experimental Errors

- Sample Contamination: Cross-contamination between samples or with host/organelle DNA.

- Poor Nucleic Acid Quality: Degradation or insufficient quantity leading to incomplete genomic coverage.

- Primer/Probe Bias: In amplification-based methods, primers may not bind effectively to all viral variants, causing underrepresented lineages.

2.2. Sequencing & Bioinformatic Errors

- Platform-Specific Errors: Illumina errors are predominantly substitutions, whereas Oxford Nanopore has higher raw read error rates, concentrated in insertions and deletions within homopolymer regions.

- Assembly Artifacts: Misassemblies due to recombination, repeats, or low-coverage regions creating chimeric sequences.

- Consensus Generation Issues: Over-reliance on majority rule can mask legitimate minority variants.

2.3. Curation & Annotation Errors

- Incorrect Metadata: Erroneous collection date, geographic location, or host species.

- Frameshifts/Stop Codons: Unnoticed frameshifts in coding sequences (CDS) annotated as functional proteins.

- Redundancy & Duplication: Multiple submissions of the same sequence under different accession numbers.

Prevalence: Quantitative Analysis

Data from recent studies (2023-2024) investigating error rates in public repositories are summarized below.

Table 1: Prevalence of Sequence-Level Errors in Public Viral Repositories

| Error Type | Representative Study Focus | Estimated Prevalence | Key Findings |

|---|---|---|---|

| In-Del Errors in Homopolymers | SARS-CoV-2 Illumina datasets from SRA | 0.5-2.0% of homopolymer regions >5bp | Systematic undercalling of insertions, affecting ORF1ab and S gene annotations. |

| Contamination | Human metagenomic (RNA-seq) datasets in SRA | ~3% of "viral" reads were host/other | Common in low-input samples; misassigns host RNA as viral. |

| Annotational Frameshifts | Influenza A virus sequences in GenBank | ~1.2% of HA/NA segments | Often caused by single nucleotide indels not corrected prior to submission. |

| Critical Metadata Errors | Geographic location in arbovirus datasets | Up to 5% (in specific subsets) | Misplaced data confounds spatial spread models and surveillance. |

Table 2: Error Prevalence by Sequencing Technology (Viral Whole Genome)

| Technology | Typical Raw Read Error Rate | Post-Error Correction Rate | Primary Error Type |

|---|---|---|---|

| Illumina (Short-Read) | ~0.1% | <0.01% | Substitution (AT, CG bias) |

| Oxford Nanopore (R10.4.1) | ~4% | <0.1% | Insertion/Deletion |

| PacBio HiFi (Circular Consensus) | ~0.3% | <0.01% | Random Substitution |

Experimental Protocols for Error Detection and Validation

4.1. Protocol: Identifying Assembly and Contamination Errors

- Title: Triangulation Assembly Validation Protocol

- Purpose: To identify chimeric assemblies and contamination by comparing multiple assembly methodologies.

- Materials: Raw FASTQ files, high-performance computing cluster.

- Steps:

- Independent Assembly: Assemble the same read set using three distinct de novo assemblers (e.g., SPAdes, MEGAHIT, IVA).

- Reference-Guided Mapping: Map the raw reads to a closely related reference genome using BWA or Bowtie2.

- Consensus Generation: Generate a consensus from the mapping using bcftools.

- Comparison: Align the three de novo contigs and the mapping-based consensus using MAFFT.

- Discrepancy Flagging: Manually inspect regions of high disagreement (>5% divergence) in a viewer like Geneious. These regions likely represent assembly ambiguity, recombination, or contamination.

- PCR Validation: Design primers flanking the discrepant region for Sanger sequencing validation from the original sample.
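The discrepancy-flagging step can be sketched as a sliding-window scan over the MAFFT alignment. This is a minimal illustration, assuming the three de novo contigs and the mapping-based consensus have already been aligned to equal length and loaded as strings; the function name `disagreement_windows` and the window and step sizes are hypothetical choices, while the 5% threshold comes from the protocol above.

```python
def disagreement_windows(aligned, window=100, step=50, threshold=0.05):
    """Return (start, end, fraction) for windows whose disagreement exceeds threshold.

    `aligned` is a list of equal-length aligned sequences (gaps as '-').
    Coordinates are 0-based, end-exclusive alignment columns.
    """
    lengths = {len(s) for s in aligned}
    if len(lengths) != 1:
        raise ValueError("sequences must be aligned to the same length")
    length = lengths.pop()
    if length == 0:
        return []
    # A column disagrees when the sequences do not all show the same character.
    mismatch = [len({s[i].upper() for s in aligned}) > 1 for i in range(length)]
    flagged = []
    for start in range(0, max(length - window, 0) + 1, step):
        end = min(start + window, length)
        fraction = sum(mismatch[start:end]) / (end - start)
        if fraction > threshold:
            flagged.append((start, end, round(fraction, 3)))
    return flagged


if __name__ == "__main__":
    contigs = ["A" * 20, "A" * 20, "A" * 12 + "CC" + "A" * 6]
    print(disagreement_windows(contigs, window=10, step=10))
```

Flagged windows are candidates for manual inspection only; gaps count as disagreements here, so terminal length differences between assemblies will also be reported.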

4.2. Protocol: Validating Annotated Coding Sequences

- Title: In-Silico ORF Integrity Check

- Purpose: To detect frameshifts and premature stop codons in annotated viral proteins.

- Materials: Viral genome sequence in GenBank format, Python/R environment.

- Steps:

- Data Extraction: Parse the GenBank file to extract the nucleotide sequence for each annotated CDS.

- Translation: Translate the nucleotide sequence in the annotated frame.

- Scan: Scan the translated amino acid sequence for internal stop codons ("*").

- Frame Analysis: Translate the nucleotide sequence in all six possible frames. If an alternative frame yields a significantly longer open reading frame without internal stops, flag the original annotation.

- Cross-Reference: Check flagged entries against literature and curated databases (e.g., UniProt) to determine if the frameshift is a documented biological feature (e.g., ribosomal frameshift in Coronaviridae) or a likely error.
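The translation, scan, and frame-analysis steps can be sketched in plain Python. This is a minimal illustration, assuming the CDS nucleotide sequence has already been extracted from the GenBank record and that the standard genetic code (NCBI translation table 1) applies; the function names are hypothetical.

```python
from itertools import product

# Standard genetic code (NCBI translation table 1), codons in TCAG order.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)}


def clean(seq):
    """Upper-case the sequence and treat RNA as DNA."""
    return seq.upper().replace("U", "T")


def reverse_complement(seq):
    return clean(seq).translate(str.maketrans("ACGT", "TGCA"))[::-1]


def translate(seq, frame=0):
    """Translate from a 0-based frame offset; unrecognized codons become 'X'."""
    seq = clean(seq)
    codons = (seq[i:i + 3] for i in range(frame, len(seq) - 2, 3))
    return "".join(CODON_TABLE.get(codon, "X") for codon in codons)


def internal_stops(protein):
    """0-based residue positions of stop codons, ignoring a terminal stop."""
    body = protein[:-1] if protein.endswith("*") else protein
    return [i for i, aa in enumerate(body) if aa == "*"]


def longest_orf_by_frame(seq):
    """Longest stop-free stretch (in residues) for each of the six frames."""
    result = {}
    for strand, s in (("+", clean(seq)), ("-", reverse_complement(seq))):
        for frame in range(3):
            segments = translate(s, frame).split("*")
            result[f"{strand}{frame + 1}"] = max(len(x) for x in segments)
    return result


def check_cds(seq):
    """Flag a CDS whose annotated frame (+1) contains internal stop codons."""
    stops = internal_stops(translate(seq))
    return {"internal_stops": stops,
            "orf_lengths": longest_orf_by_frame(seq),
            "flag": bool(stops)}
```

A flagged entry still needs the cross-reference step: programmed ribosomal frameshifts and other documented features will trip this check without being errors.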

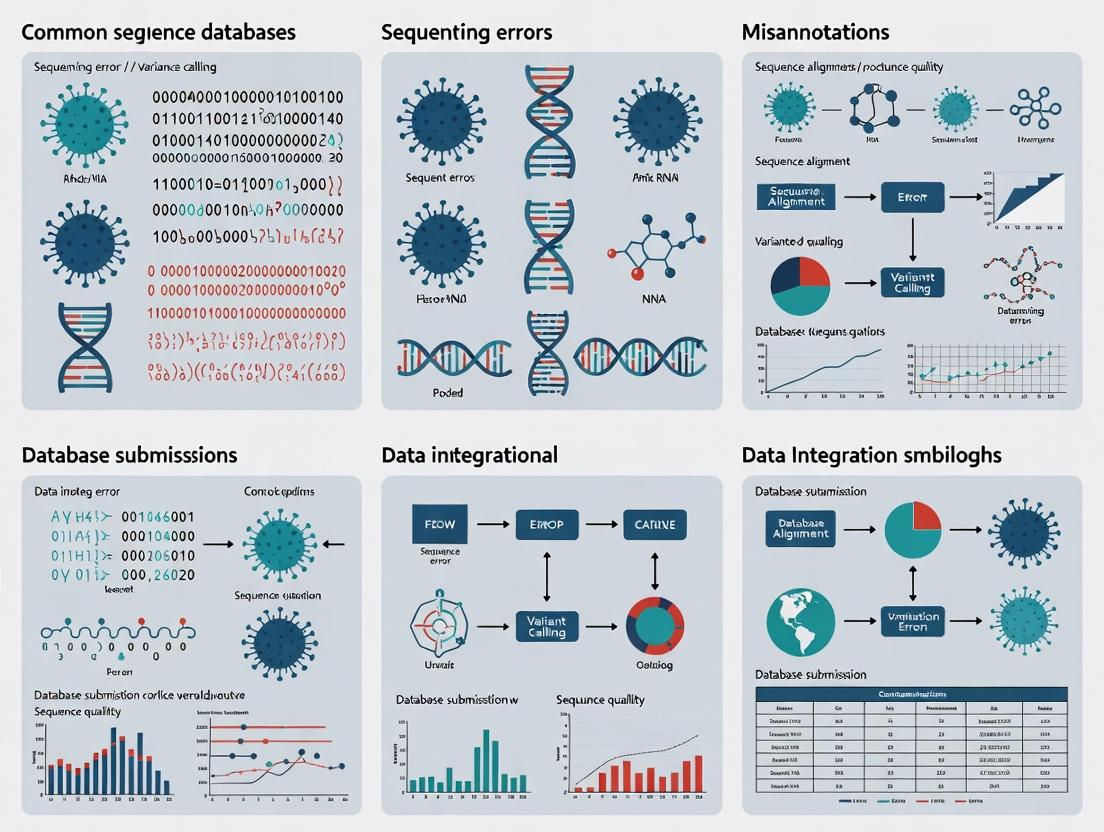

Visualization of Workflows and Relationships

(Figure 1: Error Introduction Points in Viral Data Lifecycle)

(Figure 2: Triangulation Protocol for Assembly Validation)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Tools for Viral Sequence Error Investigation

| Item | Function in Error Analysis | Example Product/Software |

|---|---|---|

| Synthetic Control RNA | Provides an error-free reference for benchmarking sequencing and bioinformatic pipeline accuracy. Distinguishes technical vs. biological variation. | ERCC RNA Spike-In Mix (Thermo Fisher); Seraseq Viral Metagenomics Panel (SeraCare) |

| High-Fidelity Reverse Transcriptase and Polymerase | Minimizes enzyme-induced errors during cDNA synthesis and PCR, reducing artificial minority variants. | SuperScript IV reverse transcriptase (Thermo Fisher); Q5 High-Fidelity DNA Polymerase (NEB) |

| Carrier (Glycogen or Carrier RNA) | Improves recovery during nucleic acid extraction from low-viral-load samples, reducing stochastic sampling errors. | UltraPure Glycogen (Thermo Fisher); RNase-free Yeast tRNA |

| Multi-Platform Sequencing | Using both short-read (accuracy) and long-read (phasing, structure) technologies enables error correction and validation. | Illumina NextSeq 2000; Oxford Nanopore PromethION |

| Metagenomic Classifier | Identifies and quantifies contaminating sequences from host, microbiome, or other sources within raw data. | Kraken2; Centrifuge |

| Alignment & Visualization Suite | Critical for manual inspection of discrepancies flagged by automated pipelines. | Geneious Prime; UGENE; IGV |

| Automated Curation Pipeline | Script-based workflow to flag common annotation issues (frameshifts, stop codons, metadata conflicts). | BioPython toolkit; Nextclade (for specific viruses) |

A systematic understanding of the error landscape—categorizing types, quantifying prevalence, and applying rigorous validation protocols—is foundational for improving the fidelity of viral sequence databases. For researchers relying on these repositories, especially in high-stakes fields like drug and vaccine development, incorporating the error detection methodologies and quality control reagents outlined here is no longer optional but a core component of robust bioinformatic analysis. This diligence ensures that scientific conclusions are drawn from biological reality, rather than technical artifact.

Within the broader thesis on common errors in viral sequence databases, sequence contamination represents a critical, pervasive flaw. This in-depth guide examines the tripartite crisis of contamination from host genomes, cloning vectors/assembly reagents, and cross-sample sources. Such pollution compromises the integrity of public databases, leading to erroneous biological interpretations, flawed phylogenetic analyses, and misdirected therapeutic development.

Core Contamination Types & Quantitative Impact

Recent analyses of major databases reveal the alarming scale of the problem.

Table 1: Prevalence of Contamination in Public Sequence Databases

| Contamination Type | Estimated Prevalence (NCBI SRA, 2023) | Commonly Affected Databases | Primary Impact |

|---|---|---|---|

| Host Genome (Human/Mouse) | 0.5-1.2% of all public sequences | SRA, GenBank, EMBL-EBI | Misannotation of endogenous viral elements |

| Cloning Vector / Adapter | ~0.8% of assembled viral genomes | RefSeq Viral, GenBank | Chimeric genome assemblies, false ORFs |

| Cross-Sample / Lab-Based | Difficult to quantify; significant in metagenomics | IMG/V, ViPR, GISAID | False positivity, erroneous diversity estimates |

| Synthetic Control | 0.3% of "viral" entries in some subsets | All, especially diagnostic assay data | Inclusion of non-biological sequences |

Experimental Protocols for Contamination Detection & Mitigation

Protocol: In silico Screening for Host Contamination

Principle: Align query sequences to host reference genomes.

- Data Preparation: Retrieve sequences in FASTA format.

- Index Host Genomes: Using `bwa index` or `bowtie2-build` for relevant hosts (e.g., human GRCh38, mouse GRCm39).

- Alignment: Execute alignment with high-sensitivity parameters.

- Filtering: Extract reads with alignment length >50bp and identity >90%. Flag source sequences for review or removal.

- Validation: Manually inspect flagged alignments in a viewer (e.g., IGV) to confirm homology.
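The filtering step can be sketched as follows. This is a minimal illustration, assuming the alignments against the host genome are available as BLAST-style 12-column tabular text (query, subject, percent identity, alignment length, and so on); BWA and Bowtie2 emit SAM, so either a conversion or a BLASTn run against the host reference is assumed. The function name `flag_host_hits` is hypothetical; the thresholds are those given in the protocol.

```python
def flag_host_hits(tabular_lines, min_length=50, min_identity=90.0):
    """Group host alignments passing both thresholds by query sequence ID.

    Each input line is tab-separated with at least the first four columns:
    query ID, subject ID, percent identity, alignment length.
    """
    flagged = {}
    for line in tabular_lines:
        if not line.strip() or line.startswith("#"):
            continue  # skip blank lines and comment headers
        fields = line.rstrip("\n").split("\t")
        query, subject = fields[0], fields[1]
        identity, length = float(fields[2]), int(fields[3])
        if length > min_length and identity > min_identity:
            flagged.setdefault(query, []).append((subject, identity, length))
    return flagged


if __name__ == "__main__":
    example = [
        "seq1\tchr1\t98.5\t120\t2\t0\t1\t120\t500\t619\t1e-50\t200",
        "seq2\tchr7\t85.0\t300\t45\t0\t1\t300\t10\t309\t1e-20\t150",
        "seq3\tchrX\t99.0\t40\t0\t0\t1\t40\t70\t109\t1e-10\t75",
    ]
    print(flag_host_hits(example))
```

Only `seq1` passes both thresholds in this example. Flagged IDs are a review list rather than a removal list, since genuine viral sequences can share homology with endogenous viral elements in the host genome.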

Protocol: Vector/Adapter Sequence Identification

Principle: Screen against curated databases of common vectors and oligonucleotides.

- Database Curation: Maintain a local FASTA database from sources like Univec.

- Local BLAST: Perform BLASTn search against the vector database.

- Trimming/Removal: Use tools like `SeqKit` or `BBDuk` to remove identified adapter sequences from read termini.

- Assembly Re-assessment: Re-assemble trimmed reads and compare contigs to original assembly.

Protocol: Cross-Contamination Detection in Metagenomic Workflows

Principle: Use unique marker kmers or statistical abundance outliers.

- Positive Control Spiking: Include an exogenous or synthetic spike-in control (e.g., Equine Arteritis Virus in human samples) during library prep.

- Bioinformatic Subtraction: Map all reads from a sequencing run to all reference genomes from projects in that run.

- Abnormal Profile Detection: Identify sequences with highly skewed, non-biological abundance distributions across samples.

- Source Tracking: If a contaminant is identified, use its kmer profile to trace potential sample-to-sample leakage in the workflow.

Signaling Pathway: Institutional Response to Detected Contamination

Title: Institutional Response Workflow to Sequence Contamination

Experimental Workflow for Contamination-Aware Viral Genome Assembly

Title: Viral Genome Assembly with Integrated Contamination Screening

Table 2: Key Reagents and Resources for Contamination Management

| Item / Resource | Function / Purpose | Example / Source |

|---|---|---|

| Univec Database | Core database of vector, adapter, and linker sequences for screening. | NCBI Univec |

| Host Reference Genomes | High-quality reference sequences for in silico subtraction of host reads. | GRCh38 (human), GRCm39 (mouse), Ensembl, UCSC Genome Browser |

| Synthetic Control Spikes | Non-biological or exogenous viral sequences added to monitor cross-contamination. | PhiX, Equine Arteritis Virus, Armored RNA |

| BLAST+ Suite | Standard tool for local sequence alignment against contamination databases. | NCBI |

| Bowtie2 / BWA | Fast, memory-efficient aligners for host read subtraction. | Open Source |

| Kraken2 / Bracken | Taxonomic classification tool to identify anomalous sequence origins. | Open Source |

| FastQC / MultiQC | Quality control visualization to detect overrepresented sequences (adapters/vectors). | Babraham Bioinformatics |

| BBTools (BBduk) | Toolkit for adapter trimming, quality filtering, and artifact removal. | DOE Joint Genome Institute |

| DNase/RNase Treatment Kits | Wet-lab reagent to degrade nucleic acids from previous experiments on lab surfaces. | Commercial suppliers (ThermoFisher, Qiagen) |

| UV Crosslinker | Equipment to irradiate and crosslink contaminating DNA/RNA on labware. | Laboratory equipment suppliers |

Addressing the contamination crisis in viral sequence databases is not merely a technical cleanup task but a foundational requirement for robust virological research and drug development. By implementing the rigorous experimental protocols and bioinformatic workflows outlined here, researchers can significantly improve the fidelity of generated data. This effort, framed within the broader thesis on database errors, is essential for ensuring that downstream analyses—from evolutionary studies to vaccine target identification—are built upon a reliable foundation.

Research within viral sequence databases (e.g., GenBank, GISAID, NMDC) is foundational to modern virology, epidemiology, and therapeutic development. A core thesis in this field identifies common errors in viral sequence databases as a critical impediment to robust science. While base-calling errors and contamination are often discussed, systematic metadata gaps—specifically missing collection date, geographic location, or host information—represent a pervasive, high-impact class of error. These gaps introduce severe biases, confounding phylogenetic reconstruction, evolutionary rate estimation, ecological niche modeling, and the identification of zoonotic origins. This whitepaper provides a technical guide on how these gaps skew analysis and offers protocols for mitigation.

Quantitative Impact: How Gaps Skew Key Analyses

The following tables summarize the quantitative effects of metadata incompleteness on common analytical outcomes.

Table 1: Impact of Metadata Gaps on Phylogenetic & Evolutionary Analysis

| Analysis Type | Complete Metadata Outcome | With Missing Collection Date | With Missing Location | With Missing Host |

|---|---|---|---|---|

| Evolutionary Rate (subs/site/year) | Accurate, time-calibrated estimate (e.g., 1e-3) | Rate underestimated; loss of temporal signal (e.g., 1e-4); inflated credibility intervals. | Potential geographic confounding of rate estimates. | Missed host-dependent rate variation. |

| TMRCA (Time to Most Recent Common Ancestor) | Precise date estimate (e.g., Oct 2021) | Biased, often artificially older TMRCA estimates. | Unaffected if population is panmictic; biased with population structure. | Unclear if divergence is due to time or host jump. |

| Phylogenetic Clustering (e.g., for outbreak tracking) | Clear spatiotemporal clusters identified. | Clusters based solely on genetic distance, misrepresenting transmission dynamics. | Inability to link transmission chains across regions. | Inability to discern human-to-human vs. animal spillover chains. |

| Positive Selection Detection (dN/dS) | Accurate identification of host-adaptation sites. | Time-dependence of dN/dS may be obscured. | May confound spatially-varying selection with other signals. | Critically skewed: Cannot attribute selection pressure to specific host environments. |

Table 2: Impact on Epidemiological & Ecological Models

| Model Type | Critical Metadata | Consequence of Gap |

|---|---|---|

| Spatial Spread Model | Precise geographic coordinates (or region) | Cannot reconstruct introduction routes or diffusion waves. Model fails to predict future spread. |

| Ecological Niche Model (Species Distribution) | Host species & Location | Overly broad, inaccurate predicted reservoir ranges; failed identification of zoonotic risk hotspots. |

| Phylogeographic Analysis | Location | Breaks in ancestral state reconstruction; unreliable inference of migration pathways. |

| Antigenic Cartography | Collection Date | Unable to track antigenic drift over time, reducing vaccine strain selection accuracy. |

Experimental Protocols for Assessing and Mitigating Metadata Gaps

Protocol 3.1: Quantifying Metadata Completeness in a Database

- Objective: Systematically audit a viral sequence dataset (e.g., all Betacoronavirus sequences in GenBank) for completeness of key fields.

- Materials: Database dump or API access (e.g., NCBI Entrez), scripting language (Python/R), structured query tools.

- Methodology:

- Data Retrieval: Use `Biopython` or `rentrez` to fetch sequence records with associated metadata.

- Field Parsing: For each record, parse the `collection_date`, `country`/`region`, and `host` fields.

- Completeness Scoring: Categorize each field as `Complete` (valid value), `Partial` (e.g., only year for date, only country for location), or `Missing`.

- Temporal Analysis: For dates, calculate the percentage with full resolution (YYYY-MM-DD). Plot completeness over time of submission.

- Cross-analysis: Determine if gaps correlate with specific submitting labs, host types, or geographic regions.
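The completeness-scoring step can be sketched as below. This is a minimal illustration, assuming records have already been fetched and parsed into dictionaries with `collection_date`, `country`, and `host` keys; the list of missing-value tokens, the function names, and the choice to count unrecognized date formats as `Missing` are assumptions of this sketch.

```python
import re
from collections import Counter

# Placeholder strings treated as absent values (an assumed, non-exhaustive list).
MISSING = {"", "na", "n/a", "unknown", "missing", "not collected", "not provided"}
MONTH = r"(Jan|Feb|Mar|Apr|May|Jun|Jul|Aug|Sep|Oct|Nov|Dec)"
FULL_DATE = re.compile(rf"\d{{4}}-\d{{2}}-\d{{2}}|\d{{2}}-{MONTH}-\d{{4}}")
PARTIAL_DATE = re.compile(rf"\d{{4}}(-\d{{2}})?|{MONTH}-\d{{4}}")


def _is_missing(value):
    return value is None or value.strip().lower() in MISSING


def score_date(value):
    """Complete = day resolution; Partial = year or month-year only."""
    if _is_missing(value):
        return "Missing"
    value = value.strip()
    if FULL_DATE.fullmatch(value):
        return "Complete"
    if PARTIAL_DATE.fullmatch(value):
        return "Partial"
    return "Missing"  # unrecognized formats are counted as missing here


def score_location(value):
    """Complete = 'Country: region'; Partial = country only."""
    if _is_missing(value):
        return "Missing"
    _, _, detail = value.partition(":")
    return "Complete" if detail.strip() else "Partial"


def score_host(value):
    return "Missing" if _is_missing(value) else "Complete"


def audit(records):
    """Tally completeness categories per field across parsed records."""
    summary = {"collection_date": Counter(), "country": Counter(), "host": Counter()}
    for rec in records:
        summary["collection_date"][score_date(rec.get("collection_date"))] += 1
        summary["country"][score_location(rec.get("country"))] += 1
        summary["host"][score_host(rec.get("host"))] += 1
    return summary
```

The per-field tallies returned by `audit` can then be grouped by submission year or submitting lab for the temporal and cross-analysis steps.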

Protocol 3.2: Simulating the Impact of Missing Dates on Evolutionary Rate Estimation

- Objective: Empirically demonstrate bias in Bayesian evolutionary rate estimation when dates are missing.

- Materials: BEAST2 package, sequence simulator (e.g., `pyvolve` or `Seq-Gen`), known phylogeny with known evolutionary rate.

- Methodology:

- Simulate Ground Truth: Simulate nucleotide sequences along a known tree with a pre-defined, time-calibrated evolutionary rate (e.g., 5e-4 subs/site/year). Assign each tip a precise date.

- Create Degraded Dataset: Randomly remove the precise date for a defined percentage (e.g., 30%, 50%) of tips, degrading them to only the year.

- Bayesian Inference: Run two parallel BEAST2 analyses:

- Analysis A: Use the complete, precise dates.

- Analysis B: Use the degraded date set.

- Compare Outputs: Compare the posterior distributions of the evolutionary rate and TMRCA from both analyses. The degraded analysis (B) is expected to show a wider 95% HPD (Highest Posterior Density) interval and a median rate biased towards lower values.
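The "Create Degraded Dataset" step can be sketched as below. This is a minimal illustration, assuming tip dates are held in a dictionary of ISO-formatted strings keyed by tip name; the function name `degrade_dates` and the fixed random seed are hypothetical choices made for reproducibility.

```python
import random


def degrade_dates(tip_dates, fraction, seed=0):
    """Truncate a random fraction of 'YYYY-MM-DD' tip dates to 'YYYY'.

    Returns a new dict; the input is left unchanged.
    """
    rng = random.Random(seed)          # seeded so the degraded set is reproducible
    tips = sorted(tip_dates)           # fixed order before sampling
    n_degrade = round(fraction * len(tips))
    chosen = set(rng.sample(tips, n_degrade))
    return {tip: (date[:4] if tip in chosen else date)
            for tip, date in tip_dates.items()}


if __name__ == "__main__":
    dates = {f"tip{i}": f"2021-{i + 1:02d}-15" for i in range(10)}
    degraded = degrade_dates(dates, fraction=0.3)
    print(sum(len(d) == 4 for d in degraded.values()), "of", len(degraded), "tips degraded")
```

The original dictionary feeds Analysis A and the degraded one feeds Analysis B, so the two runs differ only in date resolution.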

Visualization: The Cascade of Analytical Errors

Title: How Metadata Gaps Cascade to Poor Public Health Outcomes

Title: Workflow for Metadata Curation and Validation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Metadata-Rich Viral Research

| Tool/Reagent Category | Specific Example(s) | Function & Relevance to Metadata Integrity |

|---|---|---|

| Standardized Collection Kits | NIH/NIAID BEI Resources protocols, WHO specimen kits. | Ensure host, date, and location are recorded at source with standardized formats, minimizing initial gaps. |

| Laboratory Information Management System (LIMS) | Benchling, LabArchives, Freezerworks. | Digitally tracks specimen from collection through sequencing, automatically propagating metadata to sequence files. |

| Metadata Validation Software | metaGrab, Keemei (for Google Sheets), INSDC submission tools. | Checks for format compliance, controlled vocabulary (e.g., NCBI Taxonomy ID for host), and logical consistency before database submission. |

| Phylogenetic Software with Tip-Dating | BEAST2, MrBayes 3.2+. | Explicitly models sampling dates to estimate evolutionary rates, but requires complete date metadata for accuracy. |

| Spatial Analysis Packages | seraphim (for BEAST), BiogeoBEARS, phylogeo (R). | Reconstructs viral spatial spread; dependent on precise location metadata for reliable output. |

| Public Database APIs & Clients | NCBI Entrez (via Biopython), GISAID API, IRD/VRP tools. | Programmatic access to retrieve sequences with associated metadata for large-scale, reproducible analyses. |

| Data Harmonization Tools | MicrobeTrace, Nextstrain augur pipelines. | Standardize and align metadata from disparate sources into a unified format for combined analysis. |

This whitepaper, framed within a broader thesis on common errors in viral sequence databases, addresses two pervasive issues compromising the integrity of viromics and viral genomics research: mislabeled strains and chimeric sequences. These inconsistencies introduce significant noise into downstream analyses, affecting evolutionary studies, diagnostic assay design, and therapeutic target identification. For researchers, scientists, and drug development professionals, recognizing and mitigating these errors is critical for robust research outcomes.

Recent literature (2023-2024) and database advisories indicate a non-negligible prevalence of taxonomic and annotation issues.

Table 1: Prevalence of Taxonomic and Sequence Artifacts in Public Repositories

| Database / Study | Error Type | Estimated Prevalence | Primary Impact |

|---|---|---|---|

| NCBI Nucleotide (Advisory Notes) | Mislabeled/Misidentified Organisms | ~0.5-1% of entries* | Phylogenetic misplacement, incorrect host attribution |

| Public Viral Isolate Collections | Cross-contamination / Mislabeling | 1-3% (based on re-sequencing audits) | Compromised reference strains for assay development |

| High-Throughput Sequencing Studies | Chimeric Amplicons (e.g., in SARS-CoV-2) | Up to 2% of reads in some amplicon protocols | Spurious recombinant variants, false single nucleotide polymorphisms (SNPs) |

| Metagenomic Assemblies (Virome Studies) | Computational Chimeras | Varies widely (0.1-5%) based on assembler and overlap settings | Artificial genes, inflated diversity estimates |

*Note: Prevalence estimates are extrapolated from periodic NCBI screening reports and user submissions. The true figure is challenging to quantify globally.

Protocols for Detection and Resolution

Protocol A: Validating Strain Identity and Detecting Mislabeling

Objective: To confirm the taxonomic identity of a viral isolate or sequence entry.

Materials:

- Target Sequence(s): The viral genome sequence(s) in question (FASTA format).

- Verified Reference Sequences: High-quality, trusted reference genomes for the suspected true and labeled taxa (from authoritative sources like ICTV reference lists).

- Computational Tools: BLASTn, k-mer based tools (Kraken2, Bracken), phylogenetic inference software (MAFFT, IQ-TREE).

Methodology:

- Whole-Genome Similarity Search: Run a BLASTn search of the target sequence against a curated database of reference genomes. BLASTn reports local alignments, so assess the top hit by both percent identity and query coverage for an initial identity check.

- k-mer Composition Analysis: Classify the sequence using Kraken2 with a minimal database containing only viral genomes. Discrepancy between the k-mer classification and the original label is a strong indicator of mislabeling.

- Phylogenetic Triangulation: a. Generate a multiple sequence alignment (MSA) using MAFFT with the target sequence, the reference genome for the labeled taxon, and the reference genome for the top BLAST/k-mer hit (suspected true taxon). b. Include outgroup sequences from a related viral genus. c. Construct a maximum-likelihood phylogeny (IQ-TREE with ModelFinder). d. Interpretation: The target sequence should cluster monophyletically with its true reference clade with high bootstrap support (>90%). Nesting within or sister relationship to a different taxon confirms mislabeling.
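The idea behind the k-mer composition check can be illustrated with a toy comparison. This is a minimal sketch, not a substitute for Kraken2: it assumes a small dictionary of trusted reference sequences keyed by taxon name and uses Jaccard similarity of k-mer sets, a swapped-in simplification of Kraken2's classification. The function names and the default `k` are hypothetical.

```python
def kmers(seq, k=8):
    """Set of all overlapping k-mers in a sequence."""
    seq = seq.upper()
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def jaccard(a, b):
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def check_label(query, label, references, k=8):
    """Compare a query's k-mer profile to each reference and test its label.

    `references` maps taxon name -> trusted reference sequence.
    """
    query_kmers = kmers(query, k)
    scores = {taxon: jaccard(query_kmers, kmers(ref, k))
              for taxon, ref in references.items()}
    best = max(scores, key=scores.get)
    return {"best_match": best, "scores": scores, "label_conflict": best != label}


if __name__ == "__main__":
    refs = {
        "taxon_a": "ATGGCGTACGTTAGCCTAGGATCCGATTACA",
        "taxon_b": "TTGACCGGTAAACCCGGGTTTAAACGCGCGT",
    }
    query = refs["taxon_a"][2:-2]
    print(check_label(query, "taxon_b", refs, k=6)["label_conflict"])
```

A label conflict here is only a prompt for the phylogenetic triangulation step, since short or divergent sequences can score poorly against every reference.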

Title: Workflow for Detecting Mislabeled Viral Sequences

Protocol B: Detecting and Deconstructing Chimeric Sequences

Objective: To identify chimeras formed via laboratory (PCR) or computational (assembly) artifacts.

Materials:

- Sequence Data: For amplicon-based studies: raw paired-end reads. For assembled contigs: the contig sequence and original reads if available.

- Reference Genome: A close reference for the expected virus.

- Tools: Read mapping tools (Bowtie2, BWA), chimera detection tools (UCHIME2, DECIPHER), assembly visualization software (Geneious, IGV).

Methodology for In Silico Detection:

- Read-Mapping Inspection: Map all reads back to the suspected chimeric contig or amplicon consensus using Bowtie2. Visualize in IGV. A sudden, sustained drop in read coverage at a specific point can indicate a fusion junction of two distinct parent sequences.

- Reference-Based Chimera Check:

a. Use the `--uchime_ref` command in VSEARCH. Provide the suspected sequence as the query and a database of non-chimeric reference sequences as the reference.

b. The algorithm performs pairwise global alignments and reports a classification (non-chimeric, chimeric, or borderline). A chimeric call typically shows two high-identity segments mapping to different parent references.

- De Novo Chimera Detection (for metagenomes without references):

a. Use a de novo, abundance-aware detector such as `--uchime_denovo` in VSEARCH on the dereplicated sequence set.

b. The method identifies sequences likely to be composites of two more abundant "parent" sequences.

- Experimental Validation (Required for Confirmation): Re-amplify the target region using primers designed to be specific to each putative parent sequence segment identified in silico. Sanger sequence the products. The original full-length amplicon should only be generated in a mixed-template PCR.
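The logic of a reference-based chimera call can be illustrated with a toy two-parent model. This is a simplified sketch of the general idea, not the UCHIME algorithm: it assumes the query and all candidate parents are already aligned to the same length, tries every breakpoint, and asks whether two different parents explain the query better than any single one. The function names and the `min_segment` parameter are hypothetical.

```python
def matches(a, b):
    """Number of identical positions between two equal-length strings."""
    return sum(x == y for x, y in zip(a, b))


def best_two_parent_model(query, references, min_segment=20):
    """Find the breakpoint where two different parents best explain the query.

    `references` maps name -> sequence aligned to `query` (same length).
    Returns None when no breakpoint has different best parents on each side.
    'gain' is the identity improvement over the best single parent.
    """
    n = len(query)
    best_single = max(matches(query, ref) for ref in references.values()) / n
    best = None
    for bp in range(min_segment, n - min_segment + 1):
        left = max(references, key=lambda r: matches(query[:bp], references[r][:bp]))
        right = max(references, key=lambda r: matches(query[bp:], references[r][bp:]))
        if left == right:
            continue  # one parent explains both sides at this breakpoint
        identity = (matches(query[:bp], references[left][:bp])
                    + matches(query[bp:], references[right][bp:])) / n
        if best is None or identity > best["identity"]:
            best = {"breakpoint": bp, "left_parent": left,
                    "right_parent": right, "identity": identity}
    if best is not None:
        best["gain"] = best["identity"] - best_single
    return best
```

A large `gain` suggests a composite sequence, but the same pattern is produced by genuine recombination, which is why the experimental validation step remains necessary. The exhaustive breakpoint scan is quadratic in sequence length and is meant for illustration on short amplicons.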

Title: Chimeric Sequence Identification and Validation Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Addressing Taxonomic and Chimeric Errors

| Item | Function/Application | Example/Note |

|---|---|---|

| Authenticated Reference Strains | Gold-standard controls for phylogenetic placement and assay validation. | Obtain from recognized repositories (ATCC, NCPV, BEI Resources). |

| High-Fidelity Polymerase | Reduces PCR errors and limits chimera formation during amplification. | Enzymes like Q5 (NEB) or Phusion (Thermo Fisher). |

| Synthetic Control Sequences | Spike-in controls for metagenomic studies to detect cross-sample contamination and assembly artifacts. | Exogenous or non-natural genomes (e.g., PhiX or custom designs). |

| Blocking Oligonucleotides | Suppress amplification of contaminating host or common lab-strain DNA in PCRs. | Used in viral metagenomics to enrich for target viruses. |

| UMI (Unique Molecular Identifier) Adapters | Tags each original molecule before PCR to trace and collapse duplicates, identifying PCR/sequencing artifacts. | Critical for distinguishing low-frequency real variants from chimeric artifacts in amplicon sequencing. |

| In Silico Reference Databases | Curated, non-redundant sequence sets for accurate classification and chimera checking. | Use curated viral RefSeq subsets; avoid the complete, uncurated NCBI nt/nr databases. |

| Bioinformatics Pipelines | Automated, reproducible workflows for quality control and error screening. | Nextflow/Snakemake pipelines incorporating tools like FastQC, Bowtie2, VSEARCH, and Kraken2. |

The Impact of Submission Volume and Automated Processing on Error Proliferation

The exponential growth of viral sequence data, driven by high-throughput sequencing and global surveillance initiatives, has created an unparalleled resource for biomedical research. This data underpins critical efforts in outbreak tracking, vaccine design, and therapeutic development. However, its utility is fundamentally contingent upon data integrity. This whitepaper, framed within the broader thesis on common errors in viral sequence databases, examines the double-edged role of high submission volume and automated bioinformatics pipelines in the proliferation of errors. We argue that the very mechanisms designed to handle scale often become vectors for systematic inaccuracy.

Submission Phase Errors

Errors originate at the point of data generation and submission. Key factors include:

- Sample Contamination: Cross-contamination between samples or with host/vector sequences.

- Sequencing Artifacts: PCR recombination, chimeric reads, and base-calling errors, especially in homopolymeric regions.

- Inadequate Metadata: Missing or mislabeled critical information (host, collection date, geographic location).

Automated Processing Amplification

Automated pipelines, while essential for processing large datasets, can systematically amplify these initial errors:

- Reference Bias: Assembly and mapping algorithms biased toward a reference genome can misrepresent novel variants or recombinants.

- Error-Prone Automation: Automated annotation tools that propagate functional predictions from outdated or incorrect reference entries.

- Lack of Curation Scalability: Automated submissions bypass manual curation checks; downstream quality control filters are often permissive to avoid rejecting valid submissions, so erroneous entries pass through.

Quantitative Analysis of Error Rates

Recent studies provide measurable evidence of error proliferation linked to database scale and automation. The following table summarizes key findings.

Table 1: Documented Error Rates and Sources in Viral Sequence Databases

| Error Type | Study/Source (2023-2024) | Estimated Frequency | Primary Amplifying Factor |

|---|---|---|---|

| Misannotated Host | Re-analysis of public betacoronavirus data | ~8-12% of entries | Automated metadata parsing from template fields |

| Chimeric Sequences | Analysis of HCV & HIV-1 NGS datasets | 1-5% of assembled genomes | Inadequate chimera detection in assembly pipelines |

| Contaminant Reads | Review of SARS-CoV-2 wastewater sequences | Up to 15% of samples | Lack of specific filtration in automated host removal |

| Incorrect Coding Sequences (CDS) | Audit of flavivirus entries in GenBank | ~10% of entries have CDS issues | Propagation of historical annotation errors via automated tools |

Experimental Protocol for Error Detection and Validation

To investigate and quantify errors, a robust experimental and computational validation protocol is required.

Protocol 4.1: In Silico Audit of Database Entries

- Dataset Retrieval: Programmatically download target viral sequence datasets (e.g., all Orthopoxvirus entries) from INSDC databases using APIs.

- Metadata Consistency Check: Parse and validate metadata fields (host, country, collection date) against controlled vocabularies; flag entries with mismatches or empty required fields.

- Sequence Quality Assessment: Calculate per-sequence statistics (N-content, ambiguous bases, length deviation from median). Filter sequences with >5% Ns or anomalous length.

- Contamination Screening: Align all sequences to a composite database of potential contaminants (host genomes, vectors, common lab strains) using BLASTn or minimap2. Flag sequences with high-identity secondary alignments.

- Phylogenetic Anomaly Detection: Perform multiple sequence alignment (MAFFT) and construct a maximum-likelihood tree (IQ-TREE). Visualize to identify sequences with strong topological incongruence (potential mislabeling or recombination).
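The sequence quality assessment step of Protocol 4.1 can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function names are hypothetical, and the 10% length-deviation cutoff is an assumption, since the protocol only says "anomalous length". The 5% N cutoff comes from the protocol itself.

```python
from statistics import median


def n_fraction(seq: str) -> float:
    """Fraction of positions that are N (case-insensitive); empty input counts as all-N."""
    return seq.upper().count("N") / len(seq) if seq else 1.0


def flag_sequences(seqs, max_n=0.05, max_len_dev=0.10):
    """Return {sequence_id: [reasons]} for entries failing the N-content or length check.

    max_n follows the >5% N rule in the protocol; max_len_dev (relative deviation
    from the median length) is an assumed cutoff chosen for illustration.
    """
    med = median(len(s) for s in seqs.values())
    flags = {}
    for seq_id, seq in seqs.items():
        reasons = []
        if n_fraction(seq) > max_n:
            reasons.append("high_N")
        if abs(len(seq) - med) / med > max_len_dev:
            reasons.append("length_outlier")
        if reasons:
            flags[seq_id] = reasons
    return flags


# Toy input: four made-up sequences, not real database entries.
example = {
    "seqA": "ACGT" * 25,              # 100 bp, no Ns
    "seqB": "ACGT" * 24 + "NNNN",     # 100 bp, 4% N (passes)
    "seqC": "ACGT" * 20 + "N" * 20,   # 100 bp, 20% N
    "seqD": "ACGT" * 10,              # 40 bp, far below the median length
}
flagged = flag_sequences(example)
```

In practice the sequences would come from a FASTA parser, and the cutoffs would be tuned per virus family.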

Protocol 4.2: Experimental Validation of Suspected Errors

- Wet-Lab Re-extraction & Sequencing: For flagged sequences originating from accessible physical samples, perform new RNA/DNA extraction.

- Orthogonal Verification: Use an alternative sequencing platform (e.g., Oxford Nanopore if original was Illumina) and/or Sanger sequencing of specific genomic regions.

- Data Reconciliation: Compare the newly generated high-confidence sequence to the original public entry. Document the nature of any discrepancies (e.g., SNP, indel, contaminant).

Visualizing the Error Proliferation Workflow

The relationship between high volume, automation, and error proliferation is conceptualized in the following feedback loop.

Diagram 1: Error Proliferation Cycle in Viral Genomics

The Scientist's Toolkit: Research Reagent Solutions

Critical tools and resources for conducting error-aware viral genomics research.

Table 2: Essential Toolkit for Mitigating Database Errors

| Item / Resource | Function & Rationale |

|---|---|

| NCBI Virus Datasets API | Programmatic access to download large, specific datasets with consistent metadata for controlled analysis. |

| BBTools Suite (bbduk.sh) | Effective removal of host and common contaminant sequences using k-mer matching prior to assembly. |

| UViG/Chainer | Specialized tool for detecting chimeric sequences in viral genomes from NGS data. |

| Nextclade CLI | Provides standardized quality checks (missing data, mixed sites, frame shifts) against a curated reference. |

| CheckV | Assesses the completeness and identifies potential contamination in viral genome sequences. |

| Phycode | A curated database of expected phylogenetic relationships used to flag taxonomically anomalous sequences. |

| Sanger Sequencing Reagents | Gold-standard orthogonal validation of specific genomic regions flagged by in silico audits. |

The integrity of viral sequence databases is compromised by a systemic cycle where volume necessitates automation, and insufficiently validated automation introduces and spreads errors. To break this cycle, the field must adopt:

- Stricter Submission Standards: Enforce mandatory completeness checks for metadata and raw read deposition.

- Improved Pipeline Governance: Integrate multiple, orthogonal error-detection modules (e.g., Chainer, CheckV) as default in public processing workflows.

- Community-Led Curation: Develop scalable, versioned community annotation platforms to correct errors without removing original data.

For researchers and drug developers, a stance of "trust but verify" is essential. Cross-database validation and experimental confirmation of critical sequences must become routine practice to ensure the foundation of viral research is robust and reliable.

Best Practices for Navigating and Interrogating Viral Databases Safely

Within the broader thesis on Common Errors in Viral Sequence Databases, the accuracy of submitted data is the foundational pillar for all downstream research. Errors introduced at the point of submission—ranging from metadata mislabeling and sequence contamination to incorrect isolate names and geographical origin—propagate through databases, compromising evolutionary analyses, drug target identification, and public health surveillance. This whitepaper provides a comprehensive pre-submission quality control (QC) checklist and technical guide to empower researchers to minimize these entry errors at the source.

The Imperative for Rigorous Pre-submission QC

The reliance on viral sequence databases for critical applications like vaccine design, antiviral drug development, and outbreak tracing (e.g., SARS-CoV-2 variants, influenza surveillance) demands impeccable data integrity. Common entry errors fall into five categories, each with a significant downstream impact, as summarized in Table 1.

Table 1: Common Entry Errors and Their Impact on Viral Database Research

| Error Category | Specific Examples | Potential Impact on Research |

|---|---|---|

| Metadata Errors | Incorrect collection date, host species, geographical location. | Skews evolutionary rate calculations, misleads phylogeographic studies. |

| Sample/Contamination | Cross-contamination, residual host genomic nucleic acid. | Generates chimeric or misleading sequences, false positive variant calls. |

| Sequence Quality | Poor base-calling, uncalled bases (N's), adapter presence. | Obscures true genetic variation, hinders consensus building. |

| Nomenclature & Labeling | Non-standard isolate names, inconsistent lineage labels. | Causes dataset redundancy, complicates data retrieval and integration. |

| Annotation Errors | Incorrect gene boundaries, flawed protein translations. | Misidentifies functional regions, leads to incorrect structural models. |

Pre-submission QC Checklist: A Step-by-Step Guide

This checklist outlines a systematic workflow for validating data prior to deposition in public repositories like GenBank, GISAID, or ENA.

Phase 1: Raw Data and Metadata Verification

- Metadata Auditing: Verify all sample metadata (isolate name, host, collection date [YYYY-MM-DD], location [with GPS coordinates if possible], submitting lab details) against laboratory records. Use controlled vocabularies.

- Ethics & Compliance: Confirm all necessary permits and ethical approvals are documented for sharing.
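The metadata audit in Phase 1 lends itself to a scripted check. The sketch below assumes a record is a plain dictionary. The field names, the three-entry host vocabulary, and the problem labels are all hypothetical and would be replaced by the target repository's actual submission template and controlled vocabulary.

```python
import re
from datetime import date

# Hypothetical field names and vocabulary, for illustration only.
REQUIRED_FIELDS = ("isolate", "host", "collection_date", "country")
HOST_VOCABULARY = {"Homo sapiens", "Sus scrofa", "Gallus gallus"}
ISO_DATE = re.compile(r"\d{4}-\d{2}-\d{2}")


def audit_record(record, today=None):
    """Return a list of problem labels for one metadata record (empty list = clean)."""
    today = today or date.today()
    problems = [f"missing:{f}" for f in REQUIRED_FIELDS if not record.get(f, "").strip()]

    host = record.get("host", "").strip()
    if host and host not in HOST_VOCABULARY:
        problems.append("host_not_in_vocabulary")

    raw = record.get("collection_date", "").strip()
    if raw:
        if not ISO_DATE.fullmatch(raw):
            problems.append("date_not_YYYY-MM-DD")
        else:
            try:
                collected = date.fromisoformat(raw)
            except ValueError:          # e.g. 2021-02-30
                problems.append("date_invalid")
            else:
                if collected > today:
                    problems.append("date_in_future")
    return problems


# Made-up example records.
good = {"isolate": "virus/X/1/2021", "host": "Homo sapiens",
        "collection_date": "2021-03-04", "country": "Kenya"}
bad = {"isolate": "x", "host": "human",
       "collection_date": "04/03/2021", "country": ""}
```

Returning labels rather than raising on the first problem lets a submitter see every issue in a batch at once.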

Phase 2: In silico Sequence Validation

- Contamination Screening: Screen reads against the host genome (e.g., human, mouse) and common contaminants (e.g., mycoplasma, E. coli) using tools like BLAST or Kraken2. Remove contaminant reads.

- Quality Trimming: Use tools like Trimmomatic or Fastp to remove adapter sequences and low-quality bases (e.g., Q-score < 20). Set a minimum length threshold.

- Error Correction: For assembled genomes, verify consensus sequence by mapping reads back to the assembly (e.g., using BWA/IGV). Check for regions of low coverage or high heterogeneity.

- Chimera Check: For amplicon-based sequences (e.g., HIV pol), use tools like UCHIME or DECIPHER to detect and remove chimeric sequences.

Phase 3: Biological & Taxonomic Plausibility

- BLAST Validation: Confirm the sequence's closest matches are from the expected viral taxon and host.

- Open Reading Frame (ORF) Check: Verify major viral ORFs are intact and free of unexpected stop codons (unless documenting a genuine mutation).

- Phylogenetic Sanity Check: Perform a quick preliminary phylogenetic analysis with closely related reference sequences. Ensure the new sequence clusters as expected; outliers may indicate a labeling or contamination issue.
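The ORF check in Phase 3 can be approximated with a small translation routine. The sketch below uses the standard genetic code (NCBI translation table 1). It assumes the input is a single, already-extracted coding sequence on the forward strand, and the issue labels are hypothetical. It does not model programmed ribosomal frameshifting or spliced transcripts, so ORFs that rely on those mechanisms need separate handling.

```python
from itertools import product

# Standard genetic code (NCBI table 1), codons ordered with bases T, C, A, G.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)}


def translate(cds: str) -> str:
    """Translate complete codons only; codons with ambiguous bases become 'X'."""
    cds = cds.upper().replace("U", "T")
    usable = len(cds) - len(cds) % 3
    return "".join(CODON_TABLE.get(cds[i:i + 3], "X") for i in range(0, usable, 3))


def check_orf(cds: str) -> list:
    """Return a list of issue labels for one coding sequence (empty list = intact)."""
    issues = []
    if len(cds) % 3:
        issues.append("length_not_multiple_of_3")
    protein = translate(cds)
    if not protein.startswith("M"):
        issues.append("no_start_codon")
    if "*" in protein[:-1]:
        issues.append("internal_stop")
    if not protein.endswith("*"):
        issues.append("no_terminal_stop")
    return issues
```

For example, `check_orf("ATGTAAGCTTAA")` reports an internal stop, which is then either a genuine mutation to document or an assembly error to investigate.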

Phase 4: Final Formatting for Submission

- Nomenclature Compliance: Adhere to database-specific formatting rules for isolate names, gene annotations, and source modifiers.

- File Formatting: Ensure sequence file (FASTA) is correctly formatted, with a descriptive definition line. Verify associated metadata file (e.g., CSV, TSV) aligns perfectly with sequence records.

- Dual Review: Have a second researcher independently review all metadata and annotations against the original source documents.
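The file formatting step in Phase 4 asks that the metadata file align with the sequence records. A minimal sketch of that cross-check follows. It assumes the first whitespace-delimited token of each FASTA definition line is the record identifier and that the metadata is a TSV with an identifier column, here given the hypothetical name `isolate`.

```python
import csv
import io


def fasta_ids(handle) -> list:
    """Collect the first token of every FASTA definition line, in file order."""
    ids = []
    for line in handle:
        if line.startswith(">"):
            fields = line[1:].split()
            ids.append(fields[0] if fields else "")
    return ids


def compare_ids(fasta_handle, tsv_handle, id_column="isolate") -> dict:
    """Report duplicated FASTA IDs and IDs present in only one of the two files."""
    seq_ids = fasta_ids(fasta_handle)
    meta_ids = [row[id_column] for row in csv.DictReader(tsv_handle, delimiter="\t")]
    return {
        "duplicate_in_fasta": sorted({i for i in seq_ids if seq_ids.count(i) > 1}),
        "missing_metadata": sorted(set(seq_ids) - set(meta_ids)),
        "missing_sequence": sorted(set(meta_ids) - set(seq_ids)),
    }


# Made-up in-memory files standing in for the real submission files.
fasta_text = ">s1 example isolate\nACGT\n>s2\nACGT\n>s2\nAAAA\n"
tsv_text = "isolate\thost\ns1\tHomo sapiens\ns3\tHomo sapiens\n"
report = compare_ids(io.StringIO(fasta_text), io.StringIO(tsv_text))
```

An empty list in all three categories is the condition for the files to "align perfectly" in the sense of the checklist.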

Experimental Protocols for Key Cited QC Steps

Protocol 1: In silico Host Contamination Screening with Kraken2

- Objective: Identify and quantify reads originating from the host organism.

- Methodology:

- Database Building: Download the host reference genome (e.g., GRCh38 for human). Build a custom Kraken2 database:

kraken2-build --download-taxonomy --db ./host_db && kraken2-build --add-to-library host_genome.fna --db ./host_db && kraken2-build --build --db ./host_db

- Classification: Run Kraken2 on raw sequencing reads (R1.fq, R2.fq):

kraken2 --db ./host_db --paired R1.fq R2.fq --output classifications.kraken --report report.kraken2

- Interpretation: Analyze the report file. A high percentage of reads classified as "Homo sapiens" indicates significant host contamination requiring additional wet-lab or bioinformatic depletion.

- Reagents: Custom Kraken2 database, raw FASTQ files.
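The interpretation step of the Kraken2 protocol can be scripted instead of read by eye. The sketch below assumes the standard tab-separated Kraken2 report layout, in which the first column is the percentage of fragments in the clade and the last column is the (indented) scientific name. The function name and the sample lines are made up for illustration.

```python
def clade_percentage(report_lines, taxon_name: str) -> float:
    """Return the clade-level percentage for taxon_name, or 0.0 if it is absent.

    Assumes a tab-separated Kraken2 report: column 1 = percent of fragments
    in the clade, last column = scientific name with leading indentation.
    """
    for line in report_lines:
        cols = line.rstrip("\n").split("\t")
        if len(cols) < 6:
            continue  # skip blank or malformed lines
        if cols[-1].strip() == taxon_name:
            return float(cols[0])
    return 0.0


# Made-up report lines in the assumed format, not real output.
sample_report = [
    " 12.50\t125\t125\tU\t0\tunclassified\n",
    " 87.50\t875\t0\tR\t1\troot\n",
    " 85.00\t850\t850\tS\t9606\t        Homo sapiens\n",
]
host_pct = clade_percentage(sample_report, "Homo sapiens")
```

With a real run, the lines would come from `open("report.kraken2")`. What counts as a "high" host percentage depends on sample type and is left to the analyst.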

Protocol 2: Phylogenetic Sanity Check using MAFFT and FastTree

- Objective: Provide a rapid phylogenetic placement to detect major labeling errors.

- Methodology:

- Sequence Alignment: Gather the new sequence and 10-20 relevant reference sequences from a trusted database. Perform multiple sequence alignment using MAFFT:

mafft --auto input_sequences.fasta > aligned_sequences.fasta

- Tree Inference: Generate an approximate maximum-likelihood tree with FastTree:

FastTree -gtr -nt aligned_sequences.fasta > preliminary_tree.tree

- Visualization & Evaluation: View the tree in software like FigTree or iTOL. The new sequence should cluster with isolates from the same viral species/lineage, host, and approximate collection timeframe. Investigate any unexpected placement.

Visualizations

Pre-submission QC Workflow & Error Loop

Error Sources & Corresponding QC Checkpoints

The Scientist's Toolkit: Research Reagent & Software Solutions

Table 2: Essential Tools for Viral Sequence Pre-submission QC

| Tool/Reagent Name | Category | Primary Function in QC |

|---|---|---|

| FastQC / MultiQC | Software | Provides an initial visual report on raw read quality, per-base sequence content, adapter contamination, and GC content. |

| Trimmomatic / Fastp | Software | Performs adapter trimming and quality filtering of raw sequencing reads to remove low-quality data. |

| Kraken2 / BLAST | Software | Identifies taxonomic origin of reads to flag host or environmental contamination. |

| Bowtie2 / BWA | Software | Maps sequencing reads to a reference genome for consensus validation and coverage analysis. |

| MAFFT / MUSCLE | Software | Aligns multiple nucleotide or amino acid sequences for phylogenetic and ORF analysis. |

| Integrative Genomics Viewer (IGV) | Software | Visualizes read mappings against a reference to inspect coverage, variants, and potential assembly errors manually. |

| Qubit Fluorometer & dsDNA HS Assay | Wet-Lab Reagent | Accurately quantifies double-stranded DNA concentration (e.g., cDNA or prepared libraries); RNA requires a separate RNA-specific assay. Critical for library prep success. |

| Agarose Gel Electrophoresis | Wet-Lab Protocol | Provides a qualitative check for nucleic acid integrity and size, detecting degradation or adapter dimer. |

| Negative Control (NTC) | Wet-Lab Control | Critical for detecting cross-contamination during PCR amplification or library preparation steps. |

| Reference Genome (Curated) | Data | A high-quality, annotated genome sequence for the target virus is essential for mapping, annotation, and comparison. |

Implementing a rigorous, multi-stage pre-submission QC protocol is a non-negotiable step in responsible viral sequence data generation. By systematically addressing errors in metadata, sequence quality, and biological plausibility before database entry, researchers directly enhance the reliability of the global data commons. This proactive approach mitigates one of the core vulnerabilities outlined in the thesis on database errors, thereby strengthening the foundation for all subsequent research in epidemiology, evolution, and therapeutic development.

This guide is framed within a critical research thesis examining pervasive errors in viral sequence databases. Common issues include misannotation, chimeric sequences, host-genome contamination, poor sequencing quality, and incomplete metadata. These errors propagate through research, compromising genomic analyses, epidemiological tracking, drug target identification, and vaccine development. This whitepaper provides an in-depth technical guide to designing robust bioinformatic queries and filtering strategies essential for isolating high-quality viral sequences from noisy, error-prone databases.

Core Filtering Dimensions and Quantitative Benchmarks

Effective filtering operates across multiple dimensions. The following table summarizes key metrics, typical error rates observed in public databases (e.g., GenBank, SRA, GISAID), and recommended thresholds for high-quality sequence isolation.

Table 1: Quantitative Benchmarks for Viral Sequence Filtering

| Filtering Dimension | Common Error/Issue | Observed Error Rate in Public DBs* | Recommended Threshold for "High-Quality" |

|---|---|---|---|

| Sequence Length | Truncated/partial genes; assembly fragments. | ~15-30% of entries are <80% of expected length. | Within ±10% of expected genome/gene length for the virus. |

| Ambiguous Bases (N/X) | Low-quality sequencing reads; gaps in assembly. | ~12% of viral entries have >1% ambiguous bases. | ≤ 0.5% ambiguous bases (N/X) for reference-grade sequences. |

| Host Contamination | Adherent host/cell line sequences in viral prep. | Up to 5% in cell-culture derived sequences. | Zero alignment to host genome (using strict BLASTN/TBLASTX). |

| Sequence Complexity | Low-complexity regions or cloning artifacts. | Prevalence varies by sequencing method. | Pass DUST or entropy filter; no poly-A/T tails >20bp unless genomic. |

| Read Depth/Coverage | Uneven coverage leading to consensus errors. | Not applicable to assembled sequences; critical for raw data. | Mean coverage ≥50x; no genomic positions with coverage <10x. |

| Base Quality (Q-score) | High probability of base-calling errors. | ~8% of SRA runs have median Q-score <30. | ≥95% of bases with Q-score ≥30 (Phred scale). |

| Chimera Detection | Artificially joined sequences from different strains. | Estimated 1-3% in some environmental viral datasets. | Pass multiple chimera-check tools (UCHIME, ChimeraSlayer). |

| Taxonomic Consistency | Misannotation of viral species or strain. | Up to 10% in broad "viral" categories per recent audits. | BLAST top hit E-value <1e-50 & percent identity >90% to claimed taxon. |

*Rates are synthesized from recent audits (e.g., NCBI's contaminated genomes report, 2023; GISAID quality annotations; published methodology papers).

Experimental Protocols for Validation

Protocol 1: In Silico Validation of Sequence Integrity

Objective: To computationally verify that a candidate viral genome is complete, uncontaminated, and free of major artifacts.

Methodology:

- Length & Ambiguity Filter: Retrieve sequences. Discard any where (Sequence Length) / (Expected Reference Length) ∉ [0.9, 1.1] OR (Count of N, X) / (Total Length) > 0.005.

- Host Contamination Screen: Align all passing sequences against a relevant host genome (e.g., human GRCh38, Vero cell line) using BLASTN with stringent parameters (-task megablast -evalue 1e-50). Discard sequences with any significant alignment (>50 bp at >95% identity).

- Chimera Check: Use the vsearch --uchime_denovo algorithm on sequences clustered at 99% identity. Visually confirm potential chimeras in alignment viewers (e.g., Geneious, UGENE).

- Taxonomic Verification: Perform BLASTN against the NCBI nt/RefSeq viral database. Confirm the top hit's taxonomy matches the submitted annotation. Flag sequences where the top hit identity is <90% or where the second hit is a different species with near-identical score.
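The taxonomic verification rule at the end of Protocol 1 can be expressed as a small decision function. The sketch below assumes the BLAST hits have already been parsed and joined to species names, which default tabular BLAST output does not include. The `Hit` type, the flag labels, and the 1% bitscore tolerance used to define "near-identical score" are illustrative assumptions.

```python
from typing import NamedTuple


class Hit(NamedTuple):
    species: str      # species name resolved for the subject sequence (assumed available)
    pident: float     # percent identity reported by BLAST
    bitscore: float   # bit score reported by BLAST


def taxonomy_flags(claimed_species, hits, min_identity=90.0, score_tol=0.01):
    """Return flag labels for one query; an empty list means the annotation looks consistent."""
    if not hits:
        return ["no_hits"]
    ranked = sorted(hits, key=lambda h: h.bitscore, reverse=True)
    top = ranked[0]
    flags = []
    if top.species != claimed_species:
        flags.append("top_hit_mismatch")
    if top.pident < min_identity:
        flags.append("low_identity")
    # "Near-identical score": within score_tol of the top bit score (assumed definition).
    for other in ranked[1:]:
        if other.species != top.species and other.bitscore >= top.bitscore * (1 - score_tol):
            flags.append("ambiguous_species")
            break
    return flags


# Made-up hits for illustration.
clean = [Hit("Dengue virus 2", 98.0, 1000.0), Hit("Dengue virus 1", 80.0, 500.0)]
suspect = [Hit("Zika virus", 88.0, 900.0), Hit("Spondweni virus", 87.5, 895.0)]
```

Here `taxonomy_flags("Dengue virus 2", suspect)` raises all three flags, which would send the record to manual review rather than automatic rejection.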

Protocol 2: Wet-Lab Validation via Sanger Sequencing of Key Regions

Objective: To empirically confirm the fidelity of consensus sequences derived from high-throughput methods for critical genomic regions.

Methodology:

- Primer Design: Design PCR primers flanking key regions of interest (e.g., spike protein RBD for coronaviruses, polymerase gene for influenza). Target 3-5 regions per genome (~500-800bp each).

- Amplification: Perform RT-PCR (for RNA viruses) or PCR on the original sample material or cloned DNA using high-fidelity polymerase (e.g., Q5, Phusion).

- Purification & Sequencing: Purify amplicons via gel extraction or bead-based cleanup. Sequence bi-directionally using Sanger sequencing.

- Sequence Alignment & Reconciliation: Assemble Sanger reads into a consensus for each region. Align this consensus to the original HTS-derived genome. Document and investigate any discrepancies (e.g., single nucleotide variants, indels). A discrepancy rate >0.1% suggests potential HTS consensus errors.
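The reconciliation step reduces to counting mismatches between two aligned sequences. The sketch below assumes the Sanger consensus and the HTS-derived region have already been pairwise aligned to equal length with `-` as the gap character. Positions where either sequence is N are skipped because they carry no evidence, and gap-versus-base columns are counted as discrepancies (indels). The function name is hypothetical.

```python
def discrepancy_rate(hts_aligned: str, sanger_aligned: str) -> float:
    """Fraction of informative alignment columns where the two sequences disagree."""
    if len(hts_aligned) != len(sanger_aligned):
        raise ValueError("sequences must be aligned to the same length")
    compared = mismatches = 0
    for a, b in zip(hts_aligned.upper(), sanger_aligned.upper()):
        if a == "N" or b == "N":
            continue              # uncalled base: no evidence either way
        if a == "-" and b == "-":
            continue              # shared gap column
        compared += 1
        if a != b:
            mismatches += 1       # substitution or indel
    return mismatches / compared if compared else 0.0


# Made-up 1,000 bp region with a single substitution at the last position.
hts = "ACGT" * 250
sanger = "ACGT" * 249 + "ACGA"
rate = discrepancy_rate(hts, sanger)   # 1 mismatch in 1,000 compared columns
```

A single mismatch in 1,000 bp sits exactly at the 0.1% level quoted in the protocol, so regions of this size need more than one discrepancy to exceed it.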

Visualization of Filtering Workflows

Title: Sequential Filtering Pipeline for Viral Sequences

Title: Wet-Lab Validation Workflow for HTS Sequences

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Reagents and Tools for Sequence Quality Control

| Item Name | Category | Function in Quality Control |

|---|---|---|

| High-Fidelity DNA Polymerase (e.g., Q5, Phusion) | Wet-Lab Reagent | Minimizes PCR errors during amplicon generation for Sanger validation, ensuring the validation standard itself is accurate. |

| Nuclease-Free Water & Clean Tubes | Wet-Lab Reagent | Prevents cross-contamination and RNase/DNase degradation during sample and reagent preparation. |

| SPRIselect Beads | Wet-Lab Reagent | For precise size selection and cleanup of NGS libraries, removing adapter dimers and short fragments that cause errors. |

| Reference Host Genomes (e.g., GRCh38, CHO-K1) | In Silico Resource | Essential digital reference for bioinformatic screening to identify and remove host nucleic acid contamination. |

| Cytoscape or Similar | Software Tool | Visualizes complex relationships in metadata to identify anomalous entries or batch effects in database subsets. |

| BEDTools Suite | Software Tool | Operates on genomic interval files (BED, GFF) to calculate coverage depth and identify low-coverage regions in alignment files. |

| FastQC/MultiQC | Software Tool | Provides initial quality metrics on raw sequencing reads (per-base quality, adapter content, GC bias) before assembly. |

| Chimera Detection Algorithms (UCHIME, DECIPHER) | Software Tool | Specifically designed to identify chimeric sequences formed during PCR or assembly from mixed templates. |

Thesis Context: This whitepaper is framed within a broader investigation of Common errors in viral sequence databases research. The choice of reference database is a foundational decision that can propagate or mitigate errors in downstream analyses, from variant calling to phylogenetic inference.

For reference-based work in genomics, particularly virology, the National Center for Biotechnology Information (NCBI) hosts two primary sequence repositories: GenBank and RefSeq. Their structural and philosophical differences have direct implications for data integrity.

- GenBank is a public, archival database. It is a comprehensive collection of all submitted sequences with minimal processing, preserving submitter annotations. It contains redundancy (multiple entries for the same gene or genome) and varying levels of annotation quality.

- RefSeq is a curated, non-redundant derivative of GenBank. Records are generated and maintained by NCBI staff and collaborators. The curation process involves merging data from multiple sources, resolving discrepancies, and providing consistent, evidence-based annotations.

Core Differences and Quantitative Comparison

The table below summarizes the key operational differences between the two databases relevant to reference-based analysis.

Table 1: Strategic Comparison of RefSeq and GenBank for Reference-Based Analysis

| Feature | GenBank | RefSeq | Implication for Reference-Based Work |

|---|---|---|---|

| Primary Role | Archival Repository | Curated Reference Standard | RefSeq is designed to be a benchmark; GenBank documents the raw data landscape. |

| Curation Level | Minimal; submitter-provided. | High; expert and computational curation. | RefSeq reduces errors from misannotation. GenBank may contain conflicting data. |

| Redundancy | High (multiple entries per biological entity). | Low (aims for one representative per molecule). | RefSeq simplifies reference choice and alignment. GenBank requires deduplication steps. |

| Sequence Data | Direct from submitter. | May be corrected or assembled from multiple sources. | RefSeq sequences are more likely to be biologically accurate. |

| Annotation | Subjective, heterogeneous. | Standardized, evidence-based, and updated. | RefSeq ensures consistent gene names, coordinates, and functional calls. |

| Update Frequency | Continuous (submissions). | Periodic (curation cycles). | GenBank is more current; RefSeq is more stable. |

| Error Potential | Higher (submission errors, chimeras, poor annotation). | Lower, but not zero (curation lags, overlooked conflicts). | RefSeq mitigates a major class of database errors central to our thesis. |

Table 2: Illustrative Viral Database Statistics (Examples)

| Virus/Database | GenBank Entries (Approx.) | RefSeq Representative Genomes (NM/NT/NC) | Key Curation Note |

|---|---|---|---|

| SARS-CoV-2 | > 16 million sequences | 1 reference genome (NC_045512.2) plus curated variants. | RefSeq provides the definitive reference coordinate system for global research. |

| Influenza A Virus | Hundreds of thousands | Curated set per segment & subtype (e.g., NC_026433.1 for H1N1 PB2). | RefSeq crucial for consistent segment annotation in reassortment studies. |

| HIV-1 | ~1 million | Reference genomes for major groups (e.g., NC_001802.1 for group M subtype B). | RefSeq resolves issues from high genetic diversity and recombination. |

| Human Adenovirus C | Thousands | Single reference genome per type (e.g., NC_001405.1 for Adenovirus type 5). | RefSeq corrects for historical sequencing errors in early GenBank submissions. |

Decision Framework: When to Use RefSeq vs. GenBank

The following diagram outlines the logical decision process for database selection in a viral research project.

Diagram Title: Decision Workflow for Choosing a Viral Reference Database

When RefSeq is the Preferred Choice:

- Establishing a Mapping Coordinate System: For RNA-Seq, ChIP-Seq, or variant calling (e.g., SARS-CoV-2 lineage assignment), the non-redundant, stable identifiers of RefSeq are essential.

- Functional Annotation Studies: When annotating genes, regulatory elements, or proteins, RefSeq's curated annotations minimize error propagation.

- Developing Diagnostic Assays/Primers: Requires a single, accurate reference sequence to ensure specificity.

- Comparative Genomics: Using a consistent set of representative genomes (RefSeq) avoids bias from over-represented strains in GenBank.

When GenBank May Be Necessary:

- Studying Database Errors or Annotations: The core research of our thesis requires analyzing the raw, uncurated data found in GenBank.

- Discovering Novel or Highly Divergent Viruses: If a virus is not yet represented in RefSeq, GenBank is the primary source.

- Meta-Analysis of All Available Data: Projects intending to capture the full breadth of submissions, including partial sequences.

Experimental Protocols: Validating Reference-Based Findings

The following protocol is critical for mitigating database-related errors, regardless of the primary database chosen.

Protocol: Reference Sequence Validation and Harmonization

Purpose: To control for errors originating from reference database choice in viral genome alignment and variant identification.

- Retrieve Sequences: Download the primary reference sequence from RefSeq (e.g., NC_045512.2 for SARS-CoV-2). In parallel, download the top 5-10 matching sequences from a GenBank search for the same virus/serotype.

- Multiple Sequence Alignment (MSA): Use a rigorous aligner like MAFFT or MUSCLE to align all retrieved sequences.

- Identify Discrepant Regions: Visually inspect the alignment (e.g., in AliView) or compute a consensus to identify indels or polymorphisms present in the GenBank entries but absent from the RefSeq entry. These may indicate curation decisions or potential errors.

- Annotate Variants: Use a tool like SnpEff (with a custom-built RefSeq database) to annotate variants called against the RefSeq reference. Cross-check these against annotations in the GenBank records.

- Report Database Discrepancies: Document any differences that would materially change biological conclusions (e.g., a frameshift in a key antigenic site). This step directly addresses the thesis on database errors.
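The "identify discrepant regions" step can be partly automated once the alignment exists. The sketch below assumes the aligned sequences are held as equal-length strings with `-` for gaps, with the RefSeq entry passed separately from the GenBank entries. The function name and the toy identifiers are hypothetical. Positions are reported in RefSeq coordinates. An insertion relative to the reference is reported at the position of the preceding reference base.

```python
def discrepant_columns(ref_aligned: str, others: dict) -> list:
    """List (ref_position, ref_base, {entry_id: base}) for columns where any entry differs.

    ref_position is 1-based in ungapped reference coordinates. Entries showing N
    at a column are ignored there, since N carries no information.
    """
    if any(len(s) != len(ref_aligned) for s in others.values()):
        raise ValueError("all sequences must come from the same alignment")
    results = []
    ref_pos = 0
    for col, ref_base in enumerate(ref_aligned.upper()):
        if ref_base != "-":
            ref_pos += 1
        diffs = {
            entry_id: seq[col].upper()
            for entry_id, seq in others.items()
            if seq[col].upper() not in (ref_base, "N")
        }
        if diffs:
            results.append((ref_pos, ref_base, diffs))
    return results


# Toy alignment: gb1 carries an insertion after reference position 3,
# gb2 carries a C>T substitution at reference position 4.
ref = "ATG-CA"
genbank = {"gb1": "ATGTCA", "gb2": "ATG-TA"}
found = discrepant_columns(ref, genbank)
```

The output is a starting list for manual inspection in an alignment viewer, not a verdict on which record is correct.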

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Tools for Database-Conscious Viral Genomics

| Item | Function/Benefit | Example/Supplier |

|---|---|---|

| RefSeq Viral Genome Database (Local) | Allows local, high-speed BLAST searches and sequence extraction without web latency. Enables custom pipeline integration. | Download via NCBI's datasets command-line tool or FTP. |

| Conda/Bioconda Environment | Manages versions of bioinformatics tools, ensuring reproducibility of analyses that depend on specific database builds. | conda install -c bioconda snpeff blast entrez-direct |

| SnpEff with Custom Database | Annotates variants based on curated RefSeq features, ensuring consistent gene names and functional predictions. | Build db: java -jar snpEff.jar build -genbank -v MyVirusRefSeq |

| AliView | Lightweight, fast alignment viewer for manual inspection of discrepant regions identified between RefSeq and GenBank entries. | Open-source software (aliview.software). |

| NCBI E-utilities (Entrez Direct) | Scriptable command-line access to NCBI databases for automated retrieval of records and cross-referencing between GenBank and RefSeq. | efetch, esearch, elink commands. |

| Validation Primer Sets | Wet-lab reagent for confirming critical genomic regions where database discrepancies are identified (e.g., Sanger sequencing). | Designed from conserved regions flanking the discrepancy. |

Within the broader thesis on common errors in viral sequence databases, two persistent and critical issues stand out: sequence contamination and incomplete or erroneous metadata. These errors propagate through downstream analyses, compromising epidemiological tracking, evolutionary studies, and therapeutic target identification. This whitepaper provides an in-depth technical guide to leveraging two essential, database-specific toolkits designed to combat these issues: the NCBI's Foreign Contamination Screen (FCS) and GISAID's curation flag system. For researchers, scientists, and drug development professionals, mastering these tools is no longer optional but a fundamental step in ensuring data integrity.

NCBI Foreign Contamination Screen (FCS): A Technical Deep Dive

The NCBI FCS is an automated pipeline that identifies sequences, or segments within sequences, that originate from an organism different from the stated source. It is critical for detecting host contamination, cross-species artifacts, and vector/adaptor sequences.

Core Methodology & Algorithmic Workflow

The FCS operates through a multi-stage screening process:

- Sequence Segmentation: Input sequences are fragmented into overlapping windows.

- k-mer Profiling: Each window is converted into a compositional fingerprint based on short nucleotide subsequences (k-mers).

- Taxonomic Classification via k-mer Matching: These fingerprints are compared against a curated reference database (RefSeq) using a rapid alignment-free algorithm. Each window receives a taxonomic label with a confidence score.

- Contig Assessment: Window-level classifications are aggregated across the entire sequence contig.

- Decision Logic: A contig is flagged if a significant proportion of its windows are classified to a taxon that is phylogenetically distant from the submitted source organism, or if specific contaminants (e.g., E. coli vectors, human chromosome segments) are identified.
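The window-and-k-mer logic described above can be illustrated with a toy classifier. This is a conceptual sketch only and is not the NCBI implementation: the k-mer size, window size, step, the 50% shared-k-mer cutoff, and the two synthetic "reference genomes" are all assumptions chosen so the example can be followed by hand.

```python
def kmers(seq: str, k: int) -> set:
    """Set of all k-length substrings of seq."""
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def classify_windows(seq, references, k=4, window=20, step=10, min_share=0.5):
    """Label each overlapping window with the reference sharing most of its k-mers."""
    ref_kmers = {name: kmers(ref, k) for name, ref in references.items()}
    labels = []
    for start in range(0, max(len(seq) - window, 0) + 1, step):
        win = kmers(seq[start:start + window], k)
        best, best_share = "unclassified", 0.0
        for name, known in ref_kmers.items():
            share = len(win & known) / len(win) if win else 0.0
            if share > best_share:
                best, best_share = name, share
        labels.append(best if best_share >= min_share else "unclassified")
    return labels


def foreign_fraction(labels, expected: str) -> float:
    """Share of classified windows assigned to something other than the stated source."""
    called = [label for label in labels if label != "unclassified"]
    return sum(label != expected for label in called) / len(called) if called else 0.0


# Synthetic references and a contig whose second half resembles the "host".
references = {"virus": "AC" * 30, "host": "GT" * 30}
contig = "AC" * 20 + "GT" * 20
labels = classify_windows(contig, references)
```

On this toy contig the window spanning the junction stays unclassified and half of the classified windows point to the host, which is the kind of aggregate signal the decision logic would act on.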

Diagram Title: NCBI FCS Algorithmic Screening Workflow

Key Metrics and Performance Data

Recent benchmark analyses of the FCS tool provide the following quantitative performance data:

Table 1: Performance Metrics of NCBI FCS on Benchmark Datasets

| Metric | Value | Description / Context |

|---|---|---|

| Sensitivity (Recall) | 98.5% | Proportion of known contaminated sequences correctly flagged. |

| Precision | 99.7% | Proportion of flagged sequences that are truly contaminated. |

| Runtime (Avg.) | ~2 min / 1,000 sequences | For sequences of ~30kbp on standard compute. |

| Primary Contaminants Detected | Human, Mouse, E. coli, Vector | Most commonly identified contaminant sources. |

| False Positive Rate | < 0.3% | Varies by source organism complexity. |

Protocol: Implementing FCS for User Submission Validation

Objective: Proactively screen your viral isolate sequences before submission to NCBI's GenBank.

Materials & Reagents:

- FCS GitHub Repository: Source for standalone script and databases.

- Pre-formatted FCS Reference Database: Downloaded via the provided fcs.sh setup script.

- Computational Environment: Unix/Linux system with Perl and adequate storage (~100GB for database).

- Input Data: Viral sequence(s) in FASTA format.

Procedure:

- Tool Acquisition: Clone the repository:

git clone https://github.com/ncbi/fcs.git

Check the repository README for the entry-point script and options of the current release, as these change between versions.

- Database Setup: Navigate to the fcs directory and run ./fcs.sh setup. This downloads and formats the necessary reference data.

- Execution: Run the screen:

./fcs.sh screen -i your_sequences.fasta -o results_directory

- Output Interpretation: Analyze the *.fcs_report.txt file. Key columns: contam_status (pass, flag [review], or filter [auto-remove]); contam_type (e.g., "host", "vector"); identified_species (the suspected source of the contaminant segment).

- Curation: For flagged sequences, manually inspect aligned reads in the region indicated, re-trim, or re-assemble as needed.

GISAID Curation Flags: Interpreting Community Quality Annotations

GISAID's EpiCoV platform employs a curation system where flags are applied by submitters and database curators to denote sequences with known quality issues or exceptional properties. Understanding these flags is crucial for selecting high-fidelity data for analysis.

Flag Taxonomy and Meaning

Flags are metadata annotations attached to a sequence record. They represent a consensus between submitters and curators on data quality issues.

Table 2: Common GISAID Curation Flags and Researcher Implications

| Flag | Technical Meaning | Impact on Research Use |

|---|---|---|

| frameshift | One or more indels cause a disrupted open reading frame in a key gene (e.g., Spike). | High Impact. Avoid for structural studies or vaccine design. May be useful for studying defective viral particles. |

| premature stop | A nonsense mutation leads to early termination of a protein. | High Impact. Similar implications to frameshift. Gene is likely non-functional. |

| ambiguous | An excess of degenerate bases (e.g., N, R, Y) in the sequence. | Variable Impact. Depends on location and quantity. Avoid for consensus-level phylogenetic analysis if >0.5% Ns. |

| mixed infection | Evidence of infection by more than one distinct viral lineage in the sample. | Caution. Consensus sequence may be a "chimeric" average. Useful for studying co-infection dynamics. |

| recombinant | Evidence of recombination between lineages/variants. | Specialized Use. Essential for recombination studies. Must be validated with appropriate algorithms (RDP4, SimPlot). |

| host | Sequence is derived from an atypical or non-human host (e.g., mink, deer). | Contextual. Critical for zoonosis and cross-species transmission research. |

Protocol: Filtering a GISAID Dataset by Curation Flags

Objective: Download and filter SARS-CoV-2 sequences from GISAID for a phylogenetic analysis of the Spike protein, excluding sequences with critical quality issues.

Materials & Reagents:

- GISAID Access: Approved EpiCoV database credentials.

- Data Filtering Tool: GISAID's advanced search interface or the gisaidR/Python client (if available for your institute).

- Local Scripting Environment: Python/R for post-download filtering if needed.

Procedure:

- Dataset Retrieval: Use the "Search" tab in GISAID. Apply initial filters (Location, Date, Complete sequences, Low coverage excl.).

- Flag-Based Filtering: In the advanced search options or results filter sidebar, locate the "Curation" or "Flags" section.

- Exclusion Strategy: Select to EXCLUDE sequences with the following flags: frameshift, premature stop. Consider excluding ambiguous if N-content is high.

- Inclusion Strategy: For a study on host adaptation, you may explicitly INCLUDE the host flag.

- Download & Verification: Download the metadata TSV file along with sequences. The AA Substitutions column can be programmatically scanned for stop codons (*) in the Spike gene to double-check the filter.
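
The verification step above can be sketched in Python using only the standard library. This is a hedged illustration: the `AA Substitutions` column name comes from the protocol, but the `Accession ID` column name, the parenthesised comma-separated layout of the field, and the two stop notations matched (`*` and `stop`) are assumptions that should be checked against the header and contents of your own download.

```python
import csv
import re

# Matches entries such as "Spike_Q675*" or "Spike_Q675stop".
# Both notations are assumptions about how a stop codon may be written.
SPIKE_STOP = re.compile(r"^Spike_[A-Z]\d+(\*|stop)$", re.IGNORECASE)


def parse_substitutions(field):
    """Split a field like "(NSP12_P323L,Spike_D614G)" into individual entries."""
    return [s.strip() for s in field.strip().strip("()").split(",") if s.strip()]


def has_spike_stop(field):
    """True if any substitution in the field is a stop codon within Spike."""
    return any(SPIKE_STOP.match(s) for s in parse_substitutions(field))


def rows_with_spike_stop(tsv_path, column="AA Substitutions", id_column="Accession ID"):
    """Return the IDs of metadata rows whose substitution list contains a Spike stop."""
    with open(tsv_path, newline="", encoding="utf-8") as handle:
        reader = csv.DictReader(handle, delimiter="\t")
        return [
            row.get(id_column, "")
            for row in reader
            if has_spike_stop(row.get(column) or "")
        ]
```

Any accession returned by `rows_with_spike_stop` after flag-based exclusion suggests the filter missed an entry and the record deserves manual inspection.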

Diagram Title: Decision Logic for GISAID Flag Filtering

Table 3: Key Research Reagent Solutions for Sequence Quality Control

| Item / Tool | Function / Purpose | Source / Example |

|---|---|---|

| NCBI FCS (Standalone) | Pre-submission detection of foreign sequence contamination. | GitHub: ncbi/fcs |

| GISAID EpiCoV | Primary source for flagged, curated viral sequences with sharing agreements. | gisaid.org |

| Nextclade | Web/CLI tool for phylogenetic placement, QC, and identification of frameshifts/missing data. | clades.nextstrain.org |

| BBDuk (BBTools Suite) | Adapter trimming, quality filtering, and artifact removal of raw reads prior to assembly. | JGI DOE |

| Geneious Prime / CLC Bio | Commercial GUI-based platforms for visualizing alignments, checking open reading frames, and manual curation. | geneious.com, qiagenbioinformatics.com |

| RDP4 (Recombination Detection Program) | Specialized tool for identifying and analyzing recombinant sequences flagged in GISAID. | web.cbio.uct.ac.za/~darren/rdp.html |

| Pangolin & pangoLEARN | Lineage assignment tool; updated models rely on quality data, making pre-filtering essential. | github.com/cov-lineages/pangolin |

Addressing common database errors requires a proactive, layered approach. Researchers must integrate NCBI's FCS into pre-submission workflows to minimize the introduction of contaminants. Concurrently, a sophisticated understanding of GISAID's curation flags is necessary for intelligent post-retrieval filtering. Used in tandem, these database-specific tools form a critical first defense, ensuring that the foundational data for downstream research—from tracking viral evolution to designing monoclonal antibodies—is of the highest possible integrity. This practice directly strengthens the validity of conclusions drawn within the broader landscape of viral sequence database research.

Within the broader thesis on common errors in viral sequence databases, the accuracy of lead target sequences is a critical bottleneck in antiviral drug development. Errors—introduced via sequencing artifacts, bioinformatic mis-assembly, or database annotation mistakes—propagate through the discovery pipeline, leading to failed target validation, ineffective screening, and costly clinical trial attrition. This whitepaper provides a technical guide for researchers to identify, rectify, and prevent sequence errors, ensuring that therapeutic programs are built on a foundation of high-fidelity genomic data.

Common Error Archetypes in Viral Sequence Databases

Viral sequence databases (e.g., GenBank, GISAID) are indispensable but harbor inherent errors that compromise target identification. Major error types include:

| Error Type | Frequency Estimate* | Impact on Drug Development |

|---|---|---|

| Mis-assembled Contigs | ~5-15% of de novo assemblies | Creates chimeric or truncated open reading frames (ORFs), leading to incorrect protein targets. |

| Homopolymer/Sequencing Errors | 0.1-1% per base (NGS platforms) | Introduces frameshifts or premature stop codons, disrupting functional protein modeling. |

| Annotation Propagation | High in derived records | Mis-annotated start/stop sites and protein functions are copied, misdirecting biological validation. |

| Lab-of-Origin Contamination | Variable, outbreak-dependent | Cross-sample contamination creates artificial consensus sequences with no biological reality. |

| Low-Quality/Partial Sequences | ~10-20% of submissions | Incomplete genes misrepresent true viral diversity and drug binding site conservation. |

*Frequency estimates aggregated from recent literature and database audits.
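
Two of the error types above, frameshifts and premature stop codons, can be screened for in silico before any bench work. The sketch below is a minimal, standard-library Python check of a single coding sequence against the standard genetic code. The function name `check_orf` is hypothetical, and the requirement for an ATG start and a terminal stop is an assumption that will not hold for mature peptides cleaved from a viral polyprotein.

```python
# Standard genetic code, built from the conventional TCAG ordering.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    a + b + c: AMINO_ACIDS[16 * i + 4 * j + k]
    for i, a in enumerate(BASES)
    for j, b in enumerate(BASES)
    for k, c in enumerate(BASES)
}


def check_orf(seq):
    """Return a list of human-readable problems found in a coding sequence."""
    seq = seq.upper().replace("U", "T")
    issues = []
    if len(seq) % 3 != 0:
        issues.append("length not a multiple of 3 (possible frameshift)")
    # Translate only complete codons; a trailing partial codon is ignored.
    usable = len(seq) - len(seq) % 3
    codons = [seq[i:i + 3] for i in range(0, usable, 3)]
    if not codons or codons[0] != "ATG":
        issues.append("no ATG start codon")
    # Codons containing ambiguous bases translate to "X".
    protein = [CODON_TABLE.get(codon, "X") for codon in codons]
    for index, residue in enumerate(protein[:-1], start=1):
        if residue == "*":
            issues.append(f"premature stop at codon {index}")
            break
    if protein and protein[-1] != "*":
        issues.append("no terminal stop codon")
    return issues
```

An empty list means none of these specific problems were detected; it does not show that the sequence is correct, only that it passes this coarse screen.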

Experimental Protocols for Sequence Verification

Protocol: De Novo Verification via Sanger Sequencing

Purpose: To empirically confirm the nucleotide sequence of a cloned viral target gene obtained from a public database.

- Primer Design: Design tiling primers with ~100 bp overlap, spanning the entire target ORF and ~50 bp of flanking regions.

- Template Preparation: Clone the database-derived gene sequence into a standard vector (e.g., pUC19). Transform into a high-fidelity E. coli strain. Isolate plasmid DNA from multiple colonies.

- Cycle Sequencing: Perform Sanger sequencing reactions using the primer set and BigDye Terminator chemistry.

- Sequence Assembly & Reconciliation: Assemble reads using a reference-guided aligner (e.g., Geneious). Manually inspect chromatograms for ambiguous bases, mixed signals (indicating contamination), and discrepancies with the original database entry. Resolve conflicts by consensus from multiple clones.

- Validation: The corrected sequence should be re-submitted to a quality-checked in-house database.
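
The reconciliation step can be illustrated with a short Python sketch that builds a majority consensus from several clone reads and lists where it differs from the database entry. It assumes the reads have already been assembled and aligned to the same coordinates and length as the database sequence (for example, exported from the aligner used above); the function names are hypothetical, and chromatogram quality is not modelled.

```python
from collections import Counter


def clone_consensus(clones):
    """Majority-rule consensus of pre-aligned, equal-length clone sequences.

    Columns with a tie for the most common base are reported as "N".
    """
    if not clones:
        raise ValueError("at least one clone sequence is required")
    if len({len(c) for c in clones}) != 1:
        raise ValueError("clone sequences must be pre-aligned to equal length")
    consensus = []
    for column in zip(*clones):
        counts = Counter(base.upper() for base in column).most_common()
        top_base, top_count = counts[0]
        tied = len(counts) > 1 and counts[1][1] == top_count
        consensus.append("N" if tied else top_base)
    return "".join(consensus)


def discrepancies(database_seq, verified_seq):
    """List (1-based position, database base, verified base) for each mismatch."""
    if len(database_seq) != len(verified_seq):
        raise ValueError("sequences must be aligned to equal length")
    pairs = zip(database_seq.upper(), verified_seq.upper())
    return [(pos, d, v) for pos, (d, v) in enumerate(pairs, start=1) if d != v]
```

For example, `discrepancies(db_entry, clone_consensus(clone_reads))` returns the positions to re-inspect in the chromatograms; `db_entry` and `clone_reads` are placeholder names for your own data.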

Protocol: Functional Validation via Reporter Assay

Purpose: To confirm the correct expression and functionality of a putative viral enzyme (e.g., protease) target.

- Construct Creation: Sub-clone the verified target sequence into a mammalian expression vector with an N-terminal tag (e.g., FLAG).

- Reporter System: Use a compatible cell line (e.g., HEK293T) transfected with a fluorescence resonance energy transfer (FRET)-based reporter substrate specific for the viral protease.

- Transfection & Assay: Co-transfect the expression and reporter constructs. Include controls: empty vector (negative) and a known active protease construct (positive).

- Measurement: At 24-48 h post-transfection, measure reporter cleavage as a change in FRET signal using a fluorescence plate reader. Correctly assembled and framed sequences should show enzymatic activity comparable to the positive control; sequences carrying frameshifts or premature stops will typically show negligible activity.

- Analysis: Normalize activity to target protein expression level via western blot (anti-FLAG). Discard constructs with discrepancies between expected and observed molecular weight.

Protocol: Mass Spectrometric (MS) Verification of Expressed Target

Purpose: To directly confirm the amino acid sequence of the expressed target protein.

- Protein Expression & Purification: Express the verified construct in a suitable system (e.g., HEK293 for post-translational modifications). Purify via affinity chromatography (e.g., anti-FLAG beads).

- Digestion: Perform in-gel or in-solution tryptic digestion of the purified protein.

- LC-MS/MS Analysis: Analyze peptides via liquid chromatography-tandem mass spectrometry.

- Database Search: Search the MS/MS spectra against a custom database containing the theoretical tryptic digest of the verified target sequence.

- Validation: Require >95% sequence coverage matching the expected peptides. Any persistent, unexplained peptides may indicate database errors or cloning artifacts.
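
The coverage criterion in the validation step can be computed from the list of identified peptides. The following Python sketch is a simplified illustration: it assumes peptides are given as plain amino acid strings with modifications stripped, uses exact string matching, and does not account for the isobaric residues leucine and isoleucine, which search engines cannot distinguish by mass alone. The function names are hypothetical.

```python
def sequence_coverage(protein, peptides):
    """Fraction of protein residues covered by at least one identified peptide."""
    protein = protein.upper()
    if not protein:
        return 0.0
    covered = [False] * len(protein)
    for peptide in peptides:
        peptide = peptide.upper()
        if not peptide:
            continue
        # Mark every occurrence of the peptide, including overlapping ones.
        start = protein.find(peptide)
        while start != -1:
            for i in range(start, start + len(peptide)):
                covered[i] = True
            start = protein.find(peptide, start + 1)
    return sum(covered) / len(protein)


def unmatched_peptides(protein, peptides):
    """Peptides that do not occur anywhere in the expected protein sequence."""
    protein = protein.upper()
    return [p for p in peptides if p.upper() not in protein]
```

A result from `sequence_coverage` above 0.95 corresponds to the >95% criterion, and any entries returned by `unmatched_peptides` are the "unexplained peptides" that warrant a closer look.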

Integrated Workflow for Error Mitigation

The following diagram illustrates the logical sequence for vetting a candidate target from a public database.

Title: Target Sequence Vetting Workflow

Key Research Reagent Solutions

| Item | Function in Verification | Example/Provider |

|---|---|---|

| High-Fidelity DNA Polymerase | Low-error amplification of target sequences for cloning. | Q5 (NEB), Phusion (Thermo) |

| Sanger Sequencing Service | Provides gold-standard base-by-base sequence confirmation. | Azenta, Eurofins |

| Mammalian Expression Vector | For functional expression of viral targets in relevant cellular context. | pcDNA3.1 (Thermo), pCMV vectors |

| FRET-Based Reporter Assay Kit | Measures enzymatic activity of viral proteases/kinases to confirm function. | SensoLyte (AnaSpec), Cisbio HTRF |

| Affinity Purification Resin | Isolates tagged target protein for MS analysis and biochemical studies. | Anti-FLAG M2 Agarose (Sigma), HisPur Ni-NTA (Thermo) |

| Trypsin, MS Grade | Cleaves purified protein into peptides for mass spectrometric sequencing. | Trypsin Gold (Promega) |

| Reference Control RNA/DNA | Well-characterized viral material for assay calibration and positive controls. | ATCC Viral Standards |

A Pathway-Centric View of Error Impact

Errors at the genomic level disrupt the entire downstream biological pathway targeted for drug intervention. The following diagram maps this cascade for a hypothetical antiviral targeting a viral protease.

Title: Impact of Sequence Error on Viral Protease Pathway

Integrating rigorous sequence verification protocols is non-negotiable for modern antiviral drug development. By treating public database entries as preliminary hypotheses—subject to experimental confirmation via the integrated workflow of in silico checking, Sanger verification, functional assay, and MS validation—research teams can de-risk pipelines, conserve resources, and increase the probability of developing effective therapeutics against validated, error-free viral targets.

Diagnosing and Correcting Database Errors in Your Viral Genomics Workflow

Accurate sequence data is the cornerstone of virology, phylogenetics, and drug target discovery. Within the broader context of common errors in viral sequence databases, the identification of problematic sequences in alignments and phylogenetic trees is a critical, yet often overlooked, quality control step. This guide details the red flags signaling data corruption and provides protocols for their detection and resolution.

Key Indicators of Problematic Sequences

Problematic sequences can arise from laboratory contamination, sequencing errors, database misannotation, or recombination. Their presence skews evolutionary interpretations and can misdirect therapeutic development.

Quantitative Red Flags

The following table summarizes key metrics and their typical thresholds for identifying outliers.

Table 1: Quantitative Metrics for Identifying Sequence Anomalies

| Metric | Calculation/Description | Normal Range | Red Flag Threshold | Primary Implication |

|---|---|---|---|---|

| Pairwise Identity | Percentage of identical residues between two sequences. | Varies by virus and region. | Extreme outlier (>3 std dev from mean) or 100% identity to distant taxon. | Contamination or mislabeling. |

| Sequence Length | Number of nucleotides/amino acids. | Consistent within functional region. | >10% deviation from median length of alignment. | Truncation, indel errors, or non-homologous sequence. |

| Branch Length | Evolutionary distance from node to tip in a tree. | Relatively consistent within clade. | Exceptionally long branch relative to closest relatives. | Poor sequence quality or accelerated evolution. |

| Compositional Bias | Deviation in GC or AT content. | Consistent within viral family/region. | >15% difference from group mean. | Contaminant from host or other organism. |

| Substitution Saturation (Xia's Iss index) | Test for loss of phylogenetic signal due to multiple hits. | Iss < Iss.critical (Xia's test, as implemented in DAMBE). | Iss significantly > Iss.critical. | Phylogenetic inferences are unreliable. |

| Phylogenetic Incongruence | Bootstrap support for conflicting placement. | High support (>70%) for single position. | Strong support (>70%) for two mutually exclusive positions. | Recombinant sequence or mixed infection. |
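
Two of the simpler metrics in Table 1, sequence length and compositional bias, can be screened with a few lines of Python. This sketch takes a dictionary of ungapped sequences keyed by name. It interprets both thresholds as relative deviations (from the median length and from the group mean GC content, respectively); that reading of ">15% difference" is an assumption, and the function names are illustrative.

```python
from statistics import mean, median


def gc_content(seq):
    """GC fraction over unambiguous bases only."""
    bases = [b for b in seq.upper() if b in "ACGTU"]
    if not bases:
        return 0.0
    return sum(1 for b in bases if b in "GC") / len(bases)


def flag_outliers(records, length_tol=0.10, gc_tol=0.15):
    """Return {name: [reasons]} for sequences exceeding either threshold.

    records: dict mapping sequence name to ungapped sequence string.
    length_tol: allowed relative deviation from the median length.
    gc_tol: allowed relative deviation from the group mean GC content.
    """
    if not records:
        return {}
    lengths = {name: len(seq) for name, seq in records.items()}
    gcs = {name: gc_content(seq) for name, seq in records.items()}
    median_length = median(lengths.values())
    mean_gc = mean(gcs.values())
    flags = {}
    for name in records:
        reasons = []
        if median_length and abs(lengths[name] - median_length) / median_length > length_tol:
            reasons.append("length")
        if mean_gc and abs(gcs[name] - mean_gc) / mean_gc > gc_tol:
            reasons.append("gc")
        if reasons:
            flags[name] = reasons
    return flags
```

Note that the group mean includes the outlier itself, so in very small datasets a single extreme sequence can shift the mean enough to flag its neighbours; a median-based variant may be more robust there.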

Experimental Protocols for Detection

Protocol 1: Initial Data Triage and Alignment Validation

- Retrieve Sequences from public databases (GenBank, BV-BRC). Record isolate, host, and collection date metadata.

- Perform Multiple Sequence Alignment using a tool appropriate for the data (e.g., MAFFT for nucleotides, MUSCLE for smaller datasets).

- Calculate Summary Statistics using AliStat or similar to identify length outliers and positions with >50% gaps.

- Visualize the Alignment in AliView or Geneious. Scan for obvious stretches of incongruent sequence or abnormal patterns.
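
As a lightweight stand-in for the gap statistics that AliStat reports, the >50% gap criterion can be reproduced in plain Python. The sketch assumes the alignment has been loaded as a list of equal-length strings and treats `-` and `.` as gap characters; the function names are hypothetical and this is not AliStat's own algorithm or output format.

```python
def gap_fraction_per_column(alignment):
    """Fraction of gap characters in each alignment column."""
    if not alignment:
        return []
    if len({len(seq) for seq in alignment}) != 1:
        raise ValueError("all aligned sequences must have the same length")
    n_seqs = len(alignment)
    return [
        sum(1 for ch in column if ch in "-.") / n_seqs
        for column in zip(*alignment)
    ]


def gappy_columns(alignment, threshold=0.5):
    """1-based indices of columns whose gap fraction exceeds the threshold."""
    fractions = gap_fraction_per_column(alignment)
    return [i for i, frac in enumerate(fractions, start=1) if frac > threshold]
```

Columns returned by `gappy_columns` are candidates for trimming before tree building, while a single sequence responsible for many of those gaps is worth inspecting as a possible truncation.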

Protocol 2: Phylogenetic Incongruence and Recombination Detection

- Construct Initial Tree using a maximum-likelihood method (IQ-TREE, RAxML) with appropriate model (ModelFinder). Perform 1000 bootstrap replicates.

- Screen for Recombinants using RDP5. Apply multiple detection methods (RDP, GENECONV, MaxChi). Sequences flagged by ≥3 methods with significant p-values (<10⁻⁶) are candidates.

- Confirm by Phylogenetic Incongruence:

- Physically split the candidate sequence at the proposed breakpoints.

- Construct separate trees for each genomic region.

- A confirmed recombinant will occupy distinct, well-supported phylogenetic positions in each tree.
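
The splitting step can be sketched in Python as follows. Because separate trees are built per region, the sketch cuts the whole alignment, not just the candidate sequence, at the proposed breakpoints. It assumes the alignment is held as a dictionary of equal-length aligned strings and that each breakpoint is the 1-based alignment column at which a new region begins; both conventions, and the function names, are illustrative assumptions and should be matched to how your recombination tool reports coordinates.

```python
def split_at_breakpoints(alignment, breakpoints):
    """Split an alignment into consecutive regions at the given breakpoints.

    alignment: dict mapping sequence name to aligned sequence string.
    breakpoints: 1-based alignment columns at which a new region starts.
    Returns a list of dicts, one per region, in genomic order.
    """
    lengths = {len(seq) for seq in alignment.values()}
    if len(lengths) != 1:
        raise ValueError("all aligned sequences must have the same length")
    total = lengths.pop()
    cuts = sorted(set(breakpoints))
    if any(b <= 1 or b > total for b in cuts):
        raise ValueError("breakpoints must fall inside the alignment")
    # Convert 1-based region starts into 0-based slice edges.
    edges = [0] + [b - 1 for b in cuts] + [total]
    return [
        {name: seq[start:end] for name, seq in alignment.items()}
        for start, end in zip(edges, edges[1:])
    ]


def to_fasta(region):
    """Serialise one region as FASTA text for input to a tree-building tool."""
    return "".join(f">{name}\n{seq}\n" for name, seq in region.items())
```

Each region returned can be written out with `to_fasta` and passed to the same maximum-likelihood tool used for the initial tree, so that the candidate's placement can be compared across regions.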