Viral Taxonomy Correction: A Critical Guide to Identifying and Fixing Mislabeled Sequences in Genomic Databases



Abstract

This article provides a comprehensive, step-by-step guide for researchers, scientists, and drug development professionals on identifying, troubleshooting, and correcting incorrect taxonomic labeling in viral genomic sequences. It addresses the foundational causes and impacts of mislabeling, details modern methodological pipelines and bioinformatics tools for detection and reclassification, offers solutions for common challenges and optimization strategies, and validates best practices through comparative analysis of current tools and databases. The goal is to enhance the accuracy and reliability of viral data crucial for biomedical discovery and therapeutic development.

The High Stakes of Viral Mislabeling: Understanding Causes, Consequences, and Database Vulnerabilities

Troubleshooting Guides & FAQs

Q1: My viral sequence matches a reference in the database, but the host information is contradictory. Is this incorrect labeling?

A: Yes, this is a primary example. A sequence from a plant sample labeled as "Human adenovirus C" constitutes incorrect labeling due to host-virus association mismatch. This often stems from database entry errors or contamination. First, verify the host field in the GenBank/RefSeq entry (e.g., /host="Homo sapiens"). Then, cross-check using the International Committee on Taxonomy of Viruses (ICTV) taxonomy browser and recent literature on host range.

Q2: BLASTN top hit is to a "Porcine endogenous retrovirus," but phylogenetic analysis groups it with rodent viruses. Which is correct?

A: The phylogenetic context likely reveals the incorrect label. Reliance solely on pairwise alignment (BLAST) can be misleading for highly conserved regions or recombinant sequences. The taxonomic label should reflect evolutionary relationships, not just highest percent identity. The "Porcine" label is likely incorrect if robust phylogenetic trees (using structural or polymerase genes) show consistent clustering with rodent clades with high bootstrap support (>90%).

Q3: What are the definitive criteria to flag a sequence as incorrectly labeled?

A: A sequence is incorrectly labeled when there is a clear, evidence-based mismatch between its assigned taxonomy and its validated biological properties. The criteria are:

- Phylogenetic Incongruence: The sequence consistently clusters with a different genus/family in a robust phylogenetic tree.

- Host-Virus Discordance: The labeled host is biologically implausible for the viral genus (e.g., a bacteriophage labeled from a mammalian host).

- Genomic Feature Mismatch: Genome organization (e.g., gene order, regulatory elements) is atypical for its labeled taxon but typical for another.

- Technical Artifact Confirmation: The sequence is a proven laboratory contaminant (e.g., common in NGS kits) mislabeled as a novel pathogen.
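The four criteria above can be recorded per sequence and turned into a coarse triage label. The following is a minimal sketch, not a validated rule set: the class and field names are hypothetical, and the cut-off used here (two independent lines of evidence for "likely mislabeled") is an assumption chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class LabelEvidence:
    """Evidence for one database record; field names are illustrative."""
    phylo_incongruent: bool   # clusters robustly with a different genus/family
    host_discordant: bool     # labeled host is implausible for the labeled taxon
    genome_mismatch: bool     # genome organization fits another taxon better
    known_contaminant: bool   # matches a confirmed laboratory contaminant


def triage(evidence: LabelEvidence) -> str:
    """Return a coarse triage category (the cut-offs are an assumption)."""
    if evidence.known_contaminant:
        return "contaminant"
    independent_hits = sum(
        [evidence.phylo_incongruent, evidence.host_discordant, evidence.genome_mismatch]
    )
    if independent_hits >= 2:
        return "likely mislabeled"
    if independent_hits == 1:
        return "needs review"
    return "no flag"


record = LabelEvidence(
    phylo_incongruent=True,
    host_discordant=True,
    genome_mismatch=False,
    known_contaminant=False,
)
print(triage(record))  # likely mislabeled
```

Keeping the evidence fields separate from the decision makes it easy to tighten or relax the cut-off without re-collecting evidence.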

Key Diagnostic Experiment Protocols

Protocol 1: Core Phylogenetic Analysis for Taxonomic Validation

- Objective: To determine the correct evolutionary placement of a query viral sequence.

- Method:

- Gene Selection: Extract and translate the conserved core gene (e.g., RdRp for RNA viruses, major capsid for phages) from your query.

- Reference Dataset: Download corresponding protein sequences from ICTV-verified reference genomes across related genera. Include outgroups.

- Alignment: Use MAFFT (v7) with the G-INS-i algorithm. Trim with trimAl (`-automated1`).

- Model Selection: Find the best-fit substitution model with ModelTest-NG or `-m TEST` in IQ-TREE.

- Tree Inference: Run IQ-TREE 2 (1000 ultrafast bootstrap replicates). Visualize in FigTree.

- Interpretation: Query clustering with a reference clade with ≥90% UFboot support suggests its true taxonomy. Isolated branching or low support may indicate novel lineage or database error.

Protocol 2: In Silico Host Prediction for Eukaryotic Viruses

- Objective: To predict the likely host range and identify host-label mismatches.

- Method:

- For DNA viruses, run the query through Virus-Host Predictor (genome similarity + CRISPR spacer matches).

- For broader host-range prediction, use HoPhage (for phages) or VirHostMatcher-Net (based on oligonucleotide frequency).

- Cross-reference predictions with the labeled host from the database record.

- Interpretation: A strong prediction (e.g., p-value < 1e-5) for a host radically different from the database label is a strong indicator of incorrect taxonomic labeling, warranting experimental host-range validation.

Table 1: Common Sources of Incorrect Viral Taxonomic Labeling in Public Databases (Hypothetical Snapshot)

| Source of Error | Estimated Frequency* | Primary Impact | Example |

|---|---|---|---|

| Misannotation of Host Field | ~8-12% of eukaryotic virus entries | Creates false host-virus associations | A fish virus labeled as isolated from human cells. |

| Legacy/Obsolete Taxonomy | ~15-20% of older entries | Uses deprecated genus/family names | Sequence labeled as "Enterobacteria phage T4" under an old classification not recognized by ICTV. |

| Contaminant Mislabeling | ~1-5% (higher in some NGS studies) | False positive for viral presence | Sequencing adapter or vector contaminant labeled as a novel viral sequence. |

| Over-reliance on BLAST | Common in automated pipelines | Misassignment at genus/species level | A novel betacoronavirus assigned as "SARS-CoV-2" due to high RdRp similarity. |

*Frequency estimates are illustrative, based on analyses from recent studies (e.g., Nucleic Acids Res., 2023; Viruses, 2024).

Table 2: Diagnostic Tools for Identifying Incorrect Labels

| Tool Name | Purpose | Key Metric | Decision Threshold |

|---|---|---|---|

| CheckV | Assess genome quality, identify contaminants | `contamination` flag | `contamination` > 0 warrants inspection. |

| tBLASTx | Compare nucleotide sequences via translated alignment | E-value, Query Coverage | E-value < 1e-10 and coverage > 70% for core genes. |

| VIRIDIC | Compute intergenomic similarities (for prokaryotic viruses) | % Similarity | Similarity below the 70% genus threshold to members of the labeled genus suggests mislabeling. |

| PhyloSuite | Integrated pipeline for phylogeny & taxonomy | Bootstrap/Posterior Probability | Support value ≥ 90% for confident placement. |

Visualizations

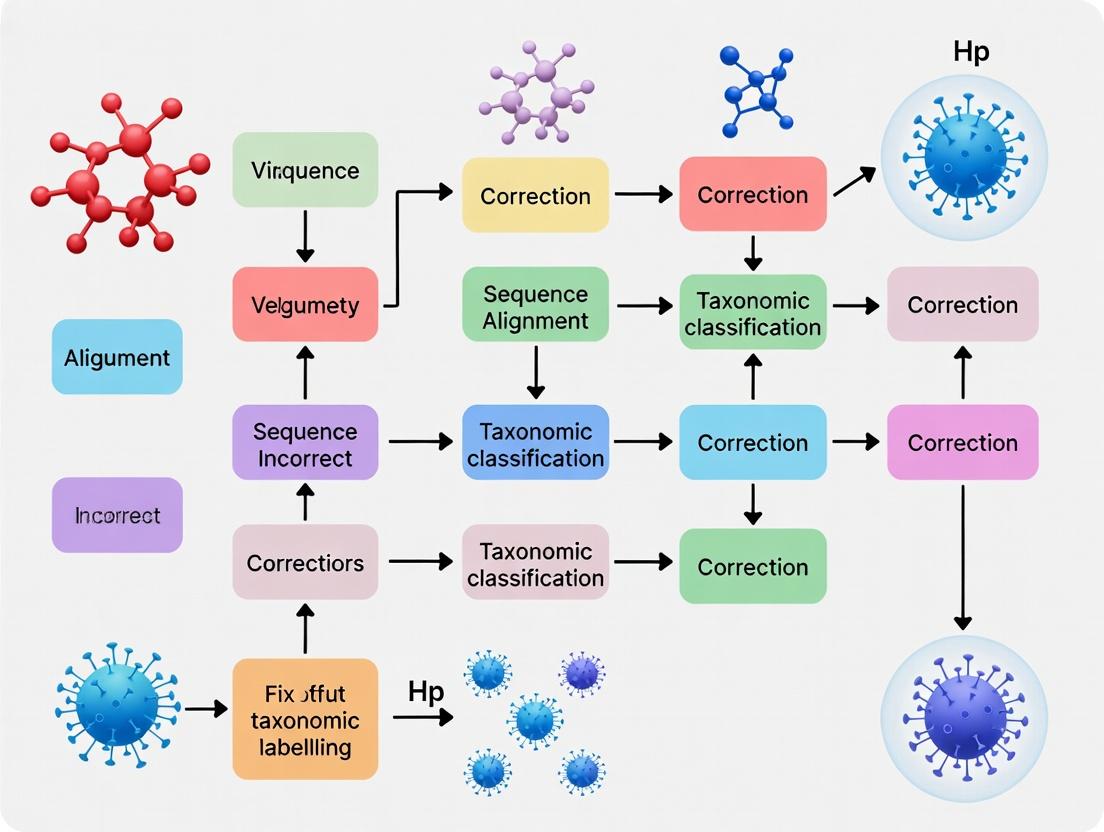

Title: Diagnostic Workflow for Suspect Viral Taxonomic Labels

Title: Phylogenetic Protocol for Taxonomic Placement

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Taxonomic Verification | Example Product/Catalog |

|---|---|---|

| ICTV Virus Metadata Resource | Provides the gold-standard, ratified taxonomy for building reference datasets. | ICTV Online (10th) Report |

| Viral RefSeq Genome Database | Curated, non-redundant set of reference viral genomes with consistent annotation. | NCBI Viral RefSeq (ftp.ncbi.nlm.nih.gov/refseq/release/viral/) |

| MAFFT Software | Creates accurate multiple sequence alignments of conserved viral genes for phylogeny. | MAFFT v7.520 (https://mafft.cbrc.jp/) |

| IQ-TREE 2 Software | Infers maximum-likelihood phylogenetic trees with built-in model testing and fast bootstrapping. | IQ-TREE 2.2.2.7 (http://www.iqtree.org/) |

| CheckV Database & Tool | Assesses genome completeness and identifies contamination in viral sequences from metagenomes. | CheckV v1.0.1 (https://bitbucket.org/berkeleylab/checkv/) |

| Virus-Host DB | Provides experimentally verified virus-host interaction data for cross-referencing. | Virus-Host DB (https://www.genome.jp/virushostdb/) |

| ZymoBIOMICS Microbial Standard | Control for metagenomic experiments to identify kit/background contaminants. | ZymoBIOMICS D6300 & D6305 |

Technical Support Center

Troubleshooting Guides & FAQs

FAQ Category: Contamination Issues

Q1: My viral metagenomic analysis is detecting mammalian sequences (e.g., Homo sapiens, Mus musculus) at high abundance. What is the likely cause and how can I resolve this?

A: This is a classic sign of host or laboratory contamination. Common sources include carryover from nucleic acid extraction kits, environmental aerosols, or cross-sample contamination during library preparation.

- Protocol for Decontamination: Implement an in silico subtraction step. Align your raw sequencing reads to the host genome (e.g., GRCh38 for human) with Bowtie2 in a sensitive mode (`--very-sensitive-local`) and discard all reads that align. For viral enrichment wet-lab protocols, always include a non-template control (NTC) in your experiment to identify kit-borne contaminants.

- Key Reagent: DNase I / RNase A treatment during nucleic acid extraction to degrade free-floating host nucleic acids.

Q2: I suspect my reference database contains mislabeled sequences. How can I audit and clean it before my analysis?

A: Legacy data errors are pervasive. Perform a self-BLAST of your custom database.

- Protocol for Database Auditing:

- Format your database for BLAST (e.g., with `makeblastdb -dbtype nucl`).

- Run `blastn -db your_viral_db -query your_viral_db.fasta -outfmt 6 -out self_blast.tsv`.

- Parse the output to identify sequences with very high identity (e.g., >99%) but different taxonomic labels.

- Manually inspect these hits by checking the original publication or cross-referencing with a trusted source like the NCBI Viral RefSeq database.

- Key Reagent: Curated reference databases like NCBI RefSeq Viral, ICTV Master Species List, and GVD (Gut Virome Database).
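The parsing step of the audit can be sketched in a few lines of Python. This assumes the default 12-column `-outfmt 6` layout (query ID, subject ID, percent identity, alignment length, ...) and a user-supplied mapping from sequence ID to taxonomic label. The function name, the `taxon_of` mapping, and the 500 bp minimum alignment length are illustrative assumptions, not part of any BLAST tooling.

```python
import csv
import io


def conflicting_pairs(blast_tsv, taxon_of, min_identity=99.0, min_length=500):
    """Yield (query, subject, identity) for near-identical pairs with different labels.

    blast_tsv: open text handle on BLAST tabular output (-outfmt 6).
    taxon_of:  dict mapping sequence ID -> taxonomic label (hypothetical input).
    """
    seen = set()
    for row in csv.reader(blast_tsv, delimiter="\t"):
        if len(row) < 4:
            continue  # skip malformed or empty lines
        query, subject = row[0], row[1]
        identity, length = float(row[2]), int(row[3])
        # Ignore self-hits, weak hits, and short local alignments.
        if query == subject or identity <= min_identity or length < min_length:
            continue
        pair = tuple(sorted((query, subject)))
        if pair in seen:
            continue  # A-vs-B and B-vs-A describe the same pair
        seen.add(pair)
        if taxon_of.get(query) != taxon_of.get(subject):
            yield query, subject, identity


# Toy input with made-up IDs and labels.
example = io.StringIO(
    "seqA\tseqA\t100.0\t9000\n"
    "seqA\tseqB\t99.6\t8950\n"
    "seqB\tseqA\t99.6\t8950\n"
    "seqA\tseqC\t99.8\t120\n"
)
labels = {"seqA": "Genus one", "seqB": "Genus two", "seqC": "Genus two"}
for hit in conflicting_pairs(example, labels):
    print(hit)
```

Pairs flagged this way are candidates for the manual inspection step, not confirmed errors: near-identical sequences can legitimately carry different labels when one label is simply an older synonym.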

FAQ Category: Automated Pipeline Errors

Q3: My pipeline's taxonomic classifier (e.g., Kraken2, Kaiju) is assigning reads to a rare virus at low confidence. Should I trust this result?

A: Low-confidence assignments from automated tools are a major error source. This can be due to conserved domains shared across viral families or pipeline default settings optimized for speed, not accuracy.

- Protocol for Verification:

- Extract the reads assigned to the questionable taxon.

- Perform a direct BLASTn/BLASTx search against the NT/NR database. Examine the full alignment—not just the top hit—for consistency.

- Check for presence of multiple viral genes. A single gene hit is less reliable than a multi-gene signature. Use a tool like VirSorter2 or CheckV for genome context.

- Key Reagent: High-fidelity polymerase (e.g., Q5, Phusion) for amplicon-based validation of suspect regions.

Q4: How can I benchmark my classification pipeline to understand its error profile?

A: Use an in silico "mock community" with known ground truth.

- Protocol for Pipeline Benchmarking:

- Create a synthetic metagenome by spiking simulated reads from known viral genomes (obtain from CAMI challenges or simulate with CAMISIM) into a background of host and bacterial reads.

- Run this mock dataset through your exact pipeline.

- Compare the pipeline's output taxonomy to the known input taxonomy to calculate precision, recall, and false positive rates (see Table 1).
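The comparison in the last step reduces to simple counting once each simulated read has a known taxon and an (optional) classifier call. The sketch below uses one common per-read convention, which is an assumption here: precision is correct calls divided by all classified reads, and recall is correct calls divided by all reads. The function name and input dictionaries are hypothetical.

```python
def precision_recall(truth, predicted):
    """Per-read precision and recall for a taxonomic classifier.

    truth:     dict read_id -> true taxon (from the mock community design).
    predicted: dict read_id -> called taxon; missing or None means unclassified.
    """
    total = len(truth)
    classified = sum(1 for read_id in truth if predicted.get(read_id) is not None)
    correct = sum(
        1
        for read_id, taxon in truth.items()
        if predicted.get(read_id) is not None and predicted[read_id] == taxon
    )
    precision = correct / classified if classified else 0.0
    recall = correct / total if total else 0.0
    return precision, recall


# Toy example with made-up read IDs and taxa.
truth = {"r1": "virus A", "r2": "virus A", "r3": "virus B", "r4": "virus B"}
calls = {"r1": "virus A", "r2": "virus B", "r3": "virus B"}  # r4 left unclassified
precision, recall = precision_recall(truth, calls)
print(f"precision={precision:.2f} recall={recall:.2f}")  # precision=0.67 recall=0.50
```

Running the same function at species, genus, and family level (by mapping taxa to the chosen rank first) shows at which rank a classifier starts to misassign reads.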

Data Presentation

Table 1: Common Error Rates in Taxonomic Classifiers (Benchmark on CAMI II Viral Dataset)

| Classifier Tool | Average Precision (%) | Average Recall (%) | Common Error Type (False Positive) |

|---|---|---|---|

| Kraken2 | 88.7 | 75.2 | Family-level misclassification due to shared k-mers |

| Kaiju | 91.2 | 70.5 | Gene-level homology across distant taxa |

| Diamond (Blastx) | 95.5 | 65.8 | Slow but highly precise at species level |

| CLARK | 93.1 | 78.4 | Sensitive to incomplete reference database |

Experimental Protocols

Protocol 1: Rigorous Wet-Lab Contamination Control for Viral Enrichment

Title: Protocol for Contamination-Free Viral Nucleic Acid Preparation

- Physical Separation: Perform pre-extraction steps (homogenization, centrifugation) in a separate area from post-PCR steps.

- NTC Inclusion: Include a non-template control containing only molecular-grade water through the entire extraction and library prep process.

- Enzymatic Treatment: Treat samples with a cocktail of DNase I and RNase A (excluding RNA/DNA viruses respectively) for 30 min at 37°C prior to viral lysis to remove external nucleic acids.

- Ultra-Clean Reagents: Use UV-irradiated, filtered pipette tips and dedicated equipment. Employ commercial viral enrichment kits that include internal process controls.

Protocol 2: In Silico Verification of Problematic Taxonomic Assignments

Title: Protocol for Validating Low-Confidence Viral Hits

- Read Extraction: Extract FASTA/FASTQ reads assigned to the taxon of interest from your classifier's output.

- Assembly: De novo assemble these reads using SPAdes (`--meta` flag) or MEGAHIT.

- BLAST Validation: BLAST the resulting contigs against the NCBI NT database using `blastn` (or `tblastx` for divergent viruses). Disable the low-complexity filter (`-dust no` for `blastn` or `-seg no` for `tblastx` in BLAST+; `-F F` applies only to the legacy `blastall`).

- Phylogenetic Confirmation: Align the putative viral sequence with trusted reference sequences using MAFFT. Build a maximum-likelihood tree with IQ-TREE. A true assignment is supported when the sequence forms a monophyletic clade with verified sequences of that taxon.

Mandatory Visualizations

Title: Error Introduction and Correction Pathway in Viral Taxonomy

Title: Clean Lab & Bioinformatics Workflow for Accurate Taxonomy

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Accurate Viral Taxonomy

| Item | Function | Example Product/Brand |

|---|---|---|

| DNase I / RNase A | Degrades contaminating free host nucleic acids prior to viral lysis, reducing false host signals. | Thermo Fisher Turbo DNase, Qiagen RNase A |

| UltraPure BSA | Acts as a carrier and stabilizer during viral nucleic acid extraction from low-biomass samples. | Invitrogen UltraPure BSA |

| Molecular-grade Water | Nuclease-free, DNA-free water for all reactions to prevent environmental contamination. | Thermo Fisher Nuclease-free Water |

| PhiX Control | Spiked into sequencing runs for quality control and to detect cross-contamination between lanes. | Illumina PhiX Control v3 |

| Synthetic Mock Community | Contains known genomes at defined ratios; used for benchmarking pipeline accuracy. | ZymoBIOMICS Microbial Community Standard |

| High-Fidelity Polymerase | For accurate amplification of viral sequences during confirmatory PCR. | NEB Q5, Thermo Fisher Phusion |

| Curated Viral Database | A clean, non-redundant, taxonomically verified reference for classification. | NCBI Viral RefSeq, GVD, IMG/VR |

Technical Support Center: Troubleshooting Incorrect Viral Taxonomy

FAQ & Troubleshooting Guide

Q1: Our phylogenetic tree shows unexpected clustering of a known human respiratory virus with an arbovirus. What steps should we take to troubleshoot?

A: This typically indicates a taxonomic labelling error, often from public database contamination or misannotation.

- Verify Sequence Integrity: Run a BLASTn search against the NCBI nt database. Check the top hits for consistency in host and geographic origin.

- Check for Chimeras: Use tools like UCHIME2 or DECIPHER's `FindChimeras` on your raw reads or aligned sequence.

- Re-extract and Re-annotate: If working with public data, download the original SRA data. Re-process with a standardized pipeline (see Protocol A).

- Re-run Phylogeny with Robust Methods: Use a maximum-likelihood (IQ-TREE2) or Bayesian (BEAST2) method on a conserved region (e.g., RdRp). Include carefully chosen reference sequences.

Q2: Our newly developed diagnostic PCR assay is producing false positives for non-target viruses. Could taxonomic database errors be the cause?

A: Yes. Primer/probe design based on mislabeled sequences is a primary cause.

- Audit Your Reference Set: Trace the origin of every sequence used in design. Use Table 1 for database comparison.

- In Silico Specificity Re-test: Use the updated, curated reference database from Table 1 to perform a fresh in silico specificity check with tools like Primer-BLAST.

- Wet-Lab Validation: Test against a broader panel of clinically relevant isolates and near-neighbors using Protocol B.

Q3: We identified a promising viral protease inhibitor, but activity is inconsistent across lab strains. Is sequence variation the issue?

A: Inconsistency often stems from undefined genetic differences between strains due to poor sequence metadata.

- Sequence the Actual Target Strain: Fully sequence the viral stocks used in your assays (Protocol A).

- Perform Structural Alignment: Align the protease sequence from your stock with the sequence used for in silico drug design. Identify key residue differences.

- Re-express and Test Variants: Clone and express variant proteases found in mislabeled strains for biochemical activity assays.

Experimental Protocols

Protocol A: Standardized Viral Genome Re-analysis Pipeline for Taxonomic Verification

Objective: To extract, assemble, and correctly classify viral sequences from raw sequencing data.

- Data Retrieval: Download SRA files using `prefetch` and `fasterq-dump` from the SRA Toolkit.

- Quality Control & Host Read Removal: Use `fastp` for adapter trimming and quality filtering. Align reads to the host genome (e.g., human GRCh38) using `bowtie2` and retain unaligned reads.

- De novo Assembly: Assemble cleaned reads using `SPAdes` (`--meta` flag for mixed samples). For amplicon data, use `iVar` for primer trimming and reference-based consensus calling instead, since it is not a de novo assembler.

- Contig Identification: BLAST assembled contigs against the RVDB database. Also run `Kaiju` for taxonomic classification of reads.

- Consensus Generation & Annotation: Generate a consensus sequence from mapped reads using `bcftools`. Annotate open reading frames (ORFs) with `Prokka` or `VGEA`.

- Curation & Submission: Manually verify annotations against literature. Submit corrected sequences to databases with complete metadata.

Protocol B: Diagnostic Assay Specificity Wet-Lab Validation

Objective: Empirically test PCR assay specificity against a panel of potential cross-reactants.

- Panel Creation: Obtain genomic material (extracted RNA/DNA) for: (i) Target virus (positive control), (ii) Closest phylogenetic relatives, (iii) Viruses causing similar clinical syndromes, (iv) Negative controls.

- Nucleic Acid Quantification: Standardize all samples to the same concentration (e.g., 10^4 copies/µL).

- Cross-Reactivity Testing: Run your qPCR assay against each panel member in triplicate. Use standardized cycling conditions.

- Data Analysis: Any amplification in non-target wells with a Cq < 40 requires investigation. Redesign primers/probes from a curated sequence set.
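The data-analysis rule in the final step is easy to apply programmatically once Cq values are exported from the instrument. A minimal sketch, assuming each panel member is given as a name plus its triplicate Cq values, with `None` standing for "no amplification". The function name and data layout are hypothetical.

```python
def flag_cross_reactivity(wells, target, cq_cutoff=40.0):
    """Return non-target panel members that amplified below the Cq cutoff.

    wells:  list of (sample_name, [cq, cq, cq]); None means no amplification.
    target: name of the intended target virus (positive control), which is skipped.
    """
    flagged = {}
    for sample, cqs in wells:
        if sample == target:
            continue
        hits = [cq for cq in cqs if cq is not None and cq < cq_cutoff]
        if hits:
            flagged[sample] = hits
    return flagged


# Toy panel with made-up names and values.
panel = [
    ("target virus", [22.1, 22.4, 22.0]),
    ("near neighbor 1", [36.8, None, 37.5]),
    ("near neighbor 2", [None, None, None]),
    ("no-template control", [None, None, None]),
]
print(flag_cross_reactivity(panel, target="target virus"))
# {'near neighbor 1': [36.8, 37.5]}
```

Amplification in the no-template control would also be reported by this function, which is intentional: it points to contamination rather than cross-reactivity and needs investigating either way.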

Data Presentation

Table 1: Comparison of Viral Sequence Database Error Rates & Key Features

| Database | Scope | Estimated Error/Mislabel Rate* | Key Feature | Best Use Case |

|---|---|---|---|---|

| NCBI GenBank | Comprehensive | ~0.1-0.4% (higher for some taxa) | Broadest sequence set, user-submitted | Initial discovery, data richness |

| RefSeq | Curated subset of GenBank | <0.01% | Manually curated, non-redundant | Gold standard for assay/tool development |

| Virus-NCBITaxonomy | Taxonomic framework | N/A | Official viral taxonomy hierarchy | Resolving naming/classification issues |

| RVDB | Viral sequences only | Low (pre-filtered) | Cleaned, non-host, non-synthetic | Metagenomic & diagnostic studies |

| ICTV Virus Metadata | Taxonomy & exemplars | Very Low | Authoritative taxonomy & species lists | Final taxonomic assignment |

*Rates based on recent peer-reviewed audits (e.g., NCBI GenBank (2023): ~17% of Flaviviridae entries had issues; RefSeq: curation aims for near-zero labeling errors).

Mandatory Visualizations

Diagram 1: Impact Flow of Taxonomic Error

Diagram 2: Taxonomic Verification Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Viral Taxonomy Correction |

|---|---|

| SRA Toolkit | Downloads raw sequencing data from public repositories for re-analysis. |

| Bowtie2 / BWA | Aligner to remove host-derived reads, enriching for viral sequences. |

| SPAdes (meta) | Assembler for constructing viral genomes from complex metagenomic reads. |

| BLAST+ Suite | Standard tool for initial sequence homology search and identification. |

| IQ-TREE2 | Software for fast and accurate phylogenetic inference to test placement. |

| RVDB Database | Curated viral database to minimize false matches to non-viral sequences. |

| ICTV Report | Authoritative reference for definitive viral taxonomy and nomenclature. |

| Sanger Sequencing | Gold-standard for validating key genomic regions (e.g., primer binding sites). |

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions:

Q1: My analysis pipeline identified a sequence from a public repository (like NCBI) as a potential mislabel. What are the first steps I should take? A1: First, do not delete or alter your local copy. Document the accession number and the specific discrepancy (e.g., expected vs. observed taxonomy). Re-run your BLASTn or genome assembly against the latest NT/NR database to confirm. Check the publication linked to the record for possible explanations. Finally, consider contacting the submitter directly or flagging the issue to the repository via their official error reporting channel (e.g., NCBI's "Submit an update").

Q2: How can I distinguish between a genuine mislabeling event and contamination in my own or a public dataset? A2: Follow a contamination vs. mislabeling diagnostic workflow. For a suspect sequence, map all reads back to the assembled genome and check for uneven coverage or mixed base calls, which suggest contamination. For a complete public entry, analyze the nucleotide composition (e.g., k-mer profiles) across the entire genome and compare to the claimed taxon's expected profile. A uniform but anomalous profile suggests mislabeling.
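One way to quantify "uneven coverage" is the coefficient of variation of windowed read depth along the assembly. The sketch below assumes per-position depths are already available as a list of numbers (for example, the third column of `samtools depth` output). The window size and the function names are illustrative assumptions, and no universal cut-off is implied.

```python
from statistics import mean, pstdev


def window_means(depths, window=100):
    """Average depth in consecutive, non-overlapping windows."""
    return [mean(depths[i:i + window]) for i in range(0, len(depths), window)]


def coverage_cv(depths, window=100):
    """Coefficient of variation of windowed depth (0.0 means perfectly even)."""
    means = window_means(depths, window)
    overall = mean(means)
    if overall == 0:
        return float("inf")  # no coverage at all
    return pstdev(means) / overall


even = [50] * 1000                    # uniform coverage
stepped = [50] * 500 + [500] * 500    # abrupt ten-fold jump halfway along
print(round(coverage_cv(even), 3))     # 0.0
print(round(coverage_cv(stepped), 3))  # 0.818
```

A sharp step like the second example is worth inspecting alongside mixed base calls, since chimeric or contaminated assemblies often show both.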

Q3: What is the most robust bioinformatic protocol to confirm a suspected viral taxonomic mislabel? A3: A multi-method consensus approach is required. The protocol is detailed below.

Q4: After verifying a mislabel, how do I contribute a correction to GISAID or NCBI? A4: Processes differ by repository.

- NCBI: Use the "Submit an update" link on the GenBank or SRA record page. Provide detailed evidence (alignment files, analysis reports).

- GISAID: Corrections are managed by the originating laboratory. You must contact the submitter identified in the metadata. GISAID itself will not alter records without submitter consent.

Experimental Protocol: Confirming Suspected Viral Mislabeling

Objective: To definitively identify sequences incorrectly classified at the species or genus level in public repositories.

Materials: Suspect sequence (FASTA), high-performance computing cluster, reference databases.

Methodology:

- Primary Screening: Perform a BLASTn search against the entire `nt` database. Restrict output to the top 100 hits. Calculate percent identity and query coverage.

- Phylogenetic Placement: Download the top 50 BLAST hits plus representative references for the claimed taxon. Perform a multiple sequence alignment using MAFFT. Construct a maximum-likelihood tree using IQ-TREE (Model: GTR+F+I+G4). Visually inspect the placement of the query sequence.

- Genome Composition Analysis: Calculate the di-nucleotide frequency of the query sequence using `compseq` (EMBOSS). Compare against a pre-computed profile of the claimed genus using a Euclidean distance metric.

- Marker Gene Analysis: If applicable (e.g., for herpesviruses), extract conserved core genes (e.g., DNA polymerase). Translate to amino acids and perform a BLASTp search. Use the results for a separate, gene-specific phylogenetic analysis.

- Consensus Call: A sequence is flagged as mislabeled if: a) Its top BLAST hits are to a taxon different from its label, b) It clusters robustly (bootstrap >90%) with a different clade in the phylogeny, and c) Its genome composition is an outlier for its labeled group.
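The genome composition step can also be reproduced without EMBOSS. The following is a plain-Python stand-in for `compseq`, not a wrapper around it: it counts overlapping dinucleotides on one strand, skips pairs containing ambiguous bases, and compares two profiles by Euclidean distance. The function names are hypothetical, and the genus reference profile is assumed to have been computed the same way from trusted genomes.

```python
from itertools import product
from math import sqrt

# Fixed ordering of the 16 dinucleotides so profiles are comparable.
DINUCLEOTIDES = ["".join(pair) for pair in product("ACGT", repeat=2)]


def dinucleotide_freqs(seq):
    """Relative frequency of each overlapping dinucleotide, in DINUCLEOTIDES order."""
    seq = seq.upper()
    counts = dict.fromkeys(DINUCLEOTIDES, 0)
    total = 0
    for i in range(len(seq) - 1):
        pair = seq[i:i + 2]
        if pair in counts:  # skips pairs containing N or other ambiguity codes
            counts[pair] += 1
            total += 1
    return [counts[d] / total if total else 0.0 for d in DINUCLEOTIDES]


def euclidean(profile_a, profile_b):
    """Euclidean distance between two equal-length frequency profiles."""
    return sqrt(sum((a - b) ** 2 for a, b in zip(profile_a, profile_b)))


query = dinucleotide_freqs("ACGTACGTNNACGT")
reference = dinucleotide_freqs("ACGTACGTACGT")
print(round(euclidean(query, reference), 4))
```

Whether a given distance counts as an outlier depends on the spread of distances among trusted members of the claimed genus, so that spread should be computed before interpreting a single query value.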

Workflow Visualization:

Table 1: Documented Cases of Viral Sequence Mislabeling in Public Repositories

| Repository | Claimed Taxon | Actual Taxon | Evidence Method | Impact/Notes | Reference (Example) |

|---|---|---|---|---|---|

| NCBI GenBank | Hepatitis C Virus (HCV) | Bovine viral diarrhea virus (BVDV) | Whole-genome phylogeny, BLAST | Misled HCV evolution studies; potential lab contamination. | Kuiken et al., 2006 |

| NCBI SRA | Influenza A virus | Armigeres subalbatus mosquito RNA | k-mer analysis, lack of mapping | Inflated IAV diversity metrics; host contamination. | Lu & Perkins, 2021 |

| GISAID | SARS-CoV-2 (Human) | SARS-CoV-2 in Vero cell line | Presence of C→T mutations hallmark of Vero passage | Skews analysis of human adaptive evolution. | De Maio et al., 2020 |

| NCBI RefSeq | Tomato mosaic virus | Tomato brown rugose fruit virus | Re-analysis of sequencing reads, assembly errors | Obsolete reference impacted diagnostic assay design. | Ongoing curation |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Validating Viral Taxonomy

| Tool / Reagent | Category | Primary Function in Mislabeling Investigation |

|---|---|---|

| NCBI NT/NR Database | Reference Data | Gold-standard database for primary sequence similarity search (BLAST). |

| MAFFT | Bioinformatics Software | Creates accurate multiple sequence alignments for phylogenetic analysis. |

| IQ-TREE | Bioinformatics Software | Infers maximum-likelihood phylogenetic trees with model testing. |

| CheckV | Bioinformatics Pipeline | Assesses genome quality and identifies contamination in viral sequences. |

| Kraken2/Bracken | Bioinformatics Tool | Provides rapid taxonomic classification of sequence reads for contamination screening. |

| Vero E6 Cell Line | Biological Reagent | Common substrate for virus isolation; its genetic signature must be bioinformatically filtered. |

| PhiX Control DNA | Sequencing Reagent | Used as a spike-in during Illumina runs; must be bioinformatically removed to avoid false "virus" hits. |

Logical Decision Pathway for Mislabel Investigation

Viral Taxonomy Troubleshooting Center

Welcome to the Technical Support Center for Viral Sequence Labeling. This resource is designed to assist researchers in diagnosing and correcting issues stemming from the dynamic nature of viral taxonomy, as governed by International Committee on Taxonomy of Viruses (ICTV) updates. Incorrect or outdated labels in sequence databases can compromise experimental reproducibility, meta-analyses, and drug target identification.

FAQs & Troubleshooting Guides

Q1: My BLAST search for a known virus returns sequences with conflicting genus names. Which one is correct? A: This is a common symptom of legacy labeling. The ICTV may have reclassified the virus, but older database entries retain outdated names.

- Troubleshooting Steps:

- Identify the reference: Find the latest ICTV Taxonomy Release report or the official Virus Metadata Resource (VMR) spreadsheet.

- Verify the isolate: Note the exact isolate or strain designation from your BLAST hit (e.g., "Bat coronavirus HKU5-1").

- Cross-reference: Search for this isolate name in the VMR or recent literature to find its current accepted taxonomic assignment (Species, Genus, Family).

- Action: Manually annotate your local sequence file with the verified taxonomy. Consider flagging the outdated database entry if possible.
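The cross-referencing step can be scripted once the VMR spreadsheet has been exported to CSV. The sketch below is a minimal example under stated assumptions: the column names `isolate`, `family`, `genus`, and `species` are hypothetical and must be adapted to the headers of the VMR release actually downloaded, and the rows shown are made-up placeholders rather than real taxa.

```python
import csv
import io


def load_vmr(handle):
    """Index a VMR CSV export by lower-cased isolate name.

    Column names used here are assumptions; adjust them to the real export.
    """
    index = {}
    for row in csv.DictReader(handle):
        key = row["isolate"].strip().lower()
        index[key] = (row["family"], row["genus"], row["species"])
    return index


def current_taxonomy(index, isolate_name):
    """Return (family, genus, species) for an isolate, or None if not listed."""
    return index.get(isolate_name.strip().lower())


# Placeholder rows standing in for a real VMR export.
vmr_csv = io.StringIO(
    "isolate,family,genus,species\n"
    "Example virus 1,Exampleviridae,Examplevirus,Examplevirus primus\n"
    "Example virus 2,Exampleviridae,Otherexamplevirus,Otherexamplevirus secundus\n"
)
vmr = load_vmr(vmr_csv)
print(current_taxonomy(vmr, "example virus 1"))
print(current_taxonomy(vmr, "Unlisted isolate"))  # None
```

Isolate names in BLAST hit titles rarely match the VMR exactly, so an exact lookup like this is best treated as a first pass before a manual or accession-based search.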

Q2: My phylogenetic analysis shows my sequence clustering with members of a new genus, but my lab's legacy annotation says otherwise. How do I reconcile this? A: Your analysis likely reveals the "ground truth" that a prior ICTV update has formalized. Legacy annotations are a major source of error.

- Troubleshooting Protocol:

- Re-run Phylogeny with Current Type Sequences: Download reference sequences for all relevant current species and genera from RefSeq or GenBank, ensuring they have the latest taxonomic lineage in their metadata.

- Perform Robust Tree Inference: Use maximum-likelihood or Bayesian methods. Key supports (bootstrap >90%, Bayesian posterior probability >0.9) on the node linking your sequence to the new genus are strong evidence.

- Action: Update your sequence records and any associated publications or internal databases with the new classification. Cite the specific ICTV taxonomy update that mandated the change.

Q3: How do ICTV updates specifically impact drug and vaccine target identification? A: Mislabeling can lead to targeting non-conserved regions or missing broad-spectrum opportunities.

- Scenario: A conserved protease was identified in all members of the genus Alphavirus (old label). An ICTV update moves one species to the new genus Foxtrotvirus. If databases aren't updated, searches for "Alphavirus protease" will miss the Foxtrotvirus homolog, potentially overlooking a critical divergent strain.

- Solution: Always perform homology searches using protein function/conserved domains (e.g., Pfam) in conjunction with a current taxonomic filter, not just a legacy genus name.

Experimental Protocol: Validating and Correcting Sequence Taxonomy

This protocol outlines a systematic method to verify the taxonomic label of a viral sequence in light of ICTV changes.

Title: Workflow for Taxonomic Validation of Viral Sequences

Objective: To determine the correct, current taxonomic classification for a query viral genome sequence.

Materials:

- Query viral nucleotide or amino acid sequence.

- High-speed internet access to bioinformatics databases.

- Computational tools (command-line or web server): `BLAST+`, `MAFFT`, `IQ-TREE`, `ETE3` toolkit.

Methodology:

- Initial Homology Search: Use `blastn` or `blastp` (for nucleotides or proteins, respectively) against the NCBI NT or NR database. Retain top 50-100 hits with significant E-values (<1e-10).

- Extract Metadata & Identify Conflict: Parse the taxonomic lineage of each hit. Flag conflicts (e.g., hits spread across multiple genera). Download these sequences.

- Acquire Ground Truth Reference Set: Download the official reference sequences for the type species of suspected genera from the NCBI Virus or RefSeq database. Crucially, consult the latest ICTV VMR to ensure this reference set reflects the newest taxonomy.

- Multiple Sequence Alignment: Align your query, the BLAST hits, and the type references using `MAFFT` with the `--auto` flag.

- Phylogenetic Inference: Construct a tree with `IQ-TREE` using ModelFinder (`-m MFP`) and 1000 ultrafast bootstrap replicates (`-B 1000`).

- Taxonomic Assignment: Root the tree using an appropriate outgroup. If your query sequence clusters within a monophyletic clade containing a type reference sequence with high bootstrap support, it belongs to that genus/species.

- Annotation: Use the `ETE3` toolkit to programmatically apply the taxonomic label from the confirmed type reference to your query sequence in its header/annotation file.
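The final annotation step amounts to rewriting FASTA headers once the correct label is known. The sketch below shows only that part in plain Python rather than with ETE3, so it makes no claims about the ETE3 API. The function name, the `taxonomy=` header tag, and the placeholder labels are all illustrative assumptions.

```python
def relabel_fasta(lines, new_labels):
    """Yield FASTA lines with ' taxonomy=<label>' appended to matching headers.

    lines:      iterable of FASTA lines (e.g., an open file handle).
    new_labels: dict sequence ID -> verified taxonomic label (hypothetical input).
    """
    for line in lines:
        line = line.rstrip("\n")
        if line.startswith(">"):
            fields = line[1:].split()
            seq_id = fields[0] if fields else ""
            label = new_labels.get(seq_id)
            if label:
                line = f"{line} taxonomy={label}"
        yield line


# Toy records with placeholder IDs and taxa.
fasta = [">seq1 legacy label", "ACGTACGT", ">seq2", "GGCCGGCC"]
verified = {"seq1": "Exampleviridae;Examplevirus"}
for out_line in relabel_fasta(fasta, verified):
    print(out_line)
```

Appending a tag instead of overwriting the header keeps the legacy label visible, which helps when tracing a record back to its original database entry.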

Workflow Diagram:

Diagram Title: Viral Sequence Taxonomic Validation Workflow

Data Presentation: Impact of a Major ICTV Update

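The 2022-2023 ICTV ratification cycle included a major reorganization of the order Mononegavirales. The table below illustrates the scale of the relabeling challenge such updates create; treat the individual rows and counts as illustrative and confirm each against the current ICTV Master Species List.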

Table 1: Taxonomic Reclassification in Mononegavirales (ICTV 2023 Update)

| Change Type | Family Affected | Old Genus/Species Label | New Genus/Species Label | Estimated Sequences in Public Databases Affected* |

|---|---|---|---|---|

| Genus Creation | Rhabdoviridae | Unclassified "Bas-Congo virus" | New genus: Dichorhavirus | ~150 |

| Genus Merger | Paramyxoviridae | Rubulavirus, Avulavirus | Merged into expanded Rubulavirus | ~5,000 |

| Species Renaming | Pneumoviridae | Human orthopneumovirus (HRSV) | Orthopneumovirus hominis (binomial species name; genus Orthopneumovirus unchanged) | ~10,000+ |

| Family Reassignment | N/A | Bornaviridae (Order) | Moved to new order Hepelivirales | ~2,000 |

*Estimates based on GenBank sequence count searches for the old taxonomic label.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Managing Taxonomic Change

| Item | Function & Relevance to Taxonomic Fixing |

|---|---|

| ICTV Virus Metadata Resource (VMR) | The master spreadsheet linking virus isolates to their current, ICTV-ratified species and higher taxonomy. The primary reference for correction. |

| NCBI Taxonomy Database | The operational database used by GenBank/RefSeq. Contains both current and historical nodes, essential for tracking changes. |

| RefSeq Viral Genome Database | A curated set of reference viral genomes, with annotations that are updated relative to ICTV decisions. Use as a trusted source for type sequences. |

| ETE3 Python Toolkit | A library for programmatically building, analyzing, and visualizing phylogenetic trees and their associated taxonomic data. Enables automated re-labeling. |

| ViralZone (Expasy) | Provides structured information on viral molecular biology, linked to taxonomy. Useful for understanding functional implications of reclassification. |

| Nextclade / Pangolin | Specialized lineage-assignment tools (Pangolin for SARS-CoV-2; Nextclade for SARS-CoV-2, influenza, and other viruses), demonstrating the principle of real-time, lineage-based classification that bypasses slower formal taxonomy. |

Diagram: The Taxonomic Mislabeling Cascade

Diagram Title: Cascade from ICTV Update to Research Error

The Correction Pipeline: Step-by-Step Methods and Bioinformatics Tools for Accurate Reclassification

Troubleshooting Guides & FAQs

FAQ 1: My viral genome assembly is unusually long and contains many mammalian genes. What is the likely cause and how can I resolve it? Answer: This is a classic sign of host DNA contamination. The sequence data likely contains a significant percentage of reads from the host cell line or tissue used to propagate the virus.

- Solution: Use a host subtraction tool (e.g., BBduk from BBMap, Bowtie2) to map reads against the host reference genome and remove matching sequences before assembly. Always run a FastQC report post-subtraction to verify the removal of contaminant sequences.

FAQ 2: After quality trimming, my sequence depth has dropped dramatically, making variant calling unreliable. How can I avoid this? Answer: Over-trimming with aggressive quality or adapter trimming parameters is the common cause.

- Solution: Use adaptive trimmers like fastp or Trimmomatic with careful, validated parameters. Perform quality trimming in two stages: 1) Light trimming for initial QC, 2) Post-contamination screening, apply targeted trimming only to residual adapters. Compare pre- and post-trimming depth metrics in a table to optimize.

FAQ 3: My QC reports show high-quality scores, but BLAST analysis of contigs reveals sequences from common lab contaminants (e.g., E. coli, phiX). Why did my initial QC miss this? Answer: Standard QC checks metrics like Phred scores and GC content but does not screen for specific biological contaminants.

- Solution: Integrate a mandatory contamination screening tool into your workflow. Use Kraken2 or DeconSeq with a custom database containing common lab contaminants, cloning vectors, and phylogenetically related viruses to identify and filter these sequences.

FAQ 4: I am working with unknown or highly divergent viruses. How can I screen for contamination when reference-based tools fail? Answer: Reference-free methods are essential here.

- Solution: Employ sequence composition-based tools. Tools like BlobToolKit can visualize sequence "blobs" based on GC content and read depth. Contaminants often form distinct clusters separate from your target virus. Additionally, use protein-level screens with DIAMOND against non-redundant databases to identify anomalous taxonomic assignments.

Key Experimental Protocols

Protocol 1: Pre-Assembly Contamination Screening & Host Subtraction

Objective: To remove host-derived and common contaminant reads prior to de novo assembly.

- Input: Raw paired-end FASTQ files.

- Adapter/Quality Trimming: Run fastp (v0.23.2) with default parameters to remove adapters and low-quality ends.

- Host Read Subtraction: Align reads to the host genome (e.g., human GRCh38) using Bowtie2 (v2.5.1) in sensitive end-to-end mode (--very-sensitive). Extract unmapped read pairs using samtools (v1.17).

- Contaminant Screening: Screen unmapped reads against a curated database of common contaminants using Kraken2 (v2.1.2). The database should include phiX174, sequencing vectors, E. coli genomes, and yeast.

- Output: "Clean" FASTQ files for assembly, and a contamination report table.
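The four steps of Protocol 1 can be chained from Python. The sketch below only builds the command lines and does not execute anything; the file names, the `prefix` argument, and the function name `build_qc_commands` are hypothetical, and the host index and contaminant database are assumed to exist already.

```python
def build_qc_commands(r1, r2, host_index, contaminant_db, prefix="sample"):
    """Return the commands for trimming, host subtraction and screening.

    All output file names are hypothetical placeholders derived from `prefix`.
    """
    trimmed = (f"{prefix}.trim_R1.fastq.gz", f"{prefix}.trim_R2.fastq.gz")
    unmapped = (f"{prefix}.nohost_R1.fastq", f"{prefix}.nohost_R2.fastq")
    sam = f"{prefix}.host.sam"
    return [
        # 1) Adapter/quality trimming with fastp defaults.
        ["fastp", "-i", r1, "-I", r2, "-o", trimmed[0], "-O", trimmed[1]],
        # 2) End-to-end alignment of trimmed reads to the host genome.
        ["bowtie2", "--very-sensitive", "-x", host_index,
         "-1", trimmed[0], "-2", trimmed[1], "-S", sam],
        # 3) Keep pairs where both mates are unmapped (flag 12) and
        #    skip secondary alignments (flag 256).
        ["samtools", "fastq", "-f", "12", "-F", "256",
         "-1", unmapped[0], "-2", unmapped[1], sam],
        # 4) Screen remaining reads; unclassified pairs become the clean set.
        ["kraken2", "--db", contaminant_db, "--paired",
         "--report", f"{prefix}.kraken_report.txt",
         "--output", f"{prefix}.kraken_output.txt",
         "--unclassified-out", f"{prefix}.clean#.fastq",
         unmapped[0], unmapped[1]],
    ]


commands = build_qc_commands("reads_R1.fastq.gz", "reads_R2.fastq.gz",
                             "GRCh38_index", "contaminant_db")
for cmd in commands:
    print(" ".join(cmd))
```

Each list can be passed to `subprocess.run(cmd, check=True)` once the tools are installed; keeping command construction separate from execution makes the pipeline easy to log and review.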

Protocol 2: Post-Assembly Contig Classification and Verification

Objective: To taxonomically label all assembled contigs and flag mislabelled or contaminant sequences.

- Input: Assembled contigs (FASTA) from tools like SPAdes or MEGAHIT.

- Primary Classification: Run all contigs through Kaiju (v1.9.2) against the NCBI BLAST non-redundant protein database (nr_euk).

- Secondary Validation: For contigs classified as viral, perform a protein-level search using DIAMOND (v2.1.6) BLASTx against the nr database with an e-value cutoff of 1e-5.

- Cross-Reference: Manually inspect top hits for consistency. A contig labelled as "Human adenovirus C" should not have top protein hits to bacteriophages.

- Output: A final, verified contig set with reliable taxonomic labels.

Data Presentation

Table 1: Impact of Sequential QC Steps on Simulated Metagenomic Dataset (n=10M reads)

| QC Step | Tool Used | Reads Retained | % Human Reads | % PhiX Reads | Top Viral Hit (Read Count) |

|---|---|---|---|---|---|

| Raw Data | - | 10,000,000 | 45.2% | 0.8% | Influenza A (12,450) |

| After Adapter Trim | fastp | 9,987,120 | 45.2% | 0.8% | Influenza A (12,450) |

| After Host Subtraction | Bowtie2 vs. GRCh38 | 5,487,120 | 0.1% | 1.5%* | Influenza A (12,448) |

| After Contaminant Filter | Kraken2 Filter | 5,400,105 | 0.1% | 0.01% | Influenza A (12,448) |

*Percentage increased post-host removal due to reduced denominator.
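The footnote's point about the reduced denominator can be checked with a short calculation. The sketch assumes, for illustration, that the absolute number of PhiX reads is unchanged by host subtraction and equals 0.8% of the raw read count; the function name is hypothetical.

```python
def percent_of_total(contaminant_reads, reads_retained):
    """Contaminant reads as a percentage of all retained reads."""
    return 100.0 * contaminant_reads / reads_retained


raw_reads = 10_000_000
phix_reads = round(raw_reads * 0.008)   # 0.8% of raw reads (Table 1)
after_host_subtraction = 5_487_120      # reads retained (Table 1)

before = percent_of_total(phix_reads, raw_reads)
after = percent_of_total(phix_reads, after_host_subtraction)
print(f"{before:.1f}% -> {after:.1f}%")  # prints: 0.8% -> 1.5%
```

The same read count therefore nearly doubles as a share of the data once roughly 45% of reads have been removed, which is why contaminant percentages should be compared alongside absolute counts.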

Table 2: Common Contaminants and Recommended Screening Tools

| Contaminant Type | Example Organisms | Recommended Screening Tool | Database to Use |

|---|---|---|---|

| Sequencing Control | PhiX174 | Kraken2, BLASTn | Custom PhiX genome |

| Cloning Vector | pUC19, pBR322 | VecScreen (NCBI), BLASTn | UniVec database |

| Common Lab Bacteria | E. coli, B. subtilis | Kraken2, DeconSeq | RefSeq complete genomes |

| Host Genome | Human, Mouse, Vero Cells | Bowtie2, HISAT2 | Host reference genome |

| Cross-Species | Other viruses in study | BLASTn, DIAMOND BLASTx | Custom local database |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in QC/Contamination Screening |

|---|---|

| PhiX Control v3 | Provides a known sequence for run quality monitoring; must be bioinformatically filtered. |

| Negative Extraction Controls | Helps identify kit/lab-borne contaminants present in extraction reagents. |

| Host rRNA Depletion Probes | Reduces the proportion of host reads during library prep, improving viral target coverage. |

| Synthetic Spike-in Controls (e.g., ERCC RNA) | Allows for quantitative assessment of sequencing sensitivity and detection thresholds. |

| Nuclease-free Water (certified) | Used as a no-template control to detect ambient nucleic acid contaminants in reagents. |

| Curated Contaminant Database | A locally compiled FASTA file of known lab contaminants for precise screening. |

Visualizations

Title: Viral QC and Contamination Screening Workflow

Title: Contaminant Identification Decision Tree

Troubleshooting & FAQs

Q1: My BLASTn search against nt returns no significant hits (E-value > 0.001) for my viral sequence. What should I do? A1: This suggests a novel or highly divergent virus. Proceed as follows:

- Verify Parameters: Ensure you are using -task megablast (for highly similar sequences) or -task blastn (for more divergent sequences). For short reads, use -task blastn-short.

- Iterative Search: Perform a BLASTx (translated nucleotide vs. protein database) search. A significant protein-level hit can reveal conserved functional domains even when nucleotide similarity is low.

- Database Selection: Search against the dedicated refseq_viral and env_nt databases instead of the full nt. This reduces noise from non-viral sequences.

- Lower Stringency: Temporarily increase the E-value cutoff to 10 and examine the taxonomic lineage of marginal hits for clues.

Q2: My k-mer frequency analysis shows an ambiguous result, placing my sequence between two distinct viral families. How is this resolved? A2: Ambiguous k-mer profiles often indicate recombination, contamination, or poor sequence quality.

- Quality Control: Re-examine the sequence quality metrics (e.g., Phred scores) and trim low-quality ends.

- Compositional Segmentation: Use sliding-window analysis (e.g., a windowed k-mer comparison, or similarity-plot tools such as SimPlot or RDP) to check if different genome regions have profiles matching different references, suggesting recombination.

- Complementary Method: Cross-check with the Genome Composition Check (G+C content & dinucleotide bias). A consistent composition across the genome supports a single origin, while a shift supports recombination or contamination. See Table 1.

- Reagent Solution: Use high-fidelity polymerases (e.g., Q5, Phusion) during amplification to reduce chimera formation.
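The composition check described in the list above can be approximated with a sliding-window G+C scan. This is a minimal sketch rather than a validated recombination test: the window and step sizes are arbitrary illustrative values, and the function names are hypothetical.

```python
def gc_content(seq):
    """Fraction of G+C among unambiguous bases (A, C, G, T)."""
    seq = seq.upper()
    acgt = sum(seq.count(base) for base in "ACGT")
    return (seq.count("G") + seq.count("C")) / acgt if acgt else 0.0


def sliding_gc(seq, window=500, step=250):
    """Return (start, gc) pairs for windows along the sequence."""
    last_start = max(len(seq) - window, 0)
    return [(start, gc_content(seq[start:start + window]))
            for start in range(0, last_start + 1, step)]


def max_gc_shift(seq, window=500, step=250):
    """Largest difference in G+C between any two windows."""
    values = [gc for _, gc in sliding_gc(seq, window, step)]
    return max(values) - min(values)


# Toy chimera: an AT-rich half joined to a GC-rich half.
toy = "AT" * 100 + "GC" * 100
windows = sliding_gc(toy, window=100, step=100)
print(windows)
print(max_gc_shift(toy, window=100, step=100))
```

A large shift between windows is only a prompt for closer inspection; real genomes also show local compositional variation, so the result should be compared against the spread seen in reference genomes of the same family.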

Q3: How do I interpret conflicting results between BLAST (suggests Virus A) and k-mer profiling (suggests Virus B)? A3: This conflict is a key signal for potential mislabeling.

- Prioritize Local Similarity: BLAST may be identifying a conserved region (e.g., a common domain) rather than the whole genome. Examine the BLAST alignment—is the high similarity localized?

- Trust Whole-Genome Signal: k-mer frequency reflects the global composition and is less swayed by a single conserved region. A strong, unambiguous k-mer signal for Virus B is strong evidence for mislabeling.

- Protocol - Targeted Re-BLAST: Extract the high-scoring segment pair (HSP) region from your query and the matching region from the BLAST hit. Perform a separate, detailed alignment of these sub-sequences. If they are nearly identical while the flanking regions are not, the original BLAST hit is likely misleading for the full-length label.

Q4: What are the critical thresholds for G+C content and dinucleotide frequency deviation that indicate a probable taxonomic mismatch? A4: There is no universal fixed threshold, as variation exists within taxa. Use the following comparative framework:

Table 1: Genome Composition Check Thresholds & Interpretation

| Metric | Suggested Analysis Threshold | Interpretation of Mismatch |

|---|---|---|

| G+C Content | Deviation > 10% from reference genus/family average. | Strong indicator of different taxonomic grouping. |

| Dinucleotide Bias (δ-distance) | δ > 0.06 (6% deviation) from expected genus/family profile. | Supports distinct evolutionary lineage or host. |

| CpG & TpA Suppression | Pattern (presence/absence) incongruent with expected viral family. | Mismatch in host interaction or replication machinery. |

Protocol: Calculate the Z-score for each dinucleotide in your query versus the reference set. A cluster of outliers (|Z-score| > 3) for multiple dinucleotides is a significant red flag.
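The Z-score protocol above can be sketched with the Python standard library. The function names are hypothetical, the toy sequences are far shorter than real genomes, and a dinucleotide with zero variance in the reference set is reported as 0.0 here simply to avoid division by zero.

```python
from itertools import product
from statistics import mean, stdev

DINUCS = ["".join(pair) for pair in product("ACGT", repeat=2)]


def dinuc_freqs(seq):
    """Relative frequency of each of the 16 dinucleotides (overlapping)."""
    seq = seq.upper()
    counts = dict.fromkeys(DINUCS, 0)
    total = 0
    for i in range(len(seq) - 1):
        pair = seq[i:i + 2]
        if pair in counts:          # skips pairs containing N or other codes
            counts[pair] += 1
            total += 1
    return {d: (c / total if total else 0.0) for d, c in counts.items()}


def dinuc_zscores(query, references):
    """Z-score of each query dinucleotide frequency against >= 2 references."""
    q = dinuc_freqs(query)
    refs = [dinuc_freqs(r) for r in references]
    zscores = {}
    for d in DINUCS:
        values = [r[d] for r in refs]
        sd = stdev(values)
        zscores[d] = (q[d] - mean(values)) / sd if sd > 0 else 0.0
    return zscores


references = ["ACGTACGTAC", "ACGTTGCAAC", "AACCGGTTAC"]
z = dinuc_zscores("CGCGCGCGCG", references)
outliers = sorted(d for d, value in z.items() if abs(value) > 3)
print(outliers)
```

In practice the reference set should contain enough genomes from the claimed genus or family for the standard deviation to be meaningful.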

Q5: During a k-mer profiling workflow, the software fails with a memory error on large datasets. How can I optimize this? A5: This is common with large viral metagenomic assemblies.

- Reduce k-mer size: Start with a smaller k (e.g., k=4 or 6) for initial broad classification, which reduces the feature space.

- Use Streaming/Probabilistic Data Structures: Employ tools built on MinHash sketches (e.g., Mash, sourmash) or on other probabilistic structures such as Bloom filters and Count-Min Sketches, instead of storing all k-mer counts in memory.

- Subsample Sequences: For a quick check, uniformly subsample your contigs (e.g., using seqtk sample). If the subsample's profile matches the full dataset's, proceed with the subsample for iterative testing.

- Cluster First: Cluster similar sequences in your dataset using CD-HIT-EST before profiling to reduce redundant computation.

Research Reagent & Computational Toolkit

Table 2: Essential Solutions for Taxonomic Verification Experiments

| Item / Tool | Function & Application |

|---|---|

| BLAST+ Suite | Core tool for sequence homology search against NCBI or local databases. |

| Kraken2 / Kaiju | For rapid taxonomic classification of sequence reads/contigs (Kraken2 uses nucleotide k-mers; Kaiju uses protein-level matches). |

| Jellyfish / KMC3 | Efficient k-mer counting for generating frequency profiles from raw sequences. |

| Phusion / Q5 DNA Polymerase | High-fidelity PCR for amplicon generation with fewer chimeras. |

| NCBI Viral RefSeq Database | Curated, non-redundant set of viral reference genomes for reliable comparison. |

| CheckV | For assessing genome quality and identifying host contamination in viral sequences. |

| Sklearn / R | For implementing PCA/LDA on k-mer frequency matrices for visualization. |

| Geneious / CLC Bio | Commercial GUI platforms for integrating BLAST, composition, and alignment views. |

Experimental Protocols

Protocol 1: Integrated Taxonomic Verification Pipeline

- Input: Putatively labeled viral genome sequence (query.fasta).

- Step 1 - BLAST-Based Screen:

  - Run: blastn -query query.fasta -db refseq_viral -outfmt "6 qseqid sseqid pident length evalue staxids" -evalue 1e-5 -out blast_results.tsv

  - Extract top 10 hits' taxonomy IDs (staxids).

- Step 2 - k-mer Profiling:

  - Generate k-mer spectrum: jellyfish count -m 8 -s 100M -t 10 -C query.fasta -o query_mercounts.jf

  - Compute k-mer profiles for reference taxa from their genomes (e.g., downloaded from RefSeq), using the same k.

  - Calculate Manhattan distance between query and reference profiles.

- Step 3 - Genome Composition Check:

  - Compute query.fasta G+C content (e.g., using seqkit fx2tab --name --gc).

  - Calculate dinucleotide frequencies and Z-scores against reference set.

- Step 4 - Consensus Labeling:

  - Aggregate results from Steps 1-3 into a decision matrix.

  - If methodologies conflict, flag sequence for manual inspection and potential re-labeling.
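The Manhattan-distance comparison in Step 2 can be illustrated in pure Python. This sketch is a simplified stand-in for a Jellyfish-based workflow: it counts k-mers on one strand only (Jellyfish's -C option counts canonical k-mers), and the function names, the small k, and the toy sequences are assumptions made for the example.

```python
from collections import Counter

VALID_BASES = set("ACGT")


def kmer_profile(seq, k=4):
    """Normalized k-mer frequency profile, skipping k-mers with ambiguous bases."""
    seq = seq.upper()
    kmers = (seq[i:i + k] for i in range(len(seq) - k + 1))
    counts = Counter(kmer for kmer in kmers if set(kmer) <= VALID_BASES)
    total = sum(counts.values())
    return {kmer: n / total for kmer, n in counts.items()} if total else {}


def manhattan(p, q):
    """Sum of absolute frequency differences over all k-mers in either profile."""
    return sum(abs(p.get(kmer, 0.0) - q.get(kmer, 0.0)) for kmer in set(p) | set(q))


def closest_reference(query_seq, references, k=4):
    """Return (best_name, distances) for a dict of name -> reference sequence."""
    query = kmer_profile(query_seq, k)
    distances = {name: manhattan(query, kmer_profile(seq, k))
                 for name, seq in references.items()}
    return min(distances, key=distances.get), distances


references = {"taxon_A": "ATATATATATATGCAT", "taxon_B": "GCGCGCGGCCGCGCGC"}
best, distances = closest_reference("ATATATATTATATGCA", references, k=2)
print(best, distances)
```

The distance for each candidate taxon can then be entered into the Step 4 decision matrix next to the BLAST and composition results.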

Protocol 2: Constructing a k-mer Reference Database for a Viral Family

- Data Curation: Download all complete genomes for the target viral family from RefSeq.

- Normalization: Remove duplicate sequences (≥99% identity) using cd-hit-est.

- k-mer Counting: For each genome, compute its k-mer frequency vector (normalized to total k-mers) using a standardized k (e.g., k=6).

- Profile Creation: For the taxon, create a consensus profile by averaging the frequency vectors of all member genomes. Store variance for each k-mer.

- Database Formatting: Store profiles in a structured format (JSON/TSV) with metadata (taxon ID, number of genomes, average G+C).
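The profile-creation and formatting steps above might look like the following sketch. The record layout, the field names, and the placeholder taxon ID are hypothetical choices for illustration; per-genome profiles are assumed to be dictionaries of normalized k-mer frequencies.

```python
import json
from statistics import mean, pvariance


def consensus_profile(profiles):
    """Average per-genome k-mer frequency vectors and keep the variance.

    profiles: list of dicts mapping k-mer -> normalized frequency.
    A k-mer absent from a genome is treated as frequency 0.0.
    """
    kmers = sorted(set().union(*profiles))
    consensus = {}
    for kmer in kmers:
        values = [p.get(kmer, 0.0) for p in profiles]
        consensus[kmer] = {"mean": mean(values), "var": pvariance(values)}
    return consensus


def make_record(taxon_id, profiles, gc_values):
    """Bundle the consensus profile with metadata for storage as JSON."""
    return {
        "taxon_id": taxon_id,                 # hypothetical identifier
        "n_genomes": len(profiles),
        "mean_gc": mean(gc_values),
        "profile": consensus_profile(profiles),
    }


genome_profiles = [{"AA": 0.5, "AC": 0.5}, {"AA": 1.0}]
record = make_record("example_taxon", genome_profiles, [0.40, 0.44])
print(json.dumps(record, indent=2))
```

Storing the variance alongside the mean lets later comparisons weight each k-mer by how stable it is within the taxon.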

Visualizations

Title: Core Methodologies Workflow for Taxonomic Verification

Title: Troubleshooting Conflicting BLAST and k-mer Results

FAQs & Troubleshooting Guides

Q1: During tree construction, my multiple sequence alignment (MSA) is poor, leading to low-confidence phylogenies. What are the key checks?

A: Poor MSA is a common bottleneck. First, verify your alignment program and parameters. For viral sequences, MAFFT with the --auto flag is often robust. Check the alignment manually in a viewer like AliView; look for excessive gaps or misaligned conserved domains. Quantify alignment quality with metrics like sum-of-pairs score. If issues persist, consider refining your input sequence set—highly divergent sequences can break alignments. Pre-filtering sequences by length or using an alignment trimmer like trimAl may be necessary.

Q2: The reconciliation analysis between my gene tree and the reference species tree shows an unexpectedly high number of duplication events. Is this a tool error or a real biological signal? A: First, rule out technical artifacts. Ensure the species tree topology is correct for your taxa. High duplication counts often arise from incorrect sequence labelling (paralogs mislabelled as orthologs) or poor gene tree resolution. Re-run the gene tree inference with a different method (e.g., Bayesian instead of ML) or add an outgroup to root the tree properly. Use Table 1 to compare reconciliation outputs from different tools as a sensitivity check.

Table 1: Comparison of Phylogenetic Reconciliation Tool Outputs for a Test Dataset

| Tool | Input Trees | Events Predicted (LGT/Duplication/Loss) | Run Time | Recommended Use Case |

|---|---|---|---|---|

| ALE | Gene (rooted), Species | 2 / 5 / 12 | ~30 min | Probabilistic; best for large, noisy trees |

| EcceTERA | Gene (unrooted), Species | 1 / 8 / 15 | ~5 min | Parsimony-based; fast for hypothesis testing |

| Notung | Gene (rooted), Species | 3 / 6 / 10 | ~2 min | Parsimony with binary resolution; good for visualization |

Q3: My final reconciled tree still places my query sequence in a clade with species from a different host, suggesting mislabelling. What is the definitive validation step? A: Phylogenetic reconciliation provides statistical evidence. The definitive step is to examine the bootstrap or posterior support values for the node placing your query. Support >90% (ML bootstrap) or >0.95 (Bayesian posterior probability) indicates strong evidence for mislabelling. You should also check for consistent signals across different gene trees (if using a multi-locus approach). Report the sequence to the original database curator with your reconciled tree as evidence.
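The support thresholds and the multi-locus consistency check from the answer above can be written as a small decision helper. This is an illustrative sketch: the input structure (one dictionary per gene tree) and the function names are assumptions, and the support values would in practice be read from the tree files.

```python
SUPPORT_THRESHOLDS = {"ml_bootstrap": 90.0, "bayesian_pp": 0.95}


def is_strong(method, support):
    """True if the node support exceeds the threshold for its method."""
    return support > SUPPORT_THRESHOLDS[method]


def mislabel_evidence(placements, original_label):
    """Summarize how many gene trees strongly contradict the original label.

    placements: list of dicts with keys 'gene', 'method', 'support' and
                'clade_label' (the taxon of the clade containing the query).
    """
    conflicting = [p for p in placements if p["clade_label"] != original_label]
    strong = [p for p in conflicting if is_strong(p["method"], p["support"])]
    return {
        "genes_tested": len(placements),
        "strong_conflicts": len(strong),
        "consistent": bool(placements) and len(strong) == len(placements),
    }


placements = [
    {"gene": "polymerase", "method": "ml_bootstrap", "support": 97.0,
     "clade_label": "rodent clade"},
    {"gene": "capsid", "method": "bayesian_pp", "support": 0.99,
     "clade_label": "rodent clade"},
]
summary = mislabel_evidence(placements, original_label="porcine clade")
print(summary)
```

A result with `consistent` set to False does not rule out mislabelling; it indicates that at least one locus lacks strong support and warrants a closer look.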

Q4: What are the minimum computational resources required for these analyses on a large viral dataset (~10,000 sequences)? A: For large NGS-derived viral datasets, resource requirements scale significantly. See Table 2 for benchmarks.

Table 2: Computational Resource Requirements for Large-Scale Phylogenetic Reconciliation

| Analysis Step | Typical Software | Minimum RAM | Recommended Cores | Estimated Time (10k seqs) |

|---|---|---|---|---|

| MSA | MAFFT | 32 GB | 16 | 2-4 hours |

| Gene Tree Inference | IQ-TREE | 64 GB | 24 | 6-12 hours |

| Reconciliation | ALE | 16 GB | 1 | 1-2 hours |

Detailed Experimental Protocol: Phylogenetic Reconciliation for Taxonomic Validation

Protocol Title: Full-Protocol for Validating Taxonomic Placement of Viral Sequences via Gene Tree/Species Tree Reconciliation.

1. Input Data Curation:

- Query Sequences: Gather putatively mislabelled viral nucleotide/protein sequences.

- Reference Sequence Set: Download all reference sequences from NCBI RefSeq/Viral for the suspected correct and original taxa. Use a broad evolutionary range.

- Species Tree: Construct a trusted species tree from the ICTV taxonomy or a published mega-tree (e.g., from TreeBase). Convert to Newick format.

2. Multiple Sequence Alignment & Trimming:

- Align sequences using MAFFT v7:

mafft --auto --thread 16 input.fasta > alignment.aln

- Visually inspect alignment in AliView. Trim unreliable regions with trimAl:

trimal -in alignment.aln -out alignment.trimmed.aln -automated1

- Generate alignment quality report with AMAS:

AMAS summary -i alignment.trimmed.aln -f fasta -d dna

3. Phylogenetic Gene Tree Inference:

- Perform Model Testing & Maximum Likelihood tree building with IQ-TREE2:

iqtree2 -s alignment.trimmed.aln -m MFP -B 1000 -T AUTO -pre my_genetree

- Root the resulting tree (my_genetree.treefile) using the outgroup method with FigTree or nw_reroot from Newick Utilities.

4. Phylogenetic Reconciliation Analysis:

- Using the rooted gene tree and the reference species tree, run a reconciliation with EcceTERA:

java -jar ecceTERA.jar -g genetree.rooted.nwk -s speciestree.nwk -t . -o ./ecceTERA_output

- Interpret the output Reconciliations.txt file, focusing on the predicted event (Speciation, Duplication, Transfer, Loss) at each node.

5. Taxonomic Re-assignment Recommendation:

- Map the reconciled tree topology onto taxonomic labels. If the query sequence consistently groups within a monophyletic clade of a taxon different from its original label with high support, it is a candidate for reclassification.

- Generate a final visualization (see Diagram 1) summarizing the evidence.

Visualization: Reconciliation Workflow & Output

Diagram Title: Phylogenetic reconciliation workflow for taxonomic validation.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Reagents for Phylogenetic Reconciliation Experiments

| Item Name | Type (Software/Data/Service) | Function in Validation Protocol |

|---|---|---|

| MAFFT | Software | Creates accurate multiple sequence alignments, critical for downstream tree accuracy. |

| IQ-TREE 2 | Software | Infers maximum likelihood phylogenies with integrated model testing and bootstrapping. |

| EcceTERA / ALE | Software | Performs the core reconciliation algorithm between gene and species trees. |

| ICTV Master Species List | Reference Data | Provides the authoritative, hierarchical species tree for viruses. |

| NCBI RefSeq Viral Database | Reference Data | Curated, non-redundant source for high-quality reference sequences. |

| TrimAl | Software | Automates the trimming of spurious alignment regions to improve phylogenetic signal. |

| CIPRES Science Gateway | Web Service | Provides high-performance computing access for resource-intensive tree inference steps. |

| FigTree / iTOL | Visualization Tool | Visualizes and annotates final trees for publication and interpretation. |

Troubleshooting Guides & FAQs

VICTOR (Virus Classification and Tree Building Online Resource)

Q1: My genome-based phylogeny in VICTOR fails or produces a poorly resolved tree. What are the most common causes? A: This is typically due to low sequence similarity or incomplete genome data. VICTOR relies on pairwise comparisons of genome sequences. Ensure your input FASTA contains complete or near-complete viral genomes. Sequences with less than 15% pairwise similarity to any in the reference set may fail. Pre-filter your dataset to remove highly fragmented or low-quality sequences.

Q2: What does the "Distance method not applicable" error mean? A: This error arises when the chosen distance formula (e.g., formula D0) cannot be calculated for your dataset, often because sequences are too divergent or share no detectable homology. Switch to a more robust distance formula within VICTOR, such as the formula D4 recommended for highly divergent sequences.

vConTACT2 (Virus Contig Cluster and Taxonomy)

Q3: vConTACT2 classifies my phage contigs as "unclustered" or "No ICTV label." How should I proceed? A: "Unclustered" indicates your contigs did not share enough protein cluster similarity with references to form a robust cluster. First, verify you used the correct --db setting ('prokaryotic' or 'nr'). Lower the --min-score parameter (default 1) cautiously. Consider augmenting the analysis by including closely related genomes from GenBank in your input to provide more context for clustering.

Q4: The .csv output file is difficult to interpret. What are the key columns for taxonomy?

A: Focus on VC (Virus Cluster), VC.Subcluster, and Automatic.ICTV.Taxonomy. The Taxonomic.status column flags sequences as "Tool-Trusted," "Pending," or "Unknown." Cross-reference the VC number with the network file in Cytoscape for visual validation of cluster relationships.

Genome Detective

Q5: Genome Detective assigns a low "Score" or "Confidence" to my viral identification. What affects this score? A: The score is based on breadth and depth of coverage against the best-matching reference. A low score often results from a highly divergent virus, a chimeric assembly, or contaminating host reads. Use the "Alignment" tab to inspect read mapping. Preprocess your reads to remove host contamination and ensure a clean, quality-trimmed input.

Q6: The tool reports "Multiple best matches" for a single sequence. Is this a bug? A: No. This indicates your query sequence is nearly equally similar to multiple reference sequences, suggesting they belong to the same taxonomic group or that the reference database lacks resolution at that level. Review the matched references; they likely share the same genus or family-level classification.

Experimental Protocol: Viral Taxonomy Re-Assignment Workflow

Objective: To correct taxonomic labels of uncharacterized or mislabeled viral genome sequences.

Materials & Input:

- Viral genome sequences in FASTA format.

- Computational Tools: VICTOR, vConTACT2, Genome Detective.

- Reference Databases: NCBI RefSeq, ICTV Master Species List, specialty databases (e.g., RVDB for vertebrate viruses).

Methodology:

- Initial Quality & Composition Check:

- Upload FASTA to Genome Detective. Select the appropriate viral module (e.g., "Viral Detective").

- Review the output: assigned taxonomy, confidence score, and genome completeness.

- Export the amino acid file (.faa) of predicted genes for downstream analysis.

Protein-Centric Clustering (vConTACT2):

- Prepare the gene prediction file (.faa) from Genome Detective and/or from your own annotation pipeline.

- Run vConTACT2 in --db 'prokaryotic' mode for phages or --db 'nr' for broader viruses.

- Use default parameters initially: --rel-mode 'Diamond' --pcs-mode MCL --vcs-mode ClusterONE.

- Analyze the output network (.graphml) in Cytoscape and the taxonomy file (.csv).

Whole-Genome Phylogenetic Validation (VICTOR):

- For sequences receiving a firm cluster assignment from vConTACT2, perform definitive classification.

- Take the nucleotide FASTA and submit to VICTOR using the "TYPING" workflow.

- Select the appropriate distance formula (D4 for divergent sequences).

- Root the resulting phylogenetic tree (Newick format) with a known outgroup.

Synthesis of Evidence:

- Compare results from all three tools using the decision matrix below.

Data Presentation: Tool Comparison & Decision Matrix

Table 1: Core Function and Output of Taxonomic Tools

| Tool | Core Principle | Input | Primary Output | Best For |

|---|---|---|---|---|

| Genome Detective | Unified alignment & k-mer scoring | Reads/Contigs/Genomes | Taxonomic label, confidence score, assembly | Rapid initial identification & assembly QC |

| vConTACT2 | Protein-sharing social network | Gene predictions (.faa) | Protein clusters, viral clusters (VCs) | Classifying unknown phages & discovering new groups |

| VICTOR | Genome BLAST distance phylogeny | Whole genomes (.fasta) | Phylogenetic tree, taxonomic proposal | Definitive genus/species demarcation |

Table 2: Troubleshooting Common Outcomes

| Observed Result | Likely Cause | Recommended Action |

|---|---|---|

| Low confidence/score (Genome Detective) | Divergent virus, contamination | Decontaminate reads, check alignment view, try alternative module |

| "Unclustered" (vConTACT2) | Novelty or insufficient gene-sharing | Lower --min-score, add related public genomes to input |

| Poor tree resolution (VICTOR) | Low similarity, fragmented genomes | Use formula D4, filter for >50% complete genomes |

Visualization: Taxonomic Re-Labelling Workflow

Diagram Title: Viral Taxonomy Correction Decision Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational "Reagents" for Viral Taxonomy

| Item | Function & Note | Source/Access |

|---|---|---|

| NCBI Viral RefSeq DB | Curated reference genomes; critical for alignment & tree-building. | FTP: NCBI |

| ICTV Master Species List | Ground truth for taxonomic nomenclature; final arbiter for labels. | ictv.global |

| RVDB (C-RVDB) | Non-redundant virus DB; reduces host contamination in searches. | rvdb.dbi.udel.edu |

| Diamond BLAST | Ultra-fast protein aligner; core engine for vConTACT2. | github.com/bbuchfink/diamond |

| Cytoscape | Network visualization; essential for interpreting vConTACT2 clusters. | cytoscape.org |

| MCL Algorithm | Markov Cluster algorithm; clusters proteins in vConTACT2. | micans.org/mcl |

| Newick Tree File | Standard output from VICTOR; for viewing/editing trees. | N/A |

Technical Support Center

Troubleshooting Guides & FAQs

FAQ 1: What is the first step before submitting a correction to a sequence database? Answer: The first and most critical step is to definitively verify the mislabeling using robust phylogenetic analysis and, where possible, wet-lab validation (e.g., PCR, sequencing of original samples). You must gather all supporting evidence (multiple sequence alignments, phylogenetic trees, metadata discrepancies) before initiating a submission. Contacting the original submitter for clarification is also recommended.

FAQ 2: My correction submission to GenBank was rejected. What are common reasons? Answer: Common reasons include:

- Insufficient Evidence: Phylogenetic analysis with low bootstrap support or poor alignment.

- Incorrect Format: Not using the required forms or data formats specified by the database.

- Scope Error: Attempting to change a name based on unpublished data or taxonomic opinion not widely accepted.

- Contact Issues: The database may attempt to contact the original submitter and receive no response, stalling the process.

FAQ 3: How do I handle a correction when the original submitter is unresponsive? Answer: NCBI and ENA have policies for this. You must demonstrate a good-faith effort to contact the original submitter (document emails). Your evidence for mislabeling must be exceptionally strong and published in a peer-reviewed journal. The database staff will then make a final judgment based on the provided evidence.

FAQ 4: What's the difference between updating a record and suppressing it? Answer:

- Update/Correction: The record remains public but with corrected taxonomic information (e.g., organism name, lineage). Used for clear-cut bioinformatics errors.

- Suppression/Withdrawal: The record is removed from public view. Used for irredeemable errors like sample contamination, synthetic constructs, or non-viral sequences.

FAQ 5: How long does the re-labeling process typically take? Answer: The timeline is highly variable. A simple update with consent from the original submitter may take 2-4 weeks. A contested or complex case requiring database staff arbitration can take several months. See the table below for average estimates.

Data Presentation

Table 1: Comparison of Major Database Correction Processes

| Database | Primary Correction Form/Tool | Key Evidence Required | Avg. Processing Time (Business Days) | Original Submitter Consent Needed? |

|---|---|---|---|---|

| NCBI GenBank | Sequin software or BankIt web tool; direct email to gb-admin@ncbi.nlm.nih.gov | Phylogenetic tree (published/aligned data), publication reference (if any), alignment files. | 20-40 days | Preferred, but not always mandatory with strong evidence. |

| ENA (EMBL-EBI) | Webin Submission Portal (update existing record) or datasubs@ebi.ac.uk | Detailed justification, alignment supporting new taxonomy, stable study/project ID. | 15-30 days | Yes, for most updates. ENA will contact them directly. |

| DDBJ | SAKURA submission system or ddbj@ddbj.nig.ac.jp | Similar to NCBI: phylogenetic evidence, proposed correct taxonomic identifier (TaxID). | 20-40 days | Recommended. |

| Virus Pathogen Resource (ViPR) | https://www.viprbrc.org/ -> Contact Us form | Curation request linked to specific accession, evidence summary. | 10-20 days | No, handled by internal curation team. |

Experimental Protocols

Protocol: Phylogenetic Verification of Suspected Mislabeling

Objective: To generate robust phylogenetic evidence supporting a taxonomic re-labeling request.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Sequence Retrieval: Download the suspect sequence(s) and a representative set of reference sequences spanning the expected (claimed) taxonomic group and the suspected true taxonomic group from GenBank/ENA. (Minimum references: 10-15 per group).

- Multiple Sequence Alignment (MSA): Use MAFFT or Clustal Omega with default parameters for nucleotides (for proteins, use translated sequences). Manually inspect and trim the alignment to conserved regions.

- Phylogenetic Tree Construction: Perform two independent methods:

  - Maximum Likelihood: Use IQ-TREE with ModelFinder (`-m MFP`) and 1000 ultrafast bootstrap replicates. Command: `iqtree -s alignment.fasta -m MFP -bb 1000 -nt AUTO` (in IQ-TREE 2 the equivalent options are `-B 1000` and `-T AUTO`).

  - Neighbor-Joining: Use MEGA11 with the Tajima-Nei model and 1000 bootstrap replicates.

- Tree Interpretation: The suspect sequence should cluster with the suspected true group with bootstrap support >90% from both methods and show clear separation from its claimed taxonomic group.

- Documentation: Save all tree files (.nwk, .png), the final alignment file, and record all accession numbers used. This package constitutes your primary evidence.
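The tree-interpretation criterion in the protocol above can also be checked programmatically. The following is a minimal sketch, not part of the original protocol: it assumes a single-line Newick file with unquoted leaf names and numeric support values stored as internal node labels, and the function names (`parse_newick`, `clusters_with`) are illustrative. Clade membership depends on how the tree is rooted, so root the tree on an appropriate outgroup first.

```python
import re

def _node():
    return {"name": "", "support": None, "children": []}

def parse_newick(text):
    """Parse a simple Newick string into nested dicts (assumes unquoted labels)."""
    tokens = re.findall(r"[(),;]|[^(),;]+", text.strip())
    root = _node()
    stack, node = [], root
    for tok in tokens:
        if tok == "(":                      # descend into the first child
            child = _node()
            node["children"].append(child)
            stack.append(node)
            node = child
        elif tok == ",":                    # start the next sibling
            node = _node()
            stack[-1]["children"].append(node)
        elif tok == ")":                    # close the clade, return to its parent node
            node = stack.pop()
        elif tok == ";":
            break
        else:
            label = tok.split(":")[0].strip()   # drop the branch length
            if node["children"]:                # label after ')' is a support value
                try:
                    node["support"] = float(label)
                except ValueError:
                    node["support"] = None
            else:
                node["name"] = label
    return root

def leaves(node):
    if not node["children"]:
        return [node["name"]]
    return [name for child in node["children"] for name in leaves(child)]

def supported_clade(root, suspect, min_support=90.0):
    """Smallest clade containing `suspect` whose support exceeds `min_support`."""
    best = None
    def walk(node):
        nonlocal best
        names = leaves(node)
        if suspect in names and node["support"] is not None and node["support"] > min_support:
            if best is None or len(names) < len(best):
                best = names
        for child in node["children"]:
            walk(child)
    walk(root)
    return best

def clusters_with(root, suspect, group, min_support=90.0):
    """True if every other leaf in the supported clade belongs to `group`."""
    clade = supported_clade(root, suspect, min_support)
    if clade is None:
        return False
    return all(name in group for name in clade if name != suspect)
```

Running the same check on both the maximum-likelihood and the neighbor-joining tree, and requiring both to pass, mirrors the "both methods" requirement of the protocol.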

Figure: Database Correction Submission Workflow

Figure: Essential Components of a Correction Evidence Package

The Scientist's Toolkit

Table 2: Research Reagent Solutions for Phylogenetic Verification

| Item | Function in Re-labeling Process |
|---|---|
| NCBI Taxonomy Database | Provides the authoritative taxonomic identifier (TaxID) for the proposed correct organism name. |
| MAFFT / Clustal Omega | Software for performing multiple sequence alignment, the foundation for phylogenetic analysis. |
| IQ-TREE / MEGA11 | Software for constructing statistically robust phylogenetic trees with bootstrap support values. |
| BLAST Suite (nt/nr) | Used for initial exploratory analysis to identify the closest matching sequences to the suspect isolate. |
| Reference Sequence Set | A carefully selected collection of verified sequences representing relevant viral taxa for comparison. |
| Sequence Data Archive | Local database of downloaded sequences (e.g., built with makeblastdb and queried with blastdbcmd) to ensure reproducibility of the analysis. |

Solving Common Pitfalls: Strategies for Ambiguous Cases, Mixed Infections, and Novel Viruses

FAQs & Troubleshooting Guides

Q1: My BLASTn search for a viral contig returned multiple top hits with similarly low E-values and identities (~70-85%). Which one is the correct taxonomic label? A: In viral genomics, especially with novel or recombinant viruses, this is common. A single BLAST search is insufficient. Do not automatically assign the top hit. You must implement a secondary, curated database search and phylogenetic analysis.

- Action: Use the NCBI Viral RefSeq database or the ICTV's curated virus database for a secondary BLAST. Then, extract the conserved core genes (e.g., RNA-dependent RNA polymerase for RNA viruses) from your sequence and the hits for phylogenetic tree construction.

Q2: How low of a percentage identity is too low for reliable viral taxonomic assignment via BLAST? A: Thresholds vary by virus group due to differing evolutionary rates. The table below summarizes general guidelines derived from current literature.

Table 1: BLAST Identity Thresholds for Preliminary Viral Taxonomic Assignment

| Viral Group | Genus-Level Guideline | Family-Level Guideline | Notes |
|---|---|---|---|
| DNA Viruses (e.g., Herpesviridae) | >70% aa identity (core genes) | >50% aa identity (core genes) | More conserved; use protein BLAST (BLASTp). |
| RNA Viruses (e.g., Picornaviridae) | >60% aa identity (Polyprotein/RdRp) | >40% aa identity (Polyprotein/RdRp) | High mutation rate; aa alignment is essential. |
| Retroviruses | >70% nt identity (pol gene) | >50% nt identity (gag/pol) | Consider endogenous elements. |
| Novel/Divergent Viruses | Often <60% aa identity | Requires phylogenetic analysis | BLAST alone fails; indicates potential new taxa. |
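As an illustration of how the guideline thresholds in this table might be applied during triage, here is a small sketch. The dictionary keys (`dna`, `rna`, `retro`) and the function name are hypothetical. The numbers are copied from the table and remain preliminary guidelines, so anything returned here still needs phylogenetic confirmation.

```python
# Guideline thresholds from the table (percent identity); keys are illustrative.
THRESHOLDS = {
    "dna":   {"metric": "aa", "genus": 70.0, "family": 50.0},
    "rna":   {"metric": "aa", "genus": 60.0, "family": 40.0},
    "retro": {"metric": "nt", "genus": 70.0, "family": 50.0},
}

def preliminary_rank(group, identity):
    """Return the deepest rank the identity value tentatively supports."""
    limits = THRESHOLDS[group]
    if identity > limits["genus"]:
        return "genus"
    if identity > limits["family"]:
        return "family"
    return "unresolved"   # treat as potentially novel; go to phylogenetics
```

For example, `preliminary_rank("rna", 65.0)` yields a tentative genus-level call, while the same identity for a DNA virus only supports a family-level call.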

Q3: What is the step-by-step protocol to resolve ambiguous hits and assign a correct label? A: Experimental Protocol: Multi-Step Verification for Viral Taxonomy

Objective: To conclusively determine the taxonomic placement of a viral sequence with ambiguous BLAST results.

Materials: See "Research Reagent Solutions" below.

Method:

- Primary BLAST (nt & aa): Run BLASTn against the nucleotide (nt) database and BLASTp (or BLASTx for untranslated contigs) against the non-redundant protein (nr) database. Record the top 20 hits with their E-values, query coverage, and percent identity.

- Curated Database Filtering: Run BLASTp of your translated sequence against the NCBI Viral RefSeq Protein Database. This removes non-viral and low-quality entries.

- Conserved Gene Extraction: Identify and extract the sequence for a conserved replicative gene (e.g., RdRp, Capsid protein) from your contig using HMMER or domain analysis (Pfam, CDD).

- Reference Sequence Curation: Download the matching conserved gene sequences from the top RefSeq hits and from known type species in the suspected family/order.

- Multiple Sequence Alignment (MSA): Align your gene sequence with the reference set using MAFFT or MUSCLE, choosing an accuracy-oriented mode suited to divergent viral sequences (e.g., MAFFT L-INS-i).

- Phylogenetic Reconstruction: Construct a maximum-likelihood tree (IQ-TREE with ModelFinder) or a Bayesian tree from the MSA. For maximum likelihood, use a minimum of 1000 bootstrap replicates; for Bayesian trees, report posterior probabilities instead.

- Taxonomic Assignment: If your sequence falls within a monophyletic clade with strong bootstrap support (>70%), assign it that clade's label. Sequences falling outside known clades may be novel.
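The first step of this protocol (recording top hits and deciding whether they are ambiguous) can be sketched as follows. This assumes BLAST was run with the default tabular output (`-outfmt 6`), whose twelve columns are listed in `COLS`. The `label_of` mapping from subject accession to taxon label is a hypothetical input you would build yourself, and the 5% bit-score margin is an arbitrary illustrative cutoff.

```python
import csv
import io

# Default column order of BLAST tabular output (-outfmt 6).
COLS = ["qseqid", "sseqid", "pident", "length", "mismatch", "gapopen",
        "qstart", "qend", "sstart", "send", "evalue", "bitscore"]

def top_hits(tabular_text, n=20):
    """Parse tabular BLAST output and return the n best hits by bit score."""
    reader = csv.DictReader(io.StringIO(tabular_text.strip()),
                            fieldnames=COLS, delimiter="\t")
    hits = []
    for row in reader:
        row["pident"] = float(row["pident"])
        row["evalue"] = float(row["evalue"])
        row["bitscore"] = float(row["bitscore"])
        hits.append(row)
    hits.sort(key=lambda h: h["bitscore"], reverse=True)
    return hits[:n]

def is_ambiguous(hits, label_of, bitscore_margin=0.05):
    """True if hits scoring within the margin of the best hit carry different labels."""
    if not hits:
        return True
    best = hits[0]["bitscore"]
    near_best = [h for h in hits if h["bitscore"] >= best * (1 - bitscore_margin)]
    return len({label_of[h["sseqid"]] for h in near_best}) > 1
```

A contig flagged as ambiguous here is a candidate for the conserved-gene phylogeny in the later steps rather than a direct top-hit assignment.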

Figure: Workflow for Resolving Ambiguous Viral BLAST Hits

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Correcting Viral Taxonomic Labels

| Item / Resource | Category | Function in Protocol |
|---|---|---|
| NCBI BLAST Suite | Bioinformatics Tool | Primary sequence similarity search. |
| NCBI Viral RefSeq DB | Curated Database | Filtered, non-redundant viral sequences for reliable secondary search. |
| HMMER / Pfam | Domain Analysis | Identify conserved protein domains (e.g., RdRp1, ViralCapsid) within contigs. |
| MAFFT | Alignment Software | Accurate multiple sequence alignment of divergent viral sequences. |
| IQ-TREE (ModelFinder) | Phylogenetic Software | Model-based tree inference with branch support evaluation (bootstrap). |
| ICTV Virus Metadata | Taxonomic Authority | Final arbiter for taxonomic nomenclature and classification. |
| Geneious / CLC Bio | Workbench Platform | Integrates many steps into a single graphical workflow. |

Handling Recombinant Viruses and Sequences with Chimeric Origins

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My NGS data shows high read-depth regions mapping to divergent viral references. Is this evidence of recombination or contamination? A: It could be either, and the two are not mutually exclusive. First, perform a de novo assembly of the reads. Map the resulting contigs against a comprehensive viral database (e.g., NCBI Virus, ViPR) using BLASTn or tBLASTx, then run recombination detection software (see the table under Q4) on the aligned contigs. To rule out contamination, check for residual adapter sequences, inspect per-base quality scores (flagging bases below Q30), and re-run the library prep controls.

Q2: After identifying a potential recombinant, how do I definitively confirm its chimeric structure and determine breakpoints? A: Confirmation requires a multi-tool approach. Generate a multiple sequence alignment of the query and putative parental sequences. Run at least three different recombination detection algorithms (e.g., RDP5, SimPlot, BootScan) and only trust breakpoints supported by multiple methods with high statistical confidence (p-value < 0.05, bootstrap > 70%). Sanger sequencing of PCR products spanning the suspected breakpoints provides wet-lab validation.
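The rule of trusting only breakpoints supported by multiple methods can be expressed as a simple consensus step. The sketch below assumes you have already exported breakpoint positions (alignment coordinates) from each tool into a dictionary. The 50-position tolerance and the function name are illustrative choices, not values prescribed by any of the tools.

```python
def consensus_breakpoints(calls, tolerance=50, min_methods=2):
    """Group nearby breakpoint calls and keep groups reported by >= min_methods methods.

    calls: dict mapping method name -> list of breakpoint positions.
    Returns a list of (mean_position, sorted_method_names).
    """
    points = sorted((pos, method) for method, positions in calls.items()
                    for pos in positions)
    clusters, current = [], []
    for pos, method in points:
        # Start a new cluster when the gap to the previous call exceeds the tolerance.
        if current and pos - current[-1][0] > tolerance:
            clusters.append(current)
            current = []
        current.append((pos, method))
    if current:
        clusters.append(current)

    supported = []
    for cluster in clusters:
        methods = sorted({method for _, method in cluster})
        if len(methods) >= min_methods:
            mean_pos = round(sum(pos for pos, _ in cluster) / len(cluster))
            supported.append((mean_pos, methods))
    return supported
```

Because calls are chained by their gap to the previous call, a long run of closely spaced calls can merge into one cluster; with real data it is worth inspecting each cluster's span before accepting it.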

Q3: How should I correctly label the taxonomy of a confirmed recombinant virus in my publication and database submission? A: This is a critical step for fixing incorrect taxonomic labelling. Do not assign it to a single parent's taxonomy. The recommendation is to:

- Annotate it as a "recombinant" derived from GenusX/speciesA and GenusY/speciesB.

- Submit to GenBank/ENA/DDBJ with the /recombination qualifier in the source feature.

- In the publication, use the format: Recombinant [Virus Name] (Parental Strain1 × Parental Strain2).

Q4: What are the primary bioinformatics tools for recombination analysis, and what are their key metrics? A: The following table summarizes core tools and their outputs:

| Tool Name | Algorithm Type | Key Output Metric | Optimal Use Case |
|---|---|---|---|
| RDP5 | Multiple (GENECONV, MaxChi, etc.) | P-value, Breakpoint Positions | Initial broad detection & multi-parent analysis. |
| SimPlot | Similarity Plot / BootScan | Similarity Percentage, Bootstrap Support | Visualizing recombination and estimating breakpoints. |
| BootScan (within RDP5) | Phylogenetic Bootscan | Bootstrap Support (%) | Confirming recombination with phylogenetic methods. |
| jpHMM | Hidden Markov Model | Probability of Origin per Position | Fine-scale mapping in HIV, HBV, and other highly recombinant viruses. |
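To make the "similarity percentage" metric concrete, here is a minimal sliding-window identity scan in the spirit of a similarity plot. It is an illustrative sketch and not SimPlot's implementation: it assumes the query and parental sequences come from the same multiple sequence alignment (equal length, `-` for gaps), and the window and step sizes are arbitrary defaults.

```python
def window_identity(query, ref, start, size):
    """Percent identity in one window, ignoring columns with a gap in either sequence."""
    pairs = [(q, r) for q, r in zip(query[start:start + size], ref[start:start + size])
             if q != "-" and r != "-"]
    if not pairs:
        return None
    return 100.0 * sum(q == r for q, r in pairs) / len(pairs)

def similarity_scan(query, parents, window=200, step=20):
    """Return [(window_midpoint, {parent_name: identity})] along the alignment."""
    scan = []
    for start in range(0, len(query) - window + 1, step):
        scores = {name: window_identity(query, seq, start, window)
                  for name, seq in parents.items()}
        scan.append((start + window // 2, scores))
    return scan
```

A crossover in which parent has the higher identity, sustained over several consecutive windows, is the pattern that suggests a breakpoint near the crossover position.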

Experimental Protocol: Validating Recombinant Breakpoints via PCR and Sanger Sequencing

Objective: To experimentally confirm in silico-predicted recombination breakpoints in a viral genome.

Materials:

- Template: Purified viral DNA/RNA or cDNA.

- Primers: Design a convergent (inward-facing) primer pair that flanks the in silico predicted breakpoint, with one primer ~150-200 bp upstream and the other ~150-200 bp downstream.

- PCR Reagents: High-fidelity DNA polymerase, dNTPs, appropriate buffer.

Protocol:

- Perform PCR amplification using the flanking primer pair.

- Gel-purify the amplified product.

- Clone the purified product into a suitable sequencing vector (e.g., using TA/Blunt-end cloning).

- Sequence multiple clones (minimum of 5) using vector-specific primers.

- Align the sequenced fragments against the putative parental sequences. The breakpoint is confirmed when the switch from one parental lineage to the other occurs at the same position across multiple clones.
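The final alignment step can be summarized computationally by looking only at informative sites, that is, alignment columns where the two parents differ. The sketch below assumes each clone and both parental sequences have been aligned to equal length. Positions are 0-based alignment columns, and the function names are illustrative.

```python
def informative_sites(clone, parent_a, parent_b):
    """List (position, 'A' or 'B') for columns where the parents differ and the clone matches one."""
    sites = []
    for i, (c, a, b) in enumerate(zip(clone, parent_a, parent_b)):
        if a == b or "-" in (c, a, b):
            continue                      # uninformative or gapped column
        if c == a:
            sites.append((i, "A"))
        elif c == b:
            sites.append((i, "B"))
    return sites

def breakpoint_interval(clone, parent_a, parent_b):
    """Return (last_site_before_switch, first_site_after_switch) if exactly one switch exists."""
    sites = informative_sites(clone, parent_a, parent_b)
    switches = [(sites[k][0], sites[k + 1][0]) for k in range(len(sites) - 1)
                if sites[k][1] != sites[k + 1][1]]
    return switches[0] if len(switches) == 1 else None

def consistent_breakpoint(clones, parent_a, parent_b):
    """Return the shared interval if every clone shows the same single switch, else None."""
    intervals = {breakpoint_interval(c, parent_a, parent_b) for c in clones}
    if len(intervals) == 1:
        return intervals.pop()
    return None
```

The breakpoint can only be localized to the interval between two informative sites, so the width of the returned interval reflects how divergent the parents are around the crossover.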

Figure: Recombinant Virus Detection & Validation Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Recombinant Virus Research |
|---|---|
| High-Fidelity DNA Polymerase (e.g., Q5, Phusion) | Critical for error-free amplification of viral sequences prior to cloning and sequencing, avoiding artificial recombination. |
| Viral Nucleic Acid Isolation Kit | Provides pure template DNA/RNA, free of host cell contaminants that can confound sequence analysis. |
| cDNA Synthesis (Reverse Transcription) Kit | For RNA viruses, generates stable cDNA for subsequent PCR analysis of recombinant genomes. |
| TA/Blunt-End Cloning Kit | Allows for the ligation of PCR products into plasmids for Sanger sequencing of individual recombinant molecules. |
| Sanger Sequencing Primers (M13/pUC) | Standard primers for sequencing cloned fragments to confirm breakpoints at single-nucleotide resolution. |
| Positive Control Plasmids | Plasmids containing known recombinant sequences are essential for validating bioinformatics pipelines and wet-lab protocols. |

Strategies for Classifying Metagenomic-Assembled Genomes (MAGs) and Incomplete Genomes

Technical Support Center

FAQs & Troubleshooting Guides

Q1: My viral MAG is being labelled as "unclassified" by standard tools. What are the primary reasons for this? A: This is common and stems from:

- Low Completeness/High Contamination: Most classifiers have strict completeness thresholds (>50% is common). Viral genomes are often fragmented.

- Database Bias: Reference databases (RefSeq, GenBank) are skewed toward cultured prokaryotes and well-studied eukaryotic viruses, missing vast viral diversity.

- Sequence Divergence: Novel viruses lack significant homology to known sequences in marker gene or whole-genome databases.

- Misassembly: Chimeric contigs from co-assembled similar strains can produce conflicting signals.

Q2: I suspect my bacterial MAG has been mislabelled at the species level due to horizontal gene transfer (HGT). How can I troubleshoot this? A: Follow this diagnostic protocol:

- Run Multi-Tool Classification: Use at least two tools with different algorithms (e.g., GTDB-Tk [phylogenetic placement], CheckM [marker-gene lineage and contamination estimates], Kaiju [protein-level translated search]).

- Check for Consistency: Inconsistent labels across tools signal potential HGT or contamination.

- Perform Single-Copy Core Gene (SCG) Phylogeny: Extract and align SCGs from the MAG. Build a maximum-likelihood tree with close references. Visualize discordance.

- Analyze GC Content & Tetranucleotide Frequency: Plot these across the contig. Abrupt shifts in a contig assigned to one species may indicate misassembled regions from a different organism.
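The GC-content part of the last diagnostic step can be sketched with a simple windowed scan. This is a minimal illustration covering GC content only (tetranucleotide frequencies would need a separate k-mer count). The 1000 bp window and the 10-percentage-point shift threshold are arbitrary assumptions to be tuned per dataset.

```python
def gc_windows(seq, window=1000, step=1000):
    """Return [(window_start, gc_percent)] over full-length windows of the contig."""
    seq = seq.upper()
    result = []
    for start in range(0, max(len(seq) - window, 0) + 1, step):
        chunk = seq[start:start + window]
        acgt = sum(chunk.count(base) for base in "ACGT")   # ignore N and other symbols
        if acgt:
            gc = 100.0 * (chunk.count("G") + chunk.count("C")) / acgt
            result.append((start, gc))
    return result

def gc_shifts(seq, window=1000, min_delta=10.0):
    """Flag boundaries where GC content changes by >= min_delta between adjacent windows."""
    wins = gc_windows(seq, window, step=window)   # non-overlapping windows
    return [(b_start, round(b_gc - a_gc, 1))
            for (a_start, a_gc), (b_start, b_gc) in zip(wins, wins[1:])
            if abs(b_gc - a_gc) >= min_delta]
```

A flagged boundary is only a prompt for closer inspection, for example by checking read coverage and paired-end support across that position.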

Q3: What are the best strategies for classifying highly novel or incomplete viral sequences (<50% complete)? A: Standard binning often fails. Employ a cascade approach:

- Large-Scale Homology Search: Use DIAMOND or MMseqs2 against viral protein databases (ViPTree, pVOGs, EBI viral).

- Viral Feature Detection: Use tools like VirSorter2, DeepVirFinder, or VIBRANT to detect viral signal. VirSorter2 and VIBRANT rely on hallmark viral genes (capsid, terminase, integrase), while DeepVirFinder scores sequence k-mer patterns.

- Cluster Analysis: Use vConTACT2 for network-based classification, which groups genomes based on gene-sharing patterns, not just alignment. PPR-Meta, a deep-learning classifier, can complement it by separating phage from plasmid fragments.
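The gene-sharing idea behind the cluster-analysis step can be illustrated with a toy similarity score. The sketch below is a deliberately simplified stand-in, not vConTACT2's actual scoring or clustering: it assumes each genome has already been reduced to a set of protein-cluster identifiers (the `PC…` names are hypothetical) and ranks references by Jaccard similarity.

```python
def jaccard(a, b):
    """Jaccard similarity between two collections of protein-cluster IDs."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def nearest_by_shared_genes(query_clusters, references, min_score=0.2):
    """Rank reference genomes by the fraction of protein clusters shared with the query.

    references: dict mapping genome name -> set of protein-cluster IDs.
    Returns [(genome_name, score)] sorted from most to least similar.
    """
    scored = sorted(((jaccard(query_clusters, clusters), name)
                     for name, clusters in references.items()), reverse=True)
    return [(name, round(score, 3)) for score, name in scored if score >= min_score]
```

Because the score depends on shared gene content rather than nucleotide alignment, it can still link a fragment to known viral groups when whole-genome identity is too low for BLAST-based assignment.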